Streamlining Biomass Supply Chains: The Critical Path to Economical Decentralized Sustainable Aviation Fuel (SAF) Production

Abstract

This article provides a comprehensive analysis of biomass logistics optimization strategies tailored for decentralized Sustainable Aviation Fuel (SAF) production. Targeting researchers and bioenergy professionals, we explore the foundational challenges of feedstock variability and supply chain geometry, detail methodological advances in preprocessing, transport, and digital integration, address critical troubleshooting in storage, contamination, and cost volatility, and validate approaches through techno-economic analysis and lifecycle assessment. The synthesis offers a roadmap for overcoming key logistical bottlenecks to enable scalable, cost-effective decentralized bio-refineries.

The Decentralized SAF Challenge: Understanding Biomass Logistics Bottlenecks and Feedstock Fundamentals

Technical Support Center: Troubleshooting Guides & FAQs

FAQs on Experimental Setup & Biomass Logistics

Q1: What are the critical thresholds for defining "decentralized" scale in SAF production from biomass, and how do they impact reactor design? A: Current research indicates decentralized SAF production typically ranges from 1,000 to 50,000 tons of SAF per year. This scale minimizes biomass transport radius while achieving economic viability. Key thresholds are:

- < 1,000 tons/year: Often not economically viable due to high fixed costs per unit output.

- 1,000 - 10,000 tons/year: Optimal for local agricultural or forestry waste, using modular thermochemical (e.g., pyrolysis, gasification-FT) units.

- 10,000 - 50,000 tons/year: Suitable for regional waste streams (e.g., municipal solid waste, larger agricultural residues), potentially integrating hydroprocessing pathways (e.g., hydroprocessed esters and fatty acids, HEFA).

Table 1: Scale Definitions and Logistics Impact

| Production Scale (tSAF/yr) | Biomass Feedstock Radius | Recommended Conversion Pathway | Primary Economic Driver |

|---|---|---|---|

| 1,000 - 5,000 | < 50 km | Fast Pyrolysis with Upgrading | Low-CAPEX modular systems, local policy incentives |

| 5,000 - 20,000 | 50 - 100 km | Gasification-Fischer-Tropsch | Feedstock consistency, cost of hydrogen supply |

| 20,000 - 50,000 | 100 - 150 km | Hydroprocessing of Bio-Oils | Economy of scale on upgrading, offtake agreements |

Experimental Protocol: Determining Optimal Decentralized Scale via Techno-Economic Analysis (TEA)

- Define Feedstock: Characterize local biomass (e.g., moisture, ash, lipid/carbohydrate content).

- Model Logistics: Use GIS software to map feedstock density and calculate transport costs (€/ton/km) for varying plant sizes.

- Process Simulation: Use Aspen Plus or similar to model mass/energy balances for candidate pathways at multiple scales.

- Costing: Apply CAPEX scaling exponents (typically 0.6-0.7) and estimate OPEX.

- Calculate MSP: Determine the Minimum Selling Price (MSP) of SAF for each scale-scenario.

- Sensitivity Analysis: Identify key cost drivers (e.g., feedstock cost, hydrogen price, catalyst lifetime).
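
The costing and MSP steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a full TEA: only the 0.6-0.7 scaling exponent comes from the protocol, while the reference plant cost, discount rate, plant life, and per-tonne OPEX are placeholder assumptions.

```python
def scaled_capex(base_capex, base_capacity, capacity, exponent=0.65):
    """Scale CAPEX with capacity using a power law (exponent 0.6-0.7 per the protocol)."""
    return base_capex * (capacity / base_capacity) ** exponent


def capital_recovery_factor(rate, years):
    """Annualize an upfront investment over `years` at discount rate `rate`."""
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)


def minimum_selling_price(capex, annual_opex, annual_output_t, rate=0.10, years=20):
    """Simplified MSP: annualized CAPEX plus OPEX, divided by annual SAF output."""
    annualized = capex * capital_recovery_factor(rate, years)
    return (annualized + annual_opex) / annual_output_t


# Hypothetical reference plant: 10,000 t SAF/yr costing 60 M EUR (assumed values).
BASE_CAPEX, BASE_CAPACITY = 60e6, 10_000
for capacity in (1_000, 5_000, 20_000, 50_000):
    capex = scaled_capex(BASE_CAPEX, BASE_CAPACITY, capacity)
    opex = 900 * capacity  # assumed 900 EUR of OPEX per tonne of SAF
    msp = minimum_selling_price(capex, opex, capacity)
    print(f"{capacity:>6} t/yr  CAPEX {capex / 1e6:6.1f} M EUR  MSP {msp:7.0f} EUR/t")
```

A constant OPEX per tonne is used here only to isolate the CAPEX scaling effect. In a real study the feedstock share of OPEX would rise with plant size as the collection radius grows, which is what produces an optimum scale.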

Q2: During lab-scale hydroprocessing of bio-oils to SAF, we observe rapid catalyst deactivation. What are the primary troubleshooting steps? A: Catalyst deactivation in hydrodeoxygenation (HDO) is commonly caused by coking, sintering, or poisoning.

- Check Feedstock Pretreatment: Ensure bio-oil is stabilized and filtered to < 0.1 µm to remove particulates. Protocol: Use vacuum filtration followed by a guard bed of silica/alumina before the main catalyst bed.

- Analyze Deactivated Catalyst: Perform Thermogravimetric Analysis (TGA) to quantify coke burn-off, and X-ray Diffraction (XRD) to check for metal sintering or phase changes.

- Adjust Process Conditions:

- Increase H₂ partial pressure (e.g., from 50 to 80 bar) to suppress coke formation.

- Lower reaction temperature (e.g., from 350°C to 320°C) if sintering is suspected.

- Introduce a catalyst regeneration step with controlled air/oxygen burn.

Q3: How do we accurately model the cost-optimal location for a decentralized SAF production facility? A: This requires an integrated logistics and facility siting model. Common errors include omitting spatial feedstock variability or infrastructure access costs. Troubleshooting Guide:

- Error: Model suggests location with highest biomass density, ignoring grid connectivity or hydrogen supply.

- Solution: Incorporate a multi-criteria analysis with weighted factors: feedstock procurement cost (60%), utility access (20%), product distribution (20%).

- Error: Transportation cost model applies a single linear rate per kilometer, underestimating rural road premiums.

- Solution: Use region-specific transport tariffs by road class (e.g., €0.25/ton/km for paved roads, €0.35/ton/km for unpaved roads).
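
Both fixes can be illustrated with a short sketch. The weights and tariffs are the example values from this guide. The candidate sites and their normalized scores are hypothetical, and a real model would derive them from spatial data.

```python
# Illustrative tariffs from the guide (EUR per ton per km); replace with regional data.
TARIFF = {"paved": 0.25, "unpaved": 0.35}

# Weights from the guide: feedstock procurement, utility access, product distribution.
WEIGHTS = {"feedstock": 0.60, "utility": 0.20, "distribution": 0.20}


def transport_cost(tons, km_by_road_class):
    """Transport cost for a route split by road class, e.g. {'paved': 30, 'unpaved': 8}."""
    return tons * sum(TARIFF[road] * km for road, km in km_by_road_class.items())


def site_score(normalized_costs):
    """Weighted sum of normalized (0-1) cost criteria; lower is better."""
    return sum(WEIGHTS[name] * normalized_costs[name] for name in WEIGHTS)


# Hypothetical candidate sites with criteria already normalized to the 0-1 range.
sites = {
    "A (densest biomass, weak grid)": {"feedstock": 0.20, "utility": 0.90, "distribution": 0.60},
    "B (moderate biomass, good grid)": {"feedstock": 0.35, "utility": 0.20, "distribution": 0.30},
}
best = min(sites, key=lambda name: site_score(sites[name]))
print(best, round(site_score(sites[best]), 3))
print(transport_cost(25, {"paved": 30, "unpaved": 8}))
```

In this made-up case the site with the densest biomass loses to the better-connected one, which is the failure mode the first error describes.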

Experimental Protocol: GIS-Based Facility Siting Optimization

- Data Layer Collection: Gather spatial data for biomass yield, road networks, electrical grid lines, natural gas pipelines (for H₂), and protected areas.

- Cost Raster Creation: Convert each layer to a cost raster (€/ton or €/m²) reflecting procurement or development cost.

- Weighted Overlay: Apply weights from expert judgment or the Analytic Hierarchy Process (AHP) to combine rasters.

- Site Selection: Identify pixels with the lowest total cost score meeting minimum area (e.g., 5 hectares) and slope (<5%) constraints.

- Validation: Perform a location-allocation analysis to ensure the selected site can minimize total transport cost for the aggregated biomass.
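
The weighted overlay and site selection steps can be sketched without GIS software on small grids. The example below uses plain nested lists as stand-ins for rasters; the layer names, weights, and values are hypothetical, and the minimum-area constraint is not modeled.

```python
def weighted_overlay(rasters, weights):
    """Combine equally sized cost rasters (lists of rows) into one weighted cost raster."""
    names = list(weights)
    rows, cols = len(rasters[names[0]]), len(rasters[names[0]][0])
    return [
        [sum(weights[n] * rasters[n][r][c] for n in names) for c in range(cols)]
        for r in range(rows)
    ]


def best_pixel(cost, slope_pct, protected, max_slope=5.0):
    """Return (row, col) of the cheapest pixel with slope < max_slope outside protected areas."""
    candidates = [
        (cost[r][c], (r, c))
        for r in range(len(cost))
        for c in range(len(cost[0]))
        if slope_pct[r][c] < max_slope and not protected[r][c]
    ]
    return min(candidates)[1] if candidates else None


# Hypothetical 2 x 3 normalized cost layers and constraint layers.
rasters = {
    "feedstock": [[0.2, 0.5, 0.9], [0.4, 0.3, 0.8]],
    "grid": [[0.9, 0.2, 0.1], [0.3, 0.4, 0.2]],
    "roads": [[0.5, 0.3, 0.2], [0.6, 0.1, 0.4]],
}
weights = {"feedstock": 0.6, "grid": 0.2, "roads": 0.2}  # assumed, e.g. from AHP
slope_pct = [[2, 3, 1], [4, 7, 2]]
protected = [[False, True, False], [False, False, False]]

cost = weighted_overlay(rasters, weights)
print(best_pixel(cost, slope_pct, protected))
```

The pixel with the lowest raw cost is rejected here because it fails the slope constraint, so constraints should be applied before ranking rather than after.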

The Scientist's Toolkit: Research Reagent Solutions for SAF Catalysis Testing

Table 2: Essential Materials for Catalytic Upgrading Experiments

| Reagent/Material | Function | Example Vendor/Product Code |

|---|---|---|

| NiMo/Al₂O₃ Catalyst | Standard hydrotreating catalyst for deoxygenation and hydrodenitrogenation. | Sigma-Aldrich, 457849 |

| Pt/SAPO-11 Catalyst | Bifunctional catalyst for hydroisomerization to improve SAF cold-flow properties. | Alfa Aesar, 45742 |

| Simulated Bio-Oil Blend | Standardized feed for catalyst benchmarking (e.g., guaiacol, acetic acid, sorbitol in water). | Prepared in-lab per NREL protocol |

| n-Dodecane | Model hydrocarbon compound for reactor calibration and as a solvent. | Fisher Chemical, D/4120/PB17 |

| High-Purity H₂ Gas (≥99.999%) | Reactant for hydroprocessing reactions; purity critical to prevent catalyst poisoning. | Linde, H₂ GRADE 6.0 |

| SiO₂ Guard Bed Material | Protects main catalyst bed from particulates and alkali metals. | Fuji Silysia, CHROMATOSORB 60A |

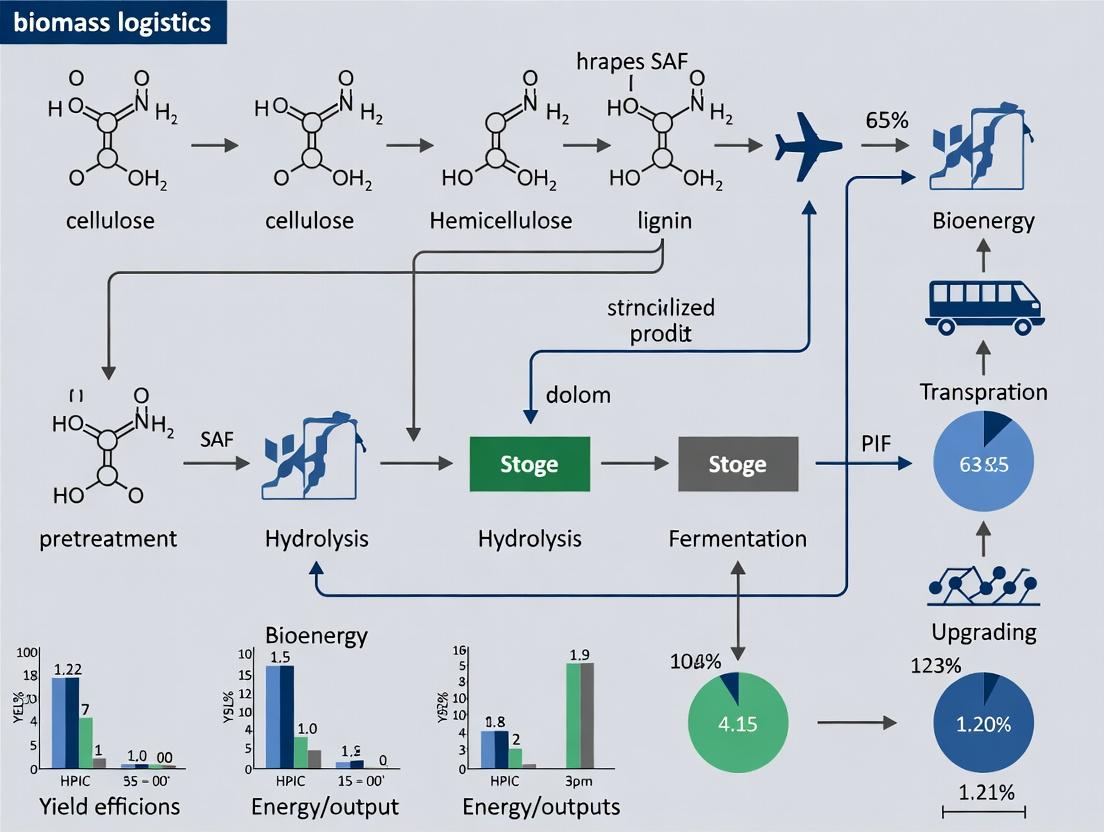

Visualization: Experimental Workflow for Decentralized SAF Pathway Evaluation

Title: Workflow for Evaluating Decentralized SAF Pathways

Visualization: Common Catalyst Deactivation Pathways in HDO

Title: Hydroprocessing Catalyst Deactivation Mechanisms

Technical Support Center: Feedstock Preprocessing & Analysis

Troubleshooting Guides & FAQs

Q1: During lignocellulosic analysis of corn stover, my results for acid-insoluble lignin (AIL) are inconsistent and often exceed typical reported ranges. What could be causing this?

A: High AIL values often indicate incomplete hydrolysis of structural carbohydrates or contamination. Follow this protocol:

- Ensure Proper Particle Size: Mill feedstock to pass a 20-mesh screen (<0.85 mm). Larger particles resist hydrolysis.

- Validate Acid Concentration: Precisely prepare 72% w/w sulfuric acid. Use a calibrated densitometer. Deviation of ±0.5% can alter results.

- Control Hydrolysis Temperature: Perform primary hydrolysis in a water bath at 30°C ± 0.5°C for 60 minutes with frequent stirring.

- Secondary Hydrolysis Dilution: Dilute to 4% acid concentration with deionized water. Autoclave at 121°C for 60 minutes. Ensure consistent autoclave ramp and vent cycles.

- Filtering Protocol: Use pre-weighed, grade 4 porosity ceramic filter crucibles. Wash residue with hot DI water until pH neutral. Dry at 105°C overnight before weighing.

Reagent Solution: Use NIST standard reference material 8492 (poplar biomass) to calibrate your method.

Q2: My hydrothermal liquefaction (HTL) of sewage sludge yields excessive solid bio-char (>25 wt%) and low biocrude. How can I optimize this?

A: High char formation suggests excessive repolymerization reactions. Modify your HTL protocol:

- Adjust Slurry Properties: Ensure homogeneous slurry with ≤15% solid loading. Use a high-shear mixer for 30 minutes prior to reaction.

- Incorporate a Catalyst: Add 5 wt% sodium carbonate (Na₂CO₃) as a homogeneous catalyst to suppress char pathways and promote deoxygenation.

- Optimize Temperature Profile: Rapid heating is critical. Use a preheated fluidized sand bath or direct electrical heating to reach 350°C in <15 minutes. Hold for 20 minutes.

- Employ an Inert Atmosphere: Purge reactor headspace with nitrogen (20 bar cold fill pressure) to maintain anoxic conditions.

Essential Material: Parr Series 4570 High-Pressure/High-Temperature reactor with rapid heating jacket.

Q3: When cultivating Miscanthus x giganteus as an energy crop for field trials, germination rates are poor. What is the established protocol for propagation?

A: Miscanthus × giganteus is a sterile triploid hybrid that produces essentially no viable seed, so use rhizome propagation.

- Rhizome Selection & Preparation: Harvest dormant rhizomes from 2-3 year old plants. Cut into sections ensuring each has ≥2 healthy buds (nodes).

- Pre-Planting Treatment: Dip rhizome sections in a fungicide solution (e.g., Captan at 2 g/L) for 15 minutes to prevent rot.

- Soil Preparation: Plant in well-drained soil with pH 5.5-7.5. Apply a pre-emergent herbicide (e.g., pendimethalin) following agricultural extension guidelines.

- Planting Protocol: Plant rhizomes 10 cm deep, with 1-meter spacing between plants and rows. Initial irrigation of 25 mm is critical.

Research Reagent: Use rhizome propagation kits from specialized nurseries (e.g., New Energy Farms) for trial consistency.

Q4: My FT-IR spectra for characterizing SAF intermediates from waste oils show unresolved peaks in the 1600-1800 cm⁻¹ region. How can I improve resolution?

A: This region (C=O stretch) is critical for identifying carboxylic acids, ketones, and esters. Improve sample preparation:

- Sample Purity: Pre-purify the bio-intermediate via silica gel column chromatography using hexane/ethyl acetate gradient elution to isolate fractions.

- Thin Film Method: For liquid samples, apply a thin, uniform film between NaCl plates. Do not overtighten.

- Instrument Calibration: Perform daily background and wavelength calibration using a polystyrene standard. Ensure relative humidity in the instrument is below 40%.

- Spectral Parameters: Set resolution to 2 cm⁻¹ and accumulate 64 scans to improve signal-to-noise ratio.

Reagent Solution: Use certified FT-IR calibration standards from Sigma-Aldrich (Product # IRMS01).

Table 1: Typical Biochemical Composition of Primary SAF Feedstocks (Dry Basis)

| Feedstock Type | Example | Glucan (wt%) | Xylan (wt%) | Lignin (wt%) | Ash (wt%) | Reference |

|---|---|---|---|---|---|---|

| Agricultural Residue | Corn Stover | 35-40 | 18-22 | 15-19 | 4-7 | NREL 2023 |

| Agricultural Residue | Wheat Straw | 33-38 | 20-24 | 16-20 | 6-9 | BioRes. 2024 |

| Energy Crop | Miscanthus | 42-48 | 22-25 | 20-24 | 1.5-3 | GCB Bioenergy 2023 |

| Energy Crop | Switchgrass | 32-37 | 20-23 | 17-20 | 3-5.5 | Frontiers 2024 |

| Waste Stream | Municipal Sewage Sludge | 5-10* | 2-5* | 15-25 | 30-50 | WER 2024 |

| Waste Stream | Waste Forestry Wood | 40-45 | 15-20 | 25-30 | <1 | Fuel 2023 |

*Primarily cellulosic, not differentiated. The sewage sludge lignin value includes non-lignin aromatic polymers.

Table 2: Decentralized Preprocessing Unit Performance Metrics

| Process Unit | Input Feedstock | Key Operational Parameter | Target Output Specification | Energy Demand (MJ/ton dry) |

|---|---|---|---|---|

| Size Reduction | Baled Residues | Hammer Mill Screen Size (mm) | 90% particles <3 mm | 50-80 |

| Torrefaction | Woody Crops | Temp: 275°C, Residence: 30 min | Mass Yield: 70%, Energy Density: >22 GJ/ton | 800-1200 |

| Fast Pyrolysis | Dry Sludge | Temp: 500°C, Vapor Residence: 2s | Bio-Oil Yield: >55 wt% | 1500-2000 (Heat Integration) |

| Pelletization | Torrefied Biomass | Die Temp: 95°C, Pressure: 150 MPa | Pellet Durability Index >97.5% | 80-120 |

Experimental Protocol: Standardized Feedstock Suitability Assessment

Title: Protocol for Determining Biomass Feedstock Suitability for Decentralized Hydroprocessing to SAF.

Objective: To provide a standardized methodology for evaluating diverse feedstocks' compatibility with decentralized hydroprocessed esters and fatty acids (HEFA) or Fischer-Tropsch (FT) conversion pathways.

Materials:

- Feedstock: 5 kg (dry weight equivalent) of milled and homogenized sample.

- Reagents: Dichloromethane (HPLC grade), deionized water (18.2 MΩ·cm), sulfuric acid (ACS grade), nitrogen gas (high purity).

- Equipment: Soxhlet extraction apparatus, bomb calorimeter (IKA C2000), TGA/DSC analyzer, CHNS/O elemental analyzer (PerkinElmer 2400), 300 mL Parr batch reactor.

Procedure:

- Preprocessing & Characterization:

- Mill feedstock to ≤2 mm. Determine moisture content (AOAC 934.01).

- Perform proximate (ASTM E870) and ultimate analysis (ASTM D5373).

- Determine higher heating value (HHV) using bomb calorimetry (ASTM D5865).

- Extractives Analysis (Critical for Waste Oils/Fats):

- Weigh 10 g of dry biomass (W₁) into a cellulose thimble.

- Perform Soxhlet extraction with 200 mL DCM for 6 hours (12 cycles/hour).

- Dry the thimble and residue at 105°C for 4 hours, cool in a desiccator, and weigh; subtract the thimble tare weight to obtain the dry residue weight (W₂).

- Calculate extractives: [(W₁ - W₂) / W₁] * 100%.

- Rotovap the extract to recover lipids/oils for FAME analysis.

- Thermochemical Conversion Screening (Micro-Reactor):

- Load 5 g of dry feedstock into the Parr reactor.

- For HEFA pathway screening, add 50 mL of co-solvent (e.g., dodecane) and 0.1 g of NiMo/Al₂O₃ catalyst.

- Purge system 3x with N₂, then pressurize to 30 bar with H₂.

- Heat to 350°C at 10°C/min, hold for 2 hours with 500 RPM stirring.

- Cool, collect gas, and separate liquid product for GC-MS analysis (ASTM D2887 mod.).

- Data Synthesis: Calculate key metrics: carbon efficiency to liquids (%), deoxygenation level (%), and energy yield (MJ output/MJ input).
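
The extractives formula and the three data synthesis metrics can be written as small helper functions. The protocol names the metrics but does not define them, so the definitions below are assumed, commonly used forms, and all numeric inputs are hypothetical. Liquid product masses should exclude any co-solvent.

```python
def extractives_pct(w1_dry_sample_g, w2_dry_residue_g):
    """Extractives (%) = (W1 - W2) / W1 * 100, both weights dry and excluding the thimble."""
    return (w1_dry_sample_g - w2_dry_residue_g) / w1_dry_sample_g * 100


def carbon_efficiency_pct(liquid_mass_g, liquid_c_frac, feed_mass_g, feed_c_frac):
    """Share of feedstock carbon recovered in the liquid product (assumed definition)."""
    return (liquid_mass_g * liquid_c_frac) / (feed_mass_g * feed_c_frac) * 100


def deoxygenation_pct(feed_o_frac, product_o_frac):
    """Relative reduction in oxygen mass fraction from feed to product (assumed definition)."""
    return (1 - product_o_frac / feed_o_frac) * 100


def energy_yield(liquid_mass_g, liquid_hhv_mj_kg, feed_mass_g, feed_hhv_mj_kg):
    """MJ in the liquid product per MJ in the feedstock; process heat and H2 are excluded."""
    return (liquid_mass_g * liquid_hhv_mj_kg) / (feed_mass_g * feed_hhv_mj_kg)


# Hypothetical screening run: 5 g dry feed yields 1.8 g of liquid product.
print(round(extractives_pct(10.0, 9.2), 1))
print(round(carbon_efficiency_pct(1.8, 0.84, 5.0, 0.48), 1))
print(round(deoxygenation_pct(0.42, 0.03), 1))
print(round(energy_yield(1.8, 43.0, 5.0, 18.5), 2))
```

Elemental fractions come from the CHNS/O analysis and heating values from bomb calorimetry, so each function maps onto one of the characterization steps in the procedure.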

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomass-to-SAF Research

| Item | Function/Application | Example Product/Specification |

|---|---|---|

| NREL Standard Biomass | Analytical calibration for compositional analysis. | NIST RM 8492 (Eastern Cottonwood, a poplar) & 8493 (Monterey Pine). |

| Certified SAF Standards | GC-MS/FID calibration for hydrocarbon fuel analysis. | Supelco SAF Paraffin Mix (C8-C20), HEFA SME Mix. |

| Solid Acid Catalyst | For catalytic fast pyrolysis or hydrolysis. | Zeolite ZSM-5 (SiO₂/Al₂O₃ = 30), <2 µm particle size. |

| Hydrotreating Catalyst | For HEFA or pyrolysis oil upgrading experiments. | NiMo/γ-Al₂O₃ (3-5 mm extrudates), pre-sulfided. |

| Lignin Model Compound | For studying depolymerization pathways. | Guaiacylglycerol-β-guaiacyl ether (GGGE). |

| Stable Isotope Tracer | For tracking carbon flow in metabolic/catalytic studies. | ¹³C₆-Glucose (for energy crops), ¹³C-Palmitic Acid (for waste oils). |

| Anaerobic Digestion Inoculum | For biogas potential assays of wet waste streams. | Adapted anaerobic sludge, certified volatile solids content. |

Visualizations

Title: SAF Feedstock Assessment Workflow

Title: Decentralized Biomass Logistics Network

Technical Support Center: Troubleshooting Guides & FAQs

This support center provides solutions for common experimental challenges in biomass logistics research for decentralized Sustainable Aviation Fuel (SAF) production. The guidance is framed within the thesis: Optimizing biomass logistics for decentralized SAF production research.

FAQ Section: Common Experimental & Analytical Issues

Q1: During biomass feedstock characterization, our measured bulk density values show high variance (>15% CV) between replicates, skewing our logistics models. How can we improve measurement consistency? A: High variance is often due to non-standardized compaction methods and particle size distribution. Implement the following protocol:

- Use a standardized test cylinder (e.g., ASABE S269.5 standard: 0.2 m diameter, height ≥0.3 m).

- Pre-process all biomass through a defined mill screen (e.g., 3 mm).

- Fill the cylinder from a set height (e.g., 0.5 m) using a funnel to ensure consistent drop.

- Apply a controlled static load (e.g., 5 kg weight for 60 seconds) to simulate handling compaction.

- Weigh and calculate density (kg/m³). Conduct triplicate runs per feedstock batch.
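
A short script helps check whether replicates meet the variance target. The sketch below assumes the example cylinder dimensions from the protocol and uses hypothetical net masses; the coefficient of variation is the sample standard deviation divided by the mean.

```python
import math
from statistics import mean, stdev


def bulk_density(net_mass_kg, diameter_m=0.2, height_m=0.3):
    """Bulk density (kg/m3) of biomass filling a test cylinder of the given dimensions."""
    volume_m3 = math.pi * (diameter_m / 2) ** 2 * height_m
    return net_mass_kg / volume_m3


def coefficient_of_variation(values):
    """Sample CV in percent (standard deviation / mean * 100)."""
    return stdev(values) / mean(values) * 100


# Hypothetical net masses (kg) from triplicate fills of one feedstock batch.
masses = [0.61, 0.64, 0.59]
densities = [bulk_density(m) for m in masses]
print([round(d, 1) for d in densities], round(coefficient_of_variation(densities), 1))
```

If the CV stays above the 15% mentioned in the question after standardizing the fill, the particle size distribution is the next thing to check.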

Q2: Our GIS-based analysis for optimal pre-processing hub location is sensitive to seasonal variability in feedstock moisture content, altering the economic radius. How do we parameterize this variability? A: Integrate seasonal moisture modifiers into your GIS model using the following steps:

- Data Collection: For your region, compile historical monthly average precipitation and humidity data.

- Establish Correlation: Conduct a controlled field experiment to create a moisture uptake model for your key feedstocks (e.g., agricultural residues, energy crops).

- Protocol: Place dried samples in open mesh containers at field sites. Weigh samples weekly over 12 months. Correlate weight gain with historical weather data for that period/location.

- Apply Modifier: Use the derived seasonal moisture coefficient to adjust the effective biomass weight and bulk density in your transport cost calculations for each month.
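
The modifier step can be sketched as follows. It assumes weight-limited trucks, so the cost per dry ton scales with the wet mass hauled; the monthly moisture values, tariff, and distance are hypothetical placeholders for the coefficients derived from the field experiment.

```python
def wet_tons_per_dry_ton(moisture_wb):
    """Wet mass hauled per dry ton at a wet-basis moisture fraction (0-1)."""
    return 1.0 / (1.0 - moisture_wb)


def monthly_transport_cost(rate_per_wet_ton_km, distance_km, monthly_moisture_wb):
    """Transport cost per dry ton for each month, given monthly wet-basis moisture."""
    return {
        month: rate_per_wet_ton_km * distance_km * wet_tons_per_dry_ton(mc)
        for month, mc in monthly_moisture_wb.items()
    }


# Hypothetical seasonal moisture profile for one feedstock (wet-basis fractions).
moisture = {"Jan": 0.30, "Apr": 0.22, "Jul": 0.12, "Oct": 0.18}
costs = monthly_transport_cost(0.25, 60, moisture)
for month, cost in costs.items():
    print(f"{month}: {cost:.2f} per dry ton")
```

For volume-limited loads the same structure applies, with the bulk density modifier replacing the mass term.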

Q3: When testing different baling/compaction technologies for low-bulk-density feedstocks (e.g., corn stover), how do we quantitatively compare the total logistical cost impact? A: Develop a standardized evaluation matrix. The key is to measure parameters that directly feed into Total Logistics Cost ($/dry ton) models. Below is a comparative framework.

| Parameter to Measure | Experimental Protocol | Unit | Impact on Cost Model |

|---|---|---|---|

| Final Bulk Density | ASABE S269.5 (see Q1) after baling. | kg/m³ | Directly affects transport cost (more kg/load). |

| Density Recovery after Decompaction | Measure density immediately after compaction, then again after standard simulated handling (e.g., drop test); report the retained fraction. | % | Impacts handling losses and downstream processing uniformity. |

| Energy for Compaction | Use a load cell and energy meter on the baler press. Record kWh per ton of input biomass. | kWh/ton | Contributes to pre-processing operating cost. |

| Particle Size Reduction Post-Bale | Sieve analysis (ASTM C136/C136M) of material after bale breakup. | % fines (<3 mm) | Affects conversion yield and preprocessing energy. |

| Moisture Loss/Gain during Storage | Instrument bales with moisture sensors, track over 30-90 days in controlled storage. | % point change | Impacts dry matter loss, storage cost, and conversion efficiency. |

Q4: Our life cycle analysis (LCA) for a decentralized network shows unexpected emissions hotspots from feedstock storage. What are the key experimental parameters to measure for accurate storage modeling? A: Storage emissions are driven by microbial activity. Establish a micro-scale storage simulation to measure:

- Dry Matter Loss (DML): The most critical metric.

- Protocol: Place biomass in closed containers with controlled ventilation at set temperatures (20°C, 35°C) and moisture contents (15%, 25% w.b.). Weigh initially and after 30, 60, and 90 days. Use dry weight (oven-dry at 105°C for 24 h) for accurate DML calculation.

- CO₂/CH₄ Off-gassing: Use a gas chromatograph to sample headspace gas in sealed storage jars weekly.

- Heat Generation: Insert temperature probes into the center of biomass piles to monitor for exothermic activity.
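
Because moisture changes during storage, DML has to be computed on a dry basis rather than from raw weight change. A minimal sketch, with hypothetical weighings:

```python
def dry_mass(wet_mass_kg, moisture_wb):
    """Dry mass from wet mass and wet-basis moisture fraction (0-1)."""
    return wet_mass_kg * (1.0 - moisture_wb)


def dry_matter_loss_pct(initial_wet_kg, initial_mc, final_wet_kg, final_mc):
    """DML (%) between two weighings, each converted to a dry basis."""
    start = dry_mass(initial_wet_kg, initial_mc)
    end = dry_mass(final_wet_kg, final_mc)
    return (start - end) / start * 100


# Hypothetical container: 2.00 kg at 25% w.b. initially; weighings at 30, 60, 90 days.
weighings = {30: (1.95, 0.24), 60: (1.90, 0.235), 90: (1.86, 0.23)}
for day, (wet_kg, mc) in weighings.items():
    print(day, round(dry_matter_loss_pct(2.00, 0.25, wet_kg, mc), 1))
```

Running the same calculation for each temperature and moisture treatment gives the DML curves needed as LCA inputs.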

Experimental Protocol: Integrated Hurdle Assessment

Title: Protocol for Evaluating Geographic, Seasonal, and Density Interactions in Feedstock Logistics.

Objective: To simultaneously assess the combined impact of the three key hurdles on feedstock quality and logistics cost for a given candidate biomass.

Methodology:

- Site & Sample Selection: Select 3-5 representative collection points within a plausible geographic dispersion (e.g., 50 km radius). At each point, collect ~100 kg of target feedstock.

- Seasonal Sampling: Repeat collection at two key seasons: at harvest (low moisture) and post-harvest rainy season (high moisture).

- Pre-processing: Split each sample. Process one half with a standard mill (3 mm screen). Process the other half with a densification method (e.g., pellet mill).

- Measurement Matrix: For each sample (Location x Season x Pre-process), measure:

- Bulk Density (ASABE S269.5)

- Moisture Content (ASABE S358.2)

- Calorific Value (ASTM D5865)

- Angle of Repose (for flowability)

- Data Integration: Input results into a centralized logistics model (e.g., a mixed-integer linear programming model) that includes transport distance from each location to a central depot, storage duration, and preprocessing cost. The model should output a normalized cost ($/GJ) for each combination.
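
The normalization at the end of the protocol is a simple division once delivered cost and heating value are known for each combination. The sketch below shows only that final step, not the mixed-integer model itself; the cost and HHV figures are hypothetical.

```python
from itertools import product


def cost_per_gj(cost_per_dry_ton, hhv_gj_per_dry_ton):
    """Normalize a delivered cost ($/dry ton) to energy content ($/GJ)."""
    return cost_per_dry_ton / hhv_gj_per_dry_ton


# Hypothetical results keyed by (location, season, pre-process):
# delivered cost in $/dry ton and HHV in GJ/dry ton.
locations, seasons, processes = ["L1", "L2"], ["harvest", "rainy"], ["milled", "pelleted"]
delivered_cost = {key: 60.0 for key in product(locations, seasons, processes)}
delivered_cost[("L2", "rainy", "milled")] = 82.0      # long haul, wet, low density
delivered_cost[("L1", "harvest", "pelleted")] = 71.0  # densification cost added
hhv = {key: 17.5 for key in delivered_cost}

ranked = sorted(delivered_cost, key=lambda k: cost_per_gj(delivered_cost[k], hhv[k]))
for key in ranked[:3]:
    print(key, round(cost_per_gj(delivered_cost[key], hhv[key]), 2))
```

Keeping the results keyed by the full Location x Season x Pre-process tuple makes it straightforward to see which hurdle drives the cost difference between any two combinations.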

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

| Item | Function in Biomass Logistics Research |

|---|---|

| Standard Test Cylinders (ASABE S269.5) | Provides a consistent volume for accurate and comparable bulk density measurements of loose or baled biomass. |

| Moisture Analyzer / Oven (ASABE S358.2) | Precisely determines feedstock moisture content on a wet or dry basis, critical for yield correction and storage modeling. |

| Portable Gas Chromatograph (GC) | Measures trace gases (CO₂, CH₄, O₂) emitted during storage experiments, quantifying decomposition and emissions factors for LCA. |

| Mechanical Sieve Shaker & Stack | Analyzes particle size distribution post-processing, which affects bulk density, flowability, and conversion efficiency. |

| GIS Software (e.g., QGIS, ArcGIS) with Network Analysis | Models geographic dispersion, calculates transport distances/costs, and optimizes facility (hub) locations based on spatial data. |

| Calorimeter (Bomb) | Measures the higher heating value (HHV) of biomass samples, allowing logistics costs to be normalized to energy content ($/GJ). |

| Data Logger with Temperature/Moisture Probes | Monitors climatic conditions within storage piles or bales over time, linking environmental factors to quality degradation. |

| Uniaxial Compaction Test Rig | A lab-scale device to simulate and measure the energy required for densification (baling, pelleting) of different feedstocks. |

Technical Support Center: Troubleshooting & FAQs

This support center addresses common experimental and modeling challenges within the context of optimizing biomass logistics for decentralized Sustainable Aviation Fuel (SAF) production. The FAQs and guides are designed for researchers and scientists conducting techno-economic analyses (TEA) and life cycle assessments (LCA) in this field.

FAQ 1: How do I accurately model the variable cost of biomass collection given fluctuating moisture content? Answer: Fluctuating moisture content (MC) directly impacts dry-ton yield, transportation weight, and preprocessing energy. Implement a dynamic correction factor in your cost model. Weigh feedstock samples (wet weight) at the field edge, then dry them in a convection oven at 105°C for 24 hours to determine dry weight. Calculate MC (%) = [(Wet Weight - Dry Weight)/Wet Weight] * 100. Apply this to convert costs to a dry basis: Cost per dry ton = Cost per wet ton / (1 - MC/100).

Table 1: Cost Impact of Biomass Moisture Content

| Moisture Content (%) | Effective Dry Ton Yield per 25-ton Load (tons) | Estimated Transport Cost Penalty (% increase over 15% baseline) |

|---|---|---|

| 10 | 22.5 | -5% |

| 15 (Baseline) | 21.25 | 0% |

| 25 | 18.75 | +15% |

| 35 | 16.25 | +35% |
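
The dry-ton yield column above follows directly from the MC formula and can be reproduced with a few lines. The fixed cost per load used for the penalty calculation is an assumed placeholder; only the 25-ton load and the moisture levels come from the table.

```python
def moisture_content_pct(wet_weight, dry_weight):
    """Wet-basis moisture content: (wet - dry) / wet * 100."""
    return (wet_weight - dry_weight) / wet_weight * 100


def dry_tons_per_load(load_tons, mc_pct):
    """Dry tons carried in a weight-limited load at the given moisture content."""
    return load_tons * (1 - mc_pct / 100)


def cost_per_dry_ton(cost_per_load, load_tons, mc_pct):
    """Transport cost per dry ton for a fixed cost per load."""
    return cost_per_load / dry_tons_per_load(load_tons, mc_pct)


for mc in (10, 15, 25, 35):
    dry = dry_tons_per_load(25, mc)
    # Mass-only penalty relative to the 15% baseline, for an assumed fixed cost per load.
    penalty = cost_per_dry_ton(500, 25, mc) / cost_per_dry_ton(500, 25, 15) - 1
    print(f"MC {mc:>2}%: {dry:5.2f} dry tons, penalty {penalty:+.1%}")
```

The mass-only penalty computed this way is roughly -6%, +13%, and +31% at 10%, 25%, and 35% MC. The table's estimates are somewhat higher at high moisture and may fold in other moisture-related effects.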

FAQ 2: What is the standard protocol for determining optimal depot location using Geographic Information Systems (GIS)? Answer: Follow this multi-step protocol for locational cost optimization:

- Data Collection: Gather geospatial data on biomass fields (yield, type, harvest windows), road networks (type, tolls, weight limits), and potential depot sites (land cost, zoning).

- Buffer Analysis: Create travel-time buffers around candidate depot locations using network analysis tools (e.g., in ArcGIS or QGIS).

- Cost Allocation: Assign each biomass source to the depot with the lowest combined cost of collection and primary transportation.

- Model Iteration: Run a location-allocation model (e.g., p-median model) to minimize total system transportation cost. Validate with ground-truthed travel time samples.
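
For small candidate sets, the p-median step can be solved by brute force, which is a useful cross-check on GIS tool output. The sketch below is not how ArcGIS or QGIS solve the problem internally, and the cost matrix is hypothetical.

```python
from itertools import combinations


def p_median(cost, p):
    """Brute-force p-median: choose p depots minimizing total assignment cost.

    cost[i][j] is the cost of serving biomass source i from candidate depot j
    (e.g. supply in tons x distance x rate). Suitable only for small instances.
    """
    n_depots = len(cost[0])
    best_total, best_open = float("inf"), None
    for open_depots in combinations(range(n_depots), p):
        # Each source is assigned to its cheapest open depot.
        total = sum(min(row[j] for j in open_depots) for row in cost)
        if total < best_total:
            best_total, best_open = total, open_depots
    return best_open, best_total


# Hypothetical cost matrix: 4 biomass sources (rows) x 3 candidate depots (columns).
cost = [
    [10, 40, 30],
    [35, 12, 28],
    [30, 15, 20],
    [45, 38, 9],
]
print(p_median(cost, 1))
print(p_median(cost, 2))
```

The number of combinations grows quickly with the number of candidate sites, so larger studies need the heuristic or integer-programming solvers built into location-allocation tools.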

Experimental Protocol: Biomass Densification Energy Measurement

Objective: Quantify the specific energy consumption (kWh per dry ton) of a baling or pelleting process.

Methodology:

- Preparation: Pre-weigh a batch of biomass feedstock with known moisture content.

- Instrumentation: Connect the densification equipment (e.g., baler, pellet mill) to a power analyzer (e.g., FLUKE 434 Series II) to record real-time voltage, current, and power factor.

- Process & Measure: Feed the biomass through the equipment at a controlled, steady rate. Record the total energy consumed (in kWh) from the power analyzer for the entire batch.

- Output Weighing: Weigh the total mass of densified product (bales or pellets).

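- Calculation: Specific Energy (kWh/dry ton) = [Energy Consumed (kWh)] / [Output Mass (ton) * (1 - MC)], where MC is the wet-basis moisture content of the weighed output expressed as a fraction (e.g., 0.15 for 15%). Perform triplicate runs.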
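
The calculation step translates directly into code. The run data below are hypothetical, and MC is taken as the wet-basis moisture fraction of the weighed output, which is an assumption where densification changes moisture.

```python
from statistics import mean


def specific_energy(energy_kwh, output_mass_ton, mc_fraction):
    """Specific energy (kWh/dry ton) = energy / (output mass * (1 - MC)).

    mc_fraction is the wet-basis moisture content of the output as a fraction (0-1).
    """
    return energy_kwh / (output_mass_ton * (1 - mc_fraction))


# Hypothetical triplicate runs: (energy in kWh, output mass in tons, output MC fraction).
runs = [(42.0, 0.50, 0.12), (44.5, 0.52, 0.12), (40.8, 0.49, 0.11)]
results = [specific_energy(*run) for run in runs]
print([round(r, 1) for r in results], round(mean(results), 1))
```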

FAQ 3: Why does my sensitivity analysis show storage loss as the most critical parameter? Answer: Dry matter loss (DML) during storage has a compounding effect on all prior logistics costs. A high DML means all costs incurred for collection, transportation, and handling of the lost material are sunk. In TEA, this drastically increases the effective cost of usable feedstock. To mitigate, implement controlled storage experiments with different covering methods (tarps, breathable films) and monitor temperature/humidity to quantify DML accurately for your specific biomass.

Table 2: Typical Dry Matter Loss Ranges by Storage Method

| Storage Method | Duration (Months) | Dry Matter Loss Range (%) | Key Cost Implication |

|---|---|---|---|

| Open Stack (no cover) | 6 | 15-25% | Very high cost amplification |

| Tarp-covered Pile | 6 | 8-15% | Moderate cost impact |

| Enclosed Shed (ventilated) | 6 | 3-8% | Lower impact, but higher capex |

| Ensiled (anaerobic) | 9 | 5-10% | Maintains moisture, alters preprocessing cost |
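
The compounding effect described in FAQ 3 can be made concrete: every dollar spent before storage is spread over only the material that survives. The sketch below uses the midpoints of the DML ranges in the table; the upstream cost of $70 per dry ton is an assumed placeholder.

```python
def effective_cost_per_usable_ton(cost_before_storage, dml_pct):
    """Cost per usable dry ton when upstream costs are spread over surviving material."""
    return cost_before_storage / (1 - dml_pct / 100)


# Midpoints of the DML ranges in the table above; the $70/dry ton upstream cost is assumed.
dml_midpoints = {
    "Open stack": 20.0,
    "Tarp-covered pile": 11.5,
    "Enclosed shed": 5.5,
    "Ensiled": 7.5,
}
for method, dml in dml_midpoints.items():
    print(f"{method:<18} {effective_cost_per_usable_ton(70.0, dml):6.2f} $/usable dry ton")
```

The storage method's own cost is deliberately left out so the effect of DML alone is visible. A full comparison would add it back before ranking the options.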

Biomass Logistics Cost Factor Decision Tree

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Biomass Logistics Research

| Item & Example Product | Function in Research Context |

|---|---|

| Moisture Analyzer (e.g., MX-50) | Precisely determines feedstock moisture content for yield correction and drying energy calc. |

| Portable Data Logger (e.g., HOBO) | Monitors temperature and humidity within storage piles to correlate with dry matter loss. |

| GPS Data Logger | Records route, time, and idle periods for ground-truthing transportation model assumptions. |

| Power Analyzer (e.g., FLUKE 434) | Measures real-time energy consumption of preprocessing equipment (shredders, pellet mills). |

| Density Measurement Kit (Box, Scale) | Determines bulk density before/after densification to model transport volume efficiency. |

| GIS Software (e.g., QGIS, ArcGIS Pro) | Platform for spatial analysis, depot location optimization, and route costing. |

| TEA/LCA Software (e.g., OpenLCA, Excel-based Models) | Integrates all experimental data into a full cost and environmental impact model. |

Research Integration for Logistics Optimization

Technical Support Center

FAQs & Troubleshooting for Biomass Logistics Modeling

Q1: During our simulation of a Hub-and-Spoke model, we are getting unrealistically low transportation costs. What could be the issue? A: This is often due to an incomplete cost parameter set. Ensure your model includes:

- Empty Return Leg Costs: Include cost for trucks returning empty from the central hub to collection points.

- Downtime/Opportunity Cost: Account for driver and asset waiting time during unloading at the hub.

- Biomass Degradation: Factor in dry matter loss or quality degradation during longer storage/transport to a central hub, which reduces yield and raises the effective $/ton.

Q2: Our feedstock quality variance in the Distributed Collection model is causing pre-processing inconsistencies at our pilot conversion facility. How can we mitigate this? A: Implement a standardized Quality Acceptance Protocol (QAP) at each collection point. Key steps:

- On-site Rapid Analysis: Use portable NIR spectrometers to measure moisture, carbohydrate, and ash content.

- Pre-processing Standardization: Apply a simple, uniform pre-processing step (e.g., single-pass shredding) at all nodes to homogenize particle size.

- Batching Logic: Create blended batches from multiple distributed nodes to average out quality fluctuations before shipment to the conversion unit.
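
The batching logic amounts to a mass-weighted average checked against an acceptance limit. The sketch below covers dry-basis attributes such as ash and glucan; wet-basis moisture would need to be weighted by wet mass instead. The lot data and the ash limit are hypothetical.

```python
def blend_quality(lots):
    """Dry-mass-weighted average of each dry-basis quality attribute across lots.

    Each lot is a dict with 'dry_tons' plus attribute values in percent (dry basis).
    """
    total = sum(lot["dry_tons"] for lot in lots)
    attributes = [k for k in lots[0] if k != "dry_tons"]
    return {a: sum(lot["dry_tons"] * lot[a] for lot in lots) / total for a in attributes}


def within_spec(quality, spec):
    """True if every attribute listed in `spec` is at or below its maximum."""
    return all(quality[a] <= limit for a, limit in spec.items())


# Hypothetical lots from two collection nodes, as measured by on-site NIR.
lots = [
    {"dry_tons": 10, "ash": 4.0, "glucan": 38.0},
    {"dry_tons": 30, "ash": 8.0, "glucan": 34.0},
]
blend = blend_quality(lots)
print(blend, within_spec(blend, {"ash": 7.5}))
```

Here the high-ash lot would fail an assumed 7.5% limit on its own but passes once blended, which is the point of batching across nodes.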

Q3: How do we accurately model the "tipping point" where a Distributed model becomes more cost-effective than a Hub-and-Spoke model for our specific region? A: You must run a comparative Total Delivered Cost analysis with the following key variables. Use sensitivity analysis on feedstock density and conversion plant scale.

Table 1: Key Variables for Supply Chain Geometry Cost Modeling

| Variable Category | Hub-and-Spoke Model Parameters | Distributed Collection Model Parameters |

|---|---|---|

| Capital Expenditure (CapEx) | Central pre-processing facility cost, High-capacity equipment | Multiple small-scale pre-processing nodes, simpler equipment |

| Operational Expenditure (OpEx) | Primary transport to hub, Storage management cost, Energy for large processing | Transport from nodes to plant, Decentralized labor/energy costs |

| Transport Metrics | Average distance: Collection Point to Hub, Fleet type: Large trucks | Average distance: Node to Plant, Fleet type: Medium trucks |

| Feedstock Critical Factors | Biomass density (>3 dry tons/acre), Seasonal harvest window | Geographically dispersed, low-density biomass (<2 dry tons/acre) |

| Sensitivity Levers | Hub location optimization, Pre-processing technology efficiency | Number of collection nodes, Level of pre-processing at node |

Experimental Protocol: Field-to-Gate Cost Analysis for Two Geometries

Objective: Empirically compare the delivered cost per dry ton of agricultural residue (e.g., corn stover) for two supply chain configurations serving a decentralized 20 million gallon per year SAF pilot plant.

Protocol:

- Define Study Area: Select a 50-mile radius around the proposed plant location. Geospatially map all potential feedstock sources.

- Hub-and-Spoke Simulation:

- Hub Siting: Use centroid modeling or a p-median model to identify the optimal single hub location for aggregate collection.

- Data Collection: Log transport distances from 10 random source points to the hub, then hub to plant. Record simulated weights pre- and post-baling at hub.

- Cost Calculation: Apply local freight rates ($/mile/ton) separately for the two transport legs. Add modeled costs for baling and storage at the hub.

- Distributed Collection Simulation:

- Node Siting: Identify 5 existing agri-industrial facilities (e.g., co-ops, grain elevators) as potential pre-processing nodes.

- Data Collection: Log transport distances from source fields to the nearest node, then node to plant. Record simulated weights pre- and post-grinding at node.

- Cost Calculation: Apply freight rates. Add modeled costs for grinding and minimal storage at each node.

- Analysis: Calculate total cost per dry ton for each model. Perform Monte Carlo simulations (1000+ iterations) varying key inputs (distance, yield, fuel price) to generate a probabilistic cost distribution.
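
The Monte Carlo step in the Analysis item can be sketched as follows. This is a minimal illustration rather than the study's actual model: the function names, the triangular distributions, and every numeric range (distances, freight rate, processing cost) are placeholder assumptions to be replaced with the values logged in the protocol. Yield variation is omitted for brevity.

```python
import random
import statistics

def simulate_delivered_cost(leg1_miles, leg2_miles, processing_cost,
                            n_iter=1000, seed=42):
    """Return sorted simulated delivered costs ($/dry ton) for one geometry.

    Each argument is a (low, mode, high) tuple. leg1/leg2 are one-way
    distances for the two transport legs; processing_cost is in $/dry ton.
    All ranges are hypothetical placeholders.
    """
    rng = random.Random(seed)
    costs = []
    for _ in range(n_iter):
        # Freight rate in $/mile/ton, varied to mimic fuel-price swings (assumed range).
        rate = rng.triangular(0.20, 0.40, 0.30)
        d1 = rng.triangular(leg1_miles[0], leg1_miles[2], leg1_miles[1])
        d2 = rng.triangular(leg2_miles[0], leg2_miles[2], leg2_miles[1])
        proc = rng.triangular(processing_cost[0], processing_cost[2],
                              processing_cost[1])
        costs.append(rate * (d1 + d2) + proc)
    return sorted(costs)

def summarize(costs):
    """Mean plus approximate 5th and 95th percentiles of a sorted cost list."""
    n = len(costs)
    return {
        "mean": statistics.mean(costs),
        "p05": costs[int(0.05 * (n - 1))],
        "p95": costs[int(0.95 * (n - 1))],
    }

hub = summarize(simulate_delivered_cost((5, 15, 30), (10, 20, 40), (12, 18, 25)))
nodes = summarize(simulate_delivered_cost((2, 6, 12), (15, 25, 45), (8, 12, 18)))
print("Hub-and-spoke:", hub)
print("Distributed:  ", nodes)
```

Using the same seed for both geometries applies common random numbers, so differences between the two distributions reflect the geometry inputs rather than sampling noise.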

Experimental Workflow Diagram

Title: Biomass Supply Chain Geometry Simulation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Biomass Logistics Field Research

| Item | Function / Application |

|---|---|

| Portable Near-Infrared (NIR) Analyzer | Rapid, on-site determination of biomass composition (moisture, glucan, xylan, lignin) for quality control at collection points. |

| Geographic Information System (GIS) Software | Platform for spatial analysis, optimal facility siting, and transport route mapping for scenario modeling. |

| Discrete-Event Simulation (DES) Software | Tool for dynamic modeling of supply chain operations, including queue times, equipment utilization, and stochastic variability. |

| Unmanned Aerial Vehicle (UAV / Drone) | For remote sensing of crop residue coverage and yield estimation across large or inaccessible fields. |

| Standardized Biomass Sampling Kit | Includes corers, sieves, moisture cans, and grinders for collecting representative feedstock samples for lab validation of NIR data. |

| Logistics Cost Database Template | Customizable spreadsheet for capturing and calculating all CapEx, OpEx, and transport cost components specific to biomass. |

Strategic Frameworks and Technologies for Efficient Biomass Handling and Transport

Technical Support Center: Troubleshooting Guides & FAQs

Q1: During lab-scale baling of herbaceous biomass, we achieve inconsistent bale density. What are the primary causative factors and corrective actions? A: Inconsistent bale density is commonly caused by non-uniform feedstock moisture content, improper particle size distribution, or fluctuating compression pressure.

- Troubleshooting Steps:

- Measure Moisture: Use a moisture meter to verify feedstock moisture is uniform and within the optimal range (typically 12-20% w.b. for storage). Segment and process biomass in batches based on moisture content.

- Analyze Feedstock: Sieve the pre-processed biomass. For consistent densification, the majority of material should fall within a narrow size range (e.g., 2-10 cm). Adjust shredding/chopping protocols.

- Calibrate Equipment: Verify the calibration of the hydraulic pressure gauge on the baler. Ensure the compression chamber is free of residual material that prevents full piston stroke.

Q2: Our pelletizer dies are clogging frequently when processing torrefied biomass. How can we mitigate this? A: Torrefaction degrades hemicellulose and drives off moisture, which weakens the natural binding that lignin and moisture normally provide, and it leaves the material more brittle and abrasive. Clogging is often due to excessive heat or insufficient binding.

- Troubleshooting Steps:

- Adjust Formulation: Introduce a binder. A starch-based binder (e.g., 1-3% by weight) can restore agglomeration. For R&D, test methyl cellulose or lignosulfonates.

- Optimize Process Parameters: Reduce the pre-heating temperature of the die. The torrefied material already has low moisture; excessive heat can degrade remaining binders. Monitor amperage on the pelletizer motor to detect overloading early.

- Material Preparation: Ensure the particle size of the torrefied feedstock is very fine (<1mm). Coarse, abrasive particles increase friction and compaction within the die channels.

Q3: After torrefaction, we observe a massive loss in mass yield but the energy yield remains high. Is this expected, and how do we optimize the trade-off? A: Yes, this is characteristic of torrefaction. The process drives off volatile, low-energy components (water, light organics), concentrating carbon and thus energy, in the solid yield.

- Optimization Protocol: A Response Surface Methodology (RSM) experiment is recommended to model the trade-off.

- Variables: Set temperature (250-300°C) and residence time (10-60 min) as independent variables.

- Responses: Measure Mass Yield (%), Higher Heating Value (HHV, MJ/kg), and Energy Yield (%), where Energy Yield (%) = Mass Yield (%) * (HHV_torr / HHV_raw).

- Goal: Use statistical software to find the parameter set that maximizes Energy Yield while meeting downstream process requirements for grindability and hydrophobicity.
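
As a small worked example of the energy-yield response, the sketch below takes mass yield as a percentage and scales it by the ratio of heating values. The run data and the raw-feedstock HHV are hypothetical numbers chosen only to show the arithmetic.

```python
def energy_yield_pct(mass_yield_pct, hhv_torr, hhv_raw):
    """Energy yield (%) = mass yield (%) x (HHV_torr / HHV_raw)."""
    if hhv_raw <= 0:
        raise ValueError("hhv_raw must be positive")
    return mass_yield_pct * hhv_torr / hhv_raw

# Hypothetical runs: (temperature in C, residence time in min,
#                     mass yield in %, HHV of torrefied solid in MJ/kg)
runs = [
    (250, 30, 85.0, 19.5),
    (275, 30, 75.0, 21.0),
    (300, 30, 62.0, 22.8),
]
HHV_RAW = 18.0  # MJ/kg, assumed value for the raw feedstock

for temp, minutes, mass_yield, hhv in runs:
    ey = energy_yield_pct(mass_yield, hhv, HHV_RAW)
    print(f"{temp} C, {minutes} min: mass yield {mass_yield:.0f}%, "
          f"energy yield {ey:.1f}%")
```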

Q4: For decentralized SAF production research, which densification method provides the best logistic efficiency? A: The optimal method depends on the specific supply chain model. Key quantitative comparisons are below.

Table 1: Comparative Analysis of On-site Biomass Densification Methods

| Parameter | Unit | Baling | Pelletization | Torrefaction |

|---|---|---|---|---|

| Typical Bulk Density After Processing | kg/m³ | 150-250 | 500-700 | 600-800 |

| Energy Density Increase | % | 0-5 | 10-20 | 20-30 |

| Moisture Tolerance | % w.b. | High (≤25) | Medium (≤15) | Low (≤10) |

| Typical Mass Yield | % | ~98 | ~95 | 60-80 |

| Typical Energy Yield | % | ~98 | ~92 | 75-90 |

| Hydrophobicity | - | Low | Medium | High |

| Grindability Improvement | - | Low | High | Very High |

Table 2: Key Research Reagent Solutions for Biomass Preprocessing Experiments

| Reagent/Material | Function in Research Context |

|---|---|

| Starch-based Binders (e.g., Corn Starch) | Added during pelletization (1-3% wt.) to improve durability, especially for low-lignin or torrefied feedstocks. |

| Lignosulfonates | Alternative organic binder; useful for studying the impact of sulfur content on downstream catalytic SAF conversion. |

| Silica Sand (various mesh sizes) | Used for abrasion testing in durability tumblers (e.g., ASABE S269.5) to simulate handling degradation of pellets. |

| Desiccant (e.g., Indicating Silica Gel) | For creating controlled low-moisture environments to test the equilibrium moisture content and hydrophobicity of torrefied biomass. |

| Thermogravimetric Analysis (TGA) Standards (e.g., Nickel, Curie Point standards) | To calibrate TGA equipment used for proximate analysis (moisture, volatiles, fixed carbon, ash) of raw and densified samples. |

Experimental Protocols

Protocol 1: Determining Pellet Durability Index (DI)

Objective: Quantify the mechanical robustness of pellets per ASABE S269.5.

Materials: Pellet durability tester (tumbler), sieve (specified size), balance.

Method:

- Sieve 500g of pellets to remove fines. Record mass (A).

- Load pellets into the standardized tumbler chamber.

- Rotate for 500 revolutions at 50 rpm.

- Remove pellets and sieve again using the same method. Record the mass of retained pellets (B).

- Calculation: DI (%) = (B / A) * 100.

Protocol 2: Laboratory-Scale Torrefaction

Objective: Produce torrefied biomass samples at varying severities.

Materials: Tubular furnace/reactor, nitrogen cylinder, sample crucibles, flow controllers.

Method:

- Load 20g of dried biomass (<2mm particle size) into a crucible.

- Place crucible in reactor. Purge with N2 at 1 L/min for 5 minutes to establish inert atmosphere.

- Heat reactor to target temperature (e.g., 250, 275, 300°C) at 10°C/min under continuous N2 flow (0.5 L/min).

- Hold at target temperature for desired residence time (e.g., 30 min).

- Cool reactor under N2 flow to below 50°C. Remove sample, weigh, and calculate mass yield.

Visualizations

Troubleshooting Inconsistent Bale Density

Torrefaction Mass vs. Energy Yield Trade-off

Selecting a Densification Method for SAF Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our biomass transport cost model, rail consistently shows lower per-ton-mile costs than trucking, yet our simulated total logistics costs are higher for rail. What is the primary discrepancy we should investigate?

- A: The most common issue is omitting or underestimating fixed and terminal costs. Rail transport involves significant costs for transloading, railcar spotting, and drayage that are not present in direct trucking. Furthermore, low throughput volumes may not justify the fixed cost of rail sidings. Review your model for the following data points, which are often sourced from carrier quotes or industry benchmarks:

- Transloading fee per ton ($/ton)

- Drayage cost from origin to rail ramp and from destination ramp to biorefinery ($/mile)

- Railcar daily demurrage charges (for detention)

- Minimum volume commitment for cost-effective rail service

Q2: During our intermodal (truck-rail-truck) simulation, we experience "bullwhip effect" volatility in biorefinery feedstock inventory. What operational protocols can stabilize supply?

- A: Inventory volatility is often a result of inconsistent rail transit times and poor visibility. Implement the following experimental protocol:

- Data Collection Phase: Over a 12-week period, track and log the following for each shipment: (a) Gate-in time at origin rail ramp, (b) Actual departure time, (c) Actual arrival time at destination ramp, (d) Gate-out time after drayage.

- Analysis: Calculate the mean and standard deviation for each leg. You will typically find rail transit has the highest variance.

- Protocol Adjustment: Introduce a safety stock buffer calculated as:

Safety Stock = (Daily Consumption Rate) * (Standard Deviation of Total Transit Time in Days) * Z-score (e.g., 1.65 for a 95% service level).

- Ordering Rule: Base procurement orders on the inventory position, i.e., (On-Hand Inventory) + (In-Transit Stock), rather than on on-hand inventory alone.
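
The safety-stock calculation and the ordering rule can be sketched as below. The transit-time log, the consumption rate, and the order-up-to target are hypothetical; the order-up-to policy itself is an assumption used here only to show how in-transit stock enters the ordering decision.

```python
import statistics

def safety_stock(daily_consumption_tons, transit_times_days, z_score=1.65):
    """Safety stock = daily consumption x std dev of transit time x Z-score."""
    sigma = statistics.stdev(transit_times_days)  # sample standard deviation, days
    return daily_consumption_tons * sigma * z_score

def order_quantity(target_level_tons, on_hand_tons, in_transit_tons):
    """Order up to a target level using on-hand plus in-transit stock.

    The order-up-to target is an assumed policy, not part of the source protocol.
    """
    inventory_position = on_hand_tons + in_transit_tons
    return max(0.0, target_level_tons - inventory_position)

# Hypothetical total transit times (days) logged during the 12-week phase.
transit_log = [9, 11, 8, 14, 10, 12, 9, 13]
buffer_tons = safety_stock(daily_consumption_tons=150, transit_times_days=transit_log)
print(f"Safety stock: {buffer_tons:.0f} tons")
print("Order:", order_quantity(target_level_tons=3000, on_hand_tons=900,
                               in_transit_tons=1200), "tons")
```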

Q3: Our geospatial analysis for decentralized SAF production sites suggests a rail-optimal location, but local infrastructure assessment finds no active rail siding. What are the key cost and feasibility experiments to run?

- A: This requires a phased capital investment analysis versus long-term operational savings.

- Feasibility & Cost Experiment: Engage with shortline railroad companies and civil engineers for a site assessment. Model the costs as per the table below.

- Sensitivity Analysis: Run your total logistics cost model under different throughput scenarios (e.g., 50k, 100k, 200k tons/year) comparing the "Truck-Only" baseline against the "Rail with Siding Investment" scenario over a 15-year horizon. The net present value (NPV) of the siding project is critical.
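
A minimal sketch of the NPV comparison across throughput scenarios follows. The siding cost, the per-ton logistics saving of rail over truck, and the 8% discount rate are illustrative assumptions, not values from the source; in practice the saving would come from the total logistics cost model.

```python
def npv(rate, cash_flows):
    """Net present value; cash_flows[0] occurs at year 0."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))

def siding_npv(siding_capex, annual_tons, saving_per_ton, years=15, rate=0.08):
    """NPV of building a siding, given a constant annual saving versus truck-only."""
    flows = [-siding_capex] + [annual_tons * saving_per_ton] * years
    return npv(rate, flows)

# Hypothetical inputs: $1.0M siding, $1.50/ton saving versus the truck-only baseline.
for tons in (50_000, 100_000, 200_000):
    value = siding_npv(1_000_000, tons, 1.50)
    print(f"{tons:>7} tons/year: NPV = ${value:,.0f}")
```

A negative NPV at a given throughput suggests the truck-only baseline remains preferable at that volume under these assumptions.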

Quantitative Data Summary

Table 1: Comparative Cost Structure for Biomass Transport Modes (Model Estimates)

| Cost Component | Truck (Direct) | Rail (Origin to Destination) | Intermodal (Truck-Rail-Truck) |

|---|---|---|---|

| Variable Cost ($/ton-mile) | 0.25 - 0.35 | 0.05 - 0.10 | 0.08 - 0.15 |

| Fixed Terminal Cost ($/ton) | 5 - 10 | 20 - 40 | 25 - 45 |

| Typical Lead Time (Days) | 1 - 3 | 7 - 14 | 5 - 10 |

| Lead Time Variability (σ in Days) | Low (0.5) | High (2-4) | Medium-High (1.5-3) |

| Optimal Shipment Size | 20 - 25 tons | 1,000 - 2,000 tons | 1,000 - 2,000 tons |

Table 2: Rail Siding Installation Cost Breakdown

| Cost Item | Estimated Range | Notes |

|---|---|---|

| Initial Engineering & Permitting | $25,000 - $75,000 | Site-specific, includes environmental review |

| Track Construction (per foot) | $300 - $500 | Includes grading, ballast, ties, rail |

| Switch Installation | $50,000 - $100,000 | Connection to mainline |

| Site Preparation (Grading, Drainage) | $50,000 - $200,000 | Highly variable |

| Total Estimated Cost | $500,000 - $1.5M+ | For a ~2,000 ft siding |

Experimental Protocols

Protocol 1: Modal Break-Even Analysis for Biomass Corridors

- Objective: Determine the minimum one-way distance where rail or intermodal costs become lower than truck-only for a given annual biomass volume.

- Methodology:

- Define a specific biomass (e.g., corn stover) with a standardized bulk density (e.g., 5.5 lb/ft³ baled).

- For distances from 50 to 500 miles in 50-mile increments, calculate total annual cost.

- Total Cost Formula:

Total Cost = (Variable Cost * Distance * Annual Tons) + (Fixed Terminal Cost * Annual Tons) + (Inventory Carrying Cost * Value * Lead Time)

- Plot total cost vs. distance for each mode. The intersection points are the break-even distances for low, medium, and high volume scenarios.
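
The break-even search can be sketched as below. The mode parameters are midpoints of the ranges in Table 1; the carrying rate, the feedstock value, and the way lead time is converted into an annual carrying cost (value held in transit for the lead time, as a fraction of a year) are assumptions made for this illustration.

```python
def total_annual_cost(mode, distance_miles, annual_tons,
                      carrying_rate=0.15, value_per_ton=60.0):
    """Total annual cost ($) for one mode at one distance.

    carrying_rate (fraction of value per year) and value_per_ton are assumed.
    """
    transport = mode["variable"] * distance_miles * annual_tons
    terminal = mode["fixed"] * annual_tons
    inventory = (carrying_rate * value_per_ton * annual_tons
                 * mode["lead_days"] / 365)
    return transport + terminal + inventory

def break_even_distance(truck, other, annual_tons, distances=range(50, 501, 50)):
    """First grid distance at which `other` is strictly cheaper than truck, else None."""
    for d in distances:
        if total_annual_cost(other, d, annual_tons) < total_annual_cost(truck, d, annual_tons):
            return d
    return None

TRUCK = {"variable": 0.30, "fixed": 7.5, "lead_days": 2}
RAIL = {"variable": 0.075, "fixed": 30.0, "lead_days": 10}
INTERMODAL = {"variable": 0.115, "fixed": 35.0, "lead_days": 7.5}

for name, mode in (("Rail", RAIL), ("Intermodal", INTERMODAL)):
    print(name, "break-even (miles):", break_even_distance(TRUCK, mode, 100_000))
```

The 50-mile grid matches the protocol; a finer grid or linear interpolation between grid points would locate the crossover more precisely.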

Protocol 2: Intermodal Transloading Efficiency Experiment

- Objective: Quantify biomass density loss and throughput rate during transloading from truck to railcar.

- Methodology:

- Weigh and sample 10 truckloads of biomass (e.g., wood chips) upon arrival at transload facility.

- Transload material using a standard radial stacker/conveyor system into a covered hopper railcar.

- Weigh the loaded railcar (tare weight known) to determine mass transferred.

- Calculate transfer efficiency: (Mass in Railcar / Total Mass from Trucks) * 100.

- Record throughput: Total Mass Transferred / Total Operator Hours.

- Repeat with different material formats (bales, chips, pellets) and equipment.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Biomass Logistics Optimization Research

| Item | Function in Research |

|---|---|

| Geographic Information System (GIS) Software | For spatial analysis of feedstock basins, routing, and facility siting based on transport networks. |

| Discrete-Event Simulation Software | To model stochastic logistics systems, simulating queues at transload facilities, transit times, and inventory levels. |

| Life Cycle Assessment (LCA) Database | To quantify and compare the greenhouse gas emissions (g CO2e/ton-mile) of different transport modes. |

| Bulk Density Tester | To determine the mass per unit volume of biomass formats, a critical variable for calculating transport capacity. |

| Moisture Content Analyzer | To measure wet and dry weight of samples, as moisture affects weight-based transport costs and material degradation. |

Visualizations

Title: Intermodal Biomass Logistics Workflow

Title: Transport Mode Optimization Research Methodology

Technical Support Center

This technical support center provides troubleshooting guidance for common experimental and modeling issues encountered during research into biomass logistics optimization for decentralized Sustainable Aviation Fuel (SAF) production.

Frequently Asked Questions (FAQs)

Q1: My Geographic Information System (GIS) model for hub candidate identification is yielding unrealistically high transportation costs. What could be the cause? A: This is often due to incorrect network impedance settings. Verify that your road network layer correctly distinguishes between road types (e.g., highway, secondary, tertiary) and that the associated speed or cost attributes are accurately calibrated for local biomass trucking regulations (e.g., weight limits on rural roads). Re-run the network analysis using impedance based on time (minutes) rather than pure distance (km), as this better reflects real-world conditions affecting fuel consumption.

Q2: The biomass feedstock quality (e.g., moisture content, composition) from my experimental preprocessing trials is highly variable, skewing my logistics cost model. How can I mitigate this? A: Implement a strict, documented feedstock sampling and preparation protocol (see Experimental Protocol 1 below). Variability often stems from inconsistent sampling methods or unrepresentative sub-sampling. For modeling, incorporate this variability as a stochastic parameter using Monte Carlo simulation rather than a single average value, which will provide a more robust cost distribution.

Q3: My Mixed-Integer Linear Programming (MILP) model for hub location is failing to find a feasible solution. What are the primary checks to perform? A: Perform these checks in order:

- Constraint Relaxation: Temporarily relax all capacity and demand constraints. If a solution appears, one of these is too restrictive.

- Demand-Supply Balance: Ensure total potential feedstock supply (tonnes/yr) across all collection points meets or exceeds the total demand input for your SAF biorefineries.

- Parameter Sanity Check: Verify that all cost coefficients (e.g., $/km/tonne) and capacity parameters are in logical, consistent units. A common error is mixing units (e.g., tonnes vs. kg, km vs. miles).

Q4: During Life Cycle Assessment (LCA) of the proposed network, how should I handle the allocation of environmental impacts for co-products from preprocessing (e.g., biochar)? A: Follow the ISO 14044 hierarchy. For decentralized SAF systems, system expansion (avoided burden approach) is often most appropriate. For example, if biochar is produced and used for soil amendment, the system is credited with avoiding the production and emissions of a conventional fertilizer. Document the choice of substituted product and its emission factor transparently.

Troubleshooting Guides

Issue: Inaccurate Biomass Yield Estimation from Satellite/Remote Sensing Data. Symptoms: Modeled feedstock availability at collection points does not align with ground-truthed data, leading to supply gaps. Diagnosis & Resolution:

- Calibrate the Yield Model: Use ground-truthed yield data from field plots to calibrate the vegetation indices (e.g., NDVI) from satellite data. A simple linear regression may be insufficient; consider machine learning models (Random Forest) that incorporate multiple indices and weather data.

- Account for Spatial Aggregation Error: Satellite data pixels may not align perfectly with farm boundaries. Use zonal statistics within GIS to calculate average indices per collection zone, not just a single point sample.

- Validate with Harvest Data: Partner with local agricultural cooperatives to obtain actual harvest data for calibration.

Issue: Unstable Results from Multi-Criteria Decision Analysis (MCDA) for Final Site Selection. Symptoms: Small changes in criterion weightings cause dramatic shifts in top-ranked hub locations, reducing stakeholder confidence. Diagnosis & Resolution:

- Perform Sensitivity Analysis: Systematically vary weights across a plausible range (e.g., ±10%) and observe rank stability. Present results as a set of robust candidate locations rather than a single "optimal" site.

- Check for Redundant Criteria: Use correlation analysis on your criterion performance matrix. If two criteria are highly correlated (e.g., "distance to highway" and "average travel speed to biorefinery"), remove one to avoid double-counting.

- Employ a Consensus Method: Use techniques like the Analytic Hierarchy Process (AHP) with pairwise comparisons from multiple experts to derive more stable, agreed-upon weights.
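
The weight sensitivity check can be sketched as a small enumeration: perturb each weight by -10%, 0%, or +10%, renormalize, and count how often each site ranks first. The site names, criterion scores, and base weights below are hypothetical.

```python
import itertools

def weighted_scores(matrix, weights):
    """Weighted-sum score per site; matrix maps site -> list of criterion scores."""
    return {site: sum(w * s for w, s in zip(weights, scores))
            for site, scores in matrix.items()}

def top_site_counts(matrix, base_weights, delta=0.10):
    """Count how often each site ranks first across all +/- delta weight combinations."""
    counts = {site: 0 for site in matrix}
    for signs in itertools.product((-1, 0, 1), repeat=len(base_weights)):
        raw = [w * (1 + s * delta) for w, s in zip(base_weights, signs)]
        total = sum(raw)
        weights = [r / total for r in raw]  # renormalize so weights sum to 1
        scores = weighted_scores(matrix, weights)
        counts[max(scores, key=scores.get)] += 1
    return counts

# Hypothetical performance matrix: scores on a 1-10 scale for three criteria.
sites = {
    "Hub A": [8, 6, 7],
    "Hub B": [7, 8, 6],
    "Hub C": [5, 9, 8],
}
print(top_site_counts(sites, base_weights=[0.5, 0.3, 0.2]))
```

Sites that rank first in most of the perturbed combinations form the robust candidate set suggested in the first step.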

Experimental Protocols

Experimental Protocol 1: Standardized Feedstock Sampling & Preprocessing for Quality Analysis

Objective: To obtain a representative sample of biomass feedstock (e.g., agricultural residue, energy crops) for determining moisture content, bulk density, and compositional analysis (cellulose, hemicellulose, lignin).

Methodology:

- Field Sampling: For a defined plot, collect biomass from at least 5 random sub-locations using a quadrat. Combine into a composite primary sample (≥10 kg wet weight).

- Size Reduction: Use a laboratory-scale shredder to homogenize the primary sample to a particle size of <10 mm.

- Sub-sampling: Coning and quartering technique. Thoroughly mix the shredded biomass, form a cone, flatten, divide into quarters. Retain two opposite quarters, combine, and repeat until a 2-3 kg representative subsample is obtained.

- Moisture Content: Immediately weigh a portion (M_wet). Dry in a forced-air oven at 105°C for 24 hours or until constant weight (M_dry). Moisture % = [(M_wet - M_dry) / M_wet] * 100.

- Compositional Analysis: Send dried, milled subsample for standardized analysis (e.g., NREL/TP-510-42618 protocol for structural carbohydrates and lignin).

Experimental Protocol 2: Determining Optimal Preprocessing Hub Throughput Capacity

Objective: To model the relationship between capital/operating costs and throughput for a candidate preprocessing technology (e.g., pelletizer, torrefaction unit) to identify minimum viable scale.

Methodology:

- Data Collection: Gather quoted capital costs (CapEx) and operating costs (OpEx: energy, labor, maintenance) for 3-5 different equipment sizes (e.g., 1, 5, 10 dry tonnes/hour capacity).

- Cost Modeling: Fit a power law model to the CapEx data: CapEx = a * (Capacity)^b. Typically, b < 1, which indicates economies of scale.

- Unit Cost Calculation: Calculate unit processing cost ($/dry tonne) for each size: Unit Cost = (Annualized CapEx + Annual OpEx) / Annual Throughput. Assume an annual operating time (e.g., 350 days * 20 hrs/day) and a capital recovery factor based on project lifespan and discount rate.

- Sensitivity Analysis: Create a tornado chart showing the impact of key variables (e.g., biomass cost, energy price, annual operating hours) on the unit cost for the selected optimal scale.
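
The cost-modeling and unit-cost steps can be sketched as follows. The power law is fitted by ordinary least squares on log-transformed data, which is one common choice rather than the only one. The equipment quotes, OpEx figures, discount rate, and project life are hypothetical.

```python
import math

def fit_power_law(capacities, capex):
    """Fit CapEx = a * Capacity^b by least squares in log-log space."""
    xs = [math.log(c) for c in capacities]
    ys = [math.log(k) for k in capex]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    b = (sum((x - mx) * (y - my) for x, y in zip(xs, ys))
         / sum((x - mx) ** 2 for x in xs))
    a = math.exp(my - b * mx)
    return a, b

def capital_recovery_factor(rate, years):
    """Converts an upfront cost into an equivalent annual payment."""
    growth = (1 + rate) ** years
    return rate * growth / (growth - 1)

def unit_cost(capex, annual_opex, capacity_tph, rate=0.08, years=15,
              hours_per_year=350 * 20):
    """Unit processing cost in $/dry tonne at full utilization."""
    annualized = capex * capital_recovery_factor(rate, years)
    return (annualized + annual_opex) / (capacity_tph * hours_per_year)

# Hypothetical quotes: capacity (dry tonnes/hour), CapEx ($), annual OpEx ($).
quotes = [(1, 400_000, 150_000), (5, 1_300_000, 520_000), (10, 2_100_000, 900_000)]
a, b = fit_power_law([q[0] for q in quotes], [q[1] for q in quotes])
print(f"CapEx ~ {a:,.0f} * Capacity^{b:.2f}")
for cap, capex, opex in quotes:
    print(f"{cap:>2} t/h: ${unit_cost(capex, opex, cap):.2f} per dry tonne")
```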

Data Presentation

Table 1: Comparative Analysis of Preprocessing Technology Options for Herbaceous Biomass

| Technology | Output Product | Typical CapEx ($/tonne capacity) | Energy Consumed (GJ/tonne input) | Mass Yield (Output/Input) | Dry Matter Loss (%) | Suitability for Decentralized Micro-Depot |

|---|---|---|---|---|---|---|

| Densification (Pelleting) | Dense Pellet | 150 - 250 | 0.8 - 1.2 | ~0.95 | 1-3 | High - Modular, scalable units available. |

| Torrefaction (Mild) | Bio-coal | 300 - 500 | 1.5 - 2.0 | ~0.70 | 25-30 | Medium - Requires careful emission control. |

| Fast Pyrolysis | Bio-oil | 600 - 900 | 2.0 - 3.0 (Net) | ~0.65 (oil) | Varies | Low - Complex operation, unstable oil. |

Table 2: Sensitivity of Total Logistics Cost ($/tonne SAF) to Key Model Parameters

| Parameter | Base Value | -20% Variation | +20% Variation | Impact on Total Cost (% Change from Base: Cost Increase / Cost Decrease) |

|---|---|---|---|---|

| Biomass Farmgate Price | $60/dry tonne | $48 | $72 | +9.2% / -8.1% |

| Average Trucking Cost | $0.30/km/tonne | $0.24 | $0.36 | +7.5% / -6.8% |

| Preprocessing Conversion Efficiency | 90% | 72% | 100%* | +11.4% / -5.2% |

| Micro-Depot Storage Loss | 5% annual | 4% | 6% | +1.3% / -1.1% |

*Represents theoretical maximum.

Visualizations

Title: Two-Stage Biomass Logistics Optimization Workflow

Title: Hub Location Model Decision Logic Flow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Research Materials for Biomass Logistics & SAF Pathway Experiments

| Item | Function in Research Context | Example/Note |

|---|---|---|

| GIS Software (e.g., QGIS, ArcGIS Pro) | Spatial analysis for mapping feedstock, calculating transport costs, and identifying candidate hub locations via network analysis. | Open-source (QGIS) or commercial. Requires road network and land-use data layers. |

| Optimization Solver (e.g., Gurobi, CPLEX) | Solves the Mixed-Integer Linear Programming (MILP) model to determine optimal hub locations and biomass flows. | Academic licenses often available. |

| Life Cycle Inventory Database (e.g., Ecoinvent, GREET) | Provides emission factors for energy, transport, and processes used in the environmental assessment of the supply chain. | GREET model is tailored for transport fuels. |

| Proximate & Ultimate Analyzer | Determines key biomass properties (moisture, ash, volatile matter, fixed carbon, CHNS composition) critical for preprocessing design. | Essential for feedstock quality specification. |

| Pellet Durability Tester | Measures the mechanical strength of densified biomass pellets, a key quality metric for handling and storage logistics. | Simulates attrition during handling. |

| Bomb Calorimeter | Determines the higher heating value (HHV) of raw and preprocessed biomass, a direct input to logistics models (energy content per tonne). | Required for mass-to-energy calculations. |

Technical Support Center

GIS Troubleshooting Guide

Q1: My GIS software fails to accurately georeference drone-captured biomass feedstock imagery, causing misalignment with existing basemaps. How do I resolve this?

A: This is typically due to incorrect coordinate reference system (CRS) settings or insufficient ground control points (GCPs).

- Protocol: Follow this GCP collection and application protocol:

- GCP Collection: Prior to drone flight, place at least 5 high-visibility targets across the study area. Record their precise coordinates using a differential GPS (DGPS) receiver with centimeter-level accuracy (e.g., RTK-GPS).

- Software Check: In your GIS (e.g., QGIS, ArcGIS Pro), ensure the project CRS matches your DGPS data (e.g., WGS 84 / UTM zone [X]).

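- Georeferencing Tool: Use the Georeferencer plugin. Load the drone image and input the collected GCP coordinates. Use a 1st-order polynomial (Affine) transformation for simple shifts, or a 2nd/3rd-order for terrain correction. Note that a 2nd-order polynomial needs at least 6 GCPs and a 3rd-order at least 10, so collect more than the 5-target minimum if higher-order correction is expected.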

- Resampling: Select "Nearest Neighbour" for categorical data (e.g., land cover) or "Cubic Convolution" for continuous data (e.g., biomass yield models). Save the transformation.

Q2: Spatial analysis for optimal biomass collection point identification yields unrealistic "hot spots" in inaccessible areas (e.g., steep slopes, waterways). What parameters should I adjust?

A: Your suitability model lacks critical constraint layers.

- Protocol: Implement a Multi-Criteria Decision Analysis (MCDA) with constraints:

- Define Criteria: Create raster layers for key variables (e.g., biomass yield [tons/ha], distance to roads [m], slope [%]).

- Reclassify & Weight: Reclassify each layer to a common suitability scale (1-10). Assign weights using Analytic Hierarchy Process (AHP) based on expert input for SAF research. Example weights: Yield (40%), Road Distance (35%), Slope (25%).

- Apply Constraints: Create binary masks (0=excluded, 1=allowed) for absolute barriers: Slope > 30%, Land Use ≠ Agricultural/Fallow, Proximity to Waterway < 10 m. Multiply the final weighted suitability raster by all constraint masks.

- Validate output against satellite imagery or field reconnaissance data.
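
The weighted overlay with constraint masks can be illustrated on tiny in-memory grids. In practice this would be done with a GIS raster calculator; the pure-Python version below only shows the arithmetic. The 2x2 grids are hypothetical, and the weights are the example weights from the protocol.

```python
def weighted_overlay(layers, weights, masks):
    """Weighted sum of reclassified layers, multiplied by every binary mask.

    layers: dict name -> 2D list of suitability scores (1-10)
    weights: dict name -> weight (should sum to 1)
    masks: list of 2D lists with 0 (excluded) or 1 (allowed)
    """
    first = next(iter(layers.values()))
    rows, cols = len(first), len(first[0])
    result = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            score = sum(weights[name] * layers[name][r][c] for name in weights)
            allowed = 1
            for mask in masks:
                allowed *= mask[r][c]
            result[r][c] = score * allowed
    return result

# Hypothetical reclassified layers on a 2x2 grid.
layers = {
    "yield": [[9, 4], [7, 8]],
    "road":  [[6, 9], [3, 8]],
    "slope": [[8, 7], [2, 9]],
}
weights = {"yield": 0.40, "road": 0.35, "slope": 0.25}
slope_mask = [[1, 1], [0, 1]]      # 0 where slope > 30%
waterway_mask = [[1, 0], [1, 1]]   # 0 within 10 m of a waterway

for row in weighted_overlay(layers, weights, [slope_mask, waterway_mask]):
    print([round(v, 2) for v in row])
```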

IoT Fleet Management Troubleshooting Guide

Q3: Telematics devices on collection trucks show frequent "GPS dropout," creating gaps in route tracking for logistics optimization models.

A: Dropouts are caused by signal obstruction or power issues.

- Troubleshooting Steps:

- Verify Installation: Ensure the GPS antenna is mounted on the vehicle roof with a clear 360° view, not under metal covers.

- Check Power: Use a multimeter to test the wired connection to the vehicle's ignition-switched circuit. Voltage should be stable at ~12-24V DC. Consider adding an inline fuse.

- Diagnose via Logs: Access the device's diagnostic logs via the fleet management platform (e.g., Samsara, Geotab). Look for error codes related to power or antenna status.

- Protocol for Data Gap Interpolation: For modeling purposes, fill small gaps (<5 minutes) using linear interpolation of coordinates between known points. Flag interpolated data in your analysis.
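
The gap-filling rule can be sketched as below: gaps shorter than 5 minutes are filled by linear interpolation at a fixed time step, and every synthetic point carries a flag. The track format (time in seconds, latitude, longitude) and the 60-second step are assumptions for this example.

```python
def fill_gaps(track, max_gap_s=300, step_s=60):
    """Fill short GPS gaps by linear interpolation.

    track: list of (t_seconds, lat, lon) tuples sorted by time.
    Returns (t, lat, lon, interpolated) tuples; gaps of max_gap_s or longer
    are left unfilled.
    """
    filled = []
    for (t0, lat0, lon0), (t1, lat1, lon1) in zip(track, track[1:]):
        filled.append((t0, lat0, lon0, False))
        gap = t1 - t0
        if step_s < gap < max_gap_s:
            t = t0 + step_s
            while t < t1:
                f = (t - t0) / gap  # fraction of the way through the gap
                filled.append((t, lat0 + f * (lat1 - lat0),
                               lon0 + f * (lon1 - lon0), True))
                t += step_s
    if track:
        filled.append((*track[-1], False))
    return filled

# Hypothetical track: a 3-minute gap (filled) and a 14-minute gap (left as is).
track = [(0, 40.000, -90.000), (180, 40.003, -90.003), (1020, 40.020, -90.020)]
for point in fill_gaps(track):
    print(point)
```

Linear interpolation in latitude and longitude is adequate over a few minutes of travel; longer gaps are better handled by map matching or excluded from the analysis.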

Q4: Real-time moisture sensor data from truck-mounted probes shows erratic spikes and readings that don't correlate with lab samples, compromising feedstock quality prediction.

A: This indicates sensor calibration drift or mechanical issues.

- Calibration & Validation Protocol:

- Field Calibration: At the start of each collection day, take a representative biomass sample. Use a portable lab-grade moisture meter (e.g., Mettler Toledo) to get a reference reading (Ref_M).

- Sensor Reading: Simultaneously, record the average reading from the IoT moisture sensor (Sensor_M) over a stable 60-second period.

- Apply Offset: Calculate a daily offset: Offset = Ref_M - Sensor_M. Apply this offset to all sensor data for that day's batch.

- Mechanical Check: Inspect the probe for debris or damage. Ensure it is fully inserted into the biomass load.
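
The daily offset correction is simple enough to express in a few lines. The readings below are hypothetical, and the function names are illustrative rather than part of any fleet platform's API.

```python
def daily_offset(ref_m, sensor_readings):
    """Offset = Ref_M - Sensor_M, where Sensor_M is the mean over the stable window."""
    sensor_m = sum(sensor_readings) / len(sensor_readings)
    return ref_m - sensor_m

def apply_offset(readings, offset):
    """Apply the day's offset to every sensor reading in the batch."""
    return [r + offset for r in readings]

# Hypothetical values in % moisture (wet basis).
reference = 18.2                      # lab-grade meter reading
stable_window = [16.9, 17.1, 17.0]    # sensor readings over the 60-second window
offset = daily_offset(reference, stable_window)
print(f"Offset: {offset:+.2f}")
print(apply_offset([15.4, 19.8, 22.1], offset))
```

A constant offset corrects bias but not gain error; if the offset varies strongly with moisture level, a multi-point calibration would be more appropriate.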

Frequently Asked Questions (FAQs)

Q: What is the minimum spatial resolution required for satellite imagery to effectively map herbaceous biomass feedstocks for decentralized SAF production?

A: For herbaceous feedstocks like switchgrass or miscanthus, a resolution of 10-30 meters per pixel (e.g., Sentinel-2) is sufficient for regional supply basin modeling. For field-level yield estimation, sub-meter to 3-meter resolution (e.g., PlanetScope, UAV imagery) is necessary to account for intra-field heterogeneity.

Q: Which IoT communication protocol (LPWAN) is most suitable for remote biomass storage sites with limited cellular coverage for monitoring silo conditions?

A: LoRaWAN is often optimal for remote, fixed asset monitoring. It offers long-range (up to 15 km rural), low-power communication, ideal for transmitting periodic data (temperature, humidity) from silo sensors to a central gateway. For mobile assets (trucks), cellular IoT (4G/LTE Cat-M1) remains the standard for reliable, continuous tracking.

Q: How do we ensure data interoperability between GIS platforms (for mapping) and IoT fleet platforms (for logistics) in our research pipeline?

A: Implement a standardized data schema and use a central data lake.

- Key Steps: 1) Define a common data model (e.g., feedstock parcels with unique GeoJSON geometry and attributes). 2) Use APIs from both platforms to push/pull data to a centralized repository (e.g., cloud SQL database). 3) Use unique identifiers (UUIDs) to link a specific GIS-mapped collection zone with the IoT data from the truck that services it.

Table 1: Comparative Analysis of Remote Sensing Platforms for Biomass Mapping

| Platform | Example Source | Spatial Resolution | Revisit Time | Key Suitability for SAF Research | Typical Cost |

|---|---|---|---|---|---|

| Satellite (Multispectral) | Sentinel-2 | 10-60 m | 5 days | Regional supply curve modeling | Free |

| Satellite (Commercial) | PlanetScope | 3 m | Daily | Field-level monitoring, change detection | $/km² |

| Aerial (Manned) | NAIP / Contracted | 0.5-1 m | 1-3 years | Baseline mapping, high accuracy | $$/flight |

| UAV/Drone | DJI P4 Multispectral | 2-8 cm | On-demand | Plot-level yield validation, research trials | $$$ (hardware) |

Table 2: IoT Sensor Performance Specifications for Biomass Logistics

| Sensor Parameter | Target Specification | Rationale for SAF Logistics | Typical Accuracy (Calibrated) |

|---|---|---|---|

| GPS Location | < 3m horizontal accuracy | Precise geofencing of collection zones | ±1.5 m (with GNSS) |

| Load Weight | On-board weighing scale | Mass-balance for yield calculation | ±0.5% of full scale |

| Feedstock Moisture | Capacitive or NIR probe | Critical for conversion yield prediction | ±1.5% (in range 10-30% wt) |

| Cab/Trailer Temp | Digital thermometer | Monitor feedstock spoilage risk | ±0.5°C |

| Data Transmission | Cellular + Satellite backup | Ensure data flow from remote areas | 99% uptime (cellular zone) |

Experimental Protocols

Protocol 1: Field Validation of GIS-Derived Biomass Yield Maps

- Objective: Validate remote sensing-based biomass yield estimates for a herbaceous energy crop.

- Methodology:

- Stratified Random Sampling: Using a GIS, stratify the study area into 3-5 yield zones (low, medium, high) based on the preliminary satellite-derived NDVI map.

- Plot Establishment: Randomly select 5-10 sample points per zone. At each point, establish a 1m x 1m quadrat.

- Harvest & Weigh: Harvest all above-ground biomass within the quadrat. Weigh immediately in the field to obtain fresh weight (FW).

- Sub-sample for Dry Weight: Take a ~500 g sub-sample, record its exact fresh weight, seal it in a pre-weighed dry bag, and label it.

- Lab Analysis: Oven-dry sub-samples at 70°C for 48 hours or until constant weight. Record dry weight (DW).

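- Calculate Yield: For each quadrat, compute dry matter as FW × (sub-sample DW / sub-sample FW), then scale from the 1 m² quadrat to a hectare (× 10,000) to obtain dry matter yield in kg/ha. Compare mean yield per zone to the GIS-predicted values using linear regression (R², RMSE).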
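
The yield calculation and the comparison metrics in Protocol 1 can be sketched as follows. This is a minimal illustration, not a prescribed implementation: the function names and the example weights are hypothetical, and R² is computed as the squared Pearson correlation, which equals the R² of a simple linear regression.

```python
import math

HECTARE_M2 = 10_000  # square metres per hectare


def dry_matter_yield_kg_ha(fresh_weight_kg, sub_fresh_g, sub_dry_g, quadrat_area_m2=1.0):
    """Scale a quadrat's fresh weight to dry matter yield per hectare."""
    dry_fraction = sub_dry_g / sub_fresh_g          # dry matter fraction of the sub-sample
    dry_weight_kg = fresh_weight_kg * dry_fraction  # dry matter in the whole quadrat
    return dry_weight_kg / quadrat_area_m2 * HECTARE_M2


def rmse(observed, predicted):
    """Root-mean-square error between field-measured and GIS-predicted yields."""
    n = len(observed)
    return math.sqrt(sum((o - p) ** 2 for o, p in zip(observed, predicted)) / n)


def r_squared(observed, predicted):
    """R² of the least-squares line relating observed to predicted yields."""
    n = len(observed)
    mean_o = sum(observed) / n
    mean_p = sum(predicted) / n
    cov = sum((o - mean_o) * (p - mean_p) for o, p in zip(observed, predicted))
    var_o = sum((o - mean_o) ** 2 for o in observed)
    var_p = sum((p - mean_p) ** 2 for p in predicted)
    return cov ** 2 / (var_o * var_p)


# Hypothetical quadrat: 1.2 kg fresh weight; a 500 g sub-sample dries to 300 g
example_yield = dry_matter_yield_kg_ha(1.2, 500.0, 300.0)
```

In practice the per-quadrat yields would be averaged within each zone before calling `rmse` and `r_squared` against the zone-level GIS predictions.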

Protocol 2: IoT-Enabled Route Optimization Experiment for Collection Trucks

- Objective: Minimize fuel consumption and collection time by comparing pre-planned GIS routes against real-time IoT-optimized routes.

- Methodology:

- Control Route: For a given day's collection tasks, generate a route using GIS network analysis based on static road data and collection point locations. Assign this to Truck A. Record planned distance and estimated time.

- Experimental Route: Input the same task list into a dynamic fleet management platform (e.g., one built on the HERE Routing API or the Google Routes API) with real-time traffic enabled. Assign this to Truck B.

- Data Collection: Use IoT telematics on both trucks to log: actual route (GPS track), total distance, idle time, moving time, and fuel consumption (from CAN bus or fuel sensor).

- Analysis: Conduct a paired t-test on key metrics (fuel used [L], total collection time [min], distance [km]) between Truck A and Truck B over 10-15 repeated collection days.
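
The paired comparison in the analysis step can be sketched with the standard library alone. The fuel figures below are invented for illustration, each collection day is treated as one pair, and the critical value is the tabulated two-sided 5% value of Student's t for 9 degrees of freedom; a statistics package would normally report the p-value directly.

```python
import math
import statistics


def paired_t_statistic(control, experimental):
    """Return (mean difference, t statistic, degrees of freedom) for paired samples."""
    if len(control) != len(experimental):
        raise ValueError("paired samples must have equal length")
    diffs = [c - e for c, e in zip(control, experimental)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample standard deviation (n - 1 denominator)
    t_stat = mean_diff / (sd_diff / math.sqrt(n))
    return mean_diff, t_stat, n - 1


# Hypothetical daily fuel use in litres over 10 collection days
truck_a = [62.0, 58.5, 64.2, 60.1, 59.8, 63.0, 61.4, 60.7, 62.9, 59.3]  # static GIS route
truck_b = [58.1, 57.0, 60.5, 58.9, 57.2, 59.8, 59.0, 58.4, 60.2, 57.5]  # real-time route

mean_diff, t_stat, dof = paired_t_statistic(truck_a, truck_b)
T_CRIT_95_DF9 = 2.262  # two-sided 5% critical value for 9 degrees of freedom
significant = abs(t_stat) > T_CRIT_95_DF9
```

The same function applies unchanged to total collection time and distance, one metric at a time.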

Diagrams

GIS Resource Mapping Workflow

IoT-Enabled Fleet Management Data Flow

The Scientist's Toolkit: Research Reagent Solutions & Essential Materials

Table 3: Key Tools for Digital Biomass Logistics Research

| Item | Function in Research | Example / Specification |
|---|---|---|
| Differential GNSS Receiver (RTK) | Provides centimeter-accuracy ground truth coordinates for validating remote sensing data and georeferencing. | Trimble R12i with RTK correction. |
| Multispectral UAV/Drone | Captures high-resolution, multi-band imagery for developing and validating plot-level biomass prediction models. | DJI Phantom 4 Multispectral (Blue, Green, Red, Red Edge, NIR). |
| Reference Moisture Analyzer | Provides rapid, accurate reference measurements for calibrating in-line IoT moisture sensors on collection trucks. | Mettler Toledo HC103 (benchtop halogen analyzer). |
| IoT Gateway (LoRaWAN) | Enables long-range, low-power data collection from fixed sensors in remote biomass storage or field locations. | Milesight UG65 LoRaWAN Gateway. |
| GIS Software with MCDA | Performs spatial analysis, suitability modeling, and optimal location allocation for collection infrastructure. | QGIS with GRASS & SAGA plugins. |
| Fleet Management API Access | Allows programmatic extraction of time-stamped logistics data (routes, fuel, loads) for integration with research models. | Samsara or Geotab REST API. |
| Cloud Data Warehouse | Centralized repository for merging disparate GIS, IoT, and lab analysis datasets for holistic modeling. | Google BigQuery or Amazon Redshift. |

Technical Support Center: Troubleshooting Continuous Flow Biomass Logistics for SAF Research

This support center addresses common operational challenges in experimental setups for decentralized Sustainable Aviation Fuel (SAF) production from biomass. The questions and protocols are framed within research on optimizing biomass logistics for decentralized SAF production.

Troubleshooting Guides & FAQs

Q1: During a continuous flow pre-treatment experiment, we observe inconsistent biomass slurry viscosity, causing pump cavitation and flow interruption. What are the primary causes and corrective actions?

A: Inconsistent slurry viscosity typically stems from feedstock variability or improper preconditioning. Implement the following protocol:

- Experimental Protocol for Slurry Standardization:

- Sample & Characterize: Take a 1 kg representative sample of your biomass feedstock (e.g., agricultural residue). Determine its initial moisture content (MC) using a standardized oven-dry method (105°C for 24 hours).

- Pre-processing: Mill or chip the biomass to a uniform particle size distribution (e.g., 2-5 mm sieve fraction).

- Conditioning: For every 1 kg of dry biomass equivalent, add enough preconditioning agent (e.g., dilute acid or alkali solution) to reach a target solids loading of 15-20% w/w, accounting for the moisture already present in the feedstock when calculating the liquid mass. Mix in a high-shear mixer for 10 minutes.

- Monitoring: Install an in-line rotational viscometer downstream of the mixing tank. Target viscosity should be between 500 and 2000 cP at your process temperature (e.g., 80°C).

- Corrective Action Table:

| Symptom | Probable Cause | Immediate Action | Long-term Solution |
|---|---|---|---|
| Rising viscosity & pump overload | High solids concentration, particle agglomeration. | Dilute with preheated process water (5% increments). | Implement real-time moisture content sensor at feedstock intake to auto-adjust liquid addition. |
| Falling viscosity & settling | Low solids concentration, insufficient mixing. | Add pre-processed dry biomass. | Install an in-line static mixer before the pump inlet. |
| Periodic viscosity spikes | Feedstock heterogeneity (e.g., soil, rocks). | Stop flow, inspect and clean chopper/grinder screens. | Install a magnetic separator and de-stoner in feedstock prep line. |
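
The conditioning calculation and the viscosity window from the slurry protocol can be sketched as a simple mass balance. The function names are hypothetical, moisture content is assumed to be on a wet basis, and the preconditioning liquid is treated as contributing no solids.

```python
def liquid_to_add_kg(wet_biomass_kg, moisture_wb, target_solids):
    """Mass of liquid needed to bring wet biomass to a target solids loading (w/w)."""
    if not 0 <= moisture_wb < 1 or not 0 < target_solids <= 1:
        raise ValueError("fractions must be on a 0-1 scale")
    dry_mass = wet_biomass_kg * (1 - moisture_wb)  # dry solids in the feedstock
    slurry_mass = dry_mass / target_solids         # total slurry mass at the target loading
    liquid = slurry_mass - wet_biomass_kg          # feedstock moisture already counts as liquid
    if liquid < 0:
        raise ValueError("feedstock is already wetter than the target solids loading")
    return liquid


def viscosity_action(viscosity_cp, low=500.0, high=2000.0):
    """Map an in-line viscosity reading to the immediate action in the table above."""
    if viscosity_cp > high:
        return "dilute"      # rising viscosity: add preheated process water
    if viscosity_cp < low:
        return "add_solids"  # falling viscosity: add pre-processed dry biomass
    return "ok"


# Hypothetical batch: 1 kg of feedstock at 12% moisture, 20% target solids loading
example_liquid = liquid_to_add_kg(1.0, 0.12, 0.20)
```

For this hypothetical batch the balance gives about 3.4 kg of liquid per kilogram of as-received feedstock.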

Q2: Our inventory simulation for switchgrass shows frequent stock-outs, disrupting the continuous hydrothermal liquefaction (HTL) reactor runs. How can we model safety stock levels for seasonal biomass?

A: Stock-outs indicate insufficient buffer inventory. Use an (s, Q) inventory model, in which a fixed quantity Q is ordered whenever inventory falls to the reorder point s, with seasonally adjusted demand. Perform the following calculation:

Experimental Protocol for Determining Safety Stock:

- Data Collection: Over a minimum of 5 campaign cycles, record daily biomass consumption (D) and lead time (L) from harvest to on-site storage.

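- Calculate Uncertainty: Determine the standard deviation of demand during lead time (σ_DL). If the lead time is roughly constant and daily demand is independent from day to day, σ_DL = σ_D × √L, where σ_D is the standard deviation of daily demand. For seasonal biomass, calculate this separately for harvest and off-harvest periods.

- Set Service Level: Choose a desired service level (probability of no stock-out). For research continuity, a 95% level is common (one-sided Z-score = 1.645).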

- Calculate Safety Stock (SS): SS = Z * σ_DL.

- Calculate Reorder Point (s): s = (Average Daily Demand * Lead Time) + SS.

Example Quantitative Data Table (Hypothetical Switchgrass Data; safety stock computed with σ_DL = σ_Demand × √(Lead Time)):

| Period | Avg. Daily Demand (tonnes/day) | Avg. Lead Time (days) | σ_Demand (tonnes/day) | Safety Stock (95% Level) | Reorder Point (s) |
|---|---|---|---|---|---|
| Harvest (Months 1-3) | 10 | 7 | 1.5 | 6.5 tonnes | 76.5 tonnes |
| Off-Harvest (Months 4-12) | 10 | 21 | 3.0 | 22.6 tonnes | 232.6 tonnes |
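
The safety stock and reorder point steps can be sketched as below. The sketch assumes a fixed lead time and independent daily demand, so that σ_DL = σ_D × √L; the function names are illustrative, and the inputs are the hypothetical switchgrass demand, lead time, and σ_Demand values.

```python
import math

Z_95 = 1.645  # one-sided z-score for a 95% service level


def safety_stock(sigma_daily, lead_time_days, z=Z_95):
    """SS = Z * sigma_DL, with sigma_DL = sigma_daily * sqrt(lead time)."""
    sigma_dl = sigma_daily * math.sqrt(lead_time_days)
    return z * sigma_dl


def reorder_point(avg_daily_demand, lead_time_days, sigma_daily, z=Z_95):
    """s = expected demand over the lead time plus safety stock."""
    return avg_daily_demand * lead_time_days + safety_stock(sigma_daily, lead_time_days, z)


# Hypothetical switchgrass inputs: (avg demand t/day, lead time days, sigma t/day)
periods = {
    "harvest": (10.0, 7, 1.5),
    "off_harvest": (10.0, 21, 3.0),
}
results = {
    name: (safety_stock(sigma, lead), reorder_point(demand, lead, sigma))
    for name, (demand, lead, sigma) in periods.items()
}
```

If lead time itself varies materially between deliveries, σ_DL needs an additional lead-time variance term, and this simplified form will understate the buffer.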

Q3: Scheduling multiple biomass batches (e.g., corn stover, forest residues) through a shared continuous pyrolysis unit is complex. How can we minimize transition time and product contamination?

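A: This is a single-unit sequencing problem with sequence-dependent changeover times. Develop a changeover matrix and, where delivery schedules allow, apply a Shortest Setup Time (SST) first heuristic: after each batch, run next the feedstock with the shortest changeover from the current one.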

Experimental Protocol for Optimal Batch Sequencing:

- Characterize Changeover: Run controlled purge cycles between different feedstock types. Measure the time and inert gas/steam volume required to reduce cross-contamination in the product oil to below 1% (measured by GC-MS).

- Create Changeover Matrix: Document time and purge cost for each feedstock pair transition.

Feedstock Changeover Matrix Table:

| From / To | Corn Stover | Forest Residue | Energy Sorghum |
|---|---|---|---|
| Corn Stover | 0 hrs / $0 | 2.0 hrs / $120 | 1.5 hrs / $90 |
| Forest Residue | 2.5 hrs / $150 | 0 hrs / $0 | 2.2 hrs / $130 |
| Energy Sorghum | 1.0 hrs / $60 | 1.8 hrs / $110 | 0 hrs / $0 |
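
A Shortest Setup Time heuristic over the changeover matrix can be sketched as a greedy search, with a brute-force check that is only practical for a handful of feedstocks. The changeover hours are taken from the matrix above; the function names are hypothetical, and the sketch assumes each feedstock is run once per campaign in an open (non-cyclic) sequence.

```python
from itertools import permutations

# Changeover times in hours, keyed by (from_feedstock, to_feedstock)
CHANGEOVER_HOURS = {
    ("Corn Stover", "Forest Residue"): 2.0,
    ("Corn Stover", "Energy Sorghum"): 1.5,
    ("Forest Residue", "Corn Stover"): 2.5,
    ("Forest Residue", "Energy Sorghum"): 2.2,
    ("Energy Sorghum", "Corn Stover"): 1.0,
    ("Energy Sorghum", "Forest Residue"): 1.8,
}
FEEDSTOCKS = ["Corn Stover", "Forest Residue", "Energy Sorghum"]


def total_changeover(sequence, hours=CHANGEOVER_HOURS):
    """Sum changeover hours over consecutive pairs in the sequence."""
    return sum(hours[(a, b)] for a, b in zip(sequence, sequence[1:]))


def sst_sequence(start, feedstocks, hours=CHANGEOVER_HOURS):
    """Greedy SST: always run next the feedstock with the shortest changeover."""
    sequence = [start]
    remaining = set(feedstocks) - {start}
    while remaining:
        current = sequence[-1]
        nxt = min(remaining, key=lambda f: hours[(current, f)])
        sequence.append(nxt)
        remaining.remove(nxt)
    return sequence


def best_sequence(feedstocks, hours=CHANGEOVER_HOURS):
    """Exhaustive search over all orderings; feasible only for small sets."""
    return min(permutations(feedstocks), key=lambda seq: total_changeover(seq, hours))


greedy = sst_sequence("Corn Stover", FEEDSTOCKS)
optimal = best_sequence(FEEDSTOCKS)
```

The greedy sequence depends on the starting feedstock and is not guaranteed to be optimal, which is why comparing it against the exhaustive result is a useful sanity check on small instances. Purge cost can be handled the same way by swapping in a cost dictionary.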

Experimental Workflow for Biomass Logistics Optimization

Diagram: Workflow for Optimizing SAF Biomass Logistics

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in SAF Biomass Logistics Research |
|---|---|
| In-line Rotational Viscometer | Measures real-time viscosity of biomass slurries to ensure stable continuous flow and prevent pump failure. |
| Portable NIR Moisture Analyzer | Rapidly determines moisture content of incoming feedstock for inventory balancing and preconditioning calculations. |
| Gas Chromatograph-Mass Spectrometer (GC-MS) | Analyzes bio-crude/oil composition to quantify cross-contamination during feedstock switches in reactors. |
| Process Simulation Software (e.g., Aspen Plus, SuperPro) | Models mass/energy balances for contracting and inventory sizing of decentralized feedstock networks. |
| Discrete Event Simulation (DES) Platform (e.g., AnyLogic) | Models and optimizes complex scheduling and logistics for multiple biomass types and processing units. |
| Solid-Liquid Separation Unit (Bench-scale) | Tests dewatering efficiency of pre-treated slurries, critical for energy balance in logistics models. |
| Catalytic Hydrotreatment Bench Reactor | Upgrades bio-crude to SAF hydrocarbons, final step linking logistics to fuel specification testing. |

Solving Real-World Problems: Mitigating Risk and Cutting Costs in Biomass Supply Chains

Welcome to the Technical Support Center for Biomass Logistics Optimization. This resource provides targeted troubleshooting and FAQs to support your research in feedstock preservation for decentralized Sustainable Aviation Fuel (SAF) production pathways.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During our storage trials, we observed a rapid temperature spike in our miscanthus bales within 72 hours, followed by visible mold. What caused this, and how can we prevent it? A: This is a classic sign of microbial spoilage due to excessive moisture. The temperature spike reflects self-heating from the respiration of mesophilic and then thermophilic microorganisms. Prevention requires moisture control at harvest and throughout storage.

- Actionable Protocol: Implement the following drying and monitoring protocol:

- Field Drying: Allow cut biomass to field-dry to below 20% moisture content (w.b.) before baling.

- Real-Time Monitoring: Insert calibrated temperature and humidity probes into the center of 10% of bales at stacking.

- Data Logging: Record readings every 6 hours for the first 14 days.

- Intervention Threshold: If any probe reads >55°C or relative humidity inside the bale >70%, initiate forced aeration or relocate the affected bale stack.
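
The intervention threshold can be applied automatically to logged probe data. The sketch below assumes a hypothetical record layout (a list of dictionaries with `bale_id`, `temp_c`, and `rh_pct` keys); the 55°C and 70% RH limits are those given in the protocol.

```python
TEMP_LIMIT_C = 55.0   # core temperature threshold from the protocol
RH_LIMIT_PCT = 70.0   # in-bale relative humidity threshold from the protocol


def bales_needing_intervention(readings, temp_limit=TEMP_LIMIT_C, rh_limit=RH_LIMIT_PCT):
    """Return sorted IDs of bales with any reading above either threshold."""
    flagged = set()
    for r in readings:
        if r["temp_c"] > temp_limit or r["rh_pct"] > rh_limit:
            flagged.add(r["bale_id"])
    return sorted(flagged)


# Hypothetical 6-hourly log entries from three instrumented bales
log = [
    {"bale_id": "B01", "temp_c": 31.2, "rh_pct": 58.0},
    {"bale_id": "B02", "temp_c": 56.4, "rh_pct": 66.0},  # too hot
    {"bale_id": "B03", "temp_c": 40.1, "rh_pct": 74.5},  # too humid
    {"bale_id": "B01", "temp_c": 33.0, "rh_pct": 60.1},
]
flagged_bales = bales_needing_intervention(log)
```

Running the check after each logging interval keeps the response time within the 6-hour sampling window.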

Q2: Our lab analysis shows inconsistent sugar yields from enzymatically hydrolyzed corn stover samples after different storage durations. How do we isolate storage-related degradation from natural variability? A: Inconsistency often stems from unstandardized pre-storage processing. You must establish a controlled baseline.

- Actionable Protocol: Execute a controlled degradation study:

- Homogenization: Pool and thoroughly mix a single harvest batch of corn stover.

- Sub-Sampling: Divide into identical aliquots (e.g., 1kg dry mass equivalent).

- Conditioning: Artificially condition aliquots to target moisture levels (e.g., 10%, 15%, 20%, 25% w.b.) using a misting chamber.

- Storage Simulation: Store conditioned samples in controlled environment chambers mimicking target climate (e.g., 25°C/60% RH).

- Destructive Sampling: Sacrifice replicates from each condition at scheduled intervals (0, 30, 60, 90 days) for compositional analysis (NREL/TP-510-42618).
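
For the conditioning step, the water each aliquot needs in order to reach its target moisture level follows from a wet-basis mass balance. This is a sketch with a hypothetical function name; it assumes the aliquot's dry mass is known and that no dry matter is lost during misting.

```python
def water_to_add_kg(dry_mass_kg, initial_mc_wb, target_mc_wb):
    """Water needed to raise an aliquot from its initial to a target wet-basis moisture."""
    if not 0 <= initial_mc_wb < 1 or not 0 <= target_mc_wb < 1:
        raise ValueError("moisture contents must be fractions in [0, 1)")
    if target_mc_wb < initial_mc_wb:
        raise ValueError("target is drier than the aliquot; dry the sample instead")
    initial_total = dry_mass_kg / (1 - initial_mc_wb)  # aliquot mass before misting
    target_total = dry_mass_kg / (1 - target_mc_wb)    # aliquot mass at target moisture
    return target_total - initial_total


# Hypothetical aliquot: 1 kg dry mass at 10% w.b., conditioned to each target level
targets = [0.10, 0.15, 0.20, 0.25]
water_plan = {t: water_to_add_kg(1.0, 0.10, t) for t in targets}
```

Weighing each aliquot after misting and comparing it with `dry_mass_kg / (1 - target_mc_wb)` gives a quick check that the target was reached.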

Q3: For our life-cycle assessment (LCA) model on decentralized SAF, we need reliable data on dry matter loss (DML) for willow chips stored outdoors. What are the key variables we must measure? A: Accurate DML modeling requires tracking the interdependent variables summarized in the table below.

Table 1: Key Metrics for Modeling Dry Matter Loss (DML) in Stored Biomass

| Metric | Measurement Method | Typical Range in Outdoor Pile Storage | Impact on DML |
|---|---|---|---|
| Moisture Content (% w.b.) | Oven-dry method (ASTM E871) | 25%-55% | Primary driver. >35% dramatically increases microbial DML. |
| Pile Core Temperature | Thermocouple array logging | Ambient to 70°C+ | >45°C indicates active degradation; sustained heat increases DML. |
| Ambient Relative Humidity | Weather station data | 50%-100% | Drives moisture adsorption; high RH impedes drying. |
| Storage Duration (days) | Log | 0-180+ | DML generally increases logarithmically; most losses occur in first 60 days. |
| Particle Size/Form | Sieve analysis | Chips, shreds, bales | Smaller particles have higher surface area, promoting faster drying but also initial microbial access. |

Q4: We are testing organic acid preservatives (e.g., propionic acid) to inhibit degradation in high-moisture grass silage for biogas. What is a safe and effective laboratory-scale application method? A: Use a spray-on application with proper safety controls.

- Actionable Protocol: