Strategic Pre-Processing Depot Optimization: Building Resilient Supply Chains for Drug Development


Abstract

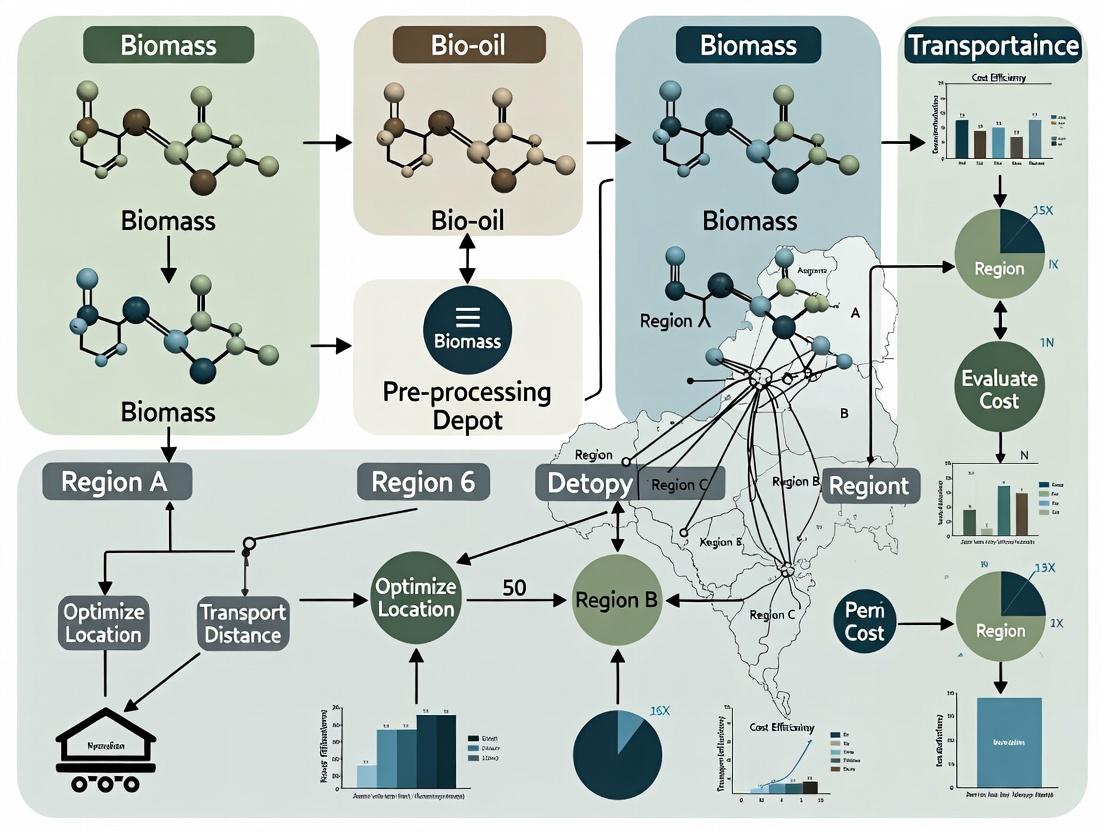

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to strategically optimize pre-processing depot locations, enhancing supply chain resiliency. We explore the foundational role of depots in mitigating disruptions, detail advanced methodological approaches for network design, address critical operational challenges, and validate strategies through comparative analysis. The content bridges theoretical logistics models with practical applications in biomedical research, offering actionable insights for building agile and robust supply networks capable of withstanding global volatility and ensuring the continuity of critical development pipelines.

The Critical Role of Pre-Processing Depots in Modern Pharmaceutical Supply Chains

Technical Support Center: Troubleshooting & FAQs

This technical support center addresses common operational and research challenges encountered when integrating advanced pre-processing depots into supply chain models for pharmaceutical and biologics research. The guidance is framed within the thesis context: Optimizing pre-processing depot locations for supply chain resiliency research.

Frequently Asked Questions (FAQs)

Q1: Our simulation model for depot network optimization is yielding inconsistent resiliency scores when we vary the 'reprocessing capacity' parameter. What could be the cause?

A1: Inconsistent scores often stem from an incorrectly defined relationship between fixed capacity and variable throughput in your model. Ensure your "Pre-Processing Capacity" module distinguishes between physical holding capacity (static) and material processing throughput (dynamic, dependent on equipment and staffing). A common error is to use a single variable for both. Use Protocol 1 below to validate these parameters.

Q2: How do we quantitatively measure the "value-add" of a pre-processing function (like purity testing) versus its cost in a depot location model?

A2: You must define a Key Performance Indicator (KPI) that integrates quality and time. A recommended metric is Quality-Adjusted Throughput Speed (QATS). Calculate it per node in your network using Protocol 2 below.

Q3: When modeling a cold chain for biologics, what critical pre-processing depot data inputs are most often missing, leading to model failure?

A3: The most common missing data points are not temperature logs but temperature transition profiles during depot intake and outflow, and local utility reliability indices. These are essential for simulating real-world processing delays.

Troubleshooting Guides

Issue: Unstable Optimization Outputs for Depot Placement

Symptoms: The optimization algorithm (e.g., genetic algorithm, MILP solver) selects wildly different depot locations in consecutive runs with minimal parameter changes.

Diagnosis & Resolution:

- Check Data Normalization: Input parameters like "cost," "distance," and "processing yield" likely operate on different scales. Unnormalized data gives undue weight to larger numerical ranges.

- Solution: Apply min-max scaling or Z-score normalization to all quantitative inputs before optimization.

- Validate Constraint Feasibility: The defined constraints (e.g., "maximum transport time < 24h") may be too tight, creating a near-infeasible solution space.

- Solution: Conduct a feasibility analysis by relaxing constraints sequentially and observing the effect on the objective function. Use the data from Table 1 to benchmark realistic constraints.
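A minimal NumPy sketch of the two scaling options, assuming the candidate-depot inputs are arranged as a matrix with one row per candidate and one column per parameter; the values below are illustrative only.

```python
import numpy as np

def min_max_scale(X):
    """Rescale each column of X to [0, 1]; constant columns map to 0."""
    X = np.asarray(X, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    span = np.where(hi > lo, hi - lo, 1.0)  # avoid division by zero
    return (X - lo) / span

def z_score(X):
    """Center each column at 0 with unit (population) standard deviation."""
    X = np.asarray(X, dtype=float)
    sd = X.std(axis=0)
    return (X - X.mean(axis=0)) / np.where(sd > 0, sd, 1.0)

# Columns: fixed cost (USD), distance (km), processing yield (fraction); hypothetical
candidates = np.array([[1_200_000, 350, 0.92],
                       [  850_000, 900, 0.88],
                       [1_500_000, 120, 0.95]])
print(min_max_scale(candidates))
print(z_score(candidates))
```

Min-max scaling is sensitive to outliers (one extreme cost compresses the others toward 0), while Z-scores are unbounded but less outlier-driven. Neither changes whether a criterion should be minimized or maximized; that still has to be set in the objective.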

Issue: Inaccurate Resilience Scoring Post-Disruption Simulation

Symptoms: Simulated supply chain recovery times are shorter than empirical data suggests, or the model fails to identify key single points of failure.

Diagnosis & Resolution:

- Incorporate Dynamic Reprocessing Rules: Your depot nodes likely lack rules for reprioritizing tasks during a disruption.

- Solution: Implement a rule-based module within each depot agent. For example:

IF [Input_Stream_A = 0] THEN [Reallocate_Testing_Capacity_to_Stream_B = 85%]. Use the workflow diagram (Diagram 1) to map these decision points.

- Integrate Regional Risk Data: The model uses static failure probabilities. Integrate dynamic, regional data (see Table 2).
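One way to express the example rule in code is a list of (condition, action) pairs that each depot agent evaluates against its current inflows. This is a hypothetical sketch: `DepotAgent` and `reallocate` are illustrative names, and it assumes "85%" means 85% of stream A's testing capacity is shifted to stream B.

```python
from dataclasses import dataclass, field

@dataclass
class DepotAgent:
    """Hypothetical depot agent holding testing capacity per input stream (units/hr)."""
    testing_capacity: dict
    rules: list = field(default_factory=list)

    def apply_rules(self, inflows):
        """Evaluate every (condition, action) rule against the current inflows."""
        for condition, action in self.rules:
            if condition(inflows):
                action(self)

def reallocate(src, dst, share):
    """Build an action that moves `share` of src's testing capacity to dst."""
    def action(agent):
        moved = agent.testing_capacity[src] * share
        agent.testing_capacity[src] -= moved
        agent.testing_capacity[dst] += moved
    return action

depot = DepotAgent(testing_capacity={"A": 100.0, "B": 60.0})
# IF Input_Stream_A = 0 THEN reallocate 85% of stream A's testing capacity to stream B
depot.rules.append((lambda f: f["A"] == 0, reallocate("A", "B", 0.85)))
depot.apply_rules({"A": 0, "B": 40})
print(depot.testing_capacity)  # {'A': 15.0, 'B': 145.0}
```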

Experimental Protocols & Data Presentation

Protocol 1: Validating Pre-Processing Depot Capacity Parameters

Objective: To empirically derive the relationship between a depot's nominal capacity and its actual throughput under stochastic demand.

Methodology:

- Select 3 candidate depot locations in your model.

- Define a 72-hour simulation window with a variable demand function (e.g., sine wave ±30% of mean).

- For each depot, run the simulation while measuring Actual_Throughput (kg/hr or units/hr), Queue_Length (units waiting), and Capacity_Utilization (%).

- Vary the Processing_Rate parameter in 5% increments from 50% to 125% of the baseline.

- Plot Utilization vs. Queue_Length. The inflection point of the curve indicates the practical maximum capacity, which is your key model input.
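The sweep can be prototyped with a simple hourly queue model before moving it into a full simulation package. This sketch assumes a 24-hour demand period, Poisson arrivals around the sine-wave mean, and a "knee" heuristic (the point farthest from the chord joining the curve's endpoints) as a stand-in for the inflection point; all parameter values are hypothetical.

```python
import numpy as np

def simulate_depot(rate_factor, mean_demand=100.0, baseline_rate=100.0,
                   hours=72, amplitude=0.30, period_h=24.0, seed=0):
    """Hourly queue model of one depot; returns (mean utilization, mean queue length)."""
    rng = np.random.default_rng(seed)  # same seed for every factor = common random numbers
    rate = baseline_rate * rate_factor  # units/hr the depot can process
    queue, util, qlen = 0.0, [], []
    for t in range(hours):
        # Sine-wave demand (±30% of mean) with Poisson noise
        lam = mean_demand * (1 + amplitude * np.sin(2 * np.pi * t / period_h))
        queue += rng.poisson(lam)
        processed = min(queue, rate)
        queue -= processed
        util.append(processed / rate)
        qlen.append(queue)
    return float(np.mean(util)), float(np.mean(qlen))

def knee_index(x, y):
    """Index of the point farthest from the chord joining the curve's endpoints."""
    x = (x - x.min()) / max(x.max() - x.min(), 1e-12)
    y = (y - y.min()) / max(y.max() - y.min(), 1e-12)
    dx, dy = x[-1] - x[0], y[-1] - y[0]
    dist = np.abs(dx * (y - y[0]) - dy * (x - x[0])) / np.hypot(dx, dy)
    return int(np.argmax(dist))

factors = np.linspace(0.50, 1.25, 16)  # 50% to 125% of baseline in 5% steps
results = np.array([simulate_depot(f) for f in factors])
utilization, queue_length = results[:, 0], results[:, 1]
k = knee_index(utilization, queue_length)
print(f"Practical maximum near {factors[k]:.0%} of baseline processing rate "
      f"(utilization {utilization[k]:.2f}, mean queue {queue_length[k]:.1f})")
```

Reusing the same seed for every rate factor means each run sees identical arrivals, so differences in queue length come from the processing rate alone.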

Protocol 2: Calculating Quality-Adjusted Throughput Speed (QATS)

Objective: To create a unified metric for evaluating pre-processing depot efficiency.

Methodology:

- For a given depot i and process j (e.g., sterile filtration), collect:

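$T_{ij}$: average processing time (hours); $Y_{ij}$: average output yield or purity (%); $B$: baseline yield for the industry standard (obtain from literature), in the same units as $Y_{ij}$.

- Calculate the Quality Factor:

$$Q_{ij} = \frac{Y_{ij}}{B}$$

- Calculate QATS:

$$\mathrm{QATS}_{ij} = \frac{Q_{ij}}{T_{ij}}$$

- Use this metric to compare different depot configurations or technologies. Because $T_{ij}$ is in hours, QATS as written has units of h⁻¹ rather than being dimensionless; the threshold QATS > 1 (performance better than the industry baseline) is only meaningful if $T_{ij}$ is first expressed as a ratio to a baseline processing time.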
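A small helper for the calculation. As written, Q_ij / T_ij has units of h⁻¹; the optional `baseline_time_h` argument (an assumption, not part of the original protocol) divides T_ij by an industry-baseline processing time so the result is dimensionless and comparable against 1. The sterile-filtration figures are hypothetical.

```python
def qats(mean_time_h, mean_yield, baseline_yield, baseline_time_h=None):
    """Quality-Adjusted Throughput Speed for one depot/process pair.

    Q = Y / B. Without a baseline time the result is Q / T in 1/h; with the
    hypothetical baseline_time_h it is Q / (T / T_B), which is dimensionless.
    """
    q = mean_yield / baseline_yield
    if baseline_time_h is None:
        return q / mean_time_h
    return q / (mean_time_h / baseline_time_h)

# Illustrative sterile-filtration figures (not real benchmarks)
print(qats(3.5, 97.0, 95.0))                       # per hour
print(qats(3.5, 97.0, 95.0, baseline_time_h=4.0))  # > 1 means better than baseline
```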

Table 1: Benchmark Constraints for Pharmaceutical Pre-Processing Depot Models

| Constraint Category | Typical Parameter Range | Data Source |
|---|---|---|
| Cold Chain Hold Time | 2 - 72 hours (depends on material) | ICH Q1A(R2), USP <1079> |
| Quality Control Sampling | 0.5% - 5.0% of batch lot | FDA Guidance for Industry: PAT |
| Material Reprocessing Rate | 60% - 85% of primary line speed | Industry whitepapers (2023-2024) |
| Regulatory Documentation Time | 15 - 90 minutes per batch | EMA GMP Annex 11 |

Table 2: Regional Risk Indices for Depot Resilience Modeling (Sample Data)

| Region | Utility Reliability Index (1-10) | Transport Congestion Factor (1-10) | Local Supplier Density (Suppliers/100km²) |
|---|---|---|---|
| North America - Midwest | 8.7 | 4.2 | 1.5 |
| Europe - Central | 8.9 | 5.1 | 3.8 |
| Asia - Southeast Coastal | 7.2 | 8.9 | 6.5 |
| Global Average (Benchmark) | 7.5 | 6.0 | 3.0 |

Diagrams

Title: Depot Internal Material Routing Logic

Title: Key Factors for Depot Resilience Scoring

The Scientist's Toolkit: Research Reagent & Modeling Solutions

| Item/Category | Function in Pre-Processing Depot Research |
|---|---|
| AnyLogic/Simio | Discrete-event simulation software for modeling dynamic material flow, queue times, and resource allocation within and between depots. |
| Gurobi/CPLEX Optimizer | Solver for mathematical programming (MILP) models used to solve the NP-hard depot location-allocation problem. |
| SAP ICH | Integrated supply chain data platform. Source for historical throughput and delay data to calibrate simulation models. |
| Stability Chambers | For empirical validation of modeled hold-time constraints under varied temperature/humidity conditions. |
| RFID/IoT Sensor Suites | Generate real-time tracking data to inform model parameters for material transfer times and condition monitoring. |
| Regional Risk Databases | Provide quantitative indices for political, environmental, and utility risks used as model inputs (e.g., Verisk Maplecroft). |

Technical Support Center for Resilient Supply Chain Pre-processing Depot Research

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: My agent-based simulation of depot networks is yielding inconsistent results for the same input parameters. What could be the issue?

A: This is often due to unseeded random number generators within stochastic modules (e.g., disaster probability, demand fluctuation). Solution: Set a fixed seed at the start of each experimental run to ensure reproducibility. In Python with NumPy, either call np.random.seed(42) before any stochastic function calls (this seeds only NumPy's legacy global generator, not Python's built-in random module), or preferably create a generator with np.random.default_rng(42) and pass it explicitly to every stochastic module. Verify that all parallel threads or processes also receive distinct, deterministic seeds derived from a master seed.
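A sketch of the master-seed pattern using NumPy's `SeedSequence`, which is designed to spawn statistically independent child seeds; `run_replication` is a hypothetical stand-in for one of your stochastic modules.

```python
import numpy as np

def spawn_generators(master_seed, n_workers):
    """Derive independent, reproducible random streams for parallel workers."""
    children = np.random.SeedSequence(master_seed).spawn(n_workers)
    return [np.random.default_rng(child) for child in children]

def run_replication(rng, n_days=365, p_disruption=0.01):
    """Illustrative stochastic module: daily disruption flags for one run."""
    return rng.random(n_days) < p_disruption

# Same master seed -> identical results on every execution, distinct per worker
rngs = spawn_generators(42, n_workers=4)
flags = [run_replication(rng) for rng in rngs]
print([int(f.sum()) for f in flags])
```

Passing each worker its own `Generator` avoids shared global state, which is what usually breaks reproducibility once runs are parallelized.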

Q2: How do I accurately parameterize regional disruption probabilities for geopolitical or natural disaster events in my optimization model?

A: Rely on curated, historical databases. Recommended Protocol:

- Data Source: Access the EM-DAT (International Disaster Database) or the U.S. Federal Emergency Management Agency (FEMA) Disaster Declarations.

- Methodology:

- Define your geographic regions of interest (e.g., NUTS-2, US counties).

- Query the database for events (e.g., floods, storms, earthquakes, political conflicts) over the last 20 years.

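- Calculate the annual event frequency per region:

$$\lambda = \frac{\text{Number of events}}{20}$$

This is a rate, not a probability, and can exceed 1. If events are treated as a Poisson process, the annual probability of at least one event is $P = 1 - e^{-\lambda}$.

- For severity, use the reported "Total Damage" or "Affected Population" normalized by regional GDP or population to create a severity index.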

- Troubleshooting: If data is sparse, employ a spatial smoothing algorithm (e.g., kernel density estimation) or use a surrogate region with similar hazard profiles.
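A minimal sketch of the frequency, probability, and severity calculations. The Poisson assumption behind `prob_at_least_one` and the event counts and damage figures are illustrative, not taken from EM-DAT.

```python
import math

def annual_rate(n_events, years=20):
    """Mean events per year (a frequency, which can exceed 1)."""
    return n_events / years

def prob_at_least_one(rate):
    """P(at least one event in a year), assuming events follow a Poisson process."""
    return 1.0 - math.exp(-rate)

def severity_index(total_damage, regional_gdp):
    """Damage normalized by regional GDP (affected population / population works the same way)."""
    return total_damage / regional_gdp

# Hypothetical region: 30 flood events recorded over 20 years
lam = annual_rate(30)
print(lam, round(prob_at_least_one(lam), 3))  # 1.5 0.777
print(severity_index(2.4e8, 6.0e10))
```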

Q3: My Mixed-Integer Linear Programming (MILP) model for depot location fails to solve within a reasonable time for large-scale networks. What are my options?

A: Implement decomposition or heuristic strategies.

- Lagrangian Relaxation: Relax complex coupling constraints (e.g., demand coverage across all scenarios) into the objective function with penalty multipliers.

- Benders Decomposition: Separate the problem into a master problem (location decisions) and multiple independent subproblems (flow allocation under each disruption scenario).

- Protocol for a Simple Greedy Heuristic:

- Start with zero depots open.

- In each iteration, open the one candidate depot that yields the greatest reduction in total expected cost (weighted average of normal and disruption scenarios).

- Continue until opening a new depot provides no cost-saving benefit beyond its fixed cost.

- Perform a local search (e.g., swap one open depot with a closed one) to improve the solution.
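A compact sketch of the greedy-add heuristic followed by a one-for-one swap search. It assumes each scenario is given as a probability plus an assignment-cost matrix in which `None` marks a depot unavailable in that scenario, and it charges a hypothetical penalty for demand no open depot can serve. Because total cost includes each depot's fixed cost, the greedy loop stops exactly when another depot no longer pays for itself. The instance is a toy example.

```python
import itertools

def total_cost(open_set, fixed, scenarios, penalty=1e6):
    """Fixed costs plus probability-weighted assignment cost over all scenarios.

    scenarios: list of (probability, cost) where cost[i][j] is the cost of
    serving demand node i from depot j (None = depot unavailable).
    """
    cost = sum(fixed[j] for j in open_set)
    for prob, c in scenarios:
        for row in c:
            options = [row[j] for j in open_set if row[j] is not None]
            cost += prob * (min(options) if options else penalty)  # unmet-demand penalty
    return cost

def greedy_with_swap(fixed, scenarios):
    candidates = range(len(fixed))
    open_set = set()
    best = total_cost(open_set, fixed, scenarios)
    while True:  # greedy add: open the depot with the largest cost reduction
        trials = [(total_cost(open_set | {j}, fixed, scenarios), j)
                  for j in candidates if j not in open_set]
        if not trials:
            break
        c, j = min(trials)
        if c >= best:  # no saving once the depot's fixed cost is included
            break
        open_set, best = open_set | {j}, c
    improved = True
    while improved:  # local search: swap one open depot for a closed one
        improved = False
        for out, inn in itertools.product(sorted(open_set), candidates):
            if inn in open_set:
                continue
            trial = (open_set - {out}) | {inn}
            c = total_cost(trial, fixed, scenarios)
            if c < best:
                open_set, best, improved = trial, c, True
                break
    return open_set, best

# Toy instance: 3 candidate depots, 3 demand nodes, 2 scenarios
fixed = [100, 120, 90]
normal = [[10, 40, 30], [35, 12, 30], [30, 45, 8]]
depot0_down = [[None, row[1], row[2]] for row in normal]
scenarios = [(0.9, normal), (0.1, depot0_down)]
best_set, best_cost = greedy_with_swap(fixed, scenarios)
print(best_set, round(best_cost, 2))  # {2} 158.0
```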

Q4: When validating my resilient depot configuration using real-world COVID-19 disruption data, how should I quantify "performance"?

A: Move beyond simple cost metrics. Use a multi-dimensional KPI table for validation.

| Performance Metric | Calculation Formula | Target Benchmark (Based on COVID-19 Pharma Supply Chain Analysis) |
|---|---|---|
| Service Level Maintained | (Orders fulfilled within SLA / Total orders) during disruption period. | >85% for critical medical supplies. |
| Cost Increase Relative to Baseline | (Disruption Scenario Cost - Baseline Cost) / Baseline Cost. | <30% for acute 6-month disruption. |
| Recovery Time to 95% Service Level | Time from onset of disruption to sustained 95% service level. | <60 days. |
| Inventory Buffering Index | (Peak inventory during disruption - Safety stock) / Average weekly demand. | Between 2.5 and 4.0 weeks of extra buffer. |
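The three ratio-based KPIs are simple to compute once a scenario run has been summarized. This sketch uses hypothetical run totals and checks them against the benchmarks in the table; recovery time needs the full service-level time series and is not shown here.

```python
def service_level(orders_within_sla, total_orders):
    """Share of orders fulfilled within SLA during the disruption period."""
    return orders_within_sla / total_orders

def cost_increase(disruption_cost, baseline_cost):
    """Relative cost increase versus the baseline scenario."""
    return (disruption_cost - baseline_cost) / baseline_cost

def buffering_index_weeks(peak_inventory, safety_stock, avg_weekly_demand):
    """Extra buffer held during the disruption, in weeks of average demand."""
    return (peak_inventory - safety_stock) / avg_weekly_demand

# Hypothetical summary of one 6-month disruption scenario
kpis = {
    "service_level": service_level(870, 1000),
    "cost_increase": cost_increase(1.24e6, 1.0e6),
    "buffer_weeks": buffering_index_weeks(5200, 2000, 1000),
}
checks = {
    "service_level": kpis["service_level"] > 0.85,       # > 85% for critical supplies
    "cost_increase": kpis["cost_increase"] < 0.30,       # < 30% for a 6-month disruption
    "buffer_weeks": 2.5 <= kpis["buffer_weeks"] <= 4.0,  # 2.5-4.0 weeks of extra buffer
}
print(kpis, checks)
```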

Q5: How can I model cascading failures where a disruption at a primary supplier impacts a pre-processing depot, which then impacts downstream nodes?

A: Implement a discrete-event simulation (DES) framework alongside your optimization model.

Experimental Protocol for Cascading Failure Analysis:

- Define Network: Map your supply chain as a directed graph (nodes = facilities, edges = material flows).

- Set Initial Disruption: Trigger a failure at a key node based on historical probability (from Q2).

- Propagation Rules: Program logic for propagation (e.g., if a node loses >50% of its inbound supply, it experiences a 70% capacity reduction after a 48-hour delay).

- Run Simulation: Execute 10,000 Monte Carlo runs with randomized initial failure points and durations.

- Analyze Results: Identify which of your proposed pre-processing depots most frequently become failure bottlenecks (using the betweenness centrality of each depot in the failure propagation graphs).
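A NetworkX sketch of the propagation rule and Monte Carlo loop. It assumes edge attribute `flow` holds the normal inbound volume, treats a degraded upstream node as delivering 30% of its flow, and randomizes only the initial failure point (durations are omitted for brevity); the graph and function names are hypothetical.

```python
import random
import networkx as nx

def propagate(G, start, threshold=0.5, reduction=0.7, delay_h=48):
    """Return {node: hour its capacity loss begins} for one cascade from `start`."""
    loss = {start: 1.0}  # fraction of capacity lost; the initial node fails fully
    onset = {start: 0}
    changed = True
    while changed:
        changed = False
        for node in G.nodes:
            if node in onset:
                continue
            edges = list(G.in_edges(node, data="flow", default=1.0))
            total = sum(f for _, _, f in edges)
            lost = sum(f * loss.get(u, 0.0) for u, _, f in edges)
            if total > 0 and lost / total > threshold:  # lost > 50% of inbound supply
                loss[node] = reduction                   # 70% capacity reduction
                onset[node] = max(onset[u] for u, _, _ in edges if u in onset) + delay_h
                changed = True
    return onset

def depot_bottleneck_stats(G, depots, runs=10_000, seed=0):
    """How often each depot is hit, and its mean betweenness in the failure subgraphs."""
    rng = random.Random(seed)
    nodes = list(G.nodes)
    hits = dict.fromkeys(depots, 0)
    centrality = dict.fromkeys(depots, 0.0)
    for _ in range(runs):
        onset = propagate(G, rng.choice(nodes))
        bc = nx.betweenness_centrality(G.subgraph(onset))
        for d in depots:
            if d in onset:
                hits[d] += 1
                centrality[d] += bc[d] / runs
    return hits, centrality

G = nx.DiGraph()
G.add_edge("S1", "D1", flow=60)
G.add_edge("S2", "D1", flow=40)
G.add_edge("S2", "D2", flow=100)
G.add_edge("D1", "C1", flow=100)
G.add_edge("D1", "C2", flow=50)
G.add_edge("D2", "C2", flow=50)
G.add_edge("D2", "C3", flow=100)
print(propagate(G, "S1"))  # {'S1': 0, 'D1': 48, 'C1': 96}
print(depot_bottleneck_stats(G, ["D1", "D2"], runs=2_000))
```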

Experimental Workflow for Depot Location Optimization

Signaling Pathway for Disruption Impact Propagation

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Resilient Depot Research |
|---|---|
| Gurobi / CPLEX Optimizer | Commercial solvers for exact solution of large-scale MILP location-allocation models. |
| AnyLogistix or Simio | Supply chain simulation software for digital twin creation and disruption scenario testing. |
| Python (PuLP, SciPy) | Open-source libraries for formulating and solving custom optimization models and algorithms. |
| EM-DAT Database | The core international disaster database for parameterizing disruption probabilities and severities. |
| QGIS / ArcGIS | Geographic Information System software for spatial analysis, mapping depot catchments, and visualizing risk layers. |
| Resilience Index KPI Dashboard (Custom) | A consolidated view (e.g., in Tableau) of the metrics in the Q4 KPI table, used to track model performance against benchmarks. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During a simulation of a supply chain disruption, my Time-to-Recovery (TTR) metric shows an improbably low value (near zero). What could be causing this?

A: This is typically a data input or logic error in your simulation model. Verify the following:

- Check Disruption Definition: Ensure your model's "disruption event" correctly suspends activity at the affected pre-processing depot. A missing or incorrectly triggered disruption will result in no recovery time.

- Verify Recovery Logic: Confirm that the "recovery" condition in your code is not being met instantly. The trigger (e.g., "inventory replenished," "alternate depot activated") should be dependent on your Inventory Buffering or Network Flexibility parameters.

- Audit Input Data: Review the lead time and throughput data for your backup nodes. Incorrectly high capacity values can artificially shorten TTR.

Q2: How should I quantify Inventory Buffering for critical lab reagents in a depot location model when demand is variable?

A: For research supply chains, buffer stock must account for both operational variability and disruption scenarios.

- Protocol: Calculate the buffer $B$ using:

$$B = z \, \sigma_d \sqrt{L} + D_d R_d$$

where $z$ is the service level factor (e.g., 1.65 for 95%), $\sigma_d$ is the standard deviation of daily demand from lab forecasts, $L$ is the average lead time in days from the primary supplier, $D_d$ is the average daily demand, and $R_d$ is the number of additional "disruption coverage" days (a key resilience parameter to test).

- Action: Run sensitivity analyses on $R_d$ (e.g., 7, 14, 30 days) against total network cost to identify optimal trade-offs.
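A direct translation of the buffer formula, using the standard library's `NormalDist` to obtain z from the target service level instead of a lookup table; the demand and lead-time inputs are hypothetical.

```python
import math
from statistics import NormalDist

def buffer_stock(service_level, sigma_d, lead_time_days, mean_daily_demand, disruption_days):
    """B = z * sigma_d * sqrt(L) + D_d * R_d, in units of the reagent."""
    z = NormalDist().inv_cdf(service_level)  # about 1.645 for a 95% service level
    safety = z * sigma_d * math.sqrt(lead_time_days)
    disruption_cover = mean_daily_demand * disruption_days
    return safety + disruption_cover

# Sensitivity sweep on R_d (hypothetical reagent: sigma_d = 12, L = 9 days, D_d = 40/day)
for r_d in (7, 14, 30):
    b = buffer_stock(0.95, sigma_d=12.0, lead_time_days=9, mean_daily_demand=40.0,
                     disruption_days=r_d)
    print(f"R_d = {r_d:>2} days -> buffer = {b:.0f} units")
```

Pairing each buffer level with its holding cost gives the cost axis for the trade-off analysis.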

Q3: When modeling Network Flexibility via alternate depot routing, how do I resolve "infeasible solution" errors in my optimization solver?

A: Infeasibility often arises from over-constraining the model with unrealistic flexibility assumptions.

- Troubleshoot Step-by-Step:

- Relax Capacity Constraints: Temporarily remove capacity limits on alternate depots. If the model runs, your initial flexibility design is insufficient for the simulated demand.

- Check Connectivity: Ensure all designated "alternate" depots in your model have transportation links (edges) to the required demand nodes. A missing link creates an unsolvable route.

- Validate Demand-Supply Balance: Sum the total demand and the total network capacity after the primary depot disruption. The latter must be equal to or greater than the former.
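The connectivity and balance checks can be automated as a pre-solve screen. This sketch (hypothetical function and data) flags demand nodes with no link to a surviving depot and any aggregate capacity shortfall; passing it is necessary but not sufficient for feasibility, since lead-time or per-link limits can still conflict.

```python
def feasibility_report(demand, capacity, links, disrupted):
    """Pre-solve screen for an alternate-routing scenario.

    demand: {demand_node: units}; capacity: {depot: units};
    links: set of (depot, demand_node) transport edges;
    disrupted: set of depots offline in this scenario.
    """
    live = [d for d in capacity if d not in disrupted]
    unreachable = [n for n in demand if not any((d, n) in links for d in live)]
    shortfall = max(0, sum(demand.values()) - sum(capacity[d] for d in live))
    return {"unreachable_nodes": unreachable, "capacity_shortfall": shortfall}

demand = {"LabA": 120, "LabB": 80, "LabC": 50}
capacity = {"D1": 200, "D2": 100, "D3": 60}
links = {("D1", "LabA"), ("D1", "LabB"), ("D1", "LabC"),
         ("D2", "LabA"), ("D2", "LabB"), ("D3", "LabB")}
print(feasibility_report(demand, capacity, links, disrupted={"D1"}))
# {'unreachable_nodes': ['LabC'], 'capacity_shortfall': 90}
```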

Q4: My multi-metric analysis yields conflicting recommendations: minimizing TTR increases cost, while maximizing flexibility reduces buffer efficiency. How do I reconcile this?

A: This is the core challenge of resilience optimization. You must move to a multi-objective optimization framework.

- Methodology: Implement a Pareto Frontier analysis.

- Define Objectives: Minimize Total Cost (TC), Minimize Average TTR, Maximize Flexibility Score (e.g., % of demand nodes with ≥2 viable depots).

- Run Iterations: Use an algorithm (e.g., NSGA-II) to evaluate hundreds of candidate network designs.

- Analyze Output: Identify the set of non-dominated solutions, i.e., designs for which no metric can be improved without worsening another. The optimal solution is chosen from this frontier based on organizational risk tolerance.
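Whatever algorithm generates the candidate designs, the final step is a non-dominated filter. This sketch assumes every objective is expressed as a value to minimize (flexibility is negated) and uses hypothetical design scores; it illustrates the filtering step only, not NSGA-II itself.

```python
def dominates(a, b):
    """True if a is no worse than b on every objective and strictly better on one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(designs):
    """Keep the designs that no other design dominates."""
    return {name: obj for name, obj in designs.items()
            if not any(dominates(other, obj)
                       for other_name, other in designs.items() if other_name != name)}

# (total cost, average TTR in days, -flexibility score): all minimized
designs = {
    "central":  (100, 10.5, 0.0),
    "regional": (120,  9.0, 0.0),
    "overlap":  (145,  4.7, -0.6),
    "mesh":     (185,  3.2, -1.0),
    "variant":  (190,  5.0, -0.6),
}
print(sorted(pareto_front(designs)))  # ['central', 'mesh', 'overlap', 'regional']
```

The pairwise check is O(n²), which is fine for hundreds of designs; NSGA-II's fast non-dominated sorting extends the same idea to rank successive fronts.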

Data Presentation

Table 1: Simulated Impact of Buffer Stock on Key Resilience Metrics

| Disruption Coverage (R_d) | Avg. Time-to-Recovery (Days) | Network Cost Increase (%) | Service Level Maintained (%) |
|---|---|---|---|
| 0 days (Just-in-Time) | 10.5 | 0.0 | 65.2 |
| 7 days | 5.1 | 18.7 | 92.4 |
| 14 days | 3.8 | 35.2 | 98.7 |
| 30 days | 2.1 | 74.5 | 99.9 |

Table 2: Network Flexibility Configurations & Performance

| Flexibility Design | % of Demand Nodes with ≥2 Sourcing Options | Modeled TTR Reduction vs. Baseline | Estimated Cost Premium |
|---|---|---|---|
| Single, Centralized Depot (Baseline) | 0% | 0% | 0% |
| Regional Depots with No Redundancy | 0% | 15% | 20% |
| Regional Depots with Partial Overlap | 60% | 55% | 45% |
| Fully Meshed Network | 100% | 70% | 85% |

Experimental Protocols

Protocol 1: Measuring Time-to-Recovery (TTR) in a Simulated Disruption

Objective: Quantify the time required for a supply network to return to pre-disruption service levels after a node failure.

- Model Setup: Configure your network model (e.g., in AnyLogistix, MATLAB, or custom Python simulation) with defined depot locations, capacities, routes, and demand points.

- Establish Baseline: Run the simulation under normal conditions for a set period (e.g., 100 simulated days) and record the average service level (e.g., order fulfillment rate, lead time).

- Induce Disruption: At a defined time $t_d$, completely disable the primary pre-processing depot for a key reagent.

- Monitor Recovery: Continue the simulation. Record the time $t_r$ at which the system's service level metric permanently returns to within 5% of its pre-disruption baseline.

- Calculate TTR: $\mathrm{TTR} = t_r - t_d$. Repeat for $n \geq 30$ stochastic runs to calculate the average and standard deviation.
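A sketch of the TTR calculation for one run, assuming the simulation logs one service-level value per period. Here `t_r` is taken as the first period from which the series stays within the ±5% band for the rest of the run, which is one way to operationalize "permanently returns"; the example series is hypothetical.

```python
import numpy as np

def time_to_recovery(service, t_d, band=0.05):
    """TTR = t_r - t_d for a per-period service-level series (None if no recovery)."""
    s = np.asarray(service, dtype=float)
    baseline = s[:t_d].mean()                      # pre-disruption average
    within = np.abs(s - baseline) <= band * baseline
    for t_r in range(t_d, len(s)):
        if within[t_r:].all():                     # stays in band to the end of the run
            return t_r - t_d
    return None

# Hypothetical run: disruption at day 10, service dips and then recovers
run = [0.98] * 10 + [0.60, 0.70, 0.80, 0.90, 0.95, 0.97] + [0.98] * 10
print(time_to_recovery(run, t_d=10))  # 4

# Across n >= 30 stochastic runs:
# ttrs = [time_to_recovery(r, t_d) for r in runs]
# print(np.mean(ttrs), np.std(ttrs, ddof=1))
```

A run that never returns to the band yields `None`; count those separately rather than dropping them, since they are the worst outcomes.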

Protocol 2: Optimizing Depot Locations for Multi-Metric Resilience

Objective: Identify depot locations that balance cost, TTR, Inventory Buffering, and Network Flexibility.

- Define Candidate Nodes: List all potential depot locations (e.g., lab hubs, commercial logistics centers).

- Parameterize Metrics:

- Cost: Fixed opening cost + variable operating cost per node.

- TTR: Estimated from network connectivity (see Protocol 1).

- Buffer: Calculate as shown in FAQ A2 for each node's assigned demand.

- Flexibility: For each demand node, count the number of depots within a maximum allowable lead time.

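- Formulate Optimization Problem: Use a p-median or multi-objective genetic algorithm model. A sample objective function to minimize could be a weighted sum:

$$\min \; w_1 \cdot \text{Cost} + w_2 \cdot \text{TTR} - w_3 \cdot \text{Flexibility Score}$$

A single weighted-sum solve returns one design. Normalize the metrics to comparable scales before weighting, and re-solve across a range of weights (or use the multi-objective algorithm directly) to trace the trade-offs; weighted sums can only reach the convex portions of the Pareto frontier.

- Solve & Analyze: Run the optimization. Generate a Pareto frontier plot (Cost vs. TTR vs. Flexibility) to visualize trade-offs and select optimal configurations.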
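The flexibility parameter and the weighted-sum objective are straightforward to compute for a candidate set of open depots. In this sketch the lead-time table, the 24-hour threshold, the weights, and the assumption that Cost and TTR are already normalized to comparable scales are all hypothetical.

```python
def flexibility_score(lead_time_h, open_depots, max_lead_h):
    """Share of demand nodes with at least two open depots within max_lead_h."""
    viable = [sum(1 for d in open_depots if lead_time_h[n][d] <= max_lead_h)
              for n in lead_time_h]
    return sum(v >= 2 for v in viable) / len(viable)

def weighted_objective(cost, ttr, flexibility, w=(1.0, 1.0, 1.0)):
    """W1*Cost + W2*TTR - W3*Flexibility (inputs assumed pre-normalized)."""
    return w[0] * cost + w[1] * ttr - w[2] * flexibility

lead_time_h = {
    "LabA": {"D1": 12, "D2": 30, "D3": 20},
    "LabB": {"D1": 40, "D2": 10, "D3": 18},
    "LabC": {"D1": 50, "D2": 22, "D3": 9},
}
flex = flexibility_score(lead_time_h, {"D2", "D3"}, max_lead_h=24)
print(round(flex, 3))  # 0.667
print(weighted_objective(cost=0.55, ttr=0.40, flexibility=flex, w=(0.5, 0.3, 0.2)))
```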

Diagrams

Multi-Metric Depot Optimization Workflow

Interdependence of Key Resilience Metrics

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Supply Chain Resilience Research |
|---|---|
| Supply Chain Digital Twin Software (e.g., AnyLogistix, Simio) | Creates a virtual, simulatable model of the physical supply network to test disruptions and policies without risk. |
| Geographic Information System (GIS) Data | Provides real-world coordinates, distances, and transportation infrastructure data for accurate depot location modeling. |
| Python/R with Optimization Libraries (PuLP, DEAP, ompr) | Enables custom coding of simulation models, multi-objective optimization algorithms, and automated data analysis. |
| Historical Demand & Lead Time Data | Serves as the critical input for stochastic modeling, used to calculate safety stocks and simulate realistic variability. |
| Risk Scenario Database | A curated list of potential disruption events (e.g., port closure, supplier bankruptcy) with estimated probability and severity for stress-testing. |

This technical support center is designed to assist researchers, scientists, and drug development professionals conducting simulations and experiments related to the optimization of pre-processing depot locations for supply chain resiliency. The following troubleshooting guides and FAQs address common computational and methodological issues.

Frequently Asked Questions & Troubleshooting Guides

Q1: My network optimization model (e.g., mixed-integer linear programming) is failing to converge to a feasible solution when I introduce redundant depot nodes. What are the first steps to diagnose this?

A: This typically indicates a model infeasibility due to conflicting constraints.

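- Check Capacity-Demand Balance: Ensure the total capacity of all depots (primary + redundant) meets or exceeds total demand in every tested disruption scenario. A simple verification table lists, for each scenario, total demand, total available depot capacity, and the resulting surplus or shortfall.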

- Audit Flow Constraints: Verify that constraints forcing demand assignment to only operational depots are correctly formulated. A missing or incorrect constraint can cause the solver to try routing material through a "closed" node.

- Relax and Re-introduce: Temporarily remove redundancy constraints and the disruption scenarios. If the model solves, re-introduce them one by one to isolate the culprit.

Q2: When running Monte Carlo simulations for random disruption events, my cost distributions show extreme outliers, skewing the average cost-benefit ratio. How should I handle this?

A: Outliers often represent near-total network failure scenarios.

- Diagnosis: This is expected in resiliency modeling. The key is to analyze results separately by disruption severity.

- Protocol: Segment your results into tiers based on disruption severity (e.g., Tier 1: <20% capacity loss, Tier 2: 20-50%, Tier 3: >50%). Calculate cost-benefit metrics (like Cost vs. Service Level) per tier. Use the 95th percentile cost (Value at Risk) for financial planning instead of relying solely on the mean.
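A sketch of the tiered analysis with NumPy. The tier edges follow the protocol, while the synthetic loss and cost distributions are purely illustrative; a run with exactly 50% loss falls in tier 3 here, which is a boundary convention you may want to change.

```python
import numpy as np

def tiered_summary(capacity_loss, cost, edges=(0.20, 0.50)):
    """Per-tier cost statistics: tier 1 < 20% loss, tier 2 20-50%, tier 3 >= 50%."""
    capacity_loss = np.asarray(capacity_loss, dtype=float)
    cost = np.asarray(cost, dtype=float)
    tier = np.digitize(capacity_loss, edges) + 1  # 1, 2, or 3
    summary = {}
    for t in np.unique(tier):
        c = cost[tier == t]
        summary[int(t)] = {"runs": c.size, "mean": c.mean(),
                           "median": np.median(c), "p95": np.percentile(c, 95)}
    return summary

# Hypothetical Monte Carlo output: capacity loss per run and resulting cost
rng = np.random.default_rng(7)
loss = rng.beta(2, 5, size=10_000)
cost = 1e6 * (1 + 4 * loss**2) * rng.lognormal(0.0, 0.1, size=loss.size)
for t, stats in tiered_summary(loss, cost).items():
    print(t, stats["runs"], round(stats["mean"]), round(stats["p95"]))
print("Overall 95% VaR (cost):", round(np.percentile(cost, 95)))
```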

Q3: I am using a graph theory approach to measure network connectivity. How do I quantitatively choose between adding one high-capacity redundant depot versus several smaller, distributed ones?

A: This requires a multi-metric experimental protocol.

- Experimental Protocol:

- Define Baseline Network: Model your existing "efficient" depot network.

- Create Candidate Scenarios: Scenario A: Add one large redundant depot at location X. Scenario B: Add three smaller depots at locations Y1, Y2, Y3.

- Simulation & Data Collection: Run N disruption cycles (e.g., random link failures, node failures) for each scenario. Record the following for each cycle:

- Average Shortest Path Increase: the ratio of post-disruption to pre-disruption average shortest path length (values above 1 indicate longer routes).

- Network Efficiency Decline: (See Diagram 1).

- Cost Impact: Estimated operational & capital expense delta.

- Tabulate Results: Compare the performance of the two strategies.

| Metric | Baseline Network | Scenario A (1 Large Redundant Depot) | Scenario B (3 Small Distributed Depots) |
|---|---|---|---|
| Avg. Network Efficiency after Disruptions | 0.45 | 0.68 | 0.82 |
| 95th Percentile Logistics Cost Increase | +250% | +120% | +65% |
| Capital Investment (Relative Units) | 0 | 100 | 110 |
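The Network Efficiency metric can be computed with NetworkX's `global_efficiency` (the average inverse shortest-path length over node pairs, which stays defined when a failure disconnects the network). This sketch applies random node failures to toy versions of the two scenarios; the graphs, node names, and the choice to protect the supplier node are assumptions, and the outputs are not the table's values.

```python
import random
import networkx as nx

def efficiency_after_failures(G, failed_nodes):
    """Global efficiency of the network once failed_nodes are removed."""
    H = G.copy()
    H.remove_nodes_from(failed_nodes)
    return nx.global_efficiency(H)

def mean_disrupted_efficiency(G, protected=(), k=1, cycles=1_000, seed=0):
    """Average efficiency over `cycles` random k-node failures."""
    rng = random.Random(seed)
    pool = [n for n in G if n not in protected]
    return sum(efficiency_after_failures(G, rng.sample(pool, k))
               for _ in range(cycles)) / cycles

base = nx.Graph([("Sup", "D1"), ("D1", "C1"), ("D1", "C2"), ("D1", "C3")])
scenario_a = base.copy()
scenario_a.add_edges_from([("Sup", "R"), ("R", "C1"), ("R", "C2"), ("R", "C3")])
scenario_b = base.copy()
scenario_b.add_edges_from([("Sup", "R1"), ("R1", "C1"), ("Sup", "R2"),
                           ("R2", "C2"), ("Sup", "R3"), ("R3", "C3")])
for name, g in [("Baseline", base), ("Scenario A", scenario_a), ("Scenario B", scenario_b)]:
    print(name, round(mean_disrupted_efficiency(g, protected={"Sup"}), 3))
```

Random link failures can be tested the same way by sampling edges and calling `remove_edges_from` instead.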

Q4: My machine learning model for predicting optimal depot locations performs well on training data but generalizes poorly to new disruption patterns. What validation approach is recommended?

A: This suggests overfitting to the specific disruption scenarios in your training set.

- Troubleshooting Guide:

- Feature Engineering: Ensure your input features capture fundamental network topology (e.g., betweenness centrality of nodes, clustering coefficient) and not just historical disruption data.

- Validation Protocol: Use temporal or spatial cross-validation. Do not randomly split your data. Instead:

- Train on disruption data from Time Periods 1-3, validate on Period 4.

- Train on data from Geographic Regions A-C, validate on Region D.

- Simplify the Model: Reduce model complexity and incorporate regularization techniques (L1/L2) to penalize overfitting.
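Both validation schemes are simple index splits. This pure-Python sketch assumes each training record carries a period label and a region label (the labels below are hypothetical); scikit-learn's `LeaveOneGroupOut` offers the same region-wise split if that library is already in your stack.

```python
def leave_one_group_out(groups):
    """Yield (train_idx, test_idx), holding out one region (or period) at a time."""
    for g in sorted(set(groups)):
        test = [i for i, x in enumerate(groups) if x == g]
        train = [i for i, x in enumerate(groups) if x != g]
        yield train, test

def forward_chaining(periods):
    """Train on all earlier periods and validate on the next one (e.g. 1-3 -> 4)."""
    order = sorted(set(periods))
    for k in range(1, len(order)):
        train = [i for i, p in enumerate(periods) if p in order[:k]]
        test = [i for i, p in enumerate(periods) if p == order[k]]
        yield train, test

# Hypothetical labels for eight disruption records
periods = [1, 1, 2, 2, 3, 3, 4, 4]
regions = ["A", "B", "C", "D", "A", "B", "C", "D"]
print(list(forward_chaining(periods))[-1])  # ([0, 1, 2, 3, 4, 5], [6, 7])
print(next(leave_one_group_out(regions)))   # ([1, 2, 3, 5, 6, 7], [0, 4])
```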

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Resiliency Research |
|---|---|
| NetworkX (Python Library) | Enables the creation, manipulation, and analysis (e.g., shortest path, connectivity) of complex supply chain networks as graph structures. |
| Gurobi/CPLEX Solver | High-performance optimization engines for solving large-scale MILP problems to determine optimal flows and depot placements under constraints. |
| AnyLogistix or Supply Chain Guru | Commercial simulation platforms for dynamic, agent-based modeling of supply chains under stochastic disruption events. |
| Geospatial Data (GIS) | Provides real-world coordinates, distances, and terrain data for accurate transportation cost and risk modeling between candidate depot locations. |
| Monte Carlo Simulation Engine | Generates thousands of probabilistic disruption scenarios (e.g., port closures, supplier delays) to stress-test network designs. |

Diagrams

Diagram 1: Network Efficiency Calculation Workflow

Diagram 2: Resiliency Experiment Logic Flow

Regulatory and Quality Considerations (GxP) for Depot Location and Operations

Troubleshooting Guides and FAQs

Q1: During our simulation of a depot network for clinical trial material distribution, a potential 21 CFR Part 11 compliance gap was flagged for electronic data related to environmental monitoring. What are the critical first steps?

A1: Immediately quarantine the affected electronic records and data sets from your operational model. The primary steps are:

- Document the Deviation: Initiate a non-conformance record describing the potential gap (e.g., lack of audit trail, user access controls).

- Impact Assessment: Determine which simulated depot locations or routing scenarios are impacted.

- Corrective Action: For the simulation, this may involve re-running scenarios with a corrected digital toolset that has validated electronic signatures and audit trails. In a physical depot, this would require system remediation and re-validation.

Q2: Our resiliency model suggests situating a pre-processing depot in a geospatial zone with variable power grids. How do we address GMP concerns for temperature-controlled storage in the experimental design?

A2: The model must incorporate power redundancy as a critical variable. The experimental protocol should include:

- Risk Variable Definition: Define "power grid stability" as a quantifiable risk score (e.g., historical outage frequency/duration).

- Control Design: Model scenarios with and without backup generators/UPS.

- Data Point Collection: For each simulated scenario, record the predicted number of temperature excursions and mean time to recovery (MTTR). This data feeds directly into the site qualification risk assessment.

Q3: When modeling multiple potential depot locations, how should we weight and incorporate data from vendor quality audits into the selection algorithm?

A3: Transform qualitative audit findings into a quantitative score for your optimization model. Use a structured table:

| Audit Finding Category | Score (1-5) | Weight in Model (%) | Data Source for Simulation |
|---|---|---|---|
| Quality Management System Maturity | 1 (Poor) to 5 (Mature) | 30% | Audit report classification (Critical/Major/Minor) |
| Past Performance (Deviation Rate) | 1 (High) to 5 (Low) | 25% | Historical quality metrics (e.g., % on-time, defect rate) |
| Facility & Equipment State | 1 (Non-compliant) to 5 (Excellent) | 20% | Audit observations and CAPA status |
| Personnel Training Records | 1 (Inadequate) to 5 (Robust) | 15% | Audit sample review |
| Data Integrity Governance | 1 (Weak) to 5 (Strong) | 10% | Assessment against ALCOA+ principles |

The weighted score becomes an input constraint (Audit_Score >= Threshold) in your location-optimization algorithm.
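A sketch of the weighted score and threshold check using the table's weights; the dictionary keys are shorthand for the table's categories, and the site scores and the 3.5 threshold are hypothetical.

```python
WEIGHTS = {
    "qms_maturity": 0.30,
    "past_performance": 0.25,
    "facility_equipment": 0.20,
    "personnel_training": 0.15,
    "data_integrity": 0.10,
}

def audit_score(scores, weights=WEIGHTS):
    """Weighted average of 1-5 category scores (weights must sum to 1)."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    for category in weights:
        if not 1 <= scores[category] <= 5:
            raise ValueError(f"{category} score must be between 1 and 5")
    return sum(weights[c] * scores[c] for c in weights)

site = {"qms_maturity": 4, "past_performance": 3, "facility_equipment": 5,
        "personnel_training": 4, "data_integrity": 2}
score = audit_score(site)
print(round(score, 2), score >= 3.5)  # 3.75 True
```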

Experimental Protocol: Assessing GxP Compliance Impact on Depot Network Resiliency

Objective: To quantify how stringent adherence to GxP controls at pre-processing depot locations influences overall supply chain network performance and resiliency metrics.

Methodology:

- Variable Definition:

- Independent Variable: GxP Rigor Level (GRL). Define three tiers:

- GRL-High: Full adherence to cGMP (21 CFR 210/211), GDP, with rigorous quality oversight.

- GRL-Medium: Adherence to key GMP principles with some risk-based exceptions.

- GRL-Low: Guided by GMP but not formally compliant (e.g., for research-use-only materials).

- Dependent Variables: Mean Cost per Shipment, Network Recovery Time (NRT) after a disruption, Successful Delivery Rate (%).

- Simulation Setup: Use a network optimization model (e.g., a mixed-integer linear program) with nodes for suppliers, candidate depots, and clinical sites.

- Data Inputs: For each candidate depot location, input cost, capacity, and lead time data that correlates to its GRL (e.g., GRL-High has +15% operational cost, +5% processing time but -90% error rate).

- Disruption Modeling: Introduce a major disruption (e.g., port closure, regulatory hold) and measure the time and cost for the network to reroute and meet 95% of demand.

- Replication: Run each scenario (GRL-High, Medium, Low network configurations) with 1000 Monte Carlo iterations varying disruption timing and location.

- Analysis: Compare the mean values of dependent variables across GRL tiers using ANOVA.
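The F statistic for the GRL comparison can be computed directly, as below; `scipy.stats.f_oneway` returns the same statistic together with a p-value if SciPy is available. The gamma-distributed recovery times use arbitrary placeholder parameters, not results. With 1,000 iterations per tier, also check the equal-variance assumption before relying on the standard F-test.

```python
import numpy as np

def one_way_anova_f(*groups):
    """One-way ANOVA F statistic: between-group over within-group mean square."""
    groups = [np.asarray(g, dtype=float) for g in groups]
    pooled = np.concatenate(groups)
    k, n = len(groups), pooled.size
    grand_mean = pooled.mean()
    ss_between = sum(g.size * (g.mean() - grand_mean) ** 2 for g in groups)
    ss_within = sum(((g - g.mean()) ** 2).sum() for g in groups)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

# Placeholder Network Recovery Time samples (days), 1,000 Monte Carlo runs per tier
rng = np.random.default_rng(3)
nrt = {tier: rng.gamma(shape, 2.0, size=1_000)
       for tier, shape in {"GRL-High": 4.0, "GRL-Medium": 4.5, "GRL-Low": 5.5}.items()}
print(round(one_way_anova_f(*nrt.values()), 1))
```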

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Depot Optimization Research |
|---|---|
| Network Optimization Software (e.g., AnyLogistix, Llamasoft) | Platforms to build digital twins of supply chains, simulate disruptions, and run "what-if" scenarios for depot placement. |
| Geospatial Risk Data Feeds | Provide real-time and historical data on political stability, natural disaster risk, and infrastructure quality for potential depot locations. |
| GxP Regulation Databases (e.g., FDA, EMA, ICH portals) | Authoritative sources for current regulatory requirements to define constraints and rules in simulation models. |
| Quality Management System (QMS) Software | Provides structured data on deviations, CAPAs, and audit findings to quantify the "quality state" of a potential depot partner. |
| Monte Carlo Simulation Add-ins | Enables probabilistic modeling of variability and risk factors (e.g., customs delay, temperature excursion) within the supply chain network. |

Diagrams

Title: GxP-Informed Depot Selection Workflow

Title: GxP Rigor Impact on Performance Variables

Advanced Methodologies for Depot Network Design and Strategic Placement

This technical support center is designed to assist researchers and scientists working on optimizing pre-processing depot locations for supply chain resiliency, particularly in pharmaceutical and drug development contexts. Below are troubleshooting guides and FAQs addressing common issues encountered during data-driven site selection experiments.

Frequently Asked Questions & Troubleshooting

Q1: Our demand pattern analysis is yielding highly volatile time-series data. How can we smooth the data without losing critical trend information for depot capacity planning?

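A: Apply a Hodrick-Prescott (HP) filter to separate the trend from the cyclical component. The smoothing parameter (λ) must match the data frequency: 1,600 is the standard value for quarterly data and 14,400 the common choice for monthly data, while weekly data needs a much larger value (e.g., about 270,400 when the quarterly value is scaled by the square of the frequency ratio). The cyclical component sums to zero by construction, so a zero-mean residual does not validate the fit; validate instead by checking the trend against known market events (step 4 below).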

- Protocol: 1) Import the time-series data into analytical software (e.g., Python's statsmodels or R). 2) Apply the hpfilter() function. 3) Plot the original series, trend, and cycle. 4) Correlate the trend component with known market events to validate.
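The filter solves a penalized least-squares problem: the trend τ minimizes Σ(y − τ)² + λ Σ(Δ²τ)². The sketch below is a self-contained NumPy version of that computation, equivalent in intent to statsmodels' `hpfilter`. The synthetic weekly series and λ = 270,400 are illustrative assumptions. For long series, prefer the statsmodels implementation, which uses sparse matrices.

```python
import numpy as np

def hp_filter(y, lamb):
    """Return (cycle, trend) from the Hodrick-Prescott filter.

    The trend solves (I + lamb * D'D) trend = y, where D is the
    second-difference operator; the cycle is y - trend.
    """
    y = np.asarray(y, dtype=float)
    n = y.size
    D = np.zeros((n - 2, n))
    for i in range(n - 2):
        D[i, i:i + 3] = [1.0, -2.0, 1.0]  # second difference of the trend
    trend = np.linalg.solve(np.eye(n) + lamb * D.T @ D, y)
    return y - trend, trend

# Synthetic weekly demand: linear growth + annual seasonality + noise
rng = np.random.default_rng(0)
weeks = np.arange(156)  # three years of weekly observations
demand = 500 + 2.0 * weeks + 40 * np.sin(2 * np.pi * weeks / 52) + rng.normal(0, 15, weeks.size)

cycle, trend = hp_filter(demand, lamb=270_400)
print(trend[:3], cycle.mean())  # cycle mean is ~0 by construction
```

A purely linear series passes through unchanged (its second differences are zero), which is a quick sanity check on any implementation.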

Q2: When geocoding supplier addresses, we encounter a high rate of failed or inaccurate coordinates, jeopardizing the distance analysis.

A: This is often due to incomplete or inconsistently formatted addresses. Implement a two-stage verification process.

- Protocol: 1) Use a primary geocoding service (e.g., the Google Maps Geocoding API) for bulk processing. 2) Export all records flagged as low confidence (e.g., a score < 0.8 on services that return a numeric confidence, or an approximate/partial-match flag on services that do not). 3) Process the low-confidence records through a secondary service (e.g., OpenStreetMap Nominatim) and manually verify a 10% sample. 4) Merge the verified records back into the master dataset.

Q3: Our multi-criteria decision model for depot sites is sensitive to small changes in weight assignments, leading to inconsistent rankings. How can we improve robustness?

A: Conduct a sensitivity analysis by applying Monte Carlo simulation to the criterion weights.

- Protocol: 1) Define a probability distribution (e.g., uniform ±10%) for each weight in your Analytic Hierarchy Process (AHP) model. 2) Run 10,000 simulations, randomly sampling weights from these distributions and renormalizing each sampled set so it sums to 1. 3) Record the rank of each potential site in every simulation. 4) Calculate the probability distribution of ranks for each site. Sites with a high probability of appearing in the top 3 are robust choices.
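A sketch of this protocol follows. It assumes criterion scores are already normalized so that higher is better and that each weight is perturbed uniformly by ±10% and then renormalized. The site names, scores, and base weights are hypothetical.

```python
import numpy as np

def top_k_probability(scores, base_weights, n_sims=10_000, spread=0.10, k=3, seed=0):
    """Fraction of simulations in which each site ranks in the top k.

    scores: (n_sites, n_criteria) array, higher = better.
    Each weight is multiplied by U(1 - spread, 1 + spread), then the
    weight vector is renormalized to sum to 1.
    """
    scores = np.asarray(scores, dtype=float)
    w0 = np.asarray(base_weights, dtype=float)
    rng = np.random.default_rng(seed)
    hits = np.zeros(scores.shape[0])
    for _ in range(n_sims):
        w = w0 * rng.uniform(1 - spread, 1 + spread, size=w0.size)
        w /= w.sum()
        composite = scores @ w
        top = np.argsort(-composite)[:k]  # indices of the k best sites
        hits[top] += 1
    return hits / n_sims

sites = ["A", "B", "C", "D", "E"]
scores = np.array([
    [0.80, 0.60, 0.90],
    [0.70, 0.90, 0.60],
    [0.90, 0.70, 0.70],
    [0.60, 0.80, 0.80],
    [0.30, 0.20, 0.40],
])
weights = [0.5, 0.3, 0.2]  # hypothetical AHP weights
probs = top_k_probability(scores, weights)
for site, p in zip(sites, probs):
    print(f"Site {site}: P(top 3) = {p:.2f}")
```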

Q4: How do we quantitatively integrate geopolitical risk hotspots into our location optimization model?

A: Transform qualitative risk data into a quantitative, location-specific "risk penalty" score.

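- Protocol: 1) Source country- and region-specific risk indices (e.g., the World Bank Worldwide Governance Indicators' Political Stability and Absence of Violence/Terrorism estimate). 2) Normalize scores to a 0-1 scale (1 = highest risk), inverting any index on which higher values mean greater stability. 3) For each potential depot location $i$, calculate a weighted risk score $R_i$ based on proximity to risk zones. 4) Incorporate $R_i$ as a penalty cost in your objective function: $\min \; C_{\text{logistics}} + \sum_i R_i \cdot M \cdot A_i$, where $C_{\text{logistics}}$ is the total logistics cost, $M$ is the penalty multiplier, and $A_i$ is the activity level of depot $i$.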
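The protocol does not say how "proximity to risk zones" should be weighted. The sketch below assumes an exponential distance decay (`decay_km`) and combines zones as if their exposures were independent, so that $R_i$ stays in [0, 1] and falls as a depot moves away from every zone. Zone risks are taken as already normalized. All distances, the multiplier, and the activity levels are hypothetical.

```python
import numpy as np

def proximity_weighted_risk(dist_km, zone_risk, decay_km=300.0):
    """Risk score R_i in [0, 1] for each candidate depot.

    dist_km: (n_depots, n_zones) distances from depots to risk zones.
    zone_risk: (n_zones,) normalized zone risk (1 = highest).
    Each zone's exposure decays as exp(-d / decay_km); exposures are
    combined as if independent: R_i = 1 - prod(1 - exposure).
    """
    exposure = np.asarray(zone_risk, dtype=float) * np.exp(-np.asarray(dist_km, dtype=float) / decay_km)
    return 1.0 - np.prod(1.0 - exposure, axis=1)

def penalized_objective(logistics_cost, risk, activity, penalty_multiplier):
    """Total logistics cost + sum_i R_i * M * A_i."""
    return logistics_cost + penalty_multiplier * float(np.sum(np.asarray(risk) * np.asarray(activity)))

# Hypothetical inputs: 3 candidate depots, 2 risk zones
dist_km = np.array([[50, 800], [400, 400], [900, 60]])
zone_risk = np.array([0.9, 0.4])
R = proximity_weighted_risk(dist_km, zone_risk)
activity = np.array([1200, 900, 1500])  # e.g., shipments per year
print(R.round(3), penalized_objective(2_000_000, R, activity, penalty_multiplier=150.0))
```

In a MILP the penalty term is linear as long as $A_i$ is a decision variable and $R_i$ is a precomputed constant, which is why the risk score is computed before optimization.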

Q5: The optimization solver (e.g., in Gurobi, CPLEX) fails to find a feasible solution for the depot location model. What are the first steps to debug?

A: Infeasibility often stems from overly restrictive constraints.

- Protocol: 1) Relax Demand Constraints: Temporarily allow demand to go unmet, and add a high penalty cost for unmet demand to the objective. If the model then solves, the original demand-coverage constraints were too tight. 2) Check Capacity Constraints: Confirm that total candidate depot capacity is at least total demand, since no relaxation of other constraints can fix a shortfall here; a planning buffer such as total capacity ≥ 1.1 × total demand is a common further check. 3) Isolate Constraints: Comment out constraint blocks (e.g., risk constraints, single-sourcing rules) one at a time to identify the problematic set.

Data Presentation: Key Metrics for Site Selection Analysis

Table 1: Comparative Analysis of Candidate Pre-Processing Depot Locations

| Location ID | Avg. Distance to Top 10 Suppliers (km) | Projected Annual Demand within 250 km (kg) | Geopolitical Risk Index (Normalized 0-1) | Estimated Operational Cost (USD/year) | Env. Compliance Score (1-100) |
|---|---|---|---|---|---|
| Site A | 145 | 5,750 | 0.15 | 2,250,000 | 92 |
| Site B | 89 | 8,900 | 0.45 | 1,980,000 | 85 |
| Site C | 210 | 4,200 | 0.10 | 2,500,000 | 96 |
| Site D | 112 | 7,100 | 0.60 | 1,750,000 | 78 |

Table 2: Data Sources for Resiliency Modeling

| Data Category | Recommended Source (2024) | Update Frequency | Key Use in Model |
|---|---|---|---|
| API Supplier Locations | FDA Gateway, Pharmacompass | Quarterly | Mapping supply nodes, lead time calculation |
| Clinical Trial Demand | ClinicalTrials.gov, Citeline | Monthly | Forecasting regional demand patterns |
| Political Risk | Verisk Maplecroft, World Bank Governance Indices | Annual | Adding risk penalties in objective function |
| Port Congestion | IHS Markit Port Intelligence, project44 | Real-time | Modeling logistics delay variability |
| Natural Hazard | NOAA, USGS, GDACS | Real-time/Alert | Identifying physical disruption hotspots |

Experimental Protocols

Protocol 1: Network Optimization for Depot Placement

- Objective: Minimize total weighted cost (transport, fixed, risk, penalty) while meeting demand.

- Methodology:

- Formulate as a Mixed-Integer Linear Programming (MILP) model.

- Decision Variables: binary variable $Y_i$ (1 if a depot opens at location $i$); continuous flow variable $X_{ijk}$ (quantity shipped from supplier $j$ to demand zone $k$ via depot $i$).

- Constraints: Demand fulfillment, depot capacity, single sourcing (optional), budget.

- Solver: Implement in Python (PuLP/Gurobi) or AIMMS. Run with a MIP gap tolerance of 0.5%.

- Validation: Perform "leave-one-out" cross-validation on historical demand points to test model generalizability.

Protocol 2: Spatiotemporal Demand Clustering

- Objective: Identify stable demand clusters for depot service region designation.

- Methodology:

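- Gather 36 months of demand data with ZIP code and timestamp, and geocode each ZIP code to its centroid coordinates.

- Use the DBSCAN clustering algorithm on the geographic coordinates (it does not require the number of clusters in advance and labels isolated points as noise).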

- For each geographical cluster, perform time-series decomposition to check for seasonality.

- Only clusters with a stable, non-seasonal trend are considered "anchors" for a depot location.
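The sketch below is a compact DBSCAN over latitude/longitude using great-circle (haversine) distance. It assumes ZIP codes have already been geocoded to centroid coordinates; `eps_km` and `min_samples` are illustrative values. scikit-learn's `DBSCAN(metric="haversine")`, applied to coordinates in radians with `eps` set to the distance in km divided by Earth's radius, performs the same clustering; this version simply avoids the dependency.

```python
import numpy as np

EARTH_RADIUS_KM = 6371.0

def haversine_matrix(coords_deg):
    """Pairwise great-circle distances (km) for (lat, lon) pairs in degrees."""
    lat, lon = np.radians(np.asarray(coords_deg, dtype=float)).T
    dlat = lat[:, None] - lat[None, :]
    dlon = lon[:, None] - lon[None, :]
    a = np.sin(dlat / 2) ** 2 + np.cos(lat[:, None]) * np.cos(lat[None, :]) * np.sin(dlon / 2) ** 2
    return 2 * EARTH_RADIUS_KM * np.arcsin(np.sqrt(np.clip(a, 0.0, 1.0)))

def dbscan(coords_deg, eps_km, min_samples):
    """Label each point with a cluster id; -1 marks noise."""
    dist = haversine_matrix(coords_deg)
    neighbors = [np.flatnonzero(row <= eps_km) for row in dist]  # includes the point itself
    is_core = np.array([nb.size >= min_samples for nb in neighbors])
    labels = np.full(len(dist), -1)
    cluster = 0
    for start in range(len(dist)):
        if labels[start] != -1 or not is_core[start]:
            continue
        labels[start] = cluster
        frontier = [start]
        while frontier:  # grow the cluster only through core points
            p = frontier.pop()
            if not is_core[p]:
                continue
            for q in neighbors[p]:
                if labels[q] == -1:
                    labels[q] = cluster
                    frontier.append(q)
        cluster += 1
    return labels

# Hypothetical demand centroids: four near Boston, four near Chicago, one in Denver
coords = [(42.36, -71.06), (42.37, -71.05), (42.35, -71.07), (42.36, -71.08),
          (41.88, -87.63), (41.89, -87.62), (41.87, -87.64), (41.88, -87.65),
          (39.74, -104.99)]
labels = dbscan(coords, eps_km=20, min_samples=3)
print(labels)
```

Each resulting cluster's demand history can then be decomposed over time to check the seasonality criterion in the last step.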

Visualizations: Workflows & Relationships

Site Selection Analysis Workflow

Debugging Infeasible Optimization Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Supply Chain Resiliency Research

| Item/Resource | Function in Research | Example/Provider |
|---|---|---|
| Geospatial Analytics Software | Visualizes and analyzes supplier, demand, and risk data on maps. | ArcGIS Pro, QGIS, Python (geopandas, folium) |
| Optimization Solver | Computes optimal solutions for mathematical location-allocation models. | Gurobi, IBM CPLEX, Google OR-Tools, FICO Xpress |
| Risk Intelligence Feed | Provides structured data on political, regulatory, and environmental risks. | Verisk Maplecroft, Dun & Bradstreet Country Risk |
| Supply Chain Mapping Platform | Digitally maps tier-n supplier networks for dependency analysis. | Resilinc, Everstream Analytics, Altana AI |
| Transportation Cost Database | Provides real-world freight rates for road, rail, air, and sea. | Freightos Baltic Index (FBX), DAT iQ, Xeneta |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My Mixed-Integer Programming (MIP) solver fails to find a feasible solution for my multi-echelon facility location problem (FLP) model. What are the primary checks I should perform? A: First, verify the model formulation. A common error is overly restrictive constraints, such as capacity limits that cannot serve total demand. Follow this protocol:

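- Relax Integer Constraints: Temporarily solve the model as a Linear Program (LP). If the LP is infeasible, the core constraints are contradictory; if the LP is feasible but the MIP is not, the conflict involves the integrality requirements.

- Analyze IIS: Use the solver's Irreducible Inconsistent Subsystem (IIS) finder (e.g., Gurobi's computeIIS() or CPLEX's conflict refiner) to identify a minimal set of conflicting constraints.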

- Demand-Capacity Audit: Create a summary table to ensure total system capacity (if capacitated) ≥ total demand.

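Q2: How do I choose between a p-median, p-center, and Fixed-Charge Facility Location (FCFL) model for depot pre-processing optimization? A: The choice is dictated by your resiliency objective. Use p-median when the goal is efficiency (minimizing the demand-weighted average distance for a fixed number p of depots); use p-center when the goal is equity or worst-case service (minimizing the maximum distance any demand point must travel); and use FCFL when the number of depots is itself a decision to be traded off against fixed opening costs.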

Q3: My FLP model runs are computationally expensive with large datasets. What are effective simplification strategies? A: Employ data aggregation and heuristic pre-solving.

- Protocol: Cluster demand points using k-means or geographic clustering. Use cluster centroids as aggregated demand nodes, weighted by original demand.

- Validation: Compare the results (selected depot locations, total cost) of the aggregated model against the full model, or against a sampled sub-instance of it, to confirm that the aggregation error is within an acceptable tolerance.
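The protocol leaves the clustering method open. The sketch below uses Lloyd's k-means with demand weights and a deterministic farthest-point start, on projected planar coordinates (e.g., km) rather than raw latitude/longitude. Each centroid is the demand-weighted mean of its cluster, and its weight is the summed demand. The sample points are hypothetical, and demands are assumed to be positive.

```python
import numpy as np

def aggregate_demand(points, demand, k, max_iter=100):
    """Return (centroids, aggregated_demand, labels) for k aggregated nodes."""
    P = np.asarray(points, dtype=float)
    w = np.asarray(demand, dtype=float)

    def nearest(C):  # index of the closest centroid for every point
        return ((P[:, None, :] - C[None, :, :]) ** 2).sum(axis=2).argmin(axis=1)

    centers = [P[np.argmax(w)]]  # start at the largest demand point
    while len(centers) < k:  # then repeatedly add the farthest point
        d2 = ((P[:, None, :] - np.array(centers)[None, :, :]) ** 2).sum(axis=2).min(axis=1)
        centers.append(P[np.argmax(d2)])
    C = np.array(centers)
    for _ in range(max_iter):
        labels = nearest(C)
        new_C = np.array([
            np.average(P[labels == c], axis=0, weights=w[labels == c]) if np.any(labels == c) else C[c]
            for c in range(k)
        ])
        if np.allclose(new_C, C):
            break
        C = new_C
    labels = nearest(C)
    agg = np.array([w[labels == c].sum() for c in range(k)])
    return C, agg, labels

# Hypothetical demand points (km grid) and weekly demand
pts = [(0, 0), (1, 0), (0, 1), (10, 10), (11, 10), (10, 11)]
dem = [1, 1, 2, 3, 1, 1]
C, agg, labels = aggregate_demand(pts, dem, k=2)
print(C, agg)
```

The aggregated centroids and weights then replace the original demand points in the location model, and the validation step above checks how much this changes the solution.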

Q4: How can I incorporate "resiliency" against disruptions (e.g., facility closures) into a standard FLP? A: Implement models with backup coverage or stochastic scenarios.

- Methodology: Formulate a Robust Optimization or Stochastic Programming model.

- Define disruption scenarios (e.g., single-depot failure, regional disruption).

- Assign a probability to each scenario.

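- Add a recourse variable $y_{ij}^s$ (fraction of demand $i$ served by depot $j$ in scenario $s$), allowed only where depot $j$ is open and not disrupted in that scenario: $y_{ij}^s \le a_j^s X_j$, with $a_j^s = 0$ if depot $j$ fails in scenario $s$ and 1 otherwise.

- Objective: Minimize fixed costs plus expected transportation costs across all scenarios, where $p_s$ is the probability of scenario $s$: $\min \sum_j f_j X_j + \sum_s p_s \sum_i \sum_j d_i c_{ij} y_{ij}^s$.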
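For small candidate sets, the two-stage model can be checked by enumeration: evaluate every depot set's fixed cost plus probability-weighted recourse cost. This sketch assumes uncapacitated depots (each demand point uses its cheapest surviving open depot) and a per-unit penalty for demand that no surviving depot can serve; both are simplifying assumptions. Capacitated or realistic-size instances need a MIP solver.

```python
from itertools import combinations

def expected_cost(open_set, fixed, demand, unit_cost, scenarios, unmet_penalty):
    """Fixed cost of open depots + sum over scenarios of p_s * recourse cost.

    scenarios: list of (probability, set of failed depot indices).
    Recourse: each demand point uses its cheapest surviving open depot,
    or pays unmet_penalty per unit if none survives.
    """
    total = sum(fixed[j] for j in open_set)
    for prob, failed in scenarios:
        alive = [j for j in open_set if j not in failed]
        recourse = 0.0
        for i, d in enumerate(demand):
            per_unit = min((unit_cost[i][j] for j in alive), default=unmet_penalty)
            recourse += d * per_unit
        total += prob * recourse
    return total

def best_depot_set(fixed, demand, unit_cost, scenarios, unmet_penalty):
    """Enumerate all non-empty depot sets and return (set, expected cost) of the cheapest."""
    best = None
    for r in range(1, len(fixed) + 1):
        for subset in combinations(range(len(fixed)), r):
            cost = expected_cost(subset, fixed, demand, unit_cost, scenarios, unmet_penalty)
            if best is None or cost < best[1]:
                best = (subset, cost)
    return best

# Hypothetical instance: depot 0 is cheapest to open but fails in half the scenarios
fixed = [5, 10, 12]
demand = [1]
unit_cost = [[1, 1, 1]]
scenarios = [(0.5, set()), (0.5, {0})]
best_set, best_cost = best_depot_set(fixed, demand, unit_cost, scenarios, unmet_penalty=100)
print(best_set, best_cost)
```

The cheapest-to-open depot loses once its failure probability is priced in, which is the behavior the stochastic formulation is meant to capture.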

Key Experimental Protocols

Protocol P1: Formulating and Solving a Capacitated FCFL Model for Depot Pre-Processing

- Data Preparation: Compile candidate depot locations ($j \in J$) with fixed cost $f_j$ and capacity $\mathit{cap}_j$. Compile demand points ($i \in I$) with demand $d_i$. Calculate the transportation cost per unit of demand $c_{ij}$ (e.g., distance × unit cost).

- Model Formulation (MIP):

- Decision Variables: $X_j = 1$ if depot $j$ is opened (0 otherwise) [binary]; $Y_{ij}$ = fraction of demand $i$ served by depot $j$ [continuous].

- Objective:

$$\min \sum_{j \in J} f_j X_j + \sum_{i \in I} \sum_{j \in J} d_i c_{ij} Y_{ij}$$

- Constraints:

- Demand Satisfaction: $\sum_{j \in J} Y_{ij} = 1, \quad \forall i \in I$

- Capacity: $\sum_{i \in I} d_i Y_{ij} \le \mathit{cap}_j X_j, \quad \forall j \in J$

- Linking: $0 \le Y_{ij} \le X_j, \quad \forall i \in I,\ j \in J$

- Implementation: Code the model in Python (PuLP, Pyomo) or AMPL. Solve using a MIP solver (e.g., Gurobi, CPLEX, CBC).

- Validation: Perform sensitivity analysis on key parameters (e.g., $f_j$, $\mathit{cap}_j$).
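The protocol names PuLP or Pyomo. To keep the example dependency-light, the sketch below swaps in SciPy's `scipy.optimize.milp` (SciPy ≥ 1.9, HiGHS backend) and builds the P1 formulation row by row. The variable ordering and the tiny instance are illustrative assumptions.

```python
import numpy as np
from scipy.optimize import Bounds, LinearConstraint, milp

def solve_cfl(f, cap, d, c):
    """Capacitated fixed-charge facility location (Protocol P1).

    Variable vector: [X_0..X_{J-1}, Y_00..Y_{0,J-1}, Y_10, ...] (Y row-major by i).
    Returns (open flags X, assignment fractions Y, objective value).
    """
    f, cap, d, c = (np.asarray(a, dtype=float) for a in (f, cap, d, c))
    n_i, n_j = c.shape
    n = n_j + n_i * n_j

    def y(i, j):  # column index of Y_ij
        return n_j + i * n_j + j

    cost = np.concatenate([f, (d[:, None] * c).ravel()])  # f_j X_j + d_i c_ij Y_ij
    rows, lo, hi = [], [], []
    for i in range(n_i):  # demand satisfaction: sum_j Y_ij = 1
        r = np.zeros(n)
        r[[y(i, j) for j in range(n_j)]] = 1.0
        rows.append(r)
        lo.append(1.0)
        hi.append(1.0)
    for j in range(n_j):  # capacity: sum_i d_i Y_ij - cap_j X_j <= 0
        r = np.zeros(n)
        r[j] = -cap[j]
        for i in range(n_i):
            r[y(i, j)] = d[i]
        rows.append(r)
        lo.append(-np.inf)
        hi.append(0.0)
    for i in range(n_i):  # linking: Y_ij - X_j <= 0
        for j in range(n_j):
            r = np.zeros(n)
            r[y(i, j)] = 1.0
            r[j] = -1.0
            rows.append(r)
            lo.append(-np.inf)
            hi.append(0.0)
    integrality = np.concatenate([np.ones(n_j), np.zeros(n_i * n_j)])  # X binary, Y continuous
    res = milp(cost, integrality=integrality, bounds=Bounds(np.zeros(n), np.ones(n)),
               constraints=LinearConstraint(np.array(rows), lo, hi))
    if not res.success:
        raise RuntimeError(res.message)
    return np.round(res.x[:n_j]).astype(int), res.x[n_j:].reshape(n_i, n_j), res.fun

# Tiny illustrative instance: 3 candidate depots, 3 demand points, unit cost 1
X, Y, total = solve_cfl(f=[50, 60, 200], cap=[8, 8, 20], d=[4, 4, 4], c=np.ones((3, 3)))
print(X, round(total, 2))
```

Here neither small depot can serve all 12 units alone, so the model opens both rather than the single large but expensive depot. The sensitivity analysis in the validation step amounts to re-running `solve_cfl` over a grid of `f` and `cap` values.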

Protocol P2: Scenario-Based Resiliency Testing for Selected Depot Network

- Input: Optimal depot set from Protocol P1.

- Disruption Simulation: Define $S$ disruption scenarios (e.g., $S_1$: Depot A closed; $S_2$: Depots B & C closed).

- Recourse Simulation: For each scenario, re-solve the transportation model (variable $Y$) to reassign demand to the remaining open depots, respecting capacities.

- Performance Metric Calculation: Record Key Performance Indicators (KPIs) for each scenario (e.g., % demand served, cost increase, and average distance increase, as in Table 2).
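A minimal sketch of the recourse step is below. It uses `scipy.optimize.linprog` (HiGHS), models closed depots as zero capacity, and allows unmet demand at a high per-unit penalty so the LP stays feasible. Shipments here are in units rather than fractions. The penalty value and the instance are assumptions.

```python
import numpy as np
from scipy.optimize import linprog

def recourse_kpis(demand, capacity, unit_cost, closed, unmet_penalty=1e4):
    """Re-solve the transportation problem with some depots closed.

    Variables: y[i, j] units shipped from depot j to demand point i
    (flattened row-major), then u[i] unmet demand.
    Returns (fraction of demand served, transport cost excluding penalties).
    """
    d = np.asarray(demand, dtype=float)
    cap = np.asarray(capacity, dtype=float).copy()
    c = np.asarray(unit_cost, dtype=float)
    cap[list(closed)] = 0.0  # a closed depot ships nothing
    n_i, n_j = c.shape
    obj = np.concatenate([c.ravel(), np.full(n_i, unmet_penalty)])
    # Demand rows: sum_j y[i, j] + u[i] = d[i]
    A_eq = np.hstack([np.kron(np.eye(n_i), np.ones((1, n_j))), np.eye(n_i)])
    # Capacity rows: sum_i y[i, j] <= cap[j]
    A_ub = np.hstack([np.kron(np.ones((1, n_i)), np.eye(n_j)), np.zeros((n_j, n_i))])
    res = linprog(obj, A_ub=A_ub, b_ub=cap, A_eq=A_eq, b_eq=d, bounds=(0, None), method="highs")
    if not res.success:
        raise RuntimeError(res.message)
    unmet = res.x[n_i * n_j:]
    transport = float(c.ravel() @ res.x[:n_i * n_j])
    return 1.0 - unmet.sum() / d.sum(), transport

demand = [4, 4]
capacity = [6, 6]
unit_cost = [[1, 3], [3, 1]]  # rows: demand points, columns: depots
for label, closed in [("Baseline", []), ("Depot 0 closed", [0])]:
    served, cost = recourse_kpis(demand, capacity, unit_cost, closed)
    print(f"{label}: {served:.0%} served, transport cost {cost:.1f}")
```

Because unserved demand ships nothing, transport cost alone can understate the impact of a disruption. Report % demand served alongside it, or add an emergency-procurement cost for the unmet units.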

Data Presentation: Comparative Model Outputs

Table 1: Model Comparison for a 50-Node, 5-Depot Problem

| Model Type | Objective | Selected Depots (IDs) | Total Cost ($K) | Avg. Service Distance (km) | Max Service Distance (km) | Solve Time (s) |
|---|---|---|---|---|---|---|
| p-Median (p=5) | Min Avg. Distance | 12, 18, 23, 34, 47 | 452 | 7.2 | 22.5 | 3.1 |
| p-Center (p=5) | Min Max Distance | 8, 15, 29, 31, 42 | 510 | 9.8 | 14.1 | 2.8 |
| Capacitated FCFL | Min Fixed + Transport Cost | 5, 18, 29, 37 | 388 | 8.5 | 19.7 | 12.7 |

Table 2: Resiliency KPIs for FCFL Network Under Disruption

| Disruption Scenario | % Demand Served | Cost Increase | Avg. Distance Increase | Critical Failure Point |
|---|---|---|---|---|
| Baseline (No disruption) | 100% | 0% | 0% | N/A |
| Single Depot (#18) Closed | 100% | 18% | 24% | No |
| Regional (Depots #5 & #37) Closed | 85% | 52% | 41% | Yes (Capacity overload) |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for FLP Research

| Item (Software/Package) | Category | Function in Experiment |
|---|---|---|
| Gurobi / CPLEX | Solver | High-performance MIP solver for exact optimization. |
| PuLP / Pyomo | Modeling Language | Python libraries for formulating optimization models. |
| GeoPandas | Spatial Analysis | Processes geographic data (demand points, distances). |
| OSMnx | Network Analysis | Models real-world road networks for accurate c_{ij}. |
| Scikit-learn | Machine Learning | Used for demand clustering and data pre-processing. |
| Matplotlib / Plotly | Visualization | Creates maps and charts of results (depot networks). |

Model Optimization and Validation Workflow

Technical Support Center

FAQs & Troubleshooting Guides

1. Scenario Definition & Inputs

Q1: My scenario planning outcomes are too narrow. How can I ensure my scenarios capture a sufficiently wide range of futures? A: This indicates a lack of divergence in your scenario axes. Re-evaluate your Critical Uncertainty Matrix. The two most impactful and uncertain driving forces should form your axes, creating four quadrants. Label each quadrant as a distinct scenario (e.g., "High Regulation, Localized Production"). Ensure the forces are truly independent. Avoid clustering all "bad" events in one scenario and all "good" events in another; each scenario must be internally consistent and plausible.

Q2: How do I translate qualitative scenario narratives into quantitative inputs for the Monte Carlo model? A: Develop a parameter mapping table. For each scenario, define probability distributions for key model variables (e.g., supplier lead time, transportation cost multiplier, demand volatility). Example Mapping:

- Scenario A (Stable Growth): Demand ~Normal(μ=100, σ=10).

- Scenario B (Supply Shock): Lead Time ~Triangular(min=14, mode=21, max=60).

Assign a subjective probability weight to each scenario based on expert elicitation (e.g., A: 40%, B: 25%, etc.), with the weights across all scenarios summing to 100%. These weights can set the sampling frequency of each scenario or be used to create a mixture distribution.
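A sketch of the mixture approach follows: each iteration first draws a scenario in proportion to its weight, then samples that scenario's inputs. Only Scenarios A and B from the example are modeled, so their weights are renormalized. Scenario A's lead time borrows Uniform(7, 10) from the Scenario A column of Table 1 as an assumption.

```python
import numpy as np

rng = np.random.default_rng(7)

# Subjective scenario weights from expert elicitation. Only A and B from the
# example are modeled here, so the weights are renormalized to sum to 1.
weights = {"A": 0.40, "B": 0.25}
names = list(weights)
probs = np.array([weights[s] for s in names])
probs = probs / probs.sum()

def sample_lead_time(scenario, size):
    """Draw supplier lead times (days) under one scenario's parameter mapping."""
    if scenario == "B":  # Supply Shock: Triangular(min=14, mode=21, max=60)
        return rng.triangular(14, 21, 60, size)
    return rng.uniform(7, 10, size)  # assumed Scenario A lead time

# Mixture distribution: pick a scenario per iteration in proportion to its weight,
# then sample that scenario's lead time.
n_iter = 100_000
scenario_idx = rng.choice(len(names), size=n_iter, p=probs)
lead_time = np.empty(n_iter)
for k, name in enumerate(names):
    mask = scenario_idx == k
    lead_time[mask] = sample_lead_time(name, mask.sum())

print(f"P(A) = {np.mean(scenario_idx == 0):.3f}, mean lead time = {lead_time.mean():.1f} days")
```

Keeping `scenario_idx` alongside the outputs lets you analyze results both per scenario and as the weighted mixture.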

2. Monte Carlo Simulation Execution

Q3: My simulation run time is excessively long. What are the primary levers to optimize performance? A: Focus on the number of iterations and model complexity. Use a convergence test to determine the necessary number of iterations: start with 1,000 runs, calculate a key output metric (e.g., total network cost), and repeat with increasing run counts. Plot the metric's moving average; the estimate has converged when its change falls below a threshold (e.g., 0.1%). Use that iteration count. Also simplify the model where possible: use empirical distributions instead of complex functions, and pre-compute static variables.
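The convergence test can be automated. This sketch adds runs in batches and stops when the running (cumulative) mean changes by less than the tolerance between batches. That is a simpler stand-in for inspecting a moving-average plot. `toy_network_cost` is a hypothetical placeholder for one full simulation run, and the metric is assumed to be nonzero.

```python
import numpy as np

def iterations_to_converge(simulate_once, start=1_000, step=1_000, rel_tol=0.001, max_runs=100_000):
    """Add runs in batches until the running mean of the output metric
    changes by less than rel_tol (e.g., 0.1%) between batches.

    Returns (runs used, running mean).
    """
    results = [simulate_once() for _ in range(start)]
    prev_mean = float(np.mean(results))
    while len(results) < max_runs:
        results.extend(simulate_once() for _ in range(step))
        mean = float(np.mean(results))
        if abs(mean - prev_mean) / abs(prev_mean) < rel_tol:
            return len(results), mean
        prev_mean = mean
    return len(results), float(np.mean(results))

rng = np.random.default_rng(1)

def toy_network_cost():  # hypothetical stand-in for one full simulation run
    return rng.normal(1_000_000, 50_000)

runs, mean_cost = iterations_to_converge(toy_network_cost)
print(runs, round(mean_cost))
```

For tail metrics (e.g., a 95th-percentile cost), apply the same loop to that statistic instead of the mean; tails usually need many more runs.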

Q4: I am getting unrealistic outliers in my simulation results (e.g., infinite costs). What is the likely cause? A: This is typically a "simulation crash" due to unconstrained variables or undefined mathematical operations. Check for:

- Division by Zero: Ensure denominator variables (e.g., throughput capacity) cannot be zero or negative. Use a MAX(denominator, epsilon) function.

- Unmet Demand: If your model allows 100% stockouts without a "penalty" or emergency procurement logic, costs may become undefined. Implement a fail-safe routing or punitive cost clause.

- Distribution Tails: Review the bounds of your input distributions (e.g., a Triangular distribution with min=0 can still sample near-zero values, causing issues). Apply sensible minimums.

3. Output Analysis & Interpretation

Q5: How do I effectively communicate the results from 10,000+ simulation runs to stakeholders? A: Move beyond the mean. Present key outputs using:

- Cumulative Distribution Functions (CDF): To show probability of achieving a target (e.g., "95% chance costs will be below $X").

- Tornado Charts: To rank which input variables drive the largest swings in the output (typically built from one-at-a-time input variations).

- Scenario Overlay Charts: Plot the CDFs of the total cost for each defined scenario on one graph to compare their risk profiles directly.

Q6: My sensitivity analysis shows that too many input variables are significant. How can I prioritize factors for the resiliency model? A: Conduct a two-stage analysis. First, use a global sensitivity analysis method (e.g., Sobol indices) which accounts for interaction effects. Rank variables by their total-order index. Focus on the top 3-5. For these, perform a single-variable sensitivity using spider plots to understand the direction and shape of their effect. This combination identifies the most critical levers for depot location resilience.
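To illustrate what a total-order index measures, here is a plain NumPy sketch of the Jansen estimator. SALib's Sobol analysis computes the same indices with quasi-random (Sobol-sequence) sampling and confidence intervals. This version assumes independent inputs uniform on [0, 1] and uses pseudo-random sampling; `toy_cost` is a hypothetical model with known analytic indices.

```python
import numpy as np

def sobol_total_order(model, n_vars, n_samples=20_000, seed=0):
    """Total-order Sobol indices via the Jansen estimator.

    Inputs are assumed independent and uniform on [0, 1]; rescale inside
    `model` for other ranges.
    """
    rng = np.random.default_rng(seed)
    A = rng.random((n_samples, n_vars))
    B = rng.random((n_samples, n_vars))
    f_A = model(A)
    var_y = np.var(np.concatenate([f_A, model(B)]))
    totals = np.empty(n_vars)
    for i in range(n_vars):
        AB_i = A.copy()
        AB_i[:, i] = B[:, i]  # resample only input i
        totals[i] = np.mean((f_A - model(AB_i)) ** 2) / (2.0 * var_y)
    return totals

# Hypothetical test model: cost driven mostly by x0, weakly by x1, not at all by x2
def toy_cost(X):
    return 4.0 * X[:, 0] + 1.0 * X[:, 1] + 0.0 * X[:, 2]

ST = sobol_total_order(toy_cost, n_vars=3)
print(ST.round(3))  # analytic values: 16/17, 1/17, 0
```

Inputs whose total-order index is near zero can be fixed at nominal values, which is how the top 3-5 factors are isolated.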

Experimental Protocols & Data

Protocol: Integrated Scenario-Monte Carlo Workflow for Depot Optimization

- Scenario Framing: Conduct expert workshops to identify 6-8 critical uncertainties and plot them on impact/uncertainty matrices. Select the two most impactful and uncertain, mutually independent uncertainties as the orthogonal axes of a 2x2 scenario matrix.

- Parameter Quantification: For each scenario, define probability distributions for all stochastic inputs in the depot location-allocation model. See Table 1.

- Model Instrumentation: Insert distribution sampling calls into the deterministic model. Replace fixed inputs (e.g., demand = 100) with sampling functions (e.g., demand = Normal(100, 20)). Implement random seed control.

- Convergence Testing: Run the simulation in increments (1k, 5k, 10k, 15k runs). Track the mean and standard deviation of the primary objective function. Proceed with the N at which the relative change in the 100-run moving average is <0.5%.

- Execution: Run the simulation for N iterations per scenario. Record all decision variables (depot openings, flows) and output metrics (cost, service level) per iteration.

- Analysis: Aggregate results. Build CDFs for total cost. Perform global sensitivity analysis on the combined scenario runs to identify cross-scenario critical factors.

Table 1: Example Stochastic Input Distributions by Scenario

| Input Parameter | Scenario A: Stable Global | Scenario B: Regional Tensions | Scenario C: High Volatility Demand | Distribution Type |
|---|---|---|---|---|
| Facility Fixed Cost | Normal(μ=500k, σ=25k) | +15% Cost Multiplier | Uniform(450k, 600k) | Parametric/Empirical |
| Transport Cost per km | Fixed(1.2) | Triangular(1.3, 1.5, 2.0) | Normal(μ=1.3, σ=0.2) | Parametric |
| Supplier Lead Time (days) | Uniform(7, 10) | Pert(min=14, mode=21, max=45) | Exponential(mean=10) | Empirical |
| Demand Mean (units) | 100 (Fixed) | 100 (Fixed) | Normal(μ=100, σ=40) | Parametric |
| Disruption Probability | Bernoulli(p=0.02) | Bernoulli(p=0.15) | Bernoulli(p=0.10) | Discrete |
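The Model Instrumentation step can be sketched as a per-scenario sampler for the inputs in Table 1, with explicit seed control. Two interpretations are assumptions: Scenario B's "+15% Cost Multiplier" is applied to Scenario A's fixed-cost distribution, and Scenario C's Normal demand is truncated at zero (following the "sensible minimums" advice above). PERT is implemented as a scaled Beta distribution.

```python
import numpy as np

def pert(rng, low, mode, high, size):
    """Beta-PERT draws: low + (high - low) * Beta(alpha, beta)."""
    alpha = 1 + 4 * (mode - low) / (high - low)
    beta = 1 + 4 * (high - mode) / (high - low)
    return low + (high - low) * rng.beta(alpha, beta, size)

def sample_inputs(scenario, n, seed=0):
    """Draw n iterations of the Table 1 stochastic inputs for one scenario."""
    rng = np.random.default_rng(seed)  # explicit seed for reproducible runs
    if scenario == "A":  # Stable Global
        fixed_cost = rng.normal(500_000, 25_000, n)
        transport = np.full(n, 1.2)
        lead_time = rng.uniform(7, 10, n)
        demand = np.full(n, 100.0)
        p_disrupt = 0.02
    elif scenario == "B":  # Regional Tensions
        fixed_cost = 1.15 * rng.normal(500_000, 25_000, n)  # assumption: +15% on Scenario A's distribution
        transport = rng.triangular(1.3, 1.5, 2.0, n)
        lead_time = pert(rng, 14, 21, 45, n)
        demand = np.full(n, 100.0)
        p_disrupt = 0.15
    elif scenario == "C":  # High Volatility Demand
        fixed_cost = rng.uniform(450_000, 600_000, n)
        transport = rng.normal(1.3, 0.2, n)
        lead_time = rng.exponential(10, n)  # mean = scale = 10 days
        demand = np.clip(rng.normal(100, 40, n), 0, None)  # assumption: truncate negative demand at 0
        p_disrupt = 0.10
    else:
        raise ValueError(f"unknown scenario {scenario!r}")
    return {
        "fixed_cost": fixed_cost,
        "transport_cost_per_km": transport,
        "lead_time_days": lead_time,
        "demand": demand,
        "disrupted": rng.random(n) < p_disrupt,  # Bernoulli(p) per iteration
    }

draws = sample_inputs("B", n=10_000, seed=42)
print({k: float(np.mean(v)) for k, v in draws.items()})
```

Each dictionary entry is one column of iteration inputs that the deterministic location-allocation model consumes row by row.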

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Stochastic Supply Chain Research |
|---|---|
| Python (PyMC, SALib, NumPy) | Core programming environment for coding simulation logic, probability sampling, and advanced sensitivity analysis. |
| AnyLogistix or Simul8 | Commercial supply chain simulation software with built-in Monte Carlo and scenario management tools. Useful for validation. |
| Pandas & Matplotlib/Seaborn | Python libraries for managing large result datasets and creating publication-quality charts (CDFs, tornado charts). |
| Sobol Sequence Generators | A quasi-random number generator for efficient sampling of high-dimensional input spaces, improving convergence. |
| Global Sensitivity Analysis (GSA) Library (SALib) | Python library to calculate Sobol, Morris, and other sensitivity indices, quantifying input factor importance. |
| Jupyter Notebooks | Interactive environment for documenting the end-to-end workflow, integrating code, visualizations, and narrative. |

Visualizations

Stochastic Analysis Workflow for Depot Planning

Monte Carlo Input-Output Model Flow

Leveraging GIS and Geospatial Analysis for Real-World Logistics Constraints

Technical Support Center: Troubleshooting & FAQs

This support center addresses common issues encountered when applying GIS and geospatial analysis to optimize pre-processing depot locations for supply chain resiliency in pharmaceutical research and development.

Frequently Asked Questions (FAQs)

Q1: My network analysis for optimal depot placement is returning unrealistic routes that traverse impassable terrain or protected areas. How do I correct this? A: This is typically caused by an incomplete or low-resolution impedance surface. The cost raster must incorporate all real-world constraints.

- Solution: Rebuild your cost raster using a weighted overlay of multiple constraint layers. Ensure you include:

- Road Networks: Classify by type (highway, local) and assign appropriate speed/travel time values.

- Land Use/Land Cover (LULC): Assign prohibitively high costs to protected areas, water bodies, and dense forests.

- Slope/Derived Terrain: Assign higher costs to steeper slopes using a graduated scale.

- Legal Boundaries: Incorporate zoning regulations that restrict industrial logistics facilities.
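Below is a toy NumPy version of this fix. It builds an impedance surface from a few constraint layers, makes protected cells impassable, and runs a least-cost path (Dijkstra over 4-connected cells) to confirm that routes avoid barriers. Layer values, the slope weight, and the grid are hypothetical. In ArcGIS Pro or QGIS, the equivalent steps are a raster calculator expression followed by cost-distance tools.

```python
import heapq
import numpy as np

def build_cost_raster(road_time, slope_deg, protected, slope_weight=0.05):
    """Per-cell travel cost: base road/terrain time scaled up on steep slopes.

    Protected cells (True) get infinite cost so no path can cross them.
    """
    cost = road_time * (1.0 + slope_weight * slope_deg)
    return np.where(protected, np.inf, cost)

def least_cost_path(cost, start, goal):
    """Dijkstra on a 4-connected grid; entering a cell costs that cell's value."""
    rows, cols = cost.shape
    best = {start: 0.0}
    prev = {}
    heap = [(0.0, start)]
    while heap:
        g, cell = heapq.heappop(heap)
        if cell == goal:
            break
        if g > best.get(cell, np.inf):
            continue  # stale heap entry
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < rows and 0 <= nc < cols and np.isfinite(cost[nr, nc]):
                ng = g + cost[nr, nc]
                if ng < best.get((nr, nc), np.inf):
                    best[(nr, nc)] = ng
                    prev[(nr, nc)] = cell
                    heapq.heappush(heap, (ng, (nr, nc)))
    if goal not in best:
        return None, np.inf
    path = [goal]
    while path[-1] != start:
        path.append(prev[path[-1]])
    return path[::-1], best[goal]

road_time = np.ones((5, 5))  # hypothetical uniform travel time per cell
slope = np.zeros((5, 5))
protected = np.zeros((5, 5), dtype=bool)
protected[0:4, 2] = True  # protected area with a single gap in the bottom row
cost = build_cost_raster(road_time, slope, protected)
path, total = least_cost_path(cost, (0, 0), (0, 4))
print(path, total)
```

If routes still cross protected land after this step, the protected layer is usually misaligned with the cost raster (different extent, cell size, or projection).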

Q2: When running a Location-Allocation model (e.g., Minimize Facilities), my results show depots clustered in one geographic region, ignoring distant demand points. What is the issue?

A: This often stems from an incorrect or unbounded Capacity value for your candidate depot facilities or an ill-suited Problem Type.

- Solution:

- Verify that each candidate depot has a realistic Capacity value (e.g., total throughput in kg/week) based on your experimental setup. Note that in ArcGIS Network Analyst the facility Capacity field is honored only by the Maximize Capacitated Coverage problem type.

- Ensure the demand points have an accurate Weight (e.g., required shipments per week).

- If using "Minimize Facilities," set a Cutoff impedance (maximum travel time) to prevent allocation over impractical distances. Consider the "Maximize Coverage" or "Maximize Capacitated Coverage" problem type for resiliency-focused scenarios.

Q3: My spatial interpolation (e.g., Kriging) of supplier risk scores is producing a "bullseye" artifact around sparse data points, which doesn't reflect realistic spatial continuity. A: This indicates poor semivariogram model selection and validation.

- Solution: Follow this experimental protocol:

- Calculate Empirical Semivariogram: Use your sampled point data.

- Model Fitting: Test different theoretical models (Spherical, Exponential, Gaussian) against the empirical data.

- Cross-Validation: Perform k-fold cross-validation and compare the key output metrics summarized in the table below.

Table 1: Semivariogram Model Cross-Validation Metrics for Risk Surface Interpolation

| Model Type | Mean Error (ME) | Root-Mean-Square Error (RMSE) | Average Standard Error (ASE) | Mean Standardized Error (MSE) |
|---|---|---|---|---|
| Spherical | ~0 | [Calculated Value] | [Calculated Value] | ~0 |
| Exponential | ~0 | [Calculated Value] | [Calculated Value] | ~0 |
| Gaussian | ~0 | [Calculated Value] | [Calculated Value] | ~0 |

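Optimal Model: Select the model with the smallest RMSE, an ASE close to the RMSE, and a mean standardized error nearest zero. A root-mean-square standardized error near 1 further indicates that the prediction uncertainty is estimated correctly.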
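The sketch below covers the two computational pieces of this protocol: binning the empirical semivariogram, γ(h) = mean of ½(zᵢ − zⱼ)² over point pairs at separation h, and summarizing cross-validation output into the Table 1 columns plus RMSSE. It assumes projected coordinates. The ASE convention (square root of the mean prediction variance) follows common GIS practice but should be checked against your software. The kriging predictions themselves would come from your GIS or geostatistics package.

```python
import numpy as np

def empirical_semivariogram(xy, z, bin_edges):
    """gamma(h) per distance bin: mean of 0.5 * (z_i - z_j)^2 over pairs in the bin."""
    xy = np.asarray(xy, dtype=float)
    z = np.asarray(z, dtype=float)
    i, j = np.triu_indices(len(z), k=1)  # each unordered pair once
    h = np.linalg.norm(xy[i] - xy[j], axis=1)
    semi = 0.5 * (z[i] - z[j]) ** 2
    which = np.digitize(h, bin_edges) - 1
    gamma = np.full(len(bin_edges) - 1, np.nan)  # NaN marks empty bins
    for b in range(len(bin_edges) - 1):
        in_bin = which == b
        if in_bin.any():
            gamma[b] = semi[in_bin].mean()
    return gamma

def cv_metrics(observed, predicted, std_error):
    """Cross-validation summaries for comparing semivariogram models."""
    err = np.asarray(predicted, dtype=float) - np.asarray(observed, dtype=float)
    se = np.asarray(std_error, dtype=float)
    return {
        "ME": err.mean(),
        "RMSE": np.sqrt(np.mean(err ** 2)),
        "ASE": np.sqrt(np.mean(se ** 2)),
        "MSE (mean standardized)": np.mean(err / se),
        "RMSSE": np.sqrt(np.mean((err / se) ** 2)),
    }

gamma = empirical_semivariogram([(0, 0), (1, 0), (2, 0), (3, 0)], [1, 2, 3, 4], [0, 1.5, 2.5, 3.5])
metrics = cv_metrics([1, 2], [1.5, 1.5], [0.5, 0.5])
print(gamma, metrics)
```

A "bullseye" fit often shows up here as an RMSSE far from 1, meaning the fitted model's standard errors do not match the actual cross-validation errors.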

Q4: After integrating real-time traffic data via API into my network dataset, the solve times for my routing models have become prohibitively slow for iterative thesis experimentation. A: You are likely calling the live API during every solve iteration. This is computationally expensive.

- Solution: Implement a static snapshotting workflow.

- Data Caching: Download and cache traffic data for key time windows (e.g., peak AM/PM, off-peak) relevant to your logistics operations.

- Create Time-Sliced Network Datasets: Build separate network datasets, each incorporating the cached traffic impedance for a specific time window.

- Use ModelBuilder or Scripting: Automate the selection of the appropriate time-sliced network based on your analysis's departure time parameter.

Experimental Protocol: Multi-Criteria Decision Analysis (MCDA) for Depot Suitability

Objective: To generate a candidate suitability surface for resilient pre-processing depot locations by integrating environmental, economic, and logistical constraints.

Methodology:

- Criteria Selection & Data Layer Preparation: Standardize all raster layers to identical extents, cell size, and projection.

- Reclassification: Reclassify each layer to a consistent suitability scale (e.g., 1-9, where 9 is most suitable).

- Weight Assignment: Apply weights using an Analytic Hierarchy Process (AHP) pairwise comparison matrix based on thesis resiliency goals (e.g., Proximity to Major Highways: 0.3, Distance from Flood Zones: 0.25, Land Cost: 0.2, Proximity to Supplier Clusters: 0.25).

- Weighted Overlay: Execute the weighted sum: Suitability = (Highway_Prox * 0.3) + (Flood_Dist * 0.25) + (Land_Cost * 0.2) + (Supplier_Prox * 0.25).

- Validation: Overlay existing high-performing depot locations (if available) to visually and statistically assess their correlation with high-suitability zones.
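The weighted-overlay step reduces to an element-wise weighted sum once all layers share a grid. This NumPy sketch uses the protocol's weights. The 2×2 layers are hypothetical and already reclassified to the 1-9 scale (so cheap land scores high), and NoData is represented as NaN, which propagates to the output cell.

```python
import numpy as np

# Weights from the protocol; they must sum to 1
WEIGHTS = {"highway_prox": 0.30, "flood_dist": 0.25, "land_cost": 0.20, "supplier_prox": 0.25}

def weighted_overlay(layers, weights=WEIGHTS):
    """Weighted sum of reclassified (1-9, 9 = most suitable) rasters on a common grid."""
    if not np.isclose(sum(weights.values()), 1.0):
        raise ValueError("weights must sum to 1")
    shapes = {np.shape(layers[name]) for name in weights}
    if len(shapes) != 1:
        raise ValueError("layers must share extent and cell size")
    return sum(w * np.asarray(layers[name], dtype=float) for name, w in weights.items())

layers = {  # hypothetical reclassified rasters
    "highway_prox": np.array([[9, 7], [5, 1]]),
    "flood_dist": np.array([[9, 9], [3, np.nan]]),  # NaN = NoData
    "land_cost": np.array([[9, 4], [6, 2]]),  # cheap land already mapped to high scores
    "supplier_prox": np.array([[9, 8], [2, 3]]),
}
suitability = weighted_overlay(layers)
print(suitability)
```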

Title: MCDA Suitability Analysis Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Geospatial Tools & Data for Logistics Constraint Analysis

| Item / Software | Function in Experiment | Typical Application in Thesis Research |
|---|---|---|
| ArcGIS Pro / QGIS | Core spatial data management, visualization, and analysis platform. | Conducting network analysis, weighted overlays, and spatial statistics. |
| Network Dataset | A topologically correct model of transportation networks (roads) with attributes like speed and direction. | Solving Vehicle Routing Problems (VRP) and Location-Allocation models for depot placement. |
| Cost Raster (Impedance Surface) | A raster layer where each cell's value represents the cost of travel across it. | Calculating least-cost paths for shipments across terrain, avoiding high-risk zones. |
| AHP (Analytic Hierarchy Process) | A structured technique for organizing and analyzing complex decisions based on mathematics and psychology. | Systematically determining the weight of factors (cost, proximity, risk) in suitability models. |
| Python (geopandas, arcpy) | Scripting and automation of repetitive geospatial workflows and data processing. | Automating the batch processing of multiple scenario analyses (e.g., "what-if" disruptions). |
| Live Traffic & Weather APIs | Sources of dynamic constraint data that impact travel time and route viability. | Incorporating real-world volatility into resiliency stress-testing models. |

Multi-Criteria Decision Analysis (MCDA) Weighing Cost, Speed, Risk, and Compliance

Technical Support Center

Troubleshooting Guides & FAQs

Q1: In our MCDA model for depot location, the weighting for 'Compliance' seems to disproportionately skew results away from cost-effective options. How can we adjust the model to better balance these criteria?

A: This is a common issue when using static weight assignment. Implement a sensitivity analysis protocol. First, run your MCDA (e.g., using TOPSIS or AHP) with your initial weights. Then systematically vary the Compliance weight by ±20% in 5% increments, rescaling the other weights proportionally so that all weights still sum to 1. Observe any rank reversal among the location alternatives. The goal is to find the weight range over which the top 3 alternative depots remain stable, indicating a robust solution. Use the table below to record the resulting stable range for each criterion.
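The sweep can be scripted with TOPSIS. This sketch varies one weight by relative steps, rescales the others proportionally so the weights still sum to 1, and records the top-3 set at each step. The decision matrix is hypothetical, and the initial weights are taken from Table 1.

```python
import numpy as np

def topsis(matrix, weights, benefit):
    """Closeness coefficient in [0, 1] per alternative (higher = better).

    matrix: (alternatives, criteria); benefit[k] is True if higher is better.
    """
    X = np.asarray(matrix, dtype=float)
    V = X / np.linalg.norm(X, axis=0) * np.asarray(weights, dtype=float)  # vector-normalize, then weight
    benefit = np.asarray(benefit, dtype=bool)
    ideal = np.where(benefit, V.max(axis=0), V.min(axis=0))
    anti = np.where(benefit, V.min(axis=0), V.max(axis=0))
    d_plus = np.linalg.norm(V - ideal, axis=1)
    d_minus = np.linalg.norm(V - anti, axis=1)
    return d_minus / (d_plus + d_minus)

def sweep_weight(matrix, weights, benefit, k, deltas):
    """Top-3 alternatives when weight k is scaled by (1 + delta) and the
    remaining weights are rescaled proportionally to keep the sum at 1."""
    w0 = np.asarray(weights, dtype=float)
    out = {}
    for delta in deltas:
        w = w0.copy()
        w[k] = w0[k] * (1 + delta)
        others = np.arange(len(w)) != k
        w[others] = w0[others] * (1 - w[k]) / w0[others].sum()
        out[float(delta)] = tuple(np.argsort(-topsis(matrix, w, benefit))[:3])
    return out

# Hypothetical matrix: cost (USD k), speed score, risk (0-1), compliance (0-100)
matrix = [[100, 10, 0.2, 95], [120, 8, 0.5, 80], [90, 12, 0.1, 98], [150, 5, 0.9, 60]]
benefit = [False, True, False, True]
weights = [0.35, 0.25, 0.20, 0.20]  # initial weights from Table 1
deltas = np.round(np.arange(-0.20, 0.201, 0.05), 2)
top3 = sweep_weight(matrix, weights, benefit, k=3, deltas=deltas)  # k=3: Compliance
print(topsis(matrix, weights, benefit).round(3))
print(top3)
```

The robustness range for a criterion is the span of deltas over which the top-3 tuple (or at least its membership) does not change.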

Q2: When quantifying 'Speed' for our resiliency model, should we use theoretical throughput (optimal conditions) or empirical data from disruptions?

A: Always use empirical data where available. Design a discrete-event simulation experiment. Protocol: 1) Model your supply chain network with candidate depots in a tool like AnyLogic or Simio. 2) Input historical order and shipment data. 3) Introduce a 'disruption event' node (e.g., port closure, supplier failure) with a probability derived from your risk assessment. 4) Run 1000 simulations per depot configuration. 5) Measure the actual 'Speed' as the 95th percentile of order fulfillment time during disruption scenarios. This provides a resilient speed metric.

Q3: Our risk data for geopolitical factors is qualitative (High/Medium/Low) but our MCDA requires quantitative inputs. What is the standard conversion method?

A: Use a pairwise comparison survey with your research team to derive quantitative scores. Protocol: 1) List all risk factors (e.g., political instability, regulatory change, natural disaster frequency). 2) Create a matrix comparing each factor against every other. 3) Have each team member score each pair on a 1-9 scale (1 = equally important, 9 = extremely more important), entering reciprocal values in the mirrored cells. 4) Aggregate the individual matrices element-wise using the geometric mean, which, unlike the arithmetic mean, preserves the reciprocal property of the matrix. 5) Compute the principal eigenvector of the aggregated matrix to produce normalized priority weights. See the sample conversion below.

Q4: How do we validate that our chosen MCDA method (e.g., Weighted Sum Model vs. PROMETHEE) is appropriate for the depot location problem?

A: Perform a method correlation validation. Protocol: 1) Select 4-5 MCDA methods (WSM, WPM, TOPSIS, ELECTRE, PROMETHEE). 2) Apply each method to your dataset using the same weight set. 3) Rank the depot location alternatives from each method. 4) Calculate Spearman's rank correlation coefficient (ρ) between the method outputs. 5) High correlation (ρ > 0.7) between most methods suggests your problem structure is well-represented. Low correlation indicates you must scrutinize criteria independence and scale effects.
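Step 4 needs only the rank vectors. Below is a standard-library sketch using the no-ties formula ρ = 1 − 6Σd² / (n(n² − 1)). For tied scores, `scipy.stats.spearmanr` handles ties by average ranking. The method scores are hypothetical.

```python
from itertools import combinations

def ranks(scores):
    """Rank positions (1 = best) for higher-is-better scores; assumes no ties."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    r = [0] * len(scores)
    for position, i in enumerate(order, start=1):
        r[i] = position
    return r

def spearman_rho(rank_a, rank_b):
    """rho = 1 - 6 * sum(d^2) / (n (n^2 - 1)) for untied rankings."""
    n = len(rank_a)
    d2 = sum((a - b) ** 2 for a, b in zip(rank_a, rank_b))
    return 1 - 6 * d2 / (n * (n ** 2 - 1))

method_scores = {  # hypothetical scores for five candidate depots
    "WSM": [0.62, 0.81, 0.55, 0.77, 0.40],
    "WPM": [0.60, 0.79, 0.50, 0.78, 0.38],
    "TOPSIS": [0.58, 0.70, 0.61, 0.74, 0.35],
}
method_ranks = {m: ranks(s) for m, s in method_scores.items()}
for a, b in combinations(method_ranks, 2):
    print(f"{a} vs {b}: rho = {spearman_rho(method_ranks[a], method_ranks[b]):.2f}")
```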

Data Tables

Table 1: Sample Criteria Weights & Sensitivity Ranges for Depot Location

| Criteria | Initial Weight | Robustness Range (Min) | Robustness Range (Max) | Measurement Unit |
|---|---|---|---|---|
| Cost (CapEx & OpEx) | 0.35 | 0.28 | 0.42 | USD, NPV over 5 years |
| Speed (Fulfillment Time) | 0.25 | 0.20 | 0.30 | Hours (95th %ile) |
| Risk (Disruption Score) | 0.20 | 0.16 | 0.24 | Index (0-1, 1=High Risk) |
| Compliance (Regulatory) | 0.20 | 0.15 | 0.25 | Audit Score (0-100) |

Table 2: Simulated Performance of Candidate Pre-processing Depots

| Depot Location ID | Avg. Cost Score (Lower is better) | Avg. Speed Score (Higher is better) | Avg. Risk Score (Lower is better) | Avg. Compliance Score (Higher is better) | Composite MCDA Score |
|---|---|---|---|---|---|
| DPT-ALPHA | 0.85 | 0.72 | 0.65 | 0.95 | 0.79 |
| DPT-BRAVO | 0.95 | 0.88 | 0.50 | 0.80 | 0.81 |
| DPT-CHARLIE | 0.70 | 0.65 | 0.80 | 0.85 | 0.73 |
| DPT-DELTA | 0.90 | 0.95 | 0.70 | 0.90 | 0.87 |

Experimental Protocols

Protocol: Calculating a Composite Risk Index for a Geographic Region

- Data Collection: Gather 10 years of historical data for: (a) number of natural disasters (NA); (b) Political Stability Index (PS) from the World Bank; (c) Transparency International Corruption Perceptions Index (CPI); (d) frequency of regulatory changes affecting logistics (RC).

- Normalization: Min-max normalize each dataset to a 0-1 scale, where 1 indicates highest risk. Invert PS and CPI during normalization, since higher values on those indices indicate greater stability and less corruption.

- Weighting: Assign weights using AHP based on expert survey: NA=0.3, PS=0.25, CPI=0.25, RC=0.2.

- Aggregation: Calculate the composite index for region $i$:

$$\text{Risk}_i = 0.30\,\text{NA}_i^{\text{norm}} + 0.25\,\text{PS}_i^{\text{norm}} + 0.25\,\text{CPI}_i^{\text{norm}} + 0.20\,\text{RC}_i^{\text{norm}}$$

- Validation: Cross-validate by checking the index against historical supply disruption days in each region; the Pearson correlation should be > 0.6.
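A NumPy sketch of normalization, aggregation, and validation follows. PS and CPI are inverted so that 1 always means highest risk. The four regions, their raw values, and the disruption-day counts are hypothetical, and min-max scaling assumes each factor varies across regions (otherwise the denominator is zero).

```python
import numpy as np

WEIGHTS = {"NA": 0.30, "PS": 0.25, "CPI": 0.25, "RC": 0.20}
HIGHER_IS_SAFER = {"PS", "CPI"}  # inverted so that 1 = highest risk

def min_max_risk(values, invert):
    """Min-max scale to [0, 1]; invert when higher raw values mean lower risk."""
    v = np.asarray(values, dtype=float)
    scaled = (v - v.min()) / (v.max() - v.min())
    return 1.0 - scaled if invert else scaled

def composite_risk(raw):
    """raw: dict factor -> per-region values (same region order for every factor)."""
    return sum(w * min_max_risk(raw[f], f in HIGHER_IS_SAFER) for f, w in WEIGHTS.items())

raw = {  # hypothetical 10-year values for four candidate regions
    "NA": [12, 3, 6, 1],  # natural disasters per year
    "PS": [-1.5, 0.8, 0.2, 1.2],  # political stability (higher = more stable)
    "CPI": [28, 70, 55, 85],  # corruption perceptions (higher = cleaner)
    "RC": [9, 2, 5, 1],  # logistics-relevant regulatory changes per year
}
risk = composite_risk(raw)
disruption_days = np.array([40, 8, 15, 3])  # hypothetical historical disruption days
r = np.corrcoef(risk, disruption_days)[0, 1]
print(risk.round(3), f"Pearson r = {r:.2f}", "PASS" if r > 0.6 else "REVIEW")
```

Min-max scaling is relative to the regions in the sample, so adding a new candidate region can shift every score; fix the scaling bounds if scores must stay comparable over time.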

Protocol: Eliciting and Validating Criteria Weights from Subject Matter Experts

- Structured Survey: Develop a survey using 9-point Saaty scale for pairwise comparisons of Cost, Speed, Risk, Compliance.

- Expert Panel: Recruit a panel of ≥5 experts from supply chain, quality/compliance, and logistics.

- Consistency Check: Calculate Consistency Ratio (CR) for each respondent's matrix. Discard responses with CR > 0.1.

- Aggregation: Aggregate valid individual matrices using geometric mean to form a group comparison matrix.

- Derive Weights: Compute the principal eigenvector of the group matrix to obtain final criterion weights.

- Feedback Loop: Present results to panel in a second round (Delphi method) to refine.
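The consistency check, aggregation, and weight derivation can be sketched in NumPy as shown below. The random index values are Saaty's published table. Element-wise geometric-mean aggregation keeps the group matrix reciprocal. The two expert matrices are hypothetical: one perfectly consistent, one with circular preferences that should fail the CR ≤ 0.1 screen.

```python
import numpy as np

# Saaty's random consistency index by matrix size
RANDOM_INDEX = {1: 0.0, 2: 0.0, 3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32, 8: 1.41, 9: 1.45, 10: 1.49}

def ahp_weights(matrix):
    """Principal-eigenvector weights and consistency ratio (CR) for n >= 2."""
    A = np.asarray(matrix, dtype=float)
    n = A.shape[0]
    eigvals, eigvecs = np.linalg.eig(A)
    k = np.argmax(eigvals.real)  # Perron (principal) eigenvalue
    w = np.abs(eigvecs[:, k].real)
    w /= w.sum()
    ci = (eigvals[k].real - n) / (n - 1)
    cr = ci / RANDOM_INDEX[n] if RANDOM_INDEX[n] > 0 else 0.0
    return w, cr

def aggregate_experts(matrices, max_cr=0.10):
    """Discard respondents with CR > max_cr, then take the element-wise geometric mean."""
    valid = [np.asarray(m, dtype=float) for m in matrices if ahp_weights(m)[1] <= max_cr]
    if not valid:
        raise ValueError("no respondent passed the consistency check")
    return np.exp(np.mean(np.log(valid), axis=0))

# Criteria order: Cost, Speed, Risk, Compliance (hypothetical responses)
consistent = [[1, 2, 2, 2], [1/2, 1, 1, 1], [1/2, 1, 1, 1], [1/2, 1, 1, 1]]
circular = [[1, 9, 1/9, 1], [1/9, 1, 9, 1], [9, 1/9, 1, 1], [1, 1, 1, 1]]
group = aggregate_experts([consistent, circular])
weights, cr = ahp_weights(group)
print(weights.round(3), round(cr, 3))
```

In the Delphi round, the derived weights and each discarded respondent's CR can be shown back to the panel.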

Diagrams

MCDA Workflow for Depot Selection

Interdependencies Among Decision Criteria

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in MCDA for Supply Chain Resiliency |
|---|---|
| MCDA Software (e.g., Decision Lens, Expert Choice, R 'MCDM' package) | Provides algorithmic frameworks (AHP, TOPSIS, PROMETHEE) to structure the decision problem, calculate weights, and rank alternatives. |
| Discrete-Event Simulation Platform (e.g., AnyLogic, Simio, FlexSim) | Models dynamic supply chain behavior under disruption to generate empirical data for 'Speed' and 'Risk' criteria. |
| Geospatial Risk Database (e.g., Verisk Maplecroft, World Bank Indicators) | Provides quantifiable, location-specific data for political, economic, environmental, and regulatory risk factors. |
| Expert Elicitation Survey Platform (e.g., Qualtrics, SurveyMonkey) | Facilitates structured pairwise comparison surveys that turn subjective expert judgment into consistent, quantitative criterion weights. |
| Sensitivity Analysis Toolkit (e.g., R 'sensitivity' package, Palisade @RISK) | Performs Monte Carlo simulation on weight inputs to test the robustness and stability of the MCDA ranking results. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQs & Troubleshooting for Cryogenic Logistics & Pre-Processing Experiments

Q1: During cell viability assessment post-thaw from a candidate depot's storage unit, we observe a >20% drop compared to baseline. What are the primary troubleshooting steps? A: A significant viability drop post-thaw typically indicates issues with the cold chain or thawing protocol.

- Verify Temperature Logs: Check continuous monitoring data from the depot and during transport for any excursions outside the validated range (typically -150°C to -190°C for liquid nitrogen vapor phase).

- Audit Thawing Procedure: Ensure the water bath is calibrated to 37°C ± 1°C and that thawing is completed within the specified window (e.g., <2 minutes). Rapid and uniform thawing is critical.

- Inspect Cryopreservation Media: Confirm the lot of DMSO used is within its expiry and has been validated for the specific cell type. Consider testing a fresh aliquot.

- Assess Fill Volume: Inconsistent cryobag or vial fill volumes can alter freezing/thawing kinetics. Standardize to the validated volume.

Q2: Our simulation for depot location optimization consistently fails to converge on a solution that meets both cost and resilience KPIs. How can we adjust the model parameters? A: This is often due to conflicting constraints or an under-defined resilience metric.

- Parameterize Resilience: Instead of a binary "pass/fail," quantify resilience as a score, e.g., Network Resilience Score = (Number of node pairs with ≥2 alternative paths within 48 h) / (Total node pairs). See Table 1 for sample inputs.

- Relax Initial Constraints: Temporarily remove the cost ceiling constraint and run the model to see the theoretical resilience-optimal network. Then iteratively re-introduce cost constraints.

- Validate Input Data: Ensure the "failure probability" you have assigned to each potential node (e.g., from geopolitical risk, natural disaster data) is current and sourced reliably.
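A path-counting version of the resilience score can be sketched as below. It follows the Table 2 footnote: a node pair counts as resilient when it has at least two viable routes within the threshold T. Several choices here are assumptions. Routes are distinct simple paths in a directed graph and may share edges; a stricter edge- or node-disjoint definition may suit some networks better. The caller decides which node pairs (for example, depot-to-demand pairs) to evaluate. The depth-first enumeration is exponential in the worst case, so this is suited only to small candidate networks.

```python
def count_routes_within(graph, source, target, max_hours, limit=2):
    """Count simple paths source -> target with total time <= max_hours,
    stopping once `limit` paths are found. graph: {node: {neighbor: hours}}."""
    found = 0
    stack = [(source, 0.0, {source})]
    while stack:
        node, elapsed, visited = stack.pop()
        if node == target:
            found += 1
            if found >= limit:
                return found
            continue
        for nbr, hours in graph.get(node, {}).items():
            t = elapsed + hours
            # Prune revisits (keeps paths simple) and routes already over the limit.
            if nbr not in visited and t <= max_hours:
                stack.append((nbr, t, visited | {nbr}))
    return found

def network_resilience_score(graph, node_pairs, max_hours=48.0):
    """Share of node pairs with at least two distinct routes within max_hours."""
    ok = sum(1 for s, t in node_pairs
             if count_routes_within(graph, s, t, max_hours) >= 2)
    return ok / len(node_pairs)
```

In this example, a depot with a direct 20 h lane and a 25 h lane via a hub to one site, but only a single 40 h route to a second site, scores 0.5 for those two pairs.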

Q3: When performing pre-processing quality control (QC) assays at a regional depot, how do we handle an out-of-specification (OOS) result for vector concentration in a lentiviral batch? A: Follow a strict OOS investigation procedure to determine if the result is indicative of product failure or an analytical error.

- Phase I Investigation (Analytical): Have a second analyst repeat the assay using the original sample aliquot. Verify reagent integrity (e.g., qPCR standard curve efficiency for vector titer assays) and equipment calibration.

- Phase II Investigation (Process & Depot): If the OOS is confirmed, audit the chain of identity and custody. Review temperature logs during the batch's holding period at the depot. Assess if any deviations occurred during the in-depot handling (e.g., inappropriate temporary storage).

- Decision Point: If the investigation finds no analytical error, the batch must be quarantined. Correlate data with other QC metrics (e.g., infectivity ratio, sterility) from the same batch to make a final batch disposition decision.
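For the Phase I reagent-integrity check, qPCR amplification efficiency is conventionally derived from the slope of the standard curve (Ct versus log10 input copies) as E = 10^(-1/slope) - 1. The sketch below applies that relation. The acceptance limits shown (90-110% efficiency, R² ≥ 0.98) are commonly used defaults and are assumptions here. The laboratory's validated assay SOP should set the actual limits.

```python
def linear_fit(x, y):
    """Ordinary least squares. Returns (slope, intercept, r_squared)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot

def qpcr_efficiency(log10_copies, ct_values):
    """Efficiency from the standard curve slope: E = 10**(-1/slope) - 1."""
    slope, _, r2 = linear_fit(log10_copies, ct_values)
    return 10 ** (-1 / slope) - 1, slope, r2

def standard_curve_acceptable(efficiency, r2, eff_range=(0.90, 1.10), min_r2=0.98):
    """Assumed default acceptance limits; replace with the validated SOP values."""
    return eff_range[0] <= efficiency <= eff_range[1] and r2 >= min_r2
```

A slope near -3.32 corresponds to roughly 100% efficiency (template doubling each cycle). A curve that fails these checks points toward an analytical error rather than a true product OOS.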

Data Presentation

Table 1: Sample Input Parameters for Depot Network Optimization Model

| Parameter | Description | Example Value | Data Source |
|---|---|---|---|
| Demand Nodes | Clinical trial sites or treatment centers | 85 locations (global) | ClinicalTrials.gov, internal pipeline |
| Candidate Depots | Potential pre-processing/storage locations | 12 pre-qualified facilities | Site audit reports, logistics partner data |
| Transport Time Matrix | Hours between all nodes (door-to-door) | 24-72 hours (simulated) | IATA TTK, logistics provider APIs |
| Failure Probability (p) | Annual risk of node/single route disruption | 0.01 - 0.15 per node | World Bank Governance Indicators, NOAA seismic data |
| Cost per Unit | Storage & pre-processing cost per patient dose | $X - $Y (simulated) | Vendor quotes, operational cost models |
| Resilience Threshold (T) | Max allowable delay in case of single-point failure | ≤ 48 hours | Regulatory guidance, clinical viability limits |

Table 2: Comparative Analysis of Hypothetical Network Configurations

| Network Design | No. of Depots | Est. Annual Cost (Indexed) | Avg. Transport Time (hrs) | Network Resilience Score* | Viability Drop at Edge (Simulated) |
|---|---|---|---|---|---|
| Centralized (Hub & Spoke) | 1 | 100 | 48.2 | 0.15 | 22% ± 5% |
| Regional (3 Hubs) | 3 | 135 | 24.5 | 0.65 | 12% ± 3% |
| Distributed (+Edge Pre-processing) | 6 | 185 | 18.1 | 0.92 | <5% ± 2% |

*Score: 1.0 = All node pairs have ≥2 viable routes within threshold T.
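A quick way to read the cost-resilience trade-off in Table 2 is to compute the indexed cost added per 0.1 gain in Network Resilience Score between successive designs. The snippet below does that arithmetic on the table's hypothetical values only. The per-0.1 unit is an arbitrary presentation choice.

```python
# Hypothetical configurations copied from Table 2: (design, indexed cost, resilience score).
CONFIGS = [
    ("Centralized (Hub & Spoke)", 100, 0.15),
    ("Regional (3 Hubs)", 135, 0.65),
    ("Distributed (+Edge Pre-processing)", 185, 0.92),
]
SCORE_STEP = 0.1  # report cost per +0.1 of resilience score

def marginal_cost_per_resilience(configs, step=SCORE_STEP):
    """Indexed cost added per `step` of resilience score between consecutive designs."""
    out = []
    for (name_a, cost_a, score_a), (name_b, cost_b, score_b) in zip(configs, configs[1:]):
        out.append((name_a, name_b, (cost_b - cost_a) / ((score_b - score_a) / step)))
    return out

for a, b, c in marginal_cost_per_resilience(CONFIGS):
    print(f"{a} -> {b}: {c:.1f} index points per +{SCORE_STEP} score")
```

With these numbers, the first step costs about 7 index points per +0.1 of score and the second about 18.5. This illustrates diminishing returns as the network moves toward full distribution.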

Experimental Protocols

Protocol 1: Simulating Cell Viability Under Logistics Stress

- Objective: To model the impact of transport duration and temperature excursions on cell viability for depot location planning.

- Methodology:

- Sample Preparation: Aliquot identical volumes of a standardized cryopreserved cell therapy product (e.g., CAR-T cells).

- Stress Induction: Place aliquots in a qualified thermal chamber simulating transport profiles:

- Control: Constant -180°C.

- Profile A: -180°C with a 5-minute "door open" spike to -100°C at midpoint.

- Profile B: Held at -150°C for 24 hours (simulating suboptimal depot storage).

- Profile C: Gradual warming to -80°C over 12 hours (simulating extended transport failure).

- Thaw & Analysis: Thaw all samples using the standard SOP at t=0, t=24h (simulated), and t=48h. Assess viability via flow cytometry (Annexin V/PI) and functionality via a cytokine release assay.

- Data Integration: Fit viability decay curves to inform maximum allowable transport time in the network optimization model.
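The data-integration step can be sketched by fitting a first-order (exponential) decay, V(t) = V0·e^(-kt), to the viability readings for one stress profile and solving for the time at which viability reaches a minimum acceptable level. The exponential model is an assumption: real post-thaw viability may plateau or drop in steps, so check the residuals before relying on the fit. The minimum-viability value is a hypothetical input that should come from the product's release specification.

```python
import math

def fit_exponential_decay(times_h, viability_pct):
    """Fit V(t) = V0 * exp(-k * t) by least squares on ln(V). Returns (V0, k)."""
    logs = [math.log(v) for v in viability_pct]
    n = len(times_h)
    mt, ml = sum(times_h) / n, sum(logs) / n
    sxx = sum((t - mt) ** 2 for t in times_h)
    sxy = sum((t - mt) * (l - ml) for t, l in zip(times_h, logs))
    slope = sxy / sxx
    v0 = math.exp(ml - slope * mt)
    return v0, -slope

def max_transport_hours(v0, k, min_viability_pct):
    """Hours until the fitted curve falls to the minimum acceptable viability."""
    if k <= 0:
        return math.inf  # no measurable decay under this profile
    if v0 <= min_viability_pct:
        return 0.0
    return math.log(v0 / min_viability_pct) / k
```

Fitting each profile (Control, A, B, C) separately gives one maximum allowable transport time per profile. This can then serve as an upper bound on route duration in the network optimization model.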

Protocol 2: Monte Carlo Simulation for Network Disruption

- Objective: To quantify the resilience of a proposed depot network configuration.

- Methodology:

- Model Definition: Input the network as a graph G=(V,E) where V={depots, demand nodes} and E={transport routes}. Assign attributes (cost, time, p_failure) to each edge and node.

- Failure Simulation: Run 10,000 iterations. In each iteration, randomly disable nodes/edges based on their p_failure to simulate disruptions (e.g., depot outage, route closure).

- Flow Recalculation: For each iteration, recalculate optimal routes from all depots to all demand nodes using Dijkstra's algorithm, subject to the maximum time constraint T.

- KPI Calculation: Record the percentage of demand that can still be met within time T in each iteration. The Network Resilience Score is the average of this percentage across all iterations.
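The four steps above can be sketched as a compact simulation. It runs a multi-source Dijkstra from all operational depots, so each demand node is served by its fastest reachable depot, and averages the share of demand met within T. Several simplifications are assumptions rather than part of the protocol. The network is uncapacitated. Each p_failure is treated as the probability that the element is down in a given scenario. Edges are directed. A failed demand node counts as unmet demand.

```python
import heapq
import math
import random

def dijkstra(adj, sources, up_nodes):
    """Multi-source shortest travel times, restricted to operational nodes."""
    dist = {s: 0.0 for s in sources if s in up_nodes}
    heap = [(0.0, s) for s in dist]
    heapq.heapify(heap)
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, math.inf):
            continue  # stale heap entry
        for nbr, hours in adj.get(node, {}).items():
            nd = d + hours
            if nbr in up_nodes and nd < dist.get(nbr, math.inf):
                dist[nbr] = nd
                heapq.heappush(heap, (nd, nbr))
    return dist

def simulate_resilience(nodes, edges, depots, demand, max_hours, n_iter=10_000, seed=1):
    """nodes: {node: p_failure}; edges: {(u, v): (hours, p_failure)};
    demand: {demand_node: units}. Returns the mean share of demand met within max_hours."""
    rng = random.Random(seed)
    total_demand = sum(demand.values())
    shares = []
    for _ in range(n_iter):
        # Sample which nodes and routes survive this disruption scenario.
        up_nodes = {n for n, p in nodes.items() if rng.random() >= p}
        adj = {}
        for (u, v), (hours, p) in edges.items():
            if rng.random() >= p:
                adj.setdefault(u, {})[v] = hours
        dist = dijkstra(adj, depots, up_nodes)
        met = sum(q for d, q in demand.items() if dist.get(d, math.inf) <= max_hours)
        shares.append(met / total_demand)
    return sum(shares) / n_iter
```

Because the random generator is seeded, two candidate configurations can be compared under reproducible scenario draws. Capacity limits, if needed, would require a flow formulation instead of shortest paths.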

Diagrams

Resilient Depot Network Flow for Cell & Gene Therapy

Pre-Processing Workflow at a Regional Depot

The Scientist's Toolkit: Research Reagent & Material Solutions

Table 3: Essential Materials for Pre-Processing & Stability Experiments

| Item | Function in Context | Key Consideration for Depot Planning |
|---|---|---|
| Controlled-Rate Freezer | Validates and simulates temperature ramp-down profiles for new product introductions at a depot. | Requires IQ/OQ/PQ at each depot location; calibration traceability. |
| Portable Data Loggers (e.g., RFID, Bluetooth) | Provides continuous temperature monitoring during simulated transport legs between nodes. | Data must be 21 CFR Part 11 compliant and integrate with the central track-and-trace system. |
| Liquid Nitrogen Dry Vapor Shipper | Enables reliable transport of cryogenic materials between manufacturing and depots. | Validated hold time is a critical constraint for defining maximum route distance/duration. |
| Closed-System Processing Kits (e.g., for thaw/wash/formulation) | Allow sterile pre-processing at the depot without the full cleanroom setup (an ISO 5 biosafety cabinet within an ISO 7 room) that open processing typically requires. | Reduces depot facility footprint and cost; essential for the distributed network model. |
| Rapid QC Assay Kits (e.g., flow cytometry-based viability, fast mycoplasma) | Enables in-depot quality control with minimal turnaround time (<4 hours) before release for shipment. | Assay reproducibility across different depot lab personnel must be rigorously validated. |
| qPCR-based Vector Titer Assay | Quantifies viral vector concentration post-thaw and post-processing at the depot. | Requires a standard curve and controls validated for inter-depot use to ensure consistency. |

Overcoming Operational Hurdles and Fine-Tuning Depot Performance

Technical Support Center

Troubleshooting Guide: Mitigating Implementation Risks

Issue 1: Unanticipated Delays in Reagent Procurement Disrupting Experiment Timelines

- Symptoms: Critical assay reagents (e.g., specialized cytokines, conjugated antibodies, cell culture media components) are out of stock at the central depot. Orders from primary suppliers indicate lead times of 8+ weeks, halting parallel research streams.

- Root Cause Analysis: Underestimation of procurement lead times, often due to reliance on pre-pandemic data, lack of supplier diversification, and unrecognized single-source dependencies.

- Immediate Action:

- Audit your current experimental pipeline and identify all reagents with a single supplier.

- Implement a "Lead Time Heat Map" for all critical materials (see Table 1).

- Establish a local (lab-level) buffer stock for 3-5 high-risk, long-lead items.

- Long-Term Resolution: Redesign the depot network model to include regional, specialized "fast-moving" depots for high-priority, temperature-sensitive reagents, moving away from a purely cost-optimized central super-depot.
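One way to size the lab-level buffer stock for each high-risk, long-lead item is the textbook safety-stock formula that combines demand and lead-time variability: SS = z·sqrt(L·σ_d² + d̄²·σ_L²). The source does not prescribe this method. The sketch assumes normally distributed, independent weekly demand and lead times. The service level is a hypothetical planning input.

```python
import math
from statistics import NormalDist

def safety_stock(mean_demand, sd_demand, mean_lead, sd_lead, service_level=0.95):
    """Buffer units; demand in units/week, lead time in weeks.
    Assumes independent, normally distributed demand and lead time."""
    z = NormalDist().inv_cdf(service_level)  # e.g., about 1.645 for 95%
    return z * math.sqrt(mean_lead * sd_demand ** 2 + mean_demand ** 2 * sd_lead ** 2)

def reorder_point(mean_demand, mean_lead, ss):
    """Reorder when on-hand stock falls to expected lead-time demand plus safety stock."""
    return mean_demand * mean_lead + ss
```

The lead-time term usually dominates for 8+ week items. This is why a wider lead-time spread, not just a longer mean, drives the buffer size up.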

Issue 2: Loss of Sample Viability Due to Extended Transport from Central Depot

- Symptoms: Primary cell samples or temperature-sensitive biologics arrive at the research site with compromised viability or activity, leading to failed experiments and irreproducible data.

- Root Cause Analysis: Over-centralization of sample banking and reagent storage, leading to complex, multi-leg logistics that exceed the stability window of the material.

- Immediate Action:

- Validate cold chain logistics for your most sensitive materials. Track temperature and time in transit.

- Redefine "critical" materials not just by cost, but by stability half-life (t½) at transport conditions.

- Long-Term Resolution: Implement a hybrid hub-and-spoke model. Central depot holds stable, bulky items. Regional, nimble depots equipped with ultra-low freezers (-80°C) or liquid nitrogen storage handle sensitive, high-value samples for a cluster of nearby research facilities.
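Defining "critical" by stability half-life can be made concrete. The sketch assumes first-order decay at transport conditions, so the fraction of activity remaining after t hours is 0.5^(t/t½). It then flags routes whose planned duration would push activity below a chosen floor. The route names and the minimum fraction are hypothetical inputs.

```python
import math

def fraction_remaining(hours, half_life_hours):
    """First-order decay: share of activity left after `hours` in transit."""
    return 0.5 ** (hours / half_life_hours)

def max_transit_hours(half_life_hours, min_fraction):
    """Longest transit that keeps activity at or above min_fraction."""
    return half_life_hours * math.log2(1 / min_fraction)

def flag_routes(route_hours, half_life_hours, min_fraction):
    """True for routes whose duration exceeds the stability window."""
    limit = max_transit_hours(half_life_hours, min_fraction)
    return {route: hours > limit for route, hours in route_hours.items()}
```

For example, a material with a 48 h half-life and a 50% activity floor tolerates at most 48 h in transit. A 60 h multi-leg route from a central depot would be flagged, while a 20 h regional route would not.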

Frequently Asked Questions (FAQs)

Q1: How do we accurately calculate lead times for our depot planning model? A: Lead times are dynamic. You must model a range (best-case, expected, worst-case) using current data. Integrate supplier scorecards, geopolitical risk indices, and port congestion data. The table below summarizes key factors:

Table 1: Lead Time Calculation Components for Research Supply Planning

| Component | Description | Typical Impact Range (Weeks) | Data Source |
|---|---|---|---|
| Manufacturing/Sourcing | Time for supplier to produce or source the raw material. | 2 - 26 | Supplier quotation, industry benchmarks. |
| Quality Control & Release | In-house testing, stability checks, documentation. | 1 - 4 | Good Manufacturing Practice (GMP) guidelines. |
| Customs & Regulatory Clearance | Import/export documentation, inspections for biologics. | 1 - 8 (Highly variable) | Local customs brokers, trade compliance data. |
| Domestic Logistics | Transportation from port of entry to central depot. | 0.5 - 2 | Logistics partner Service Level Agreements (SLAs). |
| Depot Processing | Receiving, labeling, kitting, quality check. | 0.5 - 1 | Internal warehouse performance metrics. |
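The best-case and worst-case totals follow directly from summing the component ranges in Table 1 (5 to 41 weeks). The expected case needs a most-likely estimate per component, which the table does not give. The sketch below therefore treats the modes as hypothetical user inputs. It models each component as a triangular distribution and reports simulated P50 and P90 totals. Treating the components as independent is a simplifying assumption, since customs delays and logistics delays may be correlated in practice.

```python
import random

# (best case, worst case) in weeks, from Table 1.
COMPONENT_RANGES = {
    "Manufacturing/Sourcing": (2, 26),
    "Quality Control & Release": (1, 4),
    "Customs & Regulatory Clearance": (1, 8),
    "Domestic Logistics": (0.5, 2),
    "Depot Processing": (0.5, 1),
}

def best_worst(ranges):
    """Sum of component minima and maxima."""
    return sum(lo for lo, _ in ranges.values()), sum(hi for _, hi in ranges.values())

def simulate_lead_time(ranges, modes, n_iter=20_000, seed=7):
    """Monte Carlo total lead time. Each component ~ triangular(lo, hi, mode),
    with modes supplied by the planner. Returns (P50, P90) in weeks."""
    rng = random.Random(seed)
    totals = sorted(
        sum(rng.triangular(lo, hi, modes[name]) for name, (lo, hi) in ranges.items())
        for _ in range(n_iter)
    )
    return totals[n_iter // 2], totals[int(0.9 * n_iter)]

# Hypothetical most-likely values, for illustration only.
example_modes = {"Manufacturing/Sourcing": 8, "Quality Control & Release": 2,
                 "Customs & Regulatory Clearance": 3, "Domestic Logistics": 1,
                 "Depot Processing": 0.5}
```

Planning against P90 rather than the sum of most-likely values builds the worst-case tail into depot stocking decisions without assuming that every component hits its maximum at once.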

Q2: What are the key metrics to identify if our depot is over-centralized? A: Monitor these Key Performance Indicators (KPIs):

Table 2: KPIs for Diagnosing Over-Centralization

| KPI | Calculation | Threshold Indicating Risk |
|---|---|---|
| Average Last-Mile Delivery Time | Time from depot dispatch to researcher receipt. | > 48 hours for standard ambient items. |
| Cold Chain Breakage Rate | % of sensitive shipments with temperature excursions. | > 2% for critical reagents. |
| Single-Source Critical Items | # of reagents with only one approved supplier. | Any (> 0). Aim for ≥2 approved suppliers for every mission-critical item. |
| Experiment Delay Attribution | % of project delays directly linked to material availability. | > 15% suggests structural supply issues. |
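The thresholds in Table 2 can be encoded as a simple screening check for periodic depot reviews. The sketch mirrors the table's limits. The data-class field names are hypothetical, and rates are expressed as fractions (0 to 1).

```python
from dataclasses import dataclass

@dataclass
class DepotKPIs:
    avg_last_mile_hours: float         # standard ambient items
    cold_chain_excursion_rate: float   # share of sensitive shipments, 0-1
    single_source_critical_items: int  # reagents with one approved supplier
    delay_attribution_rate: float      # share of project delays due to materials, 0-1

def over_centralization_flags(k: DepotKPIs) -> list[str]:
    """Return the Table 2 thresholds that this depot currently breaches."""
    flags = []
    if k.avg_last_mile_hours > 48:
        flags.append("last-mile delivery > 48 h")
    if k.cold_chain_excursion_rate > 0.02:
        flags.append("cold chain breakage > 2%")
    if k.single_source_critical_items > 0:
        flags.append(f"{k.single_source_critical_items} single-source critical item(s)")
    if k.delay_attribution_rate > 0.15:
        flags.append("material-driven delays > 15%")
    return flags
```

An empty list means no threshold is breached. Several simultaneous flags are a stronger signal of structural over-centralization than any single KPI.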

Q3: Can you provide a protocol for stress-testing our depot resilience? A: Yes. Conduct a "Supply Shock Simulation" experiment.

Experimental Protocol: Supply Chain Stress Test

- Objective: To evaluate the robustness of the current depot configuration against a major disruption.

- Methodology:

- Scenario Design: Define a disruption (e.g., closure of a primary shipping hub, loss of a key supplier for a critical assay kit).