Resilient Biofuel Supply Chains: Strategies to Mitigate Facility Disruption Risks in Renewable Energy Networks

This article provides a comprehensive analysis of strategies for optimizing biofuel supply chains against facility disruption risks, targeting researchers and development professionals.


Abstract

This article provides a comprehensive analysis of strategies for optimizing biofuel supply chains against facility disruption risks, targeting researchers and development professionals. It explores the foundational vulnerabilities within biofuel networks, examines advanced methodological frameworks like stochastic programming and resilience analytics for modeling disruptions, and details troubleshooting and optimization techniques for enhancing robustness. The content further validates these approaches through comparative analysis of real-world case studies and simulation results. The synthesis offers actionable insights for building resilient, efficient, and sustainable biofuel infrastructure critical for the energy transition.

Understanding Biofuel Supply Chain Vulnerabilities: A Primer on Disruption Risks and Network Fragility

Biofuel Research Technical Support Center

Welcome to the technical support center for the research initiative "Optimizing biofuel supply chain under facility disruption risks." This resource provides troubleshooting guides and FAQs for researchers and scientists conducting experiments within this framework.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My lignocellulosic feedstock pretreatment yields are inconsistent, affecting downstream hydrolysis. What could be the cause? A: Inconsistency often stems from variable feedstock particle size and moisture content. Implement a strict feedstock characterization protocol before pretreatment. Use sieving to standardize particle size (e.g., 0.5-2.0 mm) and dry samples to a constant weight (e.g., <10% moisture). Monitor and control pretreatment parameters (temperature, residence time, catalyst concentration) in real-time. Facility disruptions in feedstock pre-processing equipment can introduce this variability.

Q2: During fermentation inhibition studies, my control reactor shows reduced microbial growth. How do I troubleshoot? A: Follow this diagnostic protocol:

- Check Feedstock-Derived Inhibitors: Analyze hydrolysate for consistent levels of furans (furfural, HMF), weak acids (acetic, formic), and phenolics. Use the HPLC method below.

- Verify Nutrient Sterilization: Autoclave nutrients (e.g., yeast extract, phosphate buffers) separately from hydrolysate to avoid Maillard reaction products that can inhibit growth.

- Calibrate pH and DO Sensors: Sensor drift is common. Recalibrate before each batch.

- Assess Contamination: Plate samples on non-selective media. Contamination can consume nutrients and produce secondary inhibitors.

Q3: What is the best method to quickly quantify common microbial inhibitors in biomass hydrolysates? A: High-Performance Liquid Chromatography (HPLC) with a UV/RI detector array is standard. See the protocol below.

Q4: My supply chain simulation model for disruption risks is computationally intensive. How can I optimize it? A: This is common when modeling multi-echelon networks. Consider:

- Reducing Temporal Granularity: Shift from hourly to daily time steps for long-term risk assessment.

- Applying Scenario Aggregation: Use k-means clustering to group similar disruption scenarios (by type, location, duration) before full simulation.

- Validating with Key Performance Indicators (KPIs): Focus simulation output on core KPIs like Resilience Cost and Recovery Time to simplify output analysis.

Experimental Protocols

Protocol 1: HPLC Analysis of Hydrolysate Inhibitors Objective: Quantify concentrations of common fermentation inhibitors (furfural, 5-hydroxymethylfurfural (HMF), acetic acid, formic acid, levulinic acid). Methodology:

- Sample Preparation: Filter hydrolysate through a 0.2 μm syringe filter. Dilute 1:10 with mobile phase (0.005 M H₂SO₄).

- HPLC Setup:

- Column: Bio-Rad Aminex HPX-87H (or equivalent ion exclusion column).

- Mobile Phase: 0.005 M Sulfuric Acid, isocratic.

- Flow Rate: 0.6 mL/min.

- Temperature: 50°C Column Oven, 35°C Detector.

- Detection: Refractive Index (RI) Detector for acids; UV-Vis Detector at 280 nm for furans (furfural, HMF).

- Calibration: Create standard curves for each compound (concentration range 0.1-10 g/L). Inject each standard and sample in triplicate.

- Calculation: Use peak area integration software. Calculate concentration from the linear regression of the standard curve.
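The calibration and calculation steps above can be sketched in a few lines of Python. This is a minimal illustration, not the output of any particular integration software: the standard concentrations, peak areas, and the function names `fit_line` and `concentration` are hypothetical, and the dilution factor of 10 assumes that "1:10" means one part sample in ten parts total volume.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def concentration(peak_area, slope, intercept, dilution_factor=10.0):
    """Invert the standard curve, then correct for the sample dilution."""
    return (peak_area - intercept) / slope * dilution_factor


# Hypothetical furfural standards (g/L) and mean peak areas (arbitrary units).
std_conc = [0.1, 0.5, 1.0, 5.0, 10.0]
std_area = [12.0, 60.0, 120.0, 600.0, 1200.0]
slope, intercept = fit_line(std_conc, std_area)

# Triplicate injections of one diluted sample (hypothetical peak areas).
sample_areas = [23.5, 24.0, 24.5]
mean_area = sum(sample_areas) / len(sample_areas)
print(f"Furfural in undiluted hydrolysate: "
      f"{concentration(mean_area, slope, intercept):.2f} g/L")
```

In practice it is worth checking the fit quality (for example R²) and confirming that sample peak areas fall inside the calibrated range before reporting a value.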

Protocol 2: Assessing Microbial Inhibition in Hydrolysates Objective: Determine the inhibitory effect of a hydrolysate on a model fermenting microorganism (e.g., Saccharomyces cerevisiae). Methodology:

- Preparation: Prepare a synthetic medium matching the sugar composition of your hydrolysate (e.g., glucose, xylose). This is your Control Medium.

- Test Media: Create Detoxified Hydrolysate Medium (via overliming or activated charcoal treatment) and Raw Hydrolysate Medium (pH adjusted to match control).

- Inoculation: Inoculate each medium with a standard inoculum of your microbe (OD600 = 0.1).

- Cultivation: Cultivate in a controlled bioreactor or shake flasks at optimal conditions (e.g., 30°C, 150 rpm). Monitor OD600 and substrate consumption every 2-3 hours.

- Analysis: Calculate key metrics: Specific Growth Rate (μ_max), Lag Time, and Ethanol Yield (if applicable). Compare values between media to quantify inhibition.
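One common way to estimate the specific growth rate in the analysis step is to take the steepest slope of ln(OD600) against time. The sketch below assumes that approach with a sliding three-point window; the OD600 readings and the function name `max_specific_growth_rate` are hypothetical, and lag time and ethanol yield are not covered.

```python
import math


def max_specific_growth_rate(times_h, od600, window=3):
    """Largest least-squares slope of ln(OD600) vs. time over sliding windows (1/h)."""
    ln_od = [math.log(v) for v in od600]
    best = float("-inf")
    for start in range(len(times_h) - window + 1):
        t = times_h[start:start + window]
        y = ln_od[start:start + window]
        mean_t = sum(t) / window
        mean_y = sum(y) / window
        stt = sum((ti - mean_t) ** 2 for ti in t)
        sty = sum((ti - mean_t) * (yi - mean_y) for ti, yi in zip(t, y))
        best = max(best, sty / stt)
    return best


# Hypothetical readings taken every 2 hours.
times = [0, 2, 4, 6, 8, 10]
control_od = [0.10, 0.12, 0.24, 0.48, 0.96, 1.10]   # synthetic Control Medium
raw_od = [0.10, 0.10, 0.11, 0.16, 0.23, 0.33]       # Raw Hydrolysate Medium

mu_control = max_specific_growth_rate(times, control_od)
mu_raw = max_specific_growth_rate(times, raw_od)
inhibition_pct = 100.0 * (1.0 - mu_raw / mu_control)
print(f"mu_max control = {mu_control:.3f} 1/h, raw = {mu_raw:.3f} 1/h, "
      f"inhibition = {inhibition_pct:.0f}%")
```

A wider window smooths measurement noise but can underestimate μ_max when the exponential phase is short.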

Data Presentation: Common Biofuel Feedstock Composition

Table 1: Representative Composition of Key Lignocellulosic Feedstocks (% Dry Weight)

| Feedstock Type | Cellulose | Hemicellulose | Lignin | Ash | References |

|---|---|---|---|---|---|

| Corn Stover | 35-40% | 20-25% | 15-20% | 4-6% | (NREL 2023) |

| Switchgrass | 30-35% | 25-30% | 15-20% | 5-6% | (DOE 2022) |

| Sugarcane Bagasse | 40-45% | 25-30% | 20-25% | 1-4% | (BioFR 2024) |

| Poplar Wood | 45-50% | 20-25% | 20-25% | <1% | (IEA Bioenergy 2023) |

Table 2: Inhibitor Concentrations in Various Biomass Hydrolysates

| Feedstock | Pretreatment | Furfural (g/L) | HMF (g/L) | Acetic Acid (g/L) | Formic Acid (g/L) |

|---|---|---|---|---|---|

| Corn Stover | Dilute Acid | 1.2 - 2.5 | 0.8 - 1.8 | 4.5 - 7.5 | 1.0 - 2.5 |

| Wheat Straw | Steam Explosion | 0.5 - 1.2 | 0.3 - 1.0 | 3.0 - 5.0 | 0.5 - 1.5 |

| Sugarcane Bagasse | Alkaline | < 0.1 | < 0.1 | 2.0 - 4.0 | 0.2 - 0.8 |

Diagrams

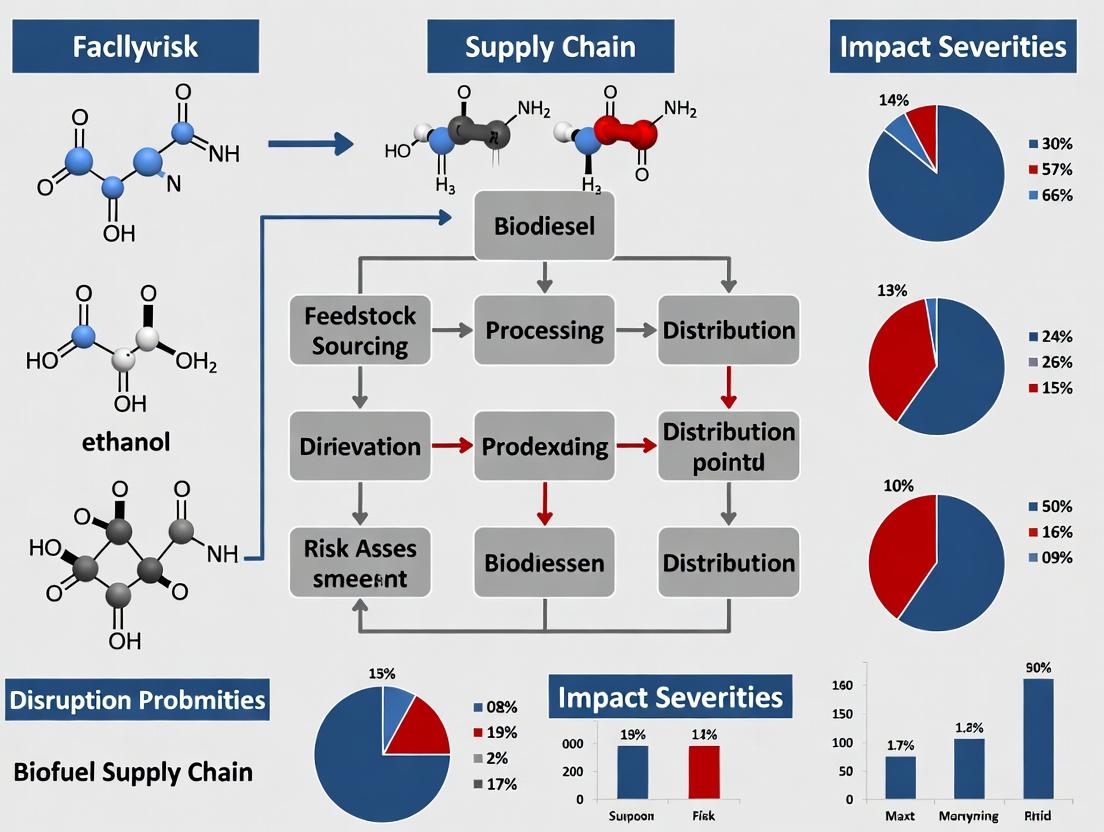

Title: Biofuel Supply Chain with Disruption Risk Points

Title: Microbial Inhibition Pathways from Hydrolysate Toxins

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Biofuel Process & Inhibition Research

| Reagent/Material | Function in Research | Example Supplier/Product |

|---|---|---|

| Aminex HPX-87H Column | HPLC separation of sugars, acids, and furans in hydrolysates. | Bio-Rad Laboratories |

| Cellulase & Hemicellulase Enzyme Cocktails | Standardized enzymes for hydrolyzing pretreated biomass to fermentable sugars. | Novozymes (Cellic CTec3) |

| Model Microorganism Strains | Genetically characterized strains for consistent fermentation studies. | ATCC (e.g., S. cerevisiae BY4741) |

| Synthetic Metabolic Inhibitors | Pure compounds (furfural, HMF, acetic acid) for creating calibration standards and spiking experiments. | Sigma-Aldrich |

| Detoxification Resins | Activated charcoal or polymeric adsorbents for hydrolysate detoxification studies. | Amberlite XAD-4 resin |

| Nutrient Media (Yeast Nitrogen Base, etc.) | Defined media for controlled microbial cultivation experiments. | Thermo Fisher Scientific |

| Anaerobic Chamber or Sealed Cultivation System | For maintaining anoxic conditions required by many biofuel-producing microbes. | Coy Laboratory Products |

Technical Support Center: Troubleshooting Biofuel Supply Chain Experimentation

Welcome to the Technical Support Center for the thesis "Optimizing Biofuel Supply Chain Under Facility Disruption Risks." This resource provides targeted guidance for researchers and scientists modeling and mitigating disruption risks in biofuel production and logistics networks.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: Our agent-based supply chain simulation is yielding inconsistent disruption propagation results under identical initial conditions. How can we ensure model stability? A: This indicates a potential issue with random number generation or uninitialized agent state variables.

- Troubleshooting Steps:

- Seed Control: Explicitly set and record the pseudo-random number generator (PRNG) seed at the start of each simulation run. In Python (using numpy), use `np.random.seed(12345)`.

- Agent Initialization Audit: Ensure all agent attributes (e.g., inventory levels, operational status) are deterministically initialized after setting the PRNG seed, not before.

- Parallel Processing Check: If using parallel computation, verify that each thread/process uses an independent and well-seeded PRNG stream to avoid correlation.

- Protocol (Model Stability Verification):

- Implement a test script that runs the simulation N times (e.g., N=50) with a fixed seed.

- Log a key output metric (e.g., total system throughput post-disruption) for each run.

- Calculate the standard deviation of this metric across runs. A non-zero standard deviation in a deterministic model indicates a source of randomness that must be controlled.
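The verification protocol above can be sketched as a small test script. The `run_simulation` function here is a hypothetical stand-in for the real agent-based model, using only Python's standard `random` and `statistics` modules; the point is the pattern of a locally seeded generator and a repeated-run check, not the toy throughput logic.

```python
import random
import statistics


def run_simulation(seed, n_facilities=20, p_disrupt=0.2):
    """Toy stand-in for a simulation run; returns post-disruption throughput."""
    rng = random.Random(seed)  # local generator, so no hidden global state
    capacities = [rng.uniform(50.0, 150.0) for _ in range(n_facilities)]
    # A facility contributes its capacity only if it is not disrupted.
    return sum(c for c in capacities if rng.random() >= p_disrupt)


def stability_check(seed, n_runs=50):
    """Standard deviation of the key metric across runs with the same seed."""
    outputs = [run_simulation(seed) for _ in range(n_runs)]
    return statistics.pstdev(outputs)


spread = stability_check(seed=12345)
print("std dev across identical-seed runs:", spread)
if spread > 0:
    print("Uncontrolled randomness detected; audit PRNG use and initialization.")
```

Passing a generator object into each agent, rather than relying on a module-level seed, also makes it easier to give parallel workers independent streams.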

Q2: When integrating geopolitical risk indices (like the Global Peace Index) into our facility risk scoring, what is the best method for normalizing and weighting them against operational data (like Mean Time Between Failures)? A: Use a multi-criteria decision analysis (MCDA) framework, such as the Analytic Hierarchy Process (AHP) or a simple linear scaling with expert-derived weights.

- Detailed Methodology:

- Data Normalization: Convert all metrics to a common scale (e.g., 0-1, where 1=highest risk). For geopolitical indices (higher score = higher risk), use min-max normalization: `(x - min(index)) / (max(index) - min(index))`. For operational reliability like MTBF (higher value = lower risk), first invert it to a "failure rate" proxy, then normalize.

- Weight Assignment: Convene a panel of 3-5 experts (supply chain logisticians, political risk analysts). Using AHP, have them pairwise compare the relative importance of "Natural," "Operational," and "Geopolitical" risk categories. Calculate the consistency ratio; accept if <0.1.

- Aggregate Risk Score: For a facility i: `Risk_i = (w_geo * GeoIndex_norm) + (w_op * OpRisk_norm) + (w_nat * NatHazard_norm)`.
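The normalization and aggregation steps can be sketched as follows. The facility values are hypothetical, the natural-hazard scores are assumed to be already normalized, and the weights (0.4, 0.3, 0.3) are borrowed from the note under Table 1 rather than derived from an actual AHP exercise.

```python
def min_max(values):
    """Scale values to 0-1; assumes at least two distinct values."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def aggregate_risk(geo_index, mtbf_days, nat_hazard_norm, weights):
    """Weighted sum of normalized risk components, one score per facility."""
    geo_norm = min_max(geo_index)                    # higher index = higher risk
    op_norm = min_max([1.0 / m for m in mtbf_days])  # invert MTBF to a failure-rate proxy
    return [
        weights["geo"] * g + weights["op"] * o + weights["nat"] * n
        for g, o, n in zip(geo_norm, op_norm, nat_hazard_norm)
    ]


# Hypothetical inputs for three facilities.
weights = {"geo": 0.4, "op": 0.3, "nat": 0.3}
scores = aggregate_risk(
    geo_index=[1.2, 2.0, 2.8],          # raw geopolitical index values
    mtbf_days=[400.0, 200.0, 100.0],    # mean time between failures
    nat_hazard_norm=[0.1, 0.5, 0.9],    # already on a 0-1 scale
    weights=weights,
)
print([round(s, 2) for s in scores])
```

Because min-max scaling depends on the facilities in the sample, scores are only comparable within the same set; adding or removing a facility can shift every normalized value.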

Q3: Our network flow model for rerouting feedstocks during a port closure is computationally intractable for large-scale, real-world networks. What optimization techniques are recommended? A: For large-scale networks, employ a combination of graph simplification and heuristic or decomposition algorithms.

- Troubleshooting Guide:

- Problem Identification: Is the issue memory (RAM) or processing time (CPU)?

- Solution Paths:

- Graph Reduction: Aggregate demand nodes by geographic region (e.g., cluster facilities within a 50km radius). Use community detection algorithms like Louvain method on the network graph.

- Solver Selection: If proven optimality is not required, switch from an exact LP/MILP solver to a metaheuristic such as Simulated Annealing or a Genetic Algorithm, which returns "good-enough" solutions for large networks without an optimality guarantee.

- Model Decomposition: Use Benders Decomposition to break the problem into a master problem (strategic rerouting decisions) and independent sub-problems (tactical flow allocation for each scenario).

Q4: How do we quantitatively validate a probabilistic disruption forecast model for hurricane-related facility outages? A: Use statistical reliability tests like Probability Integral Transform (PIT) and evaluation of proper scoring rules.

- Experimental Protocol (Model Validation):

- Data Segmentation: Split historical hurricane/outage data into training (70%) and testing (30%) sets, respecting temporal order.

- Generate Forecasts: For each facility in the test set, use your model to produce a probabilistic forecast—not a binary yes/no, but a predicted distribution of outage likelihood.

- Apply Scoring Rules: Calculate the Continuous Ranked Probability Score (CRPS). Lower CRPS indicates better forecast accuracy and sharpness.

- Apply PIT: For each observed event, the PIT value is the forecast cumulative distribution function evaluated at the observed outcome. If the forecast distributions are well calibrated, the PIT values should follow a uniform distribution on [0, 1]. Test this with a Kolmogorov-Smirnov test.
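A minimal sketch of the scoring and calibration steps is shown below. It assumes each forecast is an ensemble of simulated outage durations (in days) and uses the standard ensemble estimator of CRPS. The function names and sample numbers are hypothetical, and only the Kolmogorov-Smirnov statistic is computed, not its p-value.

```python
def crps_ensemble(members, observed):
    """Ensemble CRPS estimate: E|X - y| - 0.5 * E|X - X'|."""
    n = len(members)
    term_obs = sum(abs(m - observed) for m in members) / n
    term_spread = sum(abs(a - b) for a in members for b in members) / (2 * n * n)
    return term_obs - term_spread


def pit_value(members, observed):
    """Empirical forecast CDF evaluated at the observed outcome."""
    return sum(1 for m in members if m <= observed) / len(members)


def ks_statistic_uniform(pit_values):
    """Largest gap between the empirical CDF of PIT values and Uniform(0, 1)."""
    u = sorted(pit_values)
    n = len(u)
    return max(max((i + 1) / n - v, v - i / n) for i, v in enumerate(u))


# Hypothetical test set: (ensemble of forecast outage days, observed outage days).
test_cases = [
    ([1.0, 2.0, 3.0, 4.0], 2.5),
    ([0.0, 0.5, 1.0, 6.0], 0.7),
    ([2.0, 2.5, 3.0, 3.5], 4.0),
]
mean_crps = sum(crps_ensemble(m, y) for m, y in test_cases) / len(test_cases)
pits = [pit_value(m, y) for m, y in test_cases]
print(f"mean CRPS = {mean_crps:.3f}, PIT values = {pits}, "
      f"KS statistic = {ks_statistic_uniform(pits):.3f}")
```

With a realistic test set, a histogram of the PIT values is a useful visual companion to the test: a U shape suggests forecasts that are too narrow, a hump suggests forecasts that are too wide.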

Data Presentation: Comparative Risk Metrics

Table 1: Normalized Comparative Risk Scores for Prototypical Biofuel Facility Locations

| Facility Type / Location | Geopolitical Risk Index (Normalized) | Seismic Risk (Peak Ground Accel. %g, norm.) | Flood Risk (FEMA Zone, norm.) | Operational MTBF (Days, norm.) | Aggregate Disruption Score |

|---|---|---|---|---|---|

| Coastal Refinery, SE Asia | 0.85 | 0.20 | 0.95 | 0.30 | 0.68 |

| Inland Biorefinery, Midwest USA | 0.15 | 0.10 | 0.25 | 0.90 | 0.28 |

| Port Terminal, NW Europe | 0.25 | 0.05 | 0.60 | 0.85 | 0.36 |

| Feedstock Hub, Eastern Europe | 0.65 | 0.05 | 0.40 | 0.70 | 0.53 |

Weights Applied: Geopolitical=0.4, Natural=0.3, Operational=0.3. Normalized to 0-1 scale (1=highest risk). Data synthesized from 2023 Global Peace Index, USGS NHGIS, FEMA NFHL, and industry maintenance records.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Supply Chain Disruption Modeling

| Item / Solution | Function in Research | Example Vendor / Tool |

|---|---|---|

| AnyLogistix or similar Simulation Software | Integrated platform for agent-based & discrete-event simulation of supply chains under disruption scenarios. | The AnyLogic Company |

| Gephi or NetworkX | For modeling, analyzing, and visualizing the complex network topology of supply chains (nodes=facilities, edges=transport links). | Open Source / Python Library |

| CRAN scoringRules R Package | Provides rigorous statistical functions (like CRPS) for evaluating probabilistic forecasts of disruption events. | Comprehensive R Archive Network |

| Commercial Risk Indices (GPI, WGI) | Quantitative, annually updated data streams for parameterizing geopolitical and governance risk models. | Institute for Economics & Peace, World Bank |

| Linear & Mixed-Integer Programming Solver (Gurobi, CPLEX) | High-performance optimization engines for solving large-scale network rerouting and inventory prepositioning models. | Gurobi Optimization, IBM |

| Geospatial Risk Data Layers | GIS-ready data on natural hazards (earthquake, flood, hurricane) for spatial risk assessment of facility locations. | NASA SEDAC, NOAA, USGS |

Experimental Workflow & Pathway Visualizations

Title: Research Workflow for Disruption Risk Thesis

Title: Supply Chain Disruption Cascade Logic

Technical Support Center: Biofuel Process Disruption Mitigation

This support center provides targeted troubleshooting for common experimental and pilot-scale facility failures in biofuel research, framed within the thesis context of Optimizing biofuel supply chain under facility disruption risks.

FAQs & Troubleshooting Guides

Q1: During continuous fermentation for bioethanol production, we observe a sudden pH drop and cessation of microbial activity. What are the immediate steps? A: This indicates a contamination event or critical nutrient depletion.

- Immediate Action: Halt feedstock inflow. Take a sterile sample for immediate Gram staining and microscopy.

- Diagnosis: Check feedstock sterility logs and calibrate pH probes. Review the last nutrient media supplement.

- Protocol - Contamination Check:

- Prepare slides from the culture sample.

- Perform a Gram stain (Crystal Violet, Iodine, Alcohol decolorizer, Safranin).

- Examine under oil immersion (1000X magnification). Pure S. cerevisiae cultures are Gram-positive and ovoid; rods or mixed morphologies indicate bacterial contamination.

- Mitigation: If contaminated, the batch must be sacrificed and sterilized. Clean and sterilize the bioreactor following SOP-7 (Full CIP/SIP Cycle). Implement a stricter aseptic sampling protocol.

Q2: Our HPLC analysis for lipid quantification from algal biofuel samples shows inconsistent triacylglyceride (TAG) peak areas. How do we troubleshoot? A: Inconsistency often stems from sample preparation or column degradation.

- Immediate Action: Run a standard TAG calibration curve (e.g., Triolein) to verify system performance.

- Diagnosis: Check the pressure profile of the HPLC system. A rising baseline or peak broadening suggests column issues.

- Protocol - Sample Preparation Standardization:

- Lyse: Resuspend algal pellet in 2:1 Chloroform:Methanol. Sonicate on ice (3 pulses of 10s each).

- Extract: Add 0.9% NaCl solution, vortex, and centrifuge at 1000 x g for 10 min.

- Collect: Recover the lower organic phase. Dry under nitrogen gas.

- Redissolve: Reconstitute in 2-propanol for HPLC injection. Critical: Ensure consistent drying and reconstitution times and volumes.

Q3: The enzymatic hydrolysis yield of lignocellulosic biomass has dropped by >30% in our latest reactor run. What could cause this? A: This is a classic sign of inhibitor accumulation or enzyme denaturation.

- Immediate Action: Test the hydrolysate for common inhibitors (furfural, HMF, phenolic compounds) via GC-MS.

- Diagnosis: Verify pre-treatment conditions (e.g., steam explosion) were within specified parameters. Over-treatment generates inhibitors.

- Protocol - Inhibitor Assay (Colorimetric for Phenolics):

- Prepare a Folin-Ciocalteu reagent dilution (1:10 with water).

- Mix 100 µL of sample, 200 µL of the reagent, and 2 mL of 7.5% sodium carbonate.

- Incubate at 50°C for 10 min.

- Measure absorbance at 765 nm. Compare to a standard curve prepared with gallic acid.

Q4: Our pilot-scale anaerobic digester for biogas production shows a sudden increase in VFA concentration and a drop in methane percentage. A: This indicates process instability, often "acidogenesis overpowering methanogenesis."

- Immediate Action: Immediately reduce the organic loading rate (OLR) by 50%.

- Diagnosis: Measure alkalinity and calculate the VFA-to-Alkalinity ratio. A ratio >0.3 indicates imminent failure.

- Protocol - Alkalinity Titration:

- Centrifuge a 50 mL digestate sample.

- Titrate 10 mL of supernatant with 0.1N H2SO4 to a pH endpoint of 5.75.

- Calculate alkalinity as mg/L CaCO3: (mL acid) x (N of acid) x (50,000) / (mL sample).

- Mitigation: If ratio >0.3, halt feeding and consider adding alkalinity agents (e.g., sodium bicarbonate) cautiously.
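The titration arithmetic and the ratio check can be sketched as below. The titrant volume and VFA concentration are hypothetical, and the ratio assumes VFA is reported in mg/L on a basis comparable to the alkalinity value, which depends on the laboratory's reporting convention.

```python
def alkalinity_mg_per_l(acid_ml, acid_normality, sample_ml):
    """Alkalinity as mg/L CaCO3 from titration to the pH endpoint."""
    return acid_ml * acid_normality * 50_000 / sample_ml


def vfa_alkalinity_ratio(vfa_mg_per_l, alkalinity):
    """Ratio used as an early-warning indicator of digester instability."""
    return vfa_mg_per_l / alkalinity


# Hypothetical titration: 6.0 mL of 0.1 N H2SO4 for a 10 mL supernatant sample.
alk = alkalinity_mg_per_l(acid_ml=6.0, acid_normality=0.1, sample_ml=10.0)
ratio = vfa_alkalinity_ratio(vfa_mg_per_l=1200.0, alkalinity=alk)

print(f"alkalinity = {alk:.0f} mg/L as CaCO3, VFA/alkalinity = {ratio:.2f}")
if ratio > 0.3:
    print("Ratio above 0.3: reduce or halt feeding and review alkalinity dosing.")
```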

Quantitative Impact Data of Downtime Events

Table 1: Economic Impact of Common Facility Failures (Pilot Scale)

| Failure Event | Avg. Resolution Time | Direct Cost (Lost Materials/Energy) | Indirect Cost (Delayed Research Timeline) | Estimated CO2e Emissions from Wasted Feedstock* |

|---|---|---|---|---|

| Bioreactor Contamination | 5-7 days | $12,000 - $18,000 | 2-3 week delay | 1.8 - 2.5 tonnes |

| Chromatography System Failure | 2-3 days | $3,000 (in solvents/columns) | 1-week delay in data generation | 0.1 tonnes |

| Pre-treatment Reactor Overpressure | 3-5 days | $8,000 (catalyst, biomass) | 1-week delay | 0.8 tonnes |

| Anaerobic Digester Imbalance | 10-14 days | $15,000 - $25,000 | 1-month delay in continuous data | 3.0 - 5.0 tonnes |

*Emissions calculated based on decay/incineration of organic feedstock without product recovery.

Table 2: Key Research Reagent Solutions for Disruption-Prone Processes

| Reagent/Material | Function in Biofuel Research | Critical for Mitigating |

|---|---|---|

| CIP/SIP Solutions (e.g., NaOH, Phosphoric acid) | Clean-in-Place/Sterilize-in-Place agents for bioreactors. | Prevents microbial contamination downtime. |

| Internal Standards (HPLC/GC) (e.g., Tritridecanoin, 4-Methylvaleric acid) | Quantitative standards for accurate metabolite (TAG, VFA) analysis. | Ensures data fidelity during process monitoring. |

| Inhibitor Adsorbents (e.g., Polyvinylpolypyrrolidone - PVPP) | Binds phenolic compounds in lignocellulosic hydrolysates. | Protects enzymatic and microbial catalysts from inhibition. |

| Alkalinity Buffers (e.g., Sodium Bicarbonate) | Maintains pH in anaerobic digestion systems. | Prevents acid crash and digester failure. |

| Cryopreservation Stocks (Master Cell Bank) | Preserves genetic integrity of production microbial strains. | Enables rapid bioreactor restart after failure. |

Experimental Workflow & Pathway Visualizations

Diagram Title: Troubleshooting Path for Bioreactor Contamination

Diagram Title: Lipid Analysis Workflow with Failure Points

Diagram Title: Digester Acid Crash Pathway & Mitigations

Key Performance Indicators (KPIs) for Measuring Supply Chain Resilience and Vulnerability

Technical Support Center: Troubleshooting KPI Implementation in Biofuel Supply Chain Research

Troubleshooting Guides

Issue 1: Inconsistent KPI Measurements During Simulated Facility Disruption

- Problem: KPI values (e.g., Recovery Time, Inventory Buffer Index) show high variance between identical simulation runs of a biofuel refinery disruption.

- Diagnosis: This is often caused by unseeded random number generators in disruption modeling or undefined initial system states.

- Solution: Implement a fixed random seed in your simulation software (e.g., AnyLogic, MATLAB) for reproducibility. Clearly document all initial conditions, including pre-disruption inventory levels at all nodes (feedstock, conversion, distribution).

Issue 2: Inability to Quantify "Vulnerability" Beyond Operational KPIs

- Problem: Researchers can track operational recovery (resilience) but lack metrics to capture pre-disruption weakness (vulnerability).

- Diagnosis: Over-reliance on time-based recovery KPIs. Vulnerability is a structural property.

- Solution: Integrate topological KPIs. Calculate the Network Criticality Index for each facility by simulating its removal and measuring the drop in overall network throughput. Use the following formula as part of your protocol:

`NCI_i = (T_total - T_without_i) / T_total`, where `T_total` is normal network throughput and `T_without_i` is throughput after disabling facility `i`.

Issue 3: Data Collection Gaps for KPI Calculation in Multi-Tier Supply Chains

- Problem: Missing upstream (feedstock supplier) or downstream (distribution hub) data prevents calculation of end-to-end KPIs like Order Fulfillment Cycle Time.

- Diagnosis: Assumptions are filling data gaps, reducing validity.

- Solution: Establish a standardized data-sharing protocol with partners using a simplified data structure. For experimental purposes, use agent-based modeling to generate synthetic but realistic data for missing tiers, clearly annotating all synthetic data points in results.

Frequently Asked Questions (FAQs)

Q1: What are the most critical KPIs to start with for a biofuel supply chain resilience experiment? A: Begin with a balanced set covering resilience and vulnerability:

- Time to Recovery (TTR): Measures resilience speed post-disruption.

- Financial Impact (FI): Total cost of the disruption event.

- Network Density: A vulnerability KPI measuring the ratio of existing connections to possible connections (lower density often means higher vulnerability).

- Inventory Buffer Index: Ratio of safety stock to regular cycle stock at key facilities.

Q2: How can I experimentally validate a calculated KPI, like "Recovery Cost," in a simulated environment? A: Use historical disruption data if available. For novel scenarios, employ a Delphi method with industry experts: Present your simulation's recovery trajectory and associated calculated costs, and have experts score its realism on a Likert scale (1-5). Calibrate your model until you achieve a consensus score >4.

Q3: My KPIs for feedstock suppliers show low vulnerability, but the overall network seems fragile. What's wrong? A: You are likely measuring node-level KPIs, not system-level KPIs. Introduce a Propagation Risk KPI. This measures the percentage of nodes (facilities) whose operation degrades by more than a threshold (e.g., 20%) when a given node is disrupted. A highly connected hub may have low internal vulnerability but high propagation risk.

Table 1: Core KPIs for Biofuel Supply Chain Resilience & Vulnerability Assessment

| KPI Category | KPI Name | Formula/Description | Target for Biofuel Chains | Data Source |

|---|---|---|---|---|

| Resilience (Time) | Time to Recovery (TTR) | Time from disruption onset to return to ≥95% pre-disruption throughput. | Minimize | Simulation Logs, ERP Systems |

| Resilience (Cost) | Financial Impact (FI) | ∑(Lost Revenue + Expediting Costs + Penalties) during disruption. | Minimize | Financial Systems, Cost Models |

| Vulnerability (Structural) | Network Criticality Index (NCI) | NCI_i = (T_total - T_without_i) / T_total. | Identify hotspots (High NCI) | Network Topology Map, Simulation |

| Vulnerability (Operational) | Single Point of Failure (SPoF) Ratio | # of facilities with NCI > 0.7 / Total # of facilities. | Minimize (<0.1) | Calculated from NCI |

| Preparedness | Inventory Buffer Index | Safety Stock Level / Average Daily Demand. | Optimize (Balance cost vs. risk) | Inventory Management Systems |

Table 2: Example Experimental Results from a Simulated Algae Biofuel Refinery Disruption

| Disruption Scenario | TTR (Days) | FI (Million $) | Max NCI Identified | SPoF Ratio |

|---|---|---|---|---|

| 30-day Feedstock Supplier Failure | 38 | 4.2 | 0.85 (Primary Reactor) | 0.25 |

| 7-day Port Closure (Distribution) | 15 | 1.1 | 0.65 (Central Storage Hub) | 0.08 |

| 14-day Refinery Shutdown (Fire) | 45 | 8.5 | 0.92 (Primary Reactor) | 0.33 |

Experimental Protocols

Protocol 1: Measuring Time to Recovery (TTR) Under a Facility Disruption

- Model Definition: Map the biofuel supply chain network (Nodes: Suppliers, Refineries, Hubs. Edges: Material flow volumes).

- Baseline Establishment: Run the simulation for 365 days without disruptions. Record average daily throughput (T_avg).

- Disruption Injection: Select a target facility (e.g., a catalytic cracking unit). At a defined model day (e.g., day 100), set its operational capacity to 0%.

- Simulation & Monitoring: Continue the simulation. Log daily network throughput.

- Calculation: Identify the first day post-disruption where the 7-day rolling average throughput ≥ (0.95 * T_avg). TTR = (This day - Day 100).
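The calculation step can be sketched as a post-processing function over the logged daily throughput. The throughput series is hypothetical, and one design choice is an assumption not stated in the protocol: the rolling window is only evaluated once it lies entirely after the disruption day, so that pre-disruption days cannot mask the outage.

```python
def time_to_recovery(daily_throughput, disruption_day, t_avg,
                     window=7, threshold=0.95):
    """Days from disruption onset until the rolling mean reaches threshold * t_avg.

    daily_throughput[d] is the network throughput on model day d (0-indexed).
    Returns None if the network does not recover within the logged horizon.
    """
    target = threshold * t_avg
    first_end = disruption_day + window - 1  # window fully post-disruption
    for day in range(first_end, len(daily_throughput)):
        rolling = sum(daily_throughput[day - window + 1:day + 1]) / window
        if rolling >= target:
            return day - disruption_day
    return None


# Hypothetical log: normal operation, 10-day outage, 10 days at half rate, recovery.
log = [100.0] * 100 + [0.0] * 10 + [50.0] * 10 + [100.0] * 80
ttr = time_to_recovery(log, disruption_day=100, t_avg=100.0)
print("TTR (days):", ttr)
```

Because of the 7-day smoothing, the reported TTR lags the first day of full output by up to six days; a shorter window reacts faster but is more sensitive to single-day spikes.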

Protocol 2: Calculating the Network Criticality Index (NCI) for All Nodes

- Baseline Throughput: As in Protocol 1, step 2, determine T_total.

- Iterative Node Removal: For each facility/node `i` in the network: a. Create a copy of the baseline model. b. Set the capacity of node `i` to 0% for the entire simulation period. c. Run the simulation and record the resulting average throughput, `T_without_i`.

- Computation: For each node `i`, calculate `NCI_i = (T_total - T_without_i) / T_total`.

- Analysis: Rank nodes by NCI. Nodes with NCI > 0.7 are typically considered critical single points of failure.
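The node-removal loop can be illustrated on a deliberately simple network. In this sketch the full simulation is replaced by a hypothetical throughput model in which the network is a chain of tiers and throughput equals the smallest total tier capacity. The node names and capacities are made up, and a real study would call the simulation model in place of `throughput`.

```python
def throughput(capacity_by_tier):
    """Toy throughput model: the tightest tier limits the whole chain."""
    return min(sum(nodes.values()) for nodes in capacity_by_tier.values())


def network_criticality(capacity_by_tier):
    """NCI_i = (T_total - T_without_i) / T_total for every node."""
    t_total = throughput(capacity_by_tier)
    nci = {}
    for tier, nodes in capacity_by_tier.items():
        for name in nodes:
            # Copy the baseline, then set this node's capacity to zero.
            disabled = {t: dict(n) for t, n in capacity_by_tier.items()}
            disabled[tier][name] = 0.0
            nci[name] = (t_total - throughput(disabled)) / t_total
    return nci


# Hypothetical three-tier network (capacities in tonnes/day).
network = {
    "suppliers": {"S1": 60.0, "S2": 60.0},
    "refineries": {"R1": 100.0, "R2": 20.0},
    "hubs": {"H1": 80.0, "H2": 80.0},
}
nci = network_criticality(network)
ranked = sorted(nci.items(), key=lambda kv: kv[1], reverse=True)
spof_ratio = sum(1 for v in nci.values() if v > 0.7) / len(nci)

for name, value in ranked:
    print(f"{name}: NCI = {value:.2f}")
print(f"SPoF ratio = {spof_ratio:.2f}")
```

Here the large refinery dominates the ranking because the second refinery cannot absorb its load, which is the kind of structural hotspot the NCI is meant to expose.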

Visualizations

Title: Workflow for Calculating Network Criticality Index (NCI)

Title: Relationship Between Disruption Events and Key Resilience/Vulnerability KPIs

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Supply Chain Resilience Experimentation

| Item | Function in Research | Example/Notes |

|---|---|---|

| Agent-Based Modeling (ABM) Software | To simulate autonomous agent (supplier, facility, transporter) behaviors and interactions under disruption. | AnyLogic, NetLogo. Crucial for capturing emergent system properties. |

| Disruption Scenario Library | A curated set of plausible disruption events with defined parameters (duration, location, severity). | Includes cyber-attacks, fires, feedstock blight, port closures. Based on historical data & expert input. |

| Network Topology Dataset | Digital map of the supply chain with nodes, edges, capacities, and transit times. | Often built from corporate data, industry reports, or synthetic generation for proprietary chains. |

| Optimization Solver | To calculate optimal recovery pathways or pre-disruption mitigation investments. | Integrated within ABM or separate (e.g., Gurobi, CPLEX). Used for "what-if" analysis. |

| Data Visualization Platform | To communicate KPI results, network maps, and disruption impacts effectively. | Tableau, Power BI, or Python libraries (Plotly, Matplotlib). Essential for stakeholder buy-in. |

Modeling Disruption: Advanced Methodologies for Resilient Biofuel Network Design

Stochastic Programming and Robust Optimization Frameworks for Uncertainty

Troubleshooting Guide & FAQs

Q1: When implementing the two-stage stochastic programming model for our biofuel supply chain, the optimization solver returns an "infeasible" status for certain disruption scenarios. How do we diagnose and resolve this? A1: This typically indicates that the proposed recourse actions (e.g., rerouting feedstock) for a given high-impact disruption scenario are insufficient under the model's constraints. Follow this protocol:

- Isolate the Infeasible Scenario(s): Use the solver's IIS (Irreducible Infeasible Set) finder to identify the specific constraints and variables causing infeasibility.

- Scenario Analysis: Check the isolated scenario's parameters—likely a combination of high facility downtime, low inventory, and maximum transportation capacity constraints.

- Resolution: Implement a "soft" constraint or penalty term for unmet demand in the second-stage problem. Modify the objective function to include a high penalty cost for shortage, ensuring all scenarios have a feasible recourse action, albeit costly.

Q2: In our robust optimization (RO) model for facility location, the solution is overly conservative, leading to prohibitively high upfront costs. How can we adjust the framework to obtain a less conservative, cost-effective design? A2: The conservatism is controlled by the uncertainty set's size. Use this methodology:

- Parameterize the Uncertainty Set: If using a budget-of-uncertainty (Γ) parameter, re-solve the model for a range of Γ values (e.g., from 0, representing no uncertainty, to the maximum number of uncertain parameters).

- Performance Evaluation: Simulate the RO solution for each Γ value against a large set of random disruption scenarios in a Monte Carlo simulation.

- Trade-off Analysis: Plot the upfront investment cost against the simulated average performance (e.g., total cost or service level). Select the Γ value at the knee of the trade-off curve.

Q3: How do we validate that our stochastic programming solution is truly robust against disruptions not explicitly modeled in our scenario set? A3: Conduct an out-of-sample stability test using this experimental protocol:

- Generate Two Scenario Sets: Create a large set of N scenarios (e.g., 1000) via your disruption probability distributions. Split it into an in-sample set (e.g., 200 scenarios used to solve the model) and an out-of-sample set (the remaining 800).

- Solve and Simulate: Solve your stochastic program using the in-sample set. Fix the first-stage decisions (e.g., facility locations, baseline capacity).

- Evaluate: Simulate these fixed decisions against the out-of-sample scenarios by solving only the second-stage (recourse) problems.

- Analyze Gap: Calculate the relative gap between the in-sample expected cost and the average out-of-sample cost. A small gap (e.g., below 2-5%) indicates model stability.
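
The gap calculation in the last step can be sketched as follows. The cost figures are hypothetical, and dividing by the in-sample cost is an assumption; some studies normalise by the out-of-sample average instead.

```python
from statistics import mean

def out_of_sample_gap(in_sample_expected_cost, out_of_sample_costs):
    """Relative gap between in-sample expected cost and out-of-sample average cost."""
    out_avg = mean(out_of_sample_costs)
    return abs(out_avg - in_sample_expected_cost) / abs(in_sample_expected_cost)

# Hypothetical values: objective from the in-sample solve, and recourse-inclusive
# costs of the fixed first-stage design on four out-of-sample scenarios.
in_sample_cost = 1000.0
out_costs = [980.0, 1050.0, 1020.0, 1070.0]

gap = out_of_sample_gap(in_sample_cost, out_costs)
print(f"Out-of-sample gap: {gap:.1%}")
```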

Q4: We are integrating a risk measure (CVaR) into our stochastic biofuel model. How do we technically implement this and calibrate the risk-aversion parameter? A4: Conditional Value-at-Risk (CVaR) can be linearized and added to a two-stage stochastic linear program.

- Implementation: For a set of scenarios s with probabilities p_s and total cost C_s, introduce an auxiliary variable η (representing VaR) and non-negative variables z_s.

- Linear Constraints: Add C_s - η ≤ z_s for all s, and z_s ≥ 0.

- Objective Integration: The CVaR at confidence level α is given by η + (1/(1-α)) * Σ_s (p_s * z_s). Incorporate this as a weighted term in your overall objective (e.g., min Expected Cost + λ * CVaR).

- Calibration Protocol: Solve the model for a spectrum of λ values. For each solution, plot the efficient frontier: CVaR (risk) on one axis and expected cost on the other. The choice of λ is a strategic decision based on the risk tolerance of the supply chain stakeholder.
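
As a sanity check on the linearization, the same CVaR expression can be evaluated directly for a fixed set of scenario costs. This is a minimal sketch with hypothetical costs and probabilities. It relies on the fact that, at the optimum, z_s equals max(C_s - η, 0) and that the expression is piecewise linear and convex in η, so a minimizer can be found among the scenario costs themselves.

```python
def cvar_objective(eta, costs, probs, alpha):
    """eta + 1/(1-alpha) * sum_s p_s * max(C_s - eta, 0)."""
    excess = sum(p * max(c - eta, 0.0) for c, p in zip(costs, probs))
    return eta + excess / (1.0 - alpha)

def cvar(costs, probs, alpha):
    """Minimise the expression over eta by checking each scenario cost as a candidate."""
    return min(cvar_objective(eta, costs, probs, alpha) for eta in costs)

# Hypothetical scenario costs C_s and probabilities p_s
costs = [100.0, 120.0, 150.0, 400.0]
probs = [0.4, 0.3, 0.2, 0.1]
alpha = 0.8
lam = 0.5  # illustrative risk-aversion weight

expected_cost = sum(p * c for c, p in zip(costs, probs))
tail_risk = cvar(costs, probs, alpha)
print("Expected cost:", expected_cost)
print("CVaR:", tail_risk)
print("Risk-adjusted objective:", expected_cost + lam * tail_risk)
```

Here the worst 20% of probability mass is split between the 400 and 150 scenarios, so the CVaR is their average, 275.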

Table 1: Comparison of Optimization Frameworks for Disruption Management

| Framework | Core Philosophy | Key Parameter(s) | Typical Solution Character | Computational Burden | Best for Disruption Type |

|---|---|---|---|---|---|

| Two-Stage Stochastic Programming | Optimize expected performance over a discrete set of scenarios. | Probability of each disruption scenario. | Cost-effective on average; may fail in extreme cases. | High (grows with scenarios). | Frequent, low-to-medium impact disruptions. |

| Robust Optimization (Budget-of-Uncertainty) | Optimize for the worst-case within a bounded uncertainty set. | Budget of uncertainty (Γ). | Overly conservative if Γ is max; tunable. | Moderate (often remains a MIP). | Rare, high-impact disruptions with limited data. |

| Risk-Averse Stochastic (e.g., CVaR) | Optimize expected performance while controlling tail-risk. | Risk aversion parameter (λ), confidence level (α). | Balances average cost and extreme event performance. | High (adds variables/constraints). | Managing financial or service-level catastrophes. |

Table 2: Sample Biofuel Facility Disruption Data for Scenario Generation

| Disruption Parameter | Baseline (No Disruption) Value | Disrupted State Range | Estimated Probability (Annual) | Data Source for Calibration |

|---|---|---|---|---|

| Feedstock Pre-processing Facility Downtime | 0 days | 7 - 45 days | 0.05 (1 in 20 years) | Historical maintenance logs, FEMA hazard models. |

| Biorefinery Capacity Loss | 100% | 40% - 70% output | 0.12 | Industry reliability databases. |

| Transport Link Failure (Key Route) | 0 days | 3 - 14 days | 0.08 | DOT closure records, weather event frequency. |

| Feedstock Yield Shock (Regional) | 100% | 60% - 90% of forecast | 0.15 | Agrometeorological models, historical drought data. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Biofuel SCND under Uncertainty

| Tool/Software | Primary Function in Research | Key Application in Thesis Context |

|---|---|---|

| GAMS/AMPL | Algebraic modeling language for mathematical optimization. | Formulating and solving large-scale stochastic MIP models for supply chain network design (SCND). |

| Python (Pyomo, Pandas) | Open-source modeling and data analysis. | Prototyping models, automating scenario generation, and post-processing solution data. |

| CPLEX/Gurobi | Commercial solver for linear, mixed-integer, and quadratic programs. | Finding optimal solutions to the deterministic equivalent of stochastic and robust problems. |

| R (ggplot2, tidyverse) | Statistical computing and graphics. | Analyzing disruption data distributions and visualizing trade-off curves (e.g., cost vs. risk). |

| Graphviz | Graph visualization software. | Mapping optimal supply chain networks and material flows under different scenarios (see below). |

Experimental Workflow Diagrams

Title: Uncertainty Modeling Research Workflow

Title: Two-Stage Stochastic Program Structure

Technical Support Center: Troubleshooting & FAQs

This support center addresses common technical challenges encountered when applying Multi-Agent Simulation (MAS) and Discrete Event Simulation (DES) for scenario analysis within biofuel supply chain resilience research.

FAQ 1: During a DES model run of our biomass preprocessing facility, the simulation "hangs" or shows no activity for long periods. What is the likely cause?

- Answer: This is typically a "deadlock" issue. In a DES, entities (e.g., truckloads of biomass) require multiple resources (e.g., unloading dock, screener, dryer) simultaneously or in sequence. If the logic incorrectly queues entities holding one resource while waiting for another that is held by a different queued entity, all processes stop.

- Troubleshooting Guide:

- Enable Entity Tracing: Activate step-by-step event tracing for a small number of entities to follow their path.

- Audit Resource Logic: Check the "Seize" and "Release" logic blocks for shared resources. Ensure every "Seize" has a corresponding "Release" in all possible process branches (including failure routes).

- Implement Timeouts: Introduce a maximum wait time for resources. If exceeded, the entity releases its held resources and moves to an exception handling sub-process (e.g., rerouted to a secondary facility).

- Simplify & Test: Start with a minimal model of only the suspected process, confirm it works, and then gradually add complexity.

FAQ 2: How do I validate that my Multi-Agent model of supplier and distributor behavior realistically represents decision-making under disruption?

- Answer: Validation requires a multi-faceted approach comparing model output to real-world or theoretical benchmarks.

- Troubleshooting Guide:

- Face Validation: Present the agent decision rules (e.g., IF inventory < X AND supplier_disrupted THEN switch_to_alt_supplier) and simulation animations to domain experts (supply chain managers).

- Historical Data Comparison: If partial historical disruption data exists, compare key output metrics (e.g., inventory levels, recovery time) from your model against the real data.

- Extreme Condition Testing: Run scenarios where parameters are set to extreme values (e.g., 100% disruption probability). The model's output should align with logical expectations (e.g., complete system failure or full activation of contingency plans).

- Sensitivity Analysis: Systematically vary key behavioral parameters (e.g., risk aversion threshold) and ensure the output changes in a plausible and monotonic manner.

FAQ 3: When integrating a DES (facility operations) with an MAS (strategic actors), what is the most efficient way to handle time synchronization?

- Answer: Use a controlled hybrid approach where one paradigm drives the master clock.

- Troubleshooting Guide:

- DES as Time Driver: Most effective when analyzing operational logistics. Let the DES event calendar advance time. The MAS agents are invoked at predefined DES schedule points (e.g., end of each week) or triggered by specific DES events (e.g., "inventory_below_threshold").

- MAS as Time Driver: More suitable for long-term strategic analysis. Let agent decisions and interactions advance the simulation clock in discrete time steps (e.g., 1 day). DES processes within facilities are approximated using aggregate delay functions or embedded queuing models calculated per time step.

- Implementation: Create a clear "Time Synchronization Interface" module. Document whether time is event-driven (DES) or step-driven (MAS) and ensure all state updates are synchronized to prevent causality errors.

FAQ 4: My scenario analysis results show high volatility across replications, making it difficult to draw conclusions. How can I improve output stability?

- Answer: High volatility often stems from an inadequate sample size (too few replications) or improperly modeled stochastic elements.

- Troubleshooting Guide:

- Determine Required Replications: Use a sequential procedure. Run an initial set of n replications (e.g., 10). Calculate the mean and confidence interval for your Key Performance Indicator (KPI). Continue adding replications until the half-width of the confidence interval is less than a target precision (e.g., 1% of the mean).

- Review Stochastic Inputs: Ensure probability distributions (for disruption duration, biomass yield, transport time) are fitted to empirical data, not guesses. Replace poorly defined uniform distributions with more appropriate triangular or beta distributions.

- Common Random Numbers (CRN): When comparing scenarios (e.g., Policy A vs. Policy B), use identical streams of random numbers across scenarios. This reduces variance in the difference between scenarios, making it easier to detect a true effect.
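
The sequential replication procedure can be sketched as below. `run_replication` is a hypothetical stand-in for one simulation run, and the confidence interval uses a normal quantile instead of Student's t to stay within the standard library. That is an approximation and is slightly optimistic for small replication counts.

```python
import random
from statistics import NormalDist, mean, stdev

def run_replication(seed):
    """Hypothetical stand-in for one simulation run; returns a cost-per-liter KPI."""
    rng = random.Random(seed)
    return rng.gauss(0.80, 0.05)

def replicate_until_precise(rel_precision=0.01, n_initial=10, n_max=10_000, confidence=0.95):
    """Add replications until the CI half-width is within rel_precision of the mean."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # normal approximation to the t quantile
    kpis = [run_replication(seed) for seed in range(n_initial)]
    while True:
        avg = mean(kpis)
        half_width = z * stdev(kpis) / len(kpis) ** 0.5
        if half_width <= rel_precision * abs(avg) or len(kpis) >= n_max:
            return len(kpis), avg, half_width
        kpis.append(run_replication(len(kpis)))  # seed = replication index

n, avg, half_width = replicate_until_precise()
print(f"{n} replications, mean KPI {avg:.3f} +/- {half_width:.4f}")
```

Because each replication is seeded by its index, a second policy run with the same seeds would share random streams with the first. This is the basic mechanism behind common random numbers, provided both policies consume random draws in the same order.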

Data Presentation: Key Performance Indicators (KPIs) for Scenario Comparison

Table 1: Quantitative Output from Biofuel Supply Chain Disruption Scenarios

KPI: Total System Cost per Liter of Biofuel Produced (in $)

| Scenario Description | DES Model (Operational Cost) | MAS Model (Tactical/Strategic Cost) | Integrated MAS-DES Model (Total Cost) | 95% Confidence Interval (+/-) |

|---|---|---|---|---|

| Baseline (No Disruptions) | 0.42 | 0.10 | 0.52 | 0.02 |

| Single Feedstock Facility Disruption (30 days) | 0.58 | 0.22 | 0.80 | 0.05 |

| Multi-Facility Correlated Disruption | 0.71 | 0.35 | 1.06 | 0.08 |

| With Contingency Inventory Policy | 0.49 | 0.18 | 0.67 | 0.04 |

Table 2: Model Configuration & Computational Performance

Platform: AnyLogic 8.8, Intel i7-12700H, 32GB RAM

| Model Type | # of Agents / Entities | # of Stochastic Inputs | Avg. Runtime (10 replications) | Output Variance (Std. Dev. of KPI) |

|---|---|---|---|---|

| DES Only | 15,000 entities | 8 | 4 min 22 sec | 0.015 |

| MAS Only | 45 agents | 12 | 1 min 15 sec | 0.041 |

| Integrated | 45 agents + ~5,000 entities | 20 | 18 min 50 sec | 0.063 |

Experimental Protocols

Protocol 1: Calibrating Agent Behavioral Parameters (Risk Aversion) Objective: To empirically set the risk aversion threshold for supplier agents in the MAS. Methodology:

- Literature Review: Extract stated risk tolerance levels from surveys of agricultural/industrial suppliers. Convert to an initial threshold range (e.g., 20-40% inventory buffer).

- Historical Data Mining: Analyze past disruption events from industry reports. Correlate the time at which a known supplier switched to a backup logistics provider with their recorded inventory levels at disruption onset.

- Expert Elicitation: Conduct structured interviews with 5-7 supply chain managers using the following protocol:

- Present a series of hypothetical disruption scenarios with varying severities.

- Ask: "At what remaining inventory level (as % of normal) would you activate your contingency contract?"

- Record responses and calculate the median threshold for each scenario severity.

- Model Calibration: Run the MAS with the threshold as a variable. Use optimization (e.g., genetic algorithm) to find the threshold value that minimizes the difference between model-predicted contingency activation timing and historically observed timing.

Protocol 2: Simulating a Cascading Facility Disruption Objective: To model the propagation of a disruption from a primary processing plant to downstream biorefineries. Methodology:

- DES Setup: Model the primary plant with detailed failure, repair, and queue logic.

- MAS Setup: Model downstream biorefinery agents with inventory monitoring and alternative sourcing logic.

- Trigger Integration:

- In the DES, at the moment the primary plant's "failure" event occurs, send a message to the MAS: {disruption_start: Plant_A, estimated_duration: triangular(14,21,28)}.

- The affected biorefinery agents receive this message. They check their current inventory and consumption rate against their internal risk threshold.

- If triggered, an agent initiates its "Alternative Sourcing" protocol, which involves sending request messages to other supplier agents and incurring a stochastic delay and cost premium.

- Data Collection: Record the time lag between the initial disruption and each agent's response, the resulting shortage volumes, and the cost inflation across the network.
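
The trigger logic in Protocol 2 might look like the sketch below. The `BiorefineryAgent` class, its field names, and the specific rule (compare days of inventory cover to the estimated outage) are illustrative assumptions, since the protocol does not fix a formula. It also assumes the message's triangular(14,21,28) means (minimum, mode, maximum) in days.

```python
import random
from dataclasses import dataclass

@dataclass
class BiorefineryAgent:
    name: str
    inventory_tons: float
    consumption_tons_per_day: float
    risk_threshold: float  # hypothetical: required ratio of days of cover to expected outage

    def on_disruption(self, message):
        """Return True if the agent should start its alternative sourcing protocol."""
        days_of_cover = self.inventory_tons / self.consumption_tons_per_day
        coverage_ratio = days_of_cover / message["estimated_duration"]
        return coverage_ratio < self.risk_threshold

rng = random.Random(42)
# random.triangular takes (low, high, mode), so (min=14, mode=21, max=28) becomes (14, 28, 21)
message = {
    "disruption_start": "Plant_A",
    "estimated_duration": rng.triangular(14, 28, 21),
}

agents = [
    BiorefineryAgent("B1", inventory_tons=900.0, consumption_tons_per_day=30.0, risk_threshold=1.0),
    BiorefineryAgent("B2", inventory_tons=200.0, consumption_tons_per_day=25.0, risk_threshold=1.0),
]
triggered = [a.name for a in agents if a.on_disruption(message)]
print("Agents activating alternative sourcing:", triggered)
```

With 30 days of cover, B1 outlasts any outage in the 14 to 28 day range, while B2's 8 days never do, so only B2 triggers regardless of the sampled duration.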

Mandatory Visualizations

Title: MAS-DES Research Workflow for Biofuel Supply Chain

Title: Integrated MAS-DES Disruption Response Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Modeling Tools for MAS-DES in Supply Chain Research

| Item Name (Software/Library) | Function & Explanation | Typical Use Case in Biofuel SC Research |

|---|---|---|

| AnyLogic Professional | A multi-method simulation platform supporting DES, MAS, and System Dynamics in a single integrated environment. | Building the integrated hybrid model where DES handles plant logistics and MAS handles supplier agents. |

| Simio | An object-oriented simulation software focused on DES with emerging agent-based capabilities. | Detailed modeling of complex material handling and transportation networks within facilities. |

| Repast Simphony / Mesa | Open-source platforms specifically designed for developing agent-based simulation models. | Prototyping and testing complex agent decision algorithms before integration into a hybrid model. |

| R / Python (SimPy, SALib) | Statistical programming languages with simulation (SimPy) and sensitivity analysis (SALib) libraries. | Pre-processing input data, running automated sensitivity analyses, and post-processing output data. |

| OptQuest (Within AnyLogic) | An optimization engine that uses metaheuristics to find the best input parameters for a simulation model. | Automating the search for optimal inventory policy parameters (e.g., safety stock levels). |

| MySQL / PostgreSQL | Relational database management systems. | Storing and managing large volumes of input parameters and output results from thousands of simulation runs. |

Integrating Resilience Analytics and Graph Theory to Identify Critical Nodes

Troubleshooting Guides & FAQs

Q1: During network construction, my adjacency matrix yields a disconnected graph. How do I handle this for resilience analytics? A: A disconnected graph invalidates many path-based centrality metrics. First, check your connection logic (e.g., the threshold for creating edges may be too high). If disconnection is inherent (e.g., isolated facilities), you have two options: 1) analyze the largest connected component (LCC) separately, noting this limitation, or 2) use metrics that do not require path connectivity, such as Degree Centrality, or adopt a multilayer network framework that connects components via a different relationship (e.g., shared suppliers). For biofuel supply chains, ensure all transport routes between pre-processing, conversion, and distribution nodes are accurately captured.

Q2: My Betweenness Centrality calculations identify too many "critical" nodes, diluting focus. How can I refine the results? A: High Betweenness can indicate critical choke points. To refine:

- Apply thresholds: Calculate the mean and standard deviation of Betweenness values. Flag nodes exceeding (mean + 2*SD) as highly critical.

- Use weighted edges: Replace binary connections (1/0) with edge weights reflecting capacity, distance, or cost. Recalculate weighted Betweenness. This often prioritizes high-capacity, low-redundancy links in your biofuel network.

- Perform cascading failure simulation: Sequentially remove top candidates and recalculate network efficiency. The node whose removal causes the steepest drop in global efficiency is paramount.
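
The thresholding rule from the first bullet can be sketched with the standard library alone. The betweenness scores are hypothetical, and using the population standard deviation is an assumption; the sample standard deviation gives a slightly higher cutoff.

```python
from statistics import mean, pstdev

def flag_critical(betweenness, n_sd=2.0):
    """Return nodes whose betweenness exceeds mean + n_sd * standard deviation."""
    values = list(betweenness.values())
    cutoff = mean(values) + n_sd * pstdev(values)
    return sorted(node for node, b in betweenness.items() if b > cutoff)

# Hypothetical normalised betweenness scores for a ten-node network
scores = {
    "F1": 0.00, "F2": 0.00, "P1": 0.05, "P2": 0.04, "B1": 0.60,
    "S1": 0.06, "D1": 0.00, "D2": 0.00, "D3": 0.01, "D4": 0.02,
}
critical = flag_critical(scores)
print("Highly critical nodes:", critical)
```

On very small networks no node can exceed mean + 2*SD by construction, so the rule is only informative once the graph has more than a handful of nodes.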

Q3: When simulating facility disruptions, how do I choose between random failure and targeted attack scenarios? A: Your choice must align with your thesis risk model.

- Random Failure: Use this to model widespread, non-discriminatory events like regional storms or pandemics. Nodes are removed uniformly at random. This tests the network's inherent redundancy.

- Targeted Attack: Use this to model strategic risks like supplier bankruptcy or targeted sabotage. Nodes are removed in descending order of a centrality metric (e.g., Degree, Betweenness). This identifies the network's vulnerability to intelligent threats.

For a comprehensive analysis in biofuel supply chain research, run both and compare the rate of decline in network performance (e.g., efficiency, throughput).

Q4: The "resilience loss" metric after node removal seems abstract. How can I translate it into actionable supply chain insights? A: Quantify resilience loss (RL) using a concrete metric like Normalized Delivery Shortfall (NDS). Follow this protocol:

- Define network throughput T_initial under normal operation.

- Upon node/edge removal, use a maximum flow algorithm to compute new throughput T_disrupted.

- Calculate NDS = (T_initial - T_disrupted) / T_initial.

- Map high-NDS scenarios to specific biofuel supply chain KPIs: increased cost per liter, delayed delivery days, or inventory shortage probability.
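
The NDS protocol can be illustrated on a toy network. The sketch below uses a plain Edmonds-Karp maximum flow written with the standard library. The network, its capacities (tons/day), and the artificial "SRC" and "SNK" super-nodes are hypothetical.

```python
from collections import deque

def max_flow(capacity, source, sink):
    """Edmonds-Karp maximum flow on a dict-of-dicts capacity graph."""
    residual = {u: dict(nbrs) for u, nbrs in capacity.items()}
    for u, nbrs in capacity.items():
        for v in nbrs:
            residual.setdefault(v, {}).setdefault(u, 0.0)  # reverse edges
    residual.setdefault(source, {})
    residual.setdefault(sink, {})
    total = 0.0
    while True:
        # Breadth-first search for the shortest augmenting path
        parent = {source: None}
        queue = deque([source])
        while queue and sink not in parent:
            u = queue.popleft()
            for v, cap in residual[u].items():
                if cap > 0 and v not in parent:
                    parent[v] = u
                    queue.append(v)
        if sink not in parent:
            return total
        # Find the bottleneck along the path, then push that much flow
        bottleneck, v = float("inf"), sink
        while parent[v] is not None:
            bottleneck = min(bottleneck, residual[parent[v]][v])
            v = parent[v]
        v = sink
        while parent[v] is not None:
            u = parent[v]
            residual[u][v] -= bottleneck
            residual[v][u] += bottleneck
            v = u
        total += bottleneck

def remove_node(capacity, node):
    """Copy of the graph without the given node and its incident edges."""
    return {u: {v: c for v, c in nbrs.items() if v != node}
            for u, nbrs in capacity.items() if u != node}

# Hypothetical network: two pre-processing plants feeding two biorefineries
network = {
    "SRC": {"P1": 100.0, "P2": 60.0},
    "P1": {"B1": 80.0, "B2": 30.0},
    "P2": {"B1": 60.0},
    "B1": {"SNK": 120.0},
    "B2": {"SNK": 40.0},
}
t_initial = max_flow(network, "SRC", "SNK")
t_disrupted = max_flow(remove_node(network, "P2"), "SRC", "SNK")
nds = (t_initial - t_disrupted) / t_initial
print(f"T_initial={t_initial}, T_disrupted={t_disrupted}, NDS={nds:.2f}")
```

In this toy case, losing P2 cuts deliverable throughput from 150 to 100 tons/day, an NDS of about 0.33.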

Q5: My graph analysis software (e.g., NetworkX, Gephi) struggles with large, dense biofuel supply networks. Any optimization tips? A: For networks with >10,000 nodes/edges:

- Sparsify: Apply a meaningful weight threshold to remove insignificant connections.

- Use Approximate Metrics: For Betweenness, use sampling (e.g., the k parameter in NetworkX's betweenness_centrality to estimate using a subset of source nodes).

- Leverage HPC/Cloud: Use GPU-accelerated graph libraries (e.g., cuGraph) or distribute computations across clusters for centrality calculations and disruption simulations.

Experimental Protocols

Protocol 1: Constructing a Biofuel Supply Chain Network for Critical Node Analysis Objective: To model the supply chain as a directed, weighted graph for resilience analytics. Steps:

- Node Identification: Enumerate all entities: feedstock farms (F), pre-processing facilities (P), biorefineries (B), storage hubs (S), distribution centers (D).

- Edge Establishment: For each material flow from entity A to B, create a directed edge (A -> B).

- Edge Weight Assignment: Assign two weights: i) capacity (tons/day), ii) alternatives (integer count of other nodes providing similar flow to the target).

- Graph Representation: Store as an adjacency list or matrix. Use a dictionary of dictionaries for flexibility with weighted attributes.

- Validation: Cross-verify with stakeholders to ensure all major flows for a target biofuel (e.g., cellulosic ethanol) are captured.
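
A dictionary-of-dictionaries representation for Protocol 1 might look like the sketch below. The node names and capacities are hypothetical. The alternatives weight is computed as the number of other nodes with an edge into the same target, which assumes all inbound flows to a node are interchangeable.

```python
from collections import defaultdict

# Hypothetical material flows: (source, target, capacity in tons/day)
flows = [
    ("F1", "P1", 120.0),
    ("F2", "P1", 80.0),
    ("P1", "B1", 150.0),
    ("B1", "S1", 140.0),
    ("S1", "D1", 140.0),
]

# graph[source][target] -> dictionary of edge attributes
graph = defaultdict(dict)
for src, dst, cap in flows:
    graph[src][dst] = {"capacity": cap}

# Count how many suppliers feed each target node
suppliers_per_target = defaultdict(int)
for _, dst, _ in flows:
    suppliers_per_target[dst] += 1

# alternatives = other nodes that also supply the same target
for src, nbrs in graph.items():
    for dst, attrs in nbrs.items():
        attrs["alternatives"] = suppliers_per_target[dst] - 1

print(graph["F1"]["P1"])
print(graph["P1"]["B1"])
```

An edge with zero alternatives, such as P1 -> B1 here, is an immediate candidate for closer inspection in the criticality analysis.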

Protocol 2: Simulating a Targeted Attack on Critical Nodes Objective: To stress-test the network and rank nodes by criticality. Steps:

- Calculate Initial State: Compute the network's global efficiency (G_e) or total weighted throughput (T).

- Node Ranking: Calculate Betweenness Centrality for all nodes. Rank nodes in descending order.

- Iterative Removal: Remove the top-ranked node. Recalculate G_e or T for the remaining network.

- Recalculation: Recalculate centralities for the remaining network (this simulates dynamic rerouting).

- Repetition: Repeat steps 3-4 for the next top-ranked node in the original ranking (static attack) or the recalculated ranking (dynamic attack) for k iterations.

- Output: Plot % of network performance (G_e or T) vs. % of nodes removed. The area under this curve is a quantitative resilience measure.

Table 1: Comparison of Graph Centrality Metrics for Critical Node Identification

| Metric | Formula (Simplified) | Interpretation in Biofuel SC | Pros | Cons |

|---|---|---|---|---|

| Degree | Deg(v) = # of connections | Number of direct neighbors (suppliers/customers) | Fast to compute. Indicates local load. | Ignores broader network role. |

| Betweenness | Bet(v) = Σ (σ_st(v)/σ_st) | # of shortest paths passing through node. Identifies bridges/chokepoints. | Captures control over flow. | Computationally heavy for large nets. |

| Eigenvector | x_v = (1/λ) Σ_{u∈N(v)} x_u | Influence of a node based on its connected neighbors. | Identifies well-connected hubs. | May not reflect physical flow. |

| Closeness | Clo(v) = 1 / Σ d(v,t) | Average distance to all other nodes. Speed of propagation. | Good for spread time. | Sensitive to graph disconnection. |

Table 2: Simulated Network Performance Under Disruption Scenarios

| Scenario | Nodes Removed | % Drop in Global Efficiency | % Drop in Throughput | Likely Biofuel Impact |

|---|---|---|---|---|

| Random Failure | 10% | 12.4 ± 3.1% | 15.2 ± 4.7% | Moderate regional delays |

| Targeted (Betweenness) | 5% | 61.8% | 73.5% | Major system-wide shortage |

| Targeted (Degree) | 5% | 45.2% | 58.1% | Severe output reduction |

| Edge Capacity Attack* | 10% | 28.7% | 41.3% | Increased logistics cost |

*Attack on top 10% of edges by flow volume.

Visualizations

Title: Biofuel Supply Chain as a Directed Graph

Title: Critical Node Identification Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Resilience/Graph Analysis |

|---|---|

| NetworkX (Python) | Primary library for graph creation, manipulation, and calculation of centrality metrics. Essential for prototyping. |

| igraph (R/Python) | High-performance library for fast analysis of large networks, suitable for supply chains with thousands of entities. |

| Gephi | Interactive visualization platform. Used for exploratory analysis and generating publication-quality network diagrams. |

| cuGraph | GPU-accelerated graph analytics library. Dramatically speeds up centrality computations on very large supply chain networks. |

| Linear Programming Solver (e.g., Gurobi, PuLP) | Used to model and compute maximum network flow after disruptions, translating graph theory results into operational metrics. |

| Geographic Information System (GIS) Data | Provides real-world spatial coordinates for facilities and routes, enabling accurate distance-based edge weighting. |

Technical Support Center

This support center provides troubleshooting guidance for researchers implementing data-driven monitoring systems within biofuel supply chain experiments, specifically those studying facility disruption risks.

FAQs & Troubleshooting Guides

Q1: Our IoT sensor network monitoring feedstock storage silos is reporting inconsistent moisture readings. What are the primary troubleshooting steps?

A: Inconsistent moisture data, critical for predicting microbial growth and spoilage risk, typically stems from three areas:

- Sensor Calibration Drift: Harsh industrial environments cause drift. Recalibrate sensors against a standard weekly.

- Network Packet Loss: Check signal strength at the sensor gateway. Implement a lightweight MQTT protocol with QoS level 1 to ensure message delivery.

- Power Fluctuations: Install an uninterruptible power supply (UPS) for gateway modules. Use the diagnostic table below to isolate the issue.

Table: Diagnostic Steps for Erratic IoT Sensor Data

| Symptom | Possible Cause | Diagnostic Action | Corrective Protocol |

|---|---|---|---|

| Sporadic NULL values | Network latency/packet loss | Ping sensor node from gateway; check logs for timeouts. | Optimize antenna placement; switch to a mesh topology or a long-range protocol (e.g., LoRaWAN). |

| Readings stuck at a constant value | Sensor fault or firmware hang | Send a manual read command via the device management platform. | Power-cycle the sensor node; update device firmware. |

| Gradual reading bias over time | Calibration drift | Compare sensor reading with a handheld calibrated hygrometer on a physical sample. | Execute on-site recalibration procedure per manufacturer specs. |

| Synchronization errors in timestamps | Gateway clock drift | Check gateway system time against NTP server. | Configure gateway to auto-sync with time.google.com daily. |

Q2: The real-time AI model for predicting pretreatment reactor failure has high accuracy in training but poor performance (low precision) in live deployment. How do we diagnose this?

A: This indicates model drift due to a mismatch between training and live data distributions.

- Data Divergence Check: Use a Kolmogorov-Smirnov test to compare distributions of key live input variables (e.g., feedstock viscosity, inlet temperature) against the training dataset.

- Feature Importance Audit: Re-run feature importance (e.g., using SHAP values) on live data. A shift may indicate a new failure precursor not present historically.

- Protocol for Retraining: Establish a continuous evaluation pipeline. When model precision drops below 85% for three consecutive days, trigger the following retraining protocol:

- Step 1: Collect the most recent 3 months of operational data.

- Step 2: Manually label failure events with help from process engineers.

- Step 3: Retrain the model (e.g., XGBoost classifier) on the new data, holding out the latest 2 weeks for testing.

- Step 4: Deploy the new model as a shadow model to run in parallel for 1 week before full cutover.
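
The divergence check and the retraining trigger can both be sketched without external libraries. The function names and sample values are hypothetical. The drift test uses the large-sample critical value for the two-sample Kolmogorov-Smirnov statistic, which is an approximation for small samples.

```python
from bisect import bisect_right
from math import log, sqrt

def ks_statistic(train, live):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(train), sorted(live)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )

def distributions_differ(train, live, alpha=0.05):
    """Compare the statistic with c(alpha) * sqrt((n + m) / (n * m))."""
    n, m = len(train), len(live)
    critical = sqrt(-log(alpha / 2) / 2) * sqrt((n + m) / (n * m))
    return ks_statistic(train, live) > critical

def should_retrain(daily_precision, floor=0.85, days=3):
    """True when precision has been below the floor on each of the last `days` days."""
    recent = daily_precision[-days:]
    return len(recent) == days and all(p < floor for p in recent)

# Hypothetical inlet temperatures (deg C) from the training and live periods
train_temps = [150.0 + i for i in range(10)]
live_temps = [165.0 + i for i in range(10)]

print("Input drift detected:", distributions_differ(train_temps, live_temps))
print("Trigger retraining:", should_retrain([0.91, 0.84, 0.83, 0.80]))
```

Running the drift test per input variable helps distinguish a shifted feedstock property from a genuine change in failure behaviour before committing to the relabelling effort.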

Q3: The digital twin of our biorefinery logistics hub is causing latency in the real-time dashboard, delaying disruption alerts. How can we optimize performance?

A: Latency is often due to excessive fidelity in non-critical areas. Optimize using the following methodology:

- Workflow Analysis: Profile the digital twin's update cycle. The diagram below outlines the optimized data flow to reduce latency.

Title: Optimized Data Flow for Low-Latency Digital Twin

- Implementation Protocol: Implement data filtering at the edge. Use simple rules (e.g., "send data only if value changes >0.5%") on IoT gateways to reduce cloud payload. For the twin itself, simplify non-essential unit operations to reduced-order models.
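
The edge filtering rule can be sketched as a simple deadband filter. It assumes "change" is measured against the last transmitted value, not the previous raw reading, so slow drifts still get reported once they accumulate. The readings are hypothetical.

```python
def deadband_filter(readings, rel_change=0.005):
    """Yield only readings that differ from the last sent value by more than rel_change."""
    last_sent = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) > rel_change * abs(last_sent):
            last_sent = value
            yield value

# Hypothetical sensor stream from an IoT gateway
readings = [100.0, 100.2, 100.4, 100.6, 100.7, 99.9]
sent = list(deadband_filter(readings))
print(f"Transmitted {len(sent)} of {len(readings)} readings: {sent}")
```

A relative threshold breaks down when readings sit near zero, where every change is sent; an absolute floor may be worth adding for such signals.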

Q4: When simulating a port disruption, our supply chain optimization model fails to converge on a feasible rerouting plan within a practical time. What solver adjustments are recommended?

A: This is a large-scale Mixed-Integer Linear Programming (MILP) problem. Use the following experimental solver configuration protocol:

- Step 1: Initial Relaxation. Solve the LP relaxation to obtain a lower bound and identify "hard" constraints.

- Step 2: Solver Parameters. Set MIPGap = 0.05 (5%) to accept a near-optimal solution faster than seeking absolute optimality.

- Step 3: Heuristic Start. Use a greedy algorithm (e.g., nearest available facility) to generate an initial feasible solution (Start variable) for the solver.

- Step 4: Parallel Computing. Enable the solver's parallel processing feature (e.g., Threads = 4) to explore multiple branch-and-bound nodes simultaneously.

Table: Experimental Solver Configuration for Disruption Rerouting

| Solver Parameter | Recommended Value | Function in Experiment |

|---|---|---|

| TimeLimit | 300 seconds | Ensures the simulation provides a timely decision. |

| MIPFocus | 1 | Directs solver effort to finding feasible solutions quickly. |

| Heuristics | 0.05 | Fraction of runtime spent on MIP heuristics. In Gurobi, 0.05 is the default, so raise it to increase heuristic effort. |

| PreSolve | 2 | Aggressively simplifies the problem before solving. |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Digital Research Tools for Biofuel SC Disruption Experiments

| Tool/Reagent | Function in Experiment | Example/Note |

|---|---|---|

| IoT Development Kit | Prototyping custom sensor nodes for unique metrics (e.g., feedstock acidity). | Raspberry Pi with HATs for sensors; Arduino MKR boards. |

| Time-Series Database | Ingesting and storing high-volume, timestamped sensor data for analysis. | InfluxDB, TimescaleDB. |

| Simulation Software | Creating discrete-event and agent-based models of supply chain logistics. | AnyLogic, FlexSim. |

| Optimization Solver | Solving mathematical programming models for network redesign under disruption. | Gurobi, IBM CPLEX (available via academic licenses). |

| Containerization Platform | Ensuring reproducibility of AI/analytics models across research environments. | Docker, Kubernetes for orchestration. |

| Visualization Library | Building custom dashboards to communicate real-time insights and predictions. | Plotly Dash, Streamlit. |

Q5: Our anomaly detection system for fermentation batch processes is generating too many false positive alerts, leading to alarm fatigue. How can we improve its specificity?

A: This requires refining the anomaly detection model's threshold and features. Follow this experimental protocol:

- Label Historical Data: Manually review historical batches and label true positive anomalies (e.g., contamination, stalled reaction).

- Feature Engineering: Add context-aware features beyond sensor readings, such as batch_age or feedstock_batch_id, to help the model discern between novel but normal states and true faults.

- Threshold Tuning: Use the Precision-Recall curve on a validation set to select an anomaly score threshold that meets a minimum precision of 90%. The workflow is shown below.

Title: Anomaly Detection Model Tuning Workflow

- Implementation: Implement a simple rule-based filter to suppress anomalies that auto-correct within 5 minutes, as these are likely sensor artifacts.
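
The threshold tuning and the transient-suppression rule can be sketched as follows. The scores, labels, and episode times are hypothetical. Choosing the lowest threshold that still meets the precision target is an assumption intended to keep recall as high as possible.

```python
def pick_threshold(scores, labels, min_precision=0.90):
    """Lowest score threshold whose validation precision meets min_precision."""
    best = None
    for threshold in sorted(set(scores), reverse=True):
        flagged = [y for s, y in zip(scores, labels) if s >= threshold]
        precision = sum(flagged) / len(flagged)
        if precision >= min_precision:
            best = threshold  # a lower qualifying threshold flags more true anomalies
    return best

def suppress_transients(episodes, min_duration_s=300):
    """Drop anomaly episodes (start_s, end_s) that cleared within min_duration_s."""
    return [(start, end) for start, end in episodes if end - start > min_duration_s]

# Hypothetical validation set: anomaly scores and labels (1 = true anomaly)
scores = [0.95, 0.90, 0.85, 0.80, 0.70, 0.60, 0.40, 0.30]
labels = [1, 1, 1, 1, 1, 0, 1, 0]
threshold = pick_threshold(scores, labels)

# Hypothetical episodes in seconds since batch start
episodes = [(0, 120), (600, 1500)]
alerts = suppress_transients(episodes)
print("Chosen threshold:", threshold)
print("Alerts after suppression:", alerts)
```

`pick_threshold` returns `None` when no threshold reaches the target precision, which signals that the features need more work before threshold tuning can help.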

Building Robustness: Practical Strategies for Mitigating Disruption in Biofuel Operations

Strategic Facility Fortification and Proactive Maintenance Protocols

Technical Support Center: Biofuel Pilot Plant & Analytical Laboratory

Troubleshooting Guides & FAQs

Section 1: Fermentation & Bioreactor Operations

Q1: Our fermentation run is showing a sudden, sustained drop in bioethanol yield after 36 hours. What are the primary diagnostic steps?

- A: This indicates a potential facility disruption in nutrient supply or contamination. Follow this protocol:

- Immediate In-line Sensor Check: Verify dissolved oxygen (DO), pH, and temperature probe calibrations against offline samples.

- Contamination Assay: Aseptically sample and perform:

- Gram staining for bacterial contamination.

- Plate on non-selective (LB Agar) and selective (containing cycloheximide) media to differentiate bacterial vs. fungal contamination.

- Nutrient Analysis: Use HPLC to quantify residual glucose and key inhibitors (e.g., furfural, HMF) in the broth. Compare to baseline.

Q2: The pilot-scale bioreactor's heat exchanger is failing to maintain optimal temperature, risking a batch loss. What is the emergency response?

- A: This is a critical facility fortification failure.

- Immediate Mitigation: Divert process steam or pre-warm feedstock via backup inline heater to temporarily stabilize temperature.

- Diagnostic: Check for fouling in the exchanger plates (common with lignocellulosic hydrolysates) and verify PID controller loop functionality.

- Proactive Protocol: Implement a monthly CIP (Clean-in-Place) cycle with 1M NaOH to prevent fouling, as per the schedule below.

Section 2: Downstream Processing & Analytics

Q3: Post-distillation, our biofuel sample shows inconsistent purity readings via GC-MS. How do we isolate the issue?

- A: Inconsistency points to instrument or sample preparation disruption.

- Column Integrity Test: Run a standard n-alkane mixture (C8-C20). Compare retention indices and peak symmetry to the historical benchmark.

- Sample Preparation Audit: Ensure the internal standard (e.g., 1-Butanol) is added at the exact same concentration and stage for every sample.

- Facility Environment Check: Lab temperature and humidity fluctuations can affect GC stability. Verify that the analytical lab's HVAC log shows stability within ±2°C.

Q4: The cross-flow filtration membrane for cell separation is clogging prematurely, reducing throughput. What optimization is required?

- A: This is a maintenance protocol failure.

- Immediate Action: Perform a forward/back pulse flush with 0.1M NaOH to recover flux.

- Root Cause Analysis: Analyze feed slurry particle size distribution. A shift towards smaller particles indicates upstream pretreatment variability.

- Protocol Update: Implement a daily integrity test measuring normalized water permeability (NWP) to track membrane performance decay predictively.

Quantitative Data Summary

Table 1: Common Facility Disruptions & Impact on Yield

| Disruption Type | Affected Unit Operation | Typical Yield Reduction | Mean Time to Recovery (Hours) |
|---|---|---|---|
| Bioreactor Temperature Excursion | Fermentation | 15-40% | 6-24 |
| Sterility Failure (Contamination) | Seed Train/Fermentation | 60-100% | 48+ (batch loss) |
| Membrane Fouling Acceleration | Downstream Separation | 20-35% | 8-12 (for cleaning) |
| HPLC/GC-MS Calibration Drift | Quality Control | N/A (data integrity loss) | 2-4 |

Table 2: Proactive Maintenance Schedule for Key Equipment

| Equipment | Maintenance Task | Frequency | Key Performance Indicator (KPI) to Monitor |
|---|---|---|---|
| Pilot Bioreactor | Calibrate DO, pH, temp probes | Weekly | Standard deviation of probe vs. offline reference |
| Distillation Column | Inspect/clean packing material | Quarterly | Pressure drop per theoretical plate |
| Centrifuge | Rotor inspection and balance | Every 200 hours | Vibration amplitude (mm/s) |
| Analytical GC-MS | Replace septum, liner, tune MS | Weekly/Per 100 runs | Signal-to-Noise ratio of standard mix |

Experimental Protocols

Protocol P-01: Rapid Assessment of Feedstock Contaminant Inhibition on Fermentation.

- Purpose: To quantify the impact of facility-related feedstock degradation or contamination on yeast viability.

- Methodology:

- Prepare a standard synthetic media and a test media with the suspect feedstock hydrolysate.

- Inoculate with S. cerevisiae strain at OD600 = 0.1 in triplicate 96-well plates.

- Incubate at 30°C with continuous shaking in a plate reader.

- Monitor OD600 (growth) and ethanol concentration (via enzymatic assay kit) every 2 hours for 48 hours.

- Calculate specific growth rate (μ) and ethanol productivity (g/L/h) for both media. A >25% reduction in μ indicates significant inhibition.
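The final calculation step of P-01 can be sketched as follows. This is a minimal example that assumes the caller passes only exponential-phase readings and that μ is estimated as the least-squares slope of ln(OD600) against time; the function names and the 0.25 default (taken from the >25% criterion above) are illustrative.

```python
import math


def specific_growth_rate(times_h, od600):
    """Least-squares slope of ln(OD600) vs. time, in 1/h.

    Assumes all points lie in the exponential growth phase.
    """
    ys = [math.log(v) for v in od600]
    n = len(times_h)
    t_mean = sum(times_h) / n
    y_mean = sum(ys) / n
    sxx = sum((t - t_mean) ** 2 for t in times_h)
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in zip(times_h, ys))
    return sxy / sxx


def ethanol_productivity(ethanol_g_per_l, elapsed_h):
    """Volumetric ethanol productivity in g/L/h."""
    return ethanol_g_per_l / elapsed_h


def is_inhibited(mu_control, mu_test, threshold=0.25):
    """True when mu drops by more than the threshold fraction."""
    return (mu_control - mu_test) / mu_control > threshold
```

In practice the three replicate plates would each yield a μ, and the comparison would be made on the replicate means for the synthetic and test media.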

Protocol P-02: Stress Testing Backup Power Cutover for Critical Instrumentation.

- Purpose: To validate facility fortification against power disruption.

- Methodology:

- Identify critical units (e.g., -80°C freezer, bioreactor control system, anaerobic chamber).

- During a scheduled downtime, manually simulate a mains power failure.

- Record the time delay (seconds) for UPS and generator backup to engage.

- Monitor and log the internal temperature of the freezer for 30 minutes post-cutover.

- Verify data integrity on connected PCs and PLCs. Any temperature rise >10°C or data/log loss constitutes a test failure.
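The pass/fail rule in P-02 is simple enough to encode alongside the logged data, which keeps the verdict consistent between test runs. The sketch below is one possible encoding; the `CutoverResult` record and its field names are hypothetical, and the 10°C limit comes from the criterion stated above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CutoverResult:
    unit: str                 # e.g. the -80°C freezer
    engage_delay_s: float     # time for UPS/generator to engage
    temps_c: List[float]      # internal temperature log, 30 min post-cutover
    data_loss: bool           # any data or log loss on PCs/PLCs


def cutover_passed(result, max_rise_c=10.0):
    """Fail on a temperature rise above max_rise_c or on any data loss."""
    rise = max(result.temps_c) - result.temps_c[0]
    return rise <= max_rise_c and not result.data_loss
```

The engage delay is recorded but not judged here, because the protocol does not state an acceptance limit for it.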

Visualizations

Biofuel Process Flow with Key Disruption Risks

Diagnostic Workflow for Fermentation Yield Drop

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Biofuel Supply Chain Research |
|---|---|
| Cycloheximide | Selective antibiotic used in culture media to inhibit eukaryotic (e.g., yeast) growth, allowing detection of bacterial contaminants in fermentation processes. |
| N-Alkane Standard Mix (C8-C20) | Certified reference material for calibrating Gas Chromatograph retention times, essential for accurate identification and quantification of biofuel components. |
| Enzymatic Ethanol Assay Kit (NAD/ADH based) | Allows rapid, specific quantification of ethanol concentration in complex fermentation broths without requiring distillation, enabling high-throughput screening. |
| Internal Standard (e.g., 1-Butanol for GC) | Added in a constant amount to all analytical samples; its peak area variations correct for instrument fluctuations and sample preparation errors. |
| Lignocellulosic Inhibitor Standards (Furfural, HMF, Acetic Acid) | HPLC standards used to quantify concentrations of fermentation inhibitors generated during biomass pretreatment, crucial for feedstock quality control. |
| Particle Size Standard (Latex Beads) | Used to calibrate particle size analyzers, monitoring slurry consistency and predicting downstream filtration performance. |

Technical Support Center

This support center provides troubleshooting guidance for computational and experimental logistics models within biofuel supply chain research. The following FAQs address common issues encountered when simulating dynamic routing and multi-modal transport under facility disruption risks.

FAQs & Troubleshooting Guides

Q1: My dynamic routing algorithm fails to converge or returns infeasible routes when simulating a major biorefinery disruption. What are the primary checks? A1: This is typically a data input or constraint definition issue. Follow this protocol:

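- Check Node Connectivity: Verify that every supply node still has at least one path to an operating demand node after the simulated disruption. A supply node cut off from all operating facilities makes the routing problem infeasible unless the model explicitly allows unmet demand.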

- Validate Capacity Constraints: Ensure alternative transport modes (e.g., rail, barge) have sufficient capacity defined in the model to handle rerouted volumes. An infeasible solution often indicates insufficient capacity.

- Review Cost Parameters: Confirm that penalty costs for unmet demand or delay are large enough relative to transport costs that the solver prefers rerouting over leaving demand unserved.
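The connectivity check can be run before the solver is called. The sketch below uses a breadth-first search over a directed adjacency list and reports supply nodes that can no longer reach any operating biorefinery; the node names and the `stranded_suppliers` helper are illustrative assumptions, not part of any particular routing package.

```python
from collections import deque


def reachable(adjacency, start, removed):
    """Nodes reachable from start when the removed nodes are unusable."""
    if start in removed:
        return set()
    seen = {start}
    frontier = deque([start])
    while frontier:
        node = frontier.popleft()
        for nxt in adjacency.get(node, []):
            if nxt not in seen and nxt not in removed:
                seen.add(nxt)
                frontier.append(nxt)
    return seen


def stranded_suppliers(adjacency, suppliers, refineries, disrupted):
    """Suppliers with no remaining path to an operating refinery."""
    operating = set(refineries) - set(disrupted)
    return [s for s in suppliers
            if not (reachable(adjacency, s, set(disrupted)) & operating)]


# Hypothetical network: two farms, two hubs, two biorefineries.
network = {
    "farm_a": ["hub_1"],
    "farm_b": ["hub_2"],
    "hub_1": ["ref_x", "ref_y"],
    "hub_2": ["ref_x"],
}
stranded = stranded_suppliers(
    network, ["farm_a", "farm_b"], ["ref_x", "ref_y"], {"ref_x"}
)
```

Here `farm_b` is stranded once `ref_x` is disrupted, which points to a missing link or missing slack node rather than a solver problem.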

Q2: During multi-modal simulation, the model disproportionately selects one transport mode (e.g., truck) even when rail is cost-advantageous for long distances. How do I correct this? A2: This suggests biased or incomplete cost parameterization. Implement the following experimental protocol:

- Audit the Total Logistic Cost Function: Expand your equation to include all variables from the table below.

- Run a Sensitivity Analysis: Systematically vary each cost parameter in the "Multi-Modal Cost Components" table to identify which one is driving the bias.

- Incorporate Modal Transfer Costs: Explicitly add fixed and time-based costs for transferring biomass between truck, rail, and barge at hub terminals.

Multi-Modal Cost Components for Sensitivity Analysis

| Cost Component | Typical Unit | Function in Model | Common Source of Error |
|---|---|---|---|
| Variable Transport Cost | $/ton-mile | Scales with distance & volume | Using outdated fuel surcharges |
| Fixed Loading/Unloading Cost | $/terminal visit | Covers handling at facilities | Omission for specific modes |
| Modal Transfer Cost | $/transfer | Cost of switching transport mode | Complete omission, favoring door-to-door modes |
| Inventory Holding Cost (In-Transit) | $/ton-day | Penalizes slower modes | Underestimation of biomass degradation rate |
| Emission Cost / Carbon Tax | $/ton CO₂-eq | Favors greener modes | Exclusion from core model logic |
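As a rough illustration of how the five components combine, the sketch below sums them for a single shipment on one mode. It assumes the terms are additive and that in-transit time is simply distance divided by average speed; every parameter name and any number passed in is a placeholder, not calibrated data.

```python
from dataclasses import dataclass


@dataclass
class ModeCost:
    variable_per_ton_mile: float     # $/ton-mile
    fixed_per_terminal_visit: float  # $/terminal visit
    speed_miles_per_day: float       # used to derive days in transit
    co2_ton_per_ton_mile: float      # ton CO2-eq per ton-mile


def total_logistic_cost(mode, tons, miles, terminal_visits, transfers,
                        transfer_cost, holding_per_ton_day, carbon_price):
    """Sum of the five cost components for one shipment."""
    transport = mode.variable_per_ton_mile * tons * miles
    handling = mode.fixed_per_terminal_visit * terminal_visits
    transfer = transfer_cost * transfers
    days_in_transit = miles / mode.speed_miles_per_day
    holding = holding_per_ton_day * tons * days_in_transit
    emissions = carbon_price * mode.co2_ton_per_ton_mile * tons * miles
    return transport + handling + transfer + holding + emissions
```

Calling the function once with `transfers=0` and once with the real number of transfers shows directly how much a missing modal transfer term shifts the comparison between a door-to-door mode and a multi-modal route.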

Q3: How do I experimentally validate a simulated dynamic routing strategy for biomass feedstock delivery? A3: Validation requires a hybrid digital-physical approach. Use this methodology:

- Historical Data Benchmarking: Run your optimized model on past disruption events (e.g., historic facility shutdowns, weather events). Compare the model's suggested routes and costs against what was actually executed.

- Discrete-Event Simulation (DES) Prototyping: Before field deployment, build a DES model (using tools like AnyLogic or SimPy) to simulate the stochastic arrival of trucks, queue times at loading stations, and variable transport times. This tests the robustness of the dynamic routes.

- Pilot Scale Physical Test: Implement the model's routing instructions for a subset of deliveries (e.g., 5-10 trucks) using GPS-tracked shipments. Monitor adherence to schedule, fuel use, and document any unforeseen obstacles.
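For the DES prototyping step, a very small event-driven model can be written with the standard library before moving to SimPy or AnyLogic. The sketch below simulates one FIFO loading station with exponentially distributed truck inter-arrival and loading times and returns each truck's queue wait; the single-server layout, the distributions, and all parameter names are simplifying assumptions.

```python
import heapq
import random
from collections import deque


def simulate_station(n_trucks, mean_interarrival_h, mean_service_h, seed=0):
    """Return the queue wait (hours) of each truck at one loading bay."""
    rng = random.Random(seed)
    events = []  # heap of (time, sequence number, kind)
    t = 0.0
    for truck in range(n_trucks):
        t += rng.expovariate(1.0 / mean_interarrival_h)
        heapq.heappush(events, (t, truck, "arrive"))
    seq = n_trucks          # unique tie-breaker for departure events
    queue = deque()         # arrival times of waiting trucks
    busy = False
    waits = []
    while events:
        now, _, kind = heapq.heappop(events)
        if kind == "arrive":
            queue.append(now)
        else:               # a departure frees the loading bay
            busy = False
        if not busy and queue:
            arrived = queue.popleft()
            waits.append(now - arrived)
            busy = True
            done = now + rng.expovariate(1.0 / mean_service_h)
            heapq.heappush(events, (done, seq, "depart"))
            seq += 1
    return waits
```

Running the function across a range of seeds and arrival rates gives a distribution of waits that the dynamic routes must tolerate; extending it with variable transport times or multiple bays is where a dedicated DES platform becomes worthwhile.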

Key Experimental Workflow for Dynamic Routing

The following diagram outlines the core computational-experimental loop for developing and validating adaptive logistics strategies.

Diagram Title: Adaptive Logistics Model Development Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Biofuel Logistics Research | Example / Specification |
|---|---|---|
| Geographic Information System (GIS) Software | Creates the spatial network for routing, incorporating real-world roads, rails, and waterways. Essential for accurate distance and time estimation. | ArcGIS, QGIS, PostGIS. Must include network analysis toolkits. |
| Optimization Solver Library | Provides the computational engine to solve the dynamic routing problem, typically formulated as a Mixed-Integer Linear Program (MILP). | Gurobi, CPLEX, OR-Tools. Ensure academic licenses are configured. |
| Discrete-Event Simulation (DES) Platform | Models stochastic processes (arrivals, breakdowns, transfers) to test dynamic routing logic under uncertainty before real-world implementation. | AnyLogic, Simio, SimPy (Python library). |
| Biomass Moisture & Degradation Model | A sub-model that predicts quality decay over time in transit. Critical for calculating holding costs and validating viability of longer multi-modal routes. | Empirical model based on feedstock type (e.g., switchgrass, corn stover), temperature, and humidity. |
| Real-Time Vehicle Tracking Data Feed | Provides live data for dynamic model input and validates simulation outputs against actual performance metrics like speed and idle time. | GPS API feeds (commercial or prototype hardware on test vehicles). |

Inventory Buffering and Strategic Stockpiling of Critical Feedstocks and Intermediates

Technical Support Center: Troubleshooting & FAQs for Feedstock & Intermediate Stability & Storage Experiments

This support center addresses common experimental challenges in the characterization and storage of critical biofuel supply chain materials, framed within the research thesis: Optimizing biofuel supply chain under facility disruption risks.

Frequently Asked Questions (FAQs)

Q1: Our stockpiled lignocellulosic hydrolysate shows a significant drop in fermentable sugar yield after 4 weeks of storage at 4°C. What are the likely causes and mitigation strategies? A: Primary causes are microbial contamination and/or chemical degradation (e.g., re-polymerization). Mitigation includes:

- Sterile Filtration: Use 0.2 µm filters before storage.

- Low-Temperature Storage: Store at -20°C for >1-month stability.

- Acidification: Adjust pH to ~2-3 to inhibit microbial growth and slow degradation.

- Regular Titers: Perform weekly sugar concentration assays (e.g., HPLC) to establish degradation kinetics.

Q2: We observe phase separation and precipitation in our stored lipid intermediates (e.g., FAME from algal oil). How can we stabilize the mixture? A: Phase separation indicates water ingress or thermal instability.

- Dehydration: Use molecular sieves (3Å or 4Å) or nitrogen sparging to remove residual water.

- Additives: Incorporate approved antioxidants (e.g., BHT at 50-100 ppm) to prevent oxidative rancidity.

- Storage Conditions: Store under an inert atmosphere (N₂ or Ar) in airtight, opaque containers at 4°C to minimize oxidation and photodegradation.

Q3: Our stability-monitoring experiment for a key enzyme (e.g., cellulase cocktail) shows inconsistent activity loss. How should we standardize the protocol? A: Inconsistency often stems from variable temperature cycles or assay conditions.

- Controlled Aliquots: Divide stock into single-use aliquots to avoid freeze-thaw cycles.

- Stabilizing Buffer: Store in a buffer with 50% glycerol at -20°C to maintain protein conformation.

- Standardized Assay: Implement a strict, calibrated activity assay (e.g., DNSA for reducing sugars) using a fixed protein concentration and reaction temperature each time.

Q4: How do we accurately model the shelf-life of a buffered stockpile under variable facility conditions? A: Implement an Accelerated Stability Testing (AST) protocol.

- Stress Conditions: Expose samples to elevated temperatures (e.g., 25°C, 37°C, 50°C) and humidity.

- Regular Sampling: Measure key quality metrics (concentration, activity, purity) at set intervals.

- Arrhenius Modeling: Use the degradation data at high temperatures to predict degradation kinetics at standard storage temperatures.
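The Arrhenius step can be sketched as a linear fit of ln(k) against 1/T followed by extrapolation to the storage temperature. The example assumes first-order degradation, so that each stress temperature yields a rate constant k and shelf-life is the time to reach a chosen remaining fraction; the function names and the 90% default are illustrative choices.

```python
import math

R = 8.314  # gas constant, J/(mol*K)


def fit_arrhenius(temps_c, rate_constants):
    """Fit ln(k) = ln(A) - Ea/(R*T); return (Ea in J/mol, A)."""
    xs = [1.0 / (t + 273.15) for t in temps_c]
    ys = [math.log(k) for k in rate_constants]
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return -slope * R, math.exp(intercept)


def predict_rate(ea, a, temp_c):
    """Rate constant at temp_c from fitted Arrhenius parameters."""
    return a * math.exp(-ea / (R * (temp_c + 273.15)))


def shelf_life(k, remaining_fraction=0.9):
    """Time for a first-order process to decay to remaining_fraction."""
    return math.log(1.0 / remaining_fraction) / k
```

The units of the returned shelf-life follow the units of k (for example, days if k is per day). Extrapolating far below the lowest stress temperature assumes the degradation mechanism does not change, which should be checked against real-time data at 4°C.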

Experimental Protocols

Protocol 1: Accelerated Stability Testing for Feedstock Intermediates

- Objective: To predict the shelf-life of a saccharified biomass feedstock under non-ideal storage conditions.

- Methodology:

- Sample Preparation: Prepare 50 mL aliquots of the hydrolysate. Adjust subsets to different pH levels (3, 5, 7).

- Stress Incubation: For each condition, incubate replicates at 4°C (control), 25°C, and 37°C.

- Sampling Schedule: Draw 1 mL samples from each condition at T=0, 24h, 72h, 1 week, 2 weeks, and 4 weeks.