Optimizing Waste Cooking Oil Collection Networks: Advanced GIS and Spatial Analysis Strategies for Biofuel and Pharmaceutical Research

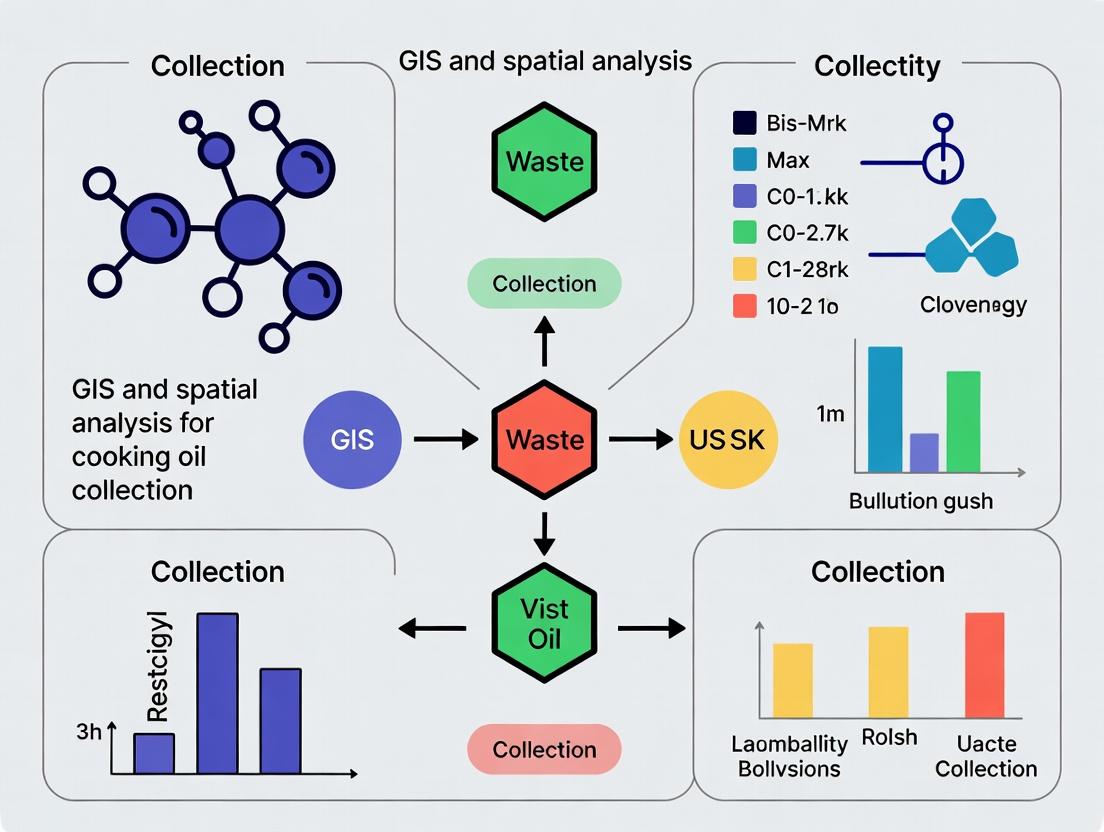


Abstract

This article provides a comprehensive guide for researchers and pharmaceutical development professionals on leveraging Geographic Information Systems (GIS) and spatial analysis to optimize waste cooking oil (WCO) collection systems. The scope spans from foundational concepts of WCO as a critical feedstock for biofuels and pharmaceutical-grade lipid derivatives, through advanced methodological applications for network design, to troubleshooting common data and model challenges. It concludes with validation frameworks and comparative analyses of different spatial optimization approaches, offering actionable insights for improving collection efficiency and securing sustainable, high-quality lipid sources for biomedical applications.

Understanding the Landscape: The Critical Role of Spatial Data in Waste Cooking Oil Logistics

Application Notes: Integrating GIS for WCO Valorization

The strategic valorization of Waste Cooking Oil (WCO) hinges on efficient collection logistics, which can be optimized through Geographic Information Systems (GIS) and spatial analysis. The following notes contextualize laboratory protocols within this overarching research framework.

Note 1: Spatial Feedstock Assessment. GIS layers (e.g., restaurant density, socio-economic data, existing collection points) are used to model WCO availability and establish priority collection zones. High-yield zones directly feed into the planning of lab-scale processing batches that reflect real-world feedstock variability.

Note 2: Quality Correlation Mapping. Spatial data (collection route duration, proximity to industrial areas) is correlated with laboratory-measured WCO quality parameters (free fatty acid (FFA) content, peroxide value). This GIS-lab data linkage helps predict pretreatment requirements for different collection grids.

Note 3: Supply Chain Optimization for Pharma. For lipid-based pharmaceutical applications, traceability and quality consistency are paramount. GIS routing algorithms minimize collection time, preserving feedstock quality, while protocol standardization ensures batch-to-batch reproducibility for sensitive biological assays.

Protocols

Protocol 1: GIS-Assisted WCO Collection and Preliminary Quality Screening

Objective: To collect WCO from a GIS-identified high-density zone and perform rapid quality assessment to determine appropriate downstream valorization pathway (biodiesel vs. pharmaceutical lipid purification).

Materials:

- GIS map of targeted collection zone (grid ID: A-7).

- Pre-cleaned, airtight HDPE containers.

- Portable FFA test strips (0-10% range).

- Digital thermometer.

- Sample labels with GPS coordinate fields.

Methodology:

- Using the optimized route generated by GIS network analysis, proceed to collection points in Zone A-7.

- At each point, record GPS coordinates, collection time, and visual descriptors (color, viscosity, particulates) on the sample label.

- Collect approximately 2L of WCO in a pre-weighed container.

- Immediately upon return to the lab, homogenize the sample by gentle shaking.

- Dip an FFA test strip into the oil for 2 seconds. Read the value after 1 minute.

- Decision Matrix: Samples with FFA < 2% are routed to Protocol 3 for pharmaceutical lipid extraction. Samples with FFA ≥ 2% are routed to Protocol 2 for biodiesel production.
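The decision matrix can be expressed as a small routing function. The following is a minimal sketch, not part of the original protocol: the `Sample` fields, the sample IDs, and the coordinates are hypothetical, and treating a reading of exactly 2% as high-FFA is an assumption.

```python
from dataclasses import dataclass

FFA_THRESHOLD_PCT = 2.0  # cutoff taken from the decision matrix


@dataclass
class Sample:
    sample_id: str   # hypothetical label ID
    lat: float       # GPS latitude recorded at the collection point
    lon: float       # GPS longitude recorded at the collection point
    ffa_pct: float   # FFA test-strip reading, in percent


def route_sample(sample: Sample) -> str:
    """Return the downstream protocol for a sample based on its FFA reading."""
    if not 0.0 <= sample.ffa_pct <= 10.0:
        raise ValueError("FFA reading outside the 0-10% strip range")
    # Assumption: a reading of exactly 2% is treated as high-FFA.
    if sample.ffa_pct < FFA_THRESHOLD_PCT:
        return "Protocol 3 (pharmaceutical lipid purification)"
    return "Protocol 2 (biodiesel production)"


# Hypothetical samples from Zone A-7
samples = [
    Sample("A7-001", 41.88, -87.63, 0.8),
    Sample("A7-002", 41.89, -87.62, 4.5),
]
routes = {s.sample_id: route_sample(s) for s in samples}
```

Keeping the threshold in one named constant makes it easy to revisit the cutoff if later quality data suggest a different split.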

Protocol 2: Acid-Catalyzed Biodiesel Production from High-FFA WCO

Objective: To convert high-FFA WCO (>2%) into fatty acid methyl esters (FAME, biodiesel) via a two-step process: acid-catalyzed esterification of the free fatty acids followed by base-catalyzed transesterification of the triglycerides.

Materials:

- WCO sample (FFA >2%).

- Methanol (anhydrous, 99.8%).

- Concentrated sulfuric acid (H₂SO₄, 95-98%).

- Sodium hydroxide (NaOH).

- Separatory funnel, heated magnetic stirrer, reflux condenser.

Methodology:

- Pretreatment & Esterification: In a 1 L reactor, mix 500 g of WCO with 100 mL of methanol and 1% (v/v) H₂SO₄. Reflux at 65°C for 1 hour with stirring. Let the mixture settle in a separatory funnel and discard the methanol-water-acid layer (esterification of FFAs yields water rather than glycerol), retaining the pre-treated oil phase.

- Transesterification: Heat the pre-treated oil to 65°C. Prepare a sodium methoxide solution by dissolving 1% (w/w of oil) NaOH in 100 mL of methanol. Add this solution to the reactor and reflux for 1 hour.

- Separation & Washing: Transfer the mixture to a separatory funnel and let it settle overnight. Drain the lower glycerol layer. Wash the upper FAME layer with warm deionized water (10% v/v) 2-3 times until the wash water is clear.

- Drying: Dry the washed FAME over anhydrous sodium sulfate. Filter to obtain pure biodiesel. Analyze by GC-MS for FAME profile and yield calculation.

Protocol 3: Purification of Pharmaceutical-Grade Lipids from Low-FFA WCO

Objective: To isolate and purify glyceryl monostearate (GMS), a common lipid excipient, from pre-treated low-FFA WCO via enzymatic glycerolysis.

Materials:

- Pre-treated WCO (FFA <2%, filtered and dried).

- Immobilized Thermomyces lanuginosus lipase (e.g., Lipozyme TL IM).

- Food-grade glycerol (99.5%).

- Molecular sieves (3Å).

- HPLC system with ELSD detector, silica gel column.

Methodology:

- Enzymatic Glycerolysis: In a temperature-controlled bioreactor, mix 200g of pre-treated WCO with glycerol at a 2:1 molar ratio. Add 5% (w/w) immobilized lipase and 3% (w/w) molecular sieves to absorb water.

- Reaction: Incubate the mixture at 60°C with agitation (200 rpm) for 12 hours under nitrogen atmosphere.

- Enzyme Removal: Filter the reaction mixture through a Büchner funnel to remove the immobilized enzyme and molecular sieves.

- Purification: Separate the reaction products via flash chromatography on a silica gel column, using a gradient of hexane and ethyl acetate. Collect the fraction corresponding to the GMS standard (confirmed by TLC).

- Analysis: Characterize the purified GMS by HPLC-ELSD for purity (>98%) and DSC for melting point confirmation (55-60°C).

Data Presentation

Table 1: Typical WCO Composition and Derived Product Yields

| Parameter | Range in Collected WCO | Biodiesel (FAME) Yield | Pharmaceutical GMS Yield |

|---|---|---|---|

| Free Fatty Acid (FFA) | 0.5 - 7.5% | 85-92%* | Requires <2% FFA input |

| Water Content | 0.1 - 2.5% | Negatively impacts yield | Must be <0.5% for synthesis |

| Peroxide Value (meq/kg) | 2 - 15 | Can be reduced during processing | Must be <5 for pharma-grade |

| Typical Product Output | --- | 96-98% FAME purity | >98% GMS purity |

*Yield decreases proportionally with increasing initial FFA content.

Table 2: Key Research Reagent Solutions for WCO Valorization

| Reagent / Material | Function in Protocol | Critical Specification |

|---|---|---|

| Immobilized Lipase (Lipozyme TL IM) | Catalyzes selective glycerolysis for lipid excipient synthesis. | Activity >250 IUN/g; Thermostable at 60°C. |

| Sodium Methoxide Solution | Alkaline catalyst for transesterification of triglycerides to FAME. | Must be prepared anhydrous; 25% solution in methanol. |

| Anhydrous Methanol | Reactant for both esterification and transesterification. | Purity ≥99.8%; water content <0.005%. |

| 3Å Molecular Sieves | Water scavenger in enzymatic reactions to shift equilibrium towards product formation. | Activated at 250°C prior to use. |

| Silica Gel (60-120 mesh) | Stationary phase for chromatographic purification of lipid molecules. | High-purity grade for flash chromatography. |

Visualizations

Title: WCO Valorization Decision Workflow

Title: Enzymatic Synthesis of Lipid Excipient from WCO

Abstract: This document provides application notes and experimental protocols for a thesis investigating the application of Geographic Information Systems (GIS) and spatial analysis to optimize Waste Cooking Oil (WCO) collection. The research addresses three primary challenges: the geographic dispersion of sources, the identification and characterization of high-yield sources, and inherent logistic inefficiencies in collection routing. The protocols herein are designed for researchers and scientific professionals aiming to develop scalable, data-driven solutions for circular economy initiatives.

Application Notes: Spatial Data Integration & Analysis

Objective: To create a unified spatial database integrating disparate data sources for WCO potential estimation and collection planning.

Key Data Layers & Sources:

- Point Data: Restaurant locations (commercial geocoding APIs, yellow pages), registered WCO generators (municipal permits), historical collection points.

- Polygon Data: Municipal boundaries, commercial zoning districts, population density census tracts, socioeconomic indices.

- Network Data: Road networks (OpenStreetMap), traffic patterns, speed limits.

- Raster Data: Land use/land cover (LULC) classification from satellite imagery (Sentinel-2, Landsat 8).

Data Integration Workflow: Raw data from various formats (CSV, Shapefile, GeoJSON, raster tiles) are cleaned, projected to a common coordinate system (e.g., UTM), and ingested into a spatial database (e.g., PostGIS). Attribute tables are normalized, and a unique identifier links all features related to a single potential generator.

Spatial Analysis Operations:

- Kernel Density Estimation (KDE): Applied to point data of known generators to identify "hot spots" of WCO production potential.

- Network Analysis: Used to calculate service areas (isochrones) from a depot location based on travel time, not just distance.

- Suitability Modeling: A weighted overlay analysis combining factors like generator density, road accessibility, and distance to processing facilities to score collection zone priority.
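To illustrate the KDE step, the sketch below evaluates a Gaussian kernel density surface on a regular grid using only the standard library. It is an illustrative example rather than the tool used in the study: the point coordinates, grid spacing, and 150 m bandwidth are all hypothetical, and coordinates are assumed to be in a projected CRS (meters).

```python
import math


def gaussian_kde_surface(points, x_coords, y_coords, bandwidth):
    """Evaluate a 2D Gaussian KDE on a grid.

    points: list of (x, y) generator locations in projected units.
    Returns a list of rows indexed as surface[y_index][x_index],
    with density expressed per square map unit.
    """
    norm = 1.0 / (2.0 * math.pi * bandwidth ** 2 * len(points))
    surface = []
    for gy in y_coords:
        row = []
        for gx in x_coords:
            total = 0.0
            for px, py in points:
                d2 = (gx - px) ** 2 + (gy - py) ** 2
                total += math.exp(-d2 / (2.0 * bandwidth ** 2))
            row.append(norm * total)
        surface.append(row)
    return surface


# Hypothetical generators: three clustered restaurants and one isolated one
generators = [(100, 100), (120, 110), (110, 90), (900, 900)]
xs = list(range(0, 1001, 100))
ys = list(range(0, 1001, 100))
surface = gaussian_kde_surface(generators, xs, ys, bandwidth=150.0)

# Locate the grid cell with the highest estimated density
peak_value, peak_x, peak_y = max(
    (surface[j][i], xs[i], ys[j])
    for j in range(len(ys))
    for i in range(len(xs))
)
```

The bandwidth controls how far each generator's influence spreads; smaller values produce sharper, more fragmented hot spots, so it is worth testing several values against local knowledge of the study area.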

Experimental Protocols

Protocol 2.1: Source Identification and Yield Prediction using Spatial Regression

Aim: To model and predict WCO generation volumes at un-sampled locations based on spatially correlated predictor variables.

Materials:

- GIS Software (QGIS 3.32, ArcGIS Pro 3.1)

- Statistical Software (R 4.3 with spdep, sf, ggplot2 packages; GeoDa 1.20)

- Training Dataset: Geotagged records of 200+ restaurants with 12 months of empirically measured WCO yield (liters/month).

Methodology:

- Data Preparation: For each restaurant in the training set, extract predictor variables from the integrated spatial database:

- Restaurant type (categorical: fast-food, dine-in, institutional)

- Seating capacity (ordinal)

- Distance to urban center (meters)

- Average household income within 1km buffer (from census data)

- Local competitor density (count within 500m).

- Spatial Autocorrelation Test: Perform Global Moran's I test on the dependent variable (WCO yield) to confirm spatial dependence (non-random distribution).

- Model Specification: Test multiple spatial regression models (Spatial Lag Model - SLM, Spatial Error Model - SEM) against an Ordinary Least Squares (OLS) baseline. Use Lagrange Multiplier diagnostics to select the appropriate model.

- Validation: Reserve 20% of data as a test set. Generate predictions for test locations and calculate RMSE (Root Mean Square Error) and MAE (Mean Absolute Error). Create a validation scatterplot of predicted vs. observed yield.
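The validation step can be sketched as follows. This is a minimal illustration of the 80/20 hold-out split and the two error metrics; the records and the placeholder predictions (a constant +10 L/month bias) are hypothetical and stand in for output from whichever regression model is selected.

```python
import math
import random


def train_test_split(records, test_fraction=0.2, seed=42):
    """Shuffle records reproducibly and reserve a fraction as the test set."""
    shuffled = records[:]
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def rmse(observed, predicted):
    """Root Mean Square Error."""
    squared = [(o - p) ** 2 for o, p in zip(observed, predicted)]
    return math.sqrt(sum(squared) / len(squared))


def mae(observed, predicted):
    """Mean Absolute Error."""
    return sum(abs(o - p) for o, p in zip(observed, predicted)) / len(observed)


# Hypothetical training data: (restaurant_id, observed yield in L/month)
records = [(i, 100.0 + 5.0 * i) for i in range(200)]
train, test = train_test_split(records)

observed = [y for _, y in test]
# Placeholder predictions with a constant +10 L/month bias
predicted = [y + 10.0 for y in observed]

test_rmse = rmse(observed, predicted)
test_mae = mae(observed, predicted)
```

RMSE penalizes large misses more heavily than MAE, so a wide gap between the two suggests a few generators are being predicted badly.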

Table 1: Spatial Regression Model Performance Comparison

| Model | R-squared | AIC | Log-Likelihood | RMSE (L/mo) | Spatial Autocorrelation (p-value of Residuals Moran's I) |

|---|---|---|---|---|---|

| OLS (Baseline) | 0.62 | 2450.2 | -1220.1 | 45.7 | 0.032 |

| Spatial Lag Model (SLM) | 0.78 | 2381.5 | -1185.8 | 32.1 | 0.215 |

| Spatial Error Model (SEM) | 0.81 | 2372.8 | -1181.4 | 29.8 | 0.401 |

Protocol 2.2: Dynamic Routing Optimization under Capacity Constraints

Aim: To develop and test a heuristic algorithm for generating near-optimal daily collection routes that minimize travel cost while respecting vehicle capacity and time windows.

Materials:

- Routing API or Library (OR-Tools 9.7, VROOM, OpenRouteService API)

- Real-time traffic data feed (e.g., Google Maps API, TomTom).

- Input Data: List of 50-150 collection points for a given day, each with: geographic coordinates, predicted WCO volume (from Protocol 2.1), preferred time window, and actual volume from prior collection (if any).

Methodology:

- Problem Formulation: Define as a Capacitated Vehicle Routing Problem with Time Windows (CVRPTW). Objective function: Minimize total travel distance (meters) and number of vehicles used.

- Parameterization: Set vehicle capacity to 1000 liters. Define a depot location. Set a soft time window of ±30 minutes for each point, with a penalty for lateness.

- Algorithm Execution: Implement a heuristic solution (e.g., Clarke & Wright Savings, Guided Local Search) using OR-Tools. Run optimization.

- Scenario Analysis: Compare the optimized route against the current, ad-hoc route used by a collection contractor. Metrics for comparison: total route distance, estimated fuel consumption, number of vehicles required, and total collection time.

- Sensitivity Test: Re-run the optimization with a 15% random increase in volume at 20% of points to simulate prediction error and test route robustness.
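For intuition about the savings heuristic named above, the following standard-library sketch implements a basic parallel Clarke & Wright merge under a capacity limit. It is a simplified illustration and not the OR-Tools implementation: it uses straight-line distances instead of road-network travel costs, ignores time windows, and the depot, stop coordinates, and volumes are hypothetical.

```python
import math


def _dist(a, b):
    return math.hypot(a[0] - b[0], a[1] - b[1])


def clarke_wright(depot, stops, demands, capacity):
    """Return capacity-feasible routes as lists of stop indices."""
    n = len(stops)
    routes = {i: [i] for i in range(n)}        # route id -> ordered stops
    route_of = {i: i for i in range(n)}        # stop -> route id
    load = {i: demands[i] for i in range(n)}   # route id -> liters

    # Saving from serving i and j on one route instead of two round trips
    savings = []
    for i in range(n):
        for j in range(i + 1, n):
            s = _dist(depot, stops[i]) + _dist(depot, stops[j]) - _dist(stops[i], stops[j])
            savings.append((s, i, j))
    savings.sort(reverse=True)

    for _, i, j in savings:
        ri, rj = route_of[i], route_of[j]
        if ri == rj or load[ri] + load[rj] > capacity:
            continue
        a, b = routes[ri], routes[rj]
        # Only route endpoints can be joined
        if i not in (a[0], a[-1]) or j not in (b[0], b[-1]):
            continue
        if a[-1] != i:
            a = a[::-1]   # orient so that i is the last stop of a
        if b[0] != j:
            b = b[::-1]   # orient so that j is the first stop of b
        routes[ri] = a + b
        load[ri] += load[rj]
        for stop in b:
            route_of[stop] = ri
        del routes[rj], load[rj]
    return list(routes.values())


def route_length(depot, stops, route):
    path = [depot] + [stops[k] for k in route] + [depot]
    return sum(_dist(p, q) for p, q in zip(path, path[1:]))


# Hypothetical instance: four stops of 400 L each, 1000 L vehicle
depot = (0.0, 0.0)
stops = [(10.0, 0.0), (12.0, 0.0), (0.0, 10.0), (0.0, 12.0)]
demands = [400, 400, 400, 400]
routes = clarke_wright(depot, stops, demands, capacity=1000)
total_distance = sum(route_length(depot, stops, r) for r in routes)
```

The sensitivity test can reuse the same function by perturbing `demands` and checking whether the number of routes or the total distance changes.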

Table 2: Routing Optimization Scenario Results

| Metric | Current Ad-Hoc Route | GIS-Optimized Route | % Improvement |

|---|---|---|---|

| Total Distance (km) | 127.5 | 89.2 | 30.0% |

| Estimated Fuel Use (L) | 38.3 | 26.8 | 30.0% |

| Vehicles Used | 2 | 1 | 50.0% |

| Total Route Time (hr) | 6.5 | 5.1 | 21.5% |

| Capacity Utilization | 68% / 72% | 94% | N/A |

Visualizations

Title: WCO Collection Research Spatial Analysis Workflow

Title: Dynamic WCO Collection Route Optimization Protocol

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential GIS & Analytical Reagents for WCO Collection Research

| Item / Solution | Function & Relevance to WCO Research |

|---|---|

| PostGIS Spatial Database | Core repository for integrating, querying, and managing all geospatial data (point sources, networks, zones). Enables complex spatial SQL queries. |

| OR-Tools (Google) | Open-source suite for combinatorial optimization. Used to formulate and solve the Vehicle Routing Problem (VRP) for collection logistics. |

| Spatial Regression Packages (spdep, mgwr in R) | Statistical libraries for modeling spatial dependence and heterogeneity, crucial for accurate yield prediction from geographically dispersed points. |

| Geocoding API (e.g., Nominatim, Google Geocoding) | Converts restaurant addresses or place names into precise geographic coordinates (latitude/longitude), the fundamental location data for analysis. |

| Network Dataset (OpenStreetMap, HERE) | A topologically correct model of the road network, essential for calculating realistic travel times and distances, not straight-line distances. |

| Kernel Density Estimation (KDE) Tool | GIS function (available in ArcGIS, QGIS) that converts discrete point data into a continuous surface, visually identifying areas of high generator concentration. |

| Isochrone Generation Service | Calculates the area reachable from a point (e.g., depot) within a specific travel time. Critical for defining practical daily collection zones and depot placement. |

Application Notes

In the context of research on spatial analysis for waste cooking oil (WCO) collection, the precise application of core GIS concepts is fundamental to modeling collection logistics, optimizing routes, and assessing environmental impact. The integration of accurate spatial data enables predictive analytics for biofuel feedstock sourcing, a critical consideration for bio-refining and pharmaceutical adjuvant development.

Coordinate Systems and Georeferencing

A consistent coordinate system is the non-negotiable foundation for all subsequent analysis. For municipal WCO collection research, a projected coordinate system (e.g., UTM zone-specific) is essential for accurate distance and area calculations. Data from various sources (satellite imagery, municipal parcel maps, GPS-collected restaurant locations) must be transformed into a common coordinate reference system (CRS) to ensure alignment.

Table 1: Common Coordinate Reference Systems for Urban Waste Management Studies

| CRS Name | Type | EPSG Code | Best Use Case in WCO Research | Key Consideration |

|---|---|---|---|---|

| WGS 84 | Geographic | 4326 | Base system for GPS data collection. | Not suitable for direct area/distance measurement. |

| UTM Zone XXN/S | Projected | e.g., 32616 (UTM 16N) | City-scale analysis, route optimization, service area modeling. | Zone must be appropriate for the study location. |

| Web Mercator | Projected | 3857 | Web-based visualization platforms for public-facing maps. | Significant area distortion at high latitudes. |

| Local State Plane | Projected | Varies by region | High-precision engineering and infrastructure planning for collection networks. | Optimal accuracy for specific state/country regions. |
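Choosing the UTM zone for a study area follows a simple rule based on longitude and hemisphere. The sketch below derives the WGS 84 / UTM EPSG code from a coordinate pair; the function name is hypothetical, and the special zone exceptions around Norway and Svalbard are deliberately ignored.

```python
import math


def utm_epsg(lon: float, lat: float) -> int:
    """Return the EPSG code of the WGS 84 / UTM zone containing a point.

    Zones are 6 degrees wide, numbered 1-60 eastward from 180 degrees W.
    Codes are 326xx for the northern hemisphere and 327xx for the southern.
    """
    if not (-180.0 <= lon <= 180.0 and -80.0 <= lat <= 84.0):
        raise ValueError("coordinate outside the UTM coverage area")
    zone = min(int(math.floor((lon + 180.0) / 6.0)) + 1, 60)
    return (32600 if lat >= 0 else 32700) + zone


chicago_epsg = utm_epsg(-87.63, 41.88)      # falls in UTM zone 16N
sao_paulo_epsg = utm_epsg(-46.63, -23.55)   # falls in UTM zone 23S
```

A study area that straddles a zone boundary is usually handled by picking the zone containing most of the area, or by switching to a regional projected CRS.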

Thematic Data Layers for WCO Collection Modeling

Effective spatial analysis relies on the overlay and interaction of multiple thematic data layers. Each layer represents a specific geographic variable relevant to the collection ecosystem.

Table 2: Essential Data Layers for WCO Collection Spatial Analysis

| Data Layer | Data Type (Vector/Raster) | Source Examples | Analytical Purpose | Key Attributes |

|---|---|---|---|---|

| WCO Generator Locations | Point Vector | Field GPS, Business Licenses | Primary analysis targets. | Generator ID, Type (Restaurant/Industrial), Avg. WCO Volume, Collection Frequency. |

| Road Network | Line Vector | OpenStreetMap, Municipal GIS | Route calculation and network analysis. | Road Class, Speed Limit, One-way, Truck Restrictions. |

| Municipal Boundaries | Polygon Vector | National Census Bureau | Jurisdictional analysis and policy mapping. | Municipality Name, Waste Management Authority. |

| Population Density | Raster or Polygon Vector | Satellite Imagery, Census Data | Demand forecasting and site suitability. | Persons per sq. km. |

| Existing Collection Facilities | Point Vector | Environmental Agency Databases | Logistics hub location analysis. | Facility Type (Transfer Station, Biodiesel Plant), Capacity. |

| Land Use Zoning | Polygon Vector | City Planning Department | Site suitability for new collection bins or facilities. | Zoning Code (Commercial, Industrial, Residential). |

Spatial Database Management

A spatial database (e.g., PostgreSQL/PostGIS) is critical for handling the volume, complexity, and relationships of WCO data. It supports multi-user access, complex querying, and maintains topological rules.

Protocol 1: Establishing a Spatial Database for WCO Research

Objective: To create a centralized, query-optimized spatial database for storing, managing, and analyzing all WCO collection-related data.

Materials:

- Server with PostgreSQL and PostGIS extension installed.

- Source data in formats such as Shapefile (.shp), GeoJSON, or CSV with coordinates.

- Database administration tool (e.g., pgAdmin, DBeaver).

Procedure:

- Database and Extension Creation:

  - Create a new database named `wco_collection_research`.

  - Execute the SQL command `CREATE EXTENSION postgis;` to enable spatial functionality.

- Schema and Table Design:

  - Design a schema (e.g., `wco_data`) to logically group tables.

  - Create tables using `CREATE TABLE`, starting with a generator location table.

  - Create spatial indexes on the `geom` columns to dramatically speed up queries: `CREATE INDEX idx_generators_geom ON wco_data.generators USING GIST (geom);`

- Data Import:

  - Use the `shp2pgsql` command-line tool or the PostGIS Shapefile Import/Export Manager GUI to import vector data.

  - For CSV files with latitude/longitude, use SQL to build point geometries from the coordinate columns.

- Topology and Relationship Rules:

  - Implement foreign keys to link related tables (e.g., collection events to generators).

  - Use check constraints to validate data (e.g., `estimated_volume_l_week > 0`).
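The procedure can be sketched as a set of SQL statements assembled in Python, ready to be passed to a PostgreSQL client. This is an illustrative schema only: apart from `wco_data.generators`, `geom`, `estimated_volume_l_week`, and the index name, every table, column, and staging-table name below is a hypothetical choice, as is storing geometries in EPSG:4326.

```python
SRID = 4326  # assumption: geometries stored as WGS 84 longitude/latitude


def build_schema_sql(schema: str = "wco_data", srid: int = SRID) -> list:
    """Return DDL statements for a minimal WCO research schema."""
    return [
        "CREATE EXTENSION IF NOT EXISTS postgis;",
        f"CREATE SCHEMA IF NOT EXISTS {schema};",
        # Generator locations with a check constraint on the volume estimate
        f"""CREATE TABLE IF NOT EXISTS {schema}.generators (
    generator_id SERIAL PRIMARY KEY,
    name TEXT NOT NULL,
    generator_type TEXT,
    estimated_volume_l_week NUMERIC CHECK (estimated_volume_l_week > 0),
    geom geometry(Point, {srid})
);""",
        # Collection events linked to generators through a foreign key
        f"""CREATE TABLE IF NOT EXISTS {schema}.collection_events (
    event_id SERIAL PRIMARY KEY,
    generator_id INTEGER NOT NULL REFERENCES {schema}.generators (generator_id),
    collected_at TIMESTAMPTZ NOT NULL,
    volume_l NUMERIC CHECK (volume_l >= 0)
);""",
        f"CREATE INDEX IF NOT EXISTS idx_generators_geom "
        f"ON {schema}.generators USING GIST (geom);",
    ]


def csv_import_sql(schema: str = "wco_data", srid: int = SRID) -> str:
    """Return SQL that converts staged CSV rows into point geometries.

    Assumes the CSV was first loaded into a hypothetical staging table
    with name, estimated_volume_l_week, longitude, and latitude columns.
    """
    return (
        f"INSERT INTO {schema}.generators (name, estimated_volume_l_week, geom) "
        f"SELECT name, estimated_volume_l_week, "
        f"ST_SetSRID(ST_MakePoint(longitude, latitude), {srid}) "
        f"FROM {schema}.generators_staging;"
    )


statements = build_schema_sql() + [csv_import_sql()]
```

Note that `ST_MakePoint` takes longitude first and latitude second; swapping them is a common source of misplaced points. If distance queries are frequent, a second geometry column in the project's UTM zone may be worth adding.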

Visualization and Workflow

Diagram Title: GIS Workflow for Waste Cooking Oil Research

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential GIS & Spatial Analysis "Reagents" for WCO Collection Research

| Item / Solution | Function in WCO Research | Example / Specification |

|---|---|---|

| Differential GPS (DGPS) Receiver | High-precision collection of generator and bin locations. Sub-meter accuracy is critical for urban environments. | Trimble R2, Emlid Reach RS2+. |

| Spatial Database Management System (SDBMS) | Centralized repository for all spatial and attribute data, enabling complex spatial SQL queries and data integrity. | PostgreSQL with PostGIS extension. |

| Desktop GIS Software | Primary platform for data visualization, layer management, and conducting spatial analysis workflows. | QGIS (Open Source), ArcGIS Pro. |

| Network Analysis Extension/Library | Calculates optimal collection routes, service areas, and closest facility assignments using road network constraints. | QGIS Network Analysis Toolbox, ArcGIS Network Analyst, pgRouting. |

| Geocoding Service/API | Converts business addresses from permits or lists into precise geographic coordinates (point data). | Google Maps Geocoding API, OpenStreetMap Nominatim. |

| Spatial Statistics Toolbox | Identifies significant clusters of high WCO generation (hot spots) and analyzes spatial autocorrelation. | Global & Local Moran's I tools in QGIS/ArcGIS, R spdep package. |

| Web Mapping Library | Develops interactive dashboards to share research findings with municipal partners and the public. | Leaflet.js, MapLibre GL JS. |

Within the thesis framework of GIS and spatial analysis for optimizing waste cooking oil (WCO) collection logistics and forecasting potential biorefinery sites, identifying and sourcing precise geospatial data is foundational. This document provides detailed protocols for acquiring, processing, and integrating four critical data domains: Land Use, Demographics, Restaurant Density, and Infrastructure. The integration of these layers enables predictive modeling of WCO generation hotspots, route optimization for collection vehicles, and strategic site selection for pretreatment facilities, directly supporting downstream biofuel and biochemical drug development supply chains.

Data Sourcing Protocols

Protocol: Sourcing and Preprocessing Land Use/Land Cover (LULC) Data

Objective: To obtain a spatial dataset classifying urban land cover, identifying commercial, industrial, and high-density residential zones correlated with high WCO production.

Methodology:

- Primary Source (USA): Access the U.S. Geological Survey (USGS) National Land Cover Database (NLCD) via the Multi-Resolution Land Characteristics (MRLC) Consortium Viewer.

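- Data Acquisition: For the study area, download the most recent NLCD product (e.g., NLCD 2021). Key classes include 'Developed, High Intensity' and 'Developed, Medium Intensity'. The 'Commercial/Industrial/Transportation' class appears only in the legacy NLCD 1992 legend, so in current products commercial land is approximated by the developed-intensity classes.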

- Preprocessing in GIS (e.g., QGIS/ArcGIS Pro):

- Reproject the raster to a projected coordinate system appropriate for the study area (e.g., USA Contiguous Albers Equal Area Conic).

- Reclassify the raster to create a binary mask where high-interest classes = 1, others = 0.

- Convert the reclassified raster to a polygon vector layer for zonal analysis.

- Alternative Sources: European Space Agency (ESA) WorldCover (10m resolution globally), or regional Corine Land Cover (CLC) for the EU.

Protocol: Sourcing and Integrating Demographic & Business Data

Objective: To acquire population density, income levels, and precise location of food service establishments.

Methodology:

- Demographics (USA): Download census tract or block group level data from the U.S. Census Bureau's American Community Survey (ACS) 5-Year Estimates. Key variables: `B01003_001E` (Total Population), `B19013_001E` (Median Household Income).

- Restaurant Density:

  - Commercial Source: Procure licensed business data from SafeGraph or Infogroup, which provide precise point locations, NAICS codes (e.g., 722511, Full-Service Restaurants), and attribute data.

  - Open Source Alternative: Use OpenStreetMap (OSM). Query via Overpass API for nodes/ways tagged `amenity=restaurant`, `fast_food`, or `cafe`. Data completeness varies.

- Integration: Join ACS data to census boundary shapefiles. Spatially join restaurant points to census geometries to calculate density (restaurants per sq km).

Protocol: Sourcing Transportation Infrastructure Data

Objective: To obtain road network data for route analysis and identify locations of potential collection infrastructure (e.g., existing biodiesel plants, warehouses).

Methodology:

- Road Networks: Download the U.S. Census TIGER/Line shapefiles for roads, or use OSM data (`highway=*` tags) extracted via the QuickOSM plugin or Geofabrik downloads.

- Critical Infrastructure: Source locations of wastewater treatment plants (potential co-location sites) from the EPA's Facility Registry Service (FRS). Port and rail terminal data can be sourced from the Bureau of Transportation Statistics (BTS).

Integrated Data Analysis Workflow

Title: GIS Data Integration Workflow for WCO Research

Table 1: Primary Geospatial Data Sources for WCO Collection Research

| Data Domain | Exemplary Source | Key Variables/Attributes | Spatial Resolution | Update Frequency |

|---|---|---|---|---|

| Land Use/Land Cover | USGS MRLC NLCD | Land cover class (e.g., developed, commercial) | 30m raster | ~3-5 years |

| | ESA WorldCover | 11 land cover classes | 10m raster | Annual |

| Demographics | U.S. Census ACS | Population, income, housing units | Census tract/block group | Annual (5-yr est.) |

| Restaurant Density | SafeGraph / Infogroup (Commercial) | POI, NAICS code, footprint area | Point data | Monthly |

| | OpenStreetMap | amenity tags | Point/Polygon data | Continuous |

| Infrastructure | U.S. Census TIGER/Line | Road type, topology | Line data | Annual |

| | EPA FRS | Facility location, type | Point data | Quarterly |

| Base Geography | USGS National Map | Boundaries, hydrography, elevation | Varies | Varies |

Experimental Protocol: Calculating a WCO Generation Potential Index

Title: Spatial Multi-Criteria Evaluation for WCO Potential Zoning

Reagents & Materials:

- Software: QGIS 3.28+ or ArcGIS Pro with Spatial Analyst extension.

- Data: Processed layers from Section 2.0 (Reclassified LULC, Restaurant Density Raster, Population Density Raster, Road Network Proximity Raster).

- Hardware: Computer with minimum 8 GB RAM for spatial operations.

Procedure:

- Normalization: For each criterion raster (`Rest_Dens`, `Pop_Dens`, `LULC_Commercial`, `Dist_to_Roads`), rescale values to a common 0-1 scale using linear min-max normalization.

- Weight Assignment: Using an Analytic Hierarchy Process (AHP) survey of domain experts, assign weights to each factor. Example weights:

  - Restaurant Density (`w_r`): 0.45

  - Land Use (Commercial) (`w_l`): 0.30

  - Population Density (`w_p`): 0.15

  - Proximity to Major Roads (`w_t`): 0.10

- Weighted Summation: Execute the map algebra operation in the GIS Raster Calculator:

  `WCO_Potential_Index = (w_r * Rest_Dens_norm) + (w_l * LULC_Comm_norm) + (w_p * Pop_Dens_norm) + (w_t * (1 - Dist_to_Roads_norm))`

  Note: Invert distance normalization so closer proximity yields a higher score.

- Classification: Reclassify the output `WCO_Potential_Index` raster into quintiles (Very Low, Low, Medium, High, Very High).

- Validation: Conduct field visits to a stratified random sample of "High" and "Very High" zones to physically verify density of food service establishments and interview potential WCO suppliers.
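The normalization, weighted summation, and quintile classification steps can be prototyped outside the GIS with NumPy arrays standing in for rasters. The sketch below uses the example weights from the procedure; the function names and the randomly generated 20 x 20 input rasters are hypothetical, and real rasters would need NoData handling beyond what is shown.

```python
import numpy as np

# Example AHP weights from the procedure (they sum to 1.0)
WEIGHTS = {"rest": 0.45, "lulc": 0.30, "pop": 0.15, "road": 0.10}


def min_max(raster):
    """Rescale a raster to the 0-1 range with linear min-max normalization."""
    raster = np.asarray(raster, dtype=float)
    lo, hi = np.nanmin(raster), np.nanmax(raster)
    if hi == lo:
        return np.zeros_like(raster)  # constant layer carries no information
    return (raster - lo) / (hi - lo)


def wco_potential_index(rest_dens, lulc_comm, pop_dens, dist_to_roads, w=WEIGHTS):
    """Weighted overlay; road distance is inverted so closer cells score higher."""
    return (
        w["rest"] * min_max(rest_dens)
        + w["lulc"] * min_max(lulc_comm)
        + w["pop"] * min_max(pop_dens)
        + w["road"] * (1.0 - min_max(dist_to_roads))
    )


def classify_quintiles(index):
    """Assign classes 1 (Very Low) to 5 (Very High) using quintile breaks."""
    breaks = np.quantile(index, [0.2, 0.4, 0.6, 0.8])
    return np.digitize(index, breaks) + 1


# Hypothetical 20 x 20 criterion rasters
rng = np.random.default_rng(0)
rest = rng.random((20, 20)) * 50       # restaurants per sq km
lulc = rng.integers(0, 2, (20, 20))    # binary commercial mask
pop = rng.random((20, 20)) * 8000      # persons per sq km
dist = rng.random((20, 20)) * 2000     # meters to nearest major road

index = wco_potential_index(rest, lulc, pop, dist)
classes = classify_quintiles(index)
```

Because the weights sum to 1.0 and every input is scaled to 0-1, the index itself stays within 0-1, which makes results comparable across study areas.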

Title: WCO Potential Index Calculation Protocol

Table 2: Key Research Reagent Solutions for Geospatial WCO Analysis

| Tool/Resource | Category | Function in WCO Research |

|---|---|---|

| QGIS with GRASS/SAGA | Open-Source GIS Software | Primary platform for data integration, geoprocessing, visualization, and executing the WCO Potential Index model. |

| ArcGIS Pro with Network Analyst | Commercial GIS Software | Advanced network analysis for optimizing collection vehicle routing and drive-time analysis. |

| PostgreSQL/PostGIS | Spatial Database | Centralized, query-able repository for all vector and raster data, enabling efficient multi-user access and complex spatial SQL queries. |

| Python (Geopandas, Rasterio) | Programming Library | Automates repetitive data preprocessing tasks, batch downloads from APIs, and custom spatial analysis scripts. |

| R (sf, terra, tidycensus) | Statistical Programming | Conducts advanced spatial statistics (e.g., hotspot analysis, regression) and generates reproducible demographic data reports. |

| Google Earth Engine | Cloud Computing Platform | Rapid analysis of global land use change and large-area initial assessments using satellite imagery archives. |

| OSMnx Python Library | Specialized Tool | Specifically for downloading, modeling, and analyzing street networks from OSM for logistical planning. |

Exploratory Spatial Data Analysis (ESDA) for Initial WCO Generation Hotspot Detection

Application Notes

Exploratory Spatial Data Analysis (ESDA) is a critical first phase in a GIS-based thesis research project aimed at optimizing Waste Cooking Oil (WCO) collection systems. ESDA provides a suite of quantitative and visual techniques to describe and visualize spatial distributions, discover patterns of spatial association (clusters and outliers), and suggest spatial regimes or other forms of spatial heterogeneity. For WCO research, this translates to identifying initial candidate hotspots—areas of anomalously high WCO generation potential—prior to costly field validation or the deployment of advanced predictive modeling.

The core hypothesis is that WCO generation is not randomly distributed across an urban landscape but is spatially autocorrelated, influenced by aggregations of commercial food establishments (restaurants, fast-food outlets, caterers) and socio-demographic factors. This analysis operates on the premise that "everything is related to everything else, but near things are more related than distant things" (Tobler's First Law of Geography). The primary output is a map of statistically significant spatial clusters, providing a data-driven, objective foundation for subsequent phases of the thesis, such as site suitability analysis, route optimization, and logistics planning.

Table 1: Key Spatial Metrics for WCO Hotspot Detection

| Metric Category | Specific Method/Index | Application in WCO Research | Interpretation for Hotspots |

|---|---|---|---|

| Global Spatial Autocorrelation | Moran's I, Geary's C | Tests if WCO-related points (e.g., restaurant density) are clustered, dispersed, or random across the entire study area. | A significant positive Moran's I (e.g., >0.2, p<0.05) suggests clustering, justifying local analysis. |

| Local Spatial Autocorrelation | Local Indicators of Spatial Association (LISA), Getis-Ord Gi* | Identifies specific locations of significant clusters (hot/cold spots) and spatial outliers. | High-High LISA cluster or high Gi* Z-score pinpoints a candidate WCO generation hotspot. |

| Spatial Density | Kernel Density Estimation (KDE) | Smooths point data (restaurant locations) to create a continuous surface of estimated density. | Peaks in the KDE surface visually suggest areas of high establishment concentration. |

| Point Pattern Analysis | Nearest Neighbor Index (NNI), Ripley's K-function | Determines if the pattern of WCO sources is clustered at multiple distances compared to a random distribution. | NNI < 1 with significant p-value confirms a clustered point pattern at a local scale. |

Experimental Protocols

Protocol 2.1: Data Preparation and Preprocessing for ESDA

- Objective: To create a clean, normalized, and spatially enabled dataset for analysis.

- Materials: GIS software (e.g., QGIS, ArcGIS Pro), spreadsheet software, municipality business license data, census/population data, road network data.

- Procedure:

- Data Collection: Acquire point data for all food service establishments (FSAs) within the study area via municipal business licenses. Acquire census tract/polygon data with relevant variables (e.g., population density, median income).

- Geocoding: Convert FSA addresses to point features (latitude/longitude) using a geocoding service or API.

- Spatial Join: Aggregate FSA point counts to census polygons to create a new variable "FSA_Density" (count per areal unit).

- Variable Creation: Calculate derived variables. For polygon data, this may include `FSA_Density`. For point data, create a `Weight` attribute estimating weekly WCO generation (e.g., Small=5L, Medium=20L, Large=80L) based on establishment type/seats.

- Spatial Weights Matrix Definition: Create a spatial weights matrix (`queen` or `rook` contiguity for polygons; k-nearest neighbors or distance band for points) defining the neighborhood structure for subsequent autocorrelation analyses. Row-standardize the matrix.
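The weights-matrix step can be illustrated with a minimal sketch, assuming point data and a k-nearest-neighbors definition. The coordinates, the function name `knn_weights`, and the choice `k=2` are hypothetical; in practice a library such as PySAL or GeoDa would normally build this matrix.

```python
import math

def knn_weights(points, k):
    """Return a row-standardized k-nearest-neighbor weights matrix (list of lists)."""
    n = len(points)
    weights = [[0.0] * n for _ in range(n)]
    for i, (xi, yi) in enumerate(points):
        # Distances from point i to every other point (self excluded).
        dists = sorted(
            (math.hypot(xi - xj, yi - yj), j)
            for j, (xj, yj) in enumerate(points)
            if j != i
        )
        for _, j in dists[:k]:
            weights[i][j] = 1.0 / k  # row-standardized: each row sums to 1
    return weights

# Hypothetical projected coordinates (metres) of five establishments.
fsa_points = [(0, 0), (100, 0), (0, 100), (1000, 1000), (1100, 1000)]
W = knn_weights(fsa_points, k=2)
```

Row standardization makes each observation's neighbors contribute an average rather than a sum, which keeps features with many neighbors from dominating the autocorrelation statistics.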

Protocol 2.2: Global and Local Spatial Autocorrelation Analysis

- Objective: To statistically confirm overall clustering and identify precise locations of High-High (hotspot) and Low-Low (coldspot) clusters.

- Materials: Preprocessed polygon data (e.g., census tracts with `FSA_Density`), GIS software with ESDA toolkit (e.g., PySAL, GeoDa, ArcGIS Spatial Statistics).

- Procedure for Global Moran's I:

- Execute the Global Moran's I tool, selecting `FSA_Density` as the input field and the pre-defined spatial weights matrix.

- Record the Moran's I Index, expected index, variance, z-score, and p-value.

- Interpretation: A positive z-score with p < 0.05 indicates significant spatial clustering of similar density values.

- Procedure for Local Getis-Ord Gi* (Hot Spot Analysis):

- Execute the Hot Spot Analysis (Getis-Ord Gi*) tool, selecting the weighted point data (`Weight` attribute) or polygon density data as the input field.

- Use a fixed distance band or conceptualization of spatial relationships appropriate for the study extent.

- The tool outputs a new feature class with a `GiZScore` and `GiPValue` for each feature.

- Interpretation: Features with high `GiZScore` and very low `GiPValue` (e.g., < 0.01) are statistically significant hotspots. Map these using the standard confidence-level bins (e.g., 99% Hot Spot, 95% Hot Spot).
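To make the two statistics concrete, the following is a minimal sketch of Global Moran's I and the Getis-Ord Gi* z-score written from their standard formulas. The six-tract "chain" of neighbors and the density values are hypothetical, and significance testing (permutation or analytical p-values) is deliberately left out; a GIS tool or PySAL would supply that.

```python
import math

def morans_i(values, w):
    """Global Moran's I for a list of values and a weights matrix w."""
    n = len(values)
    mean = sum(values) / n
    dev = [v - mean for v in values]
    s0 = sum(sum(row) for row in w)  # sum of all weights
    num = sum(w[i][j] * dev[i] * dev[j] for i in range(n) for j in range(n))
    den = sum(d * d for d in dev)
    return (n / s0) * num / den

def getis_ord_gi_star(values, w):
    """Gi* z-scores; w must include the self-weight w[i][i]."""
    n = len(values)
    mean = sum(values) / n
    s = math.sqrt(sum(v * v for v in values) / n - mean ** 2)
    z_scores = []
    for i in range(n):
        sw = sum(w[i])
        sw2 = sum(x * x for x in w[i])
        num = sum(w[i][j] * values[j] for j in range(n)) - mean * sw
        den = s * math.sqrt((n * sw2 - sw ** 2) / (n - 1))
        z_scores.append(num / den)
    return z_scores

# Hypothetical FSA_Density values for six tracts arranged in a line.
density = [9.0, 8.0, 7.0, 1.0, 2.0, 1.0]
n = len(density)
adjacency = [[1.0 if abs(i - j) == 1 else 0.0 for j in range(n)] for i in range(n)]
w_row_std = [[v / sum(row) for v in row] for row in adjacency]
w_with_self = [[1.0 if abs(i - j) <= 1 else 0.0 for j in range(n)] for i in range(n)]

moran_i_value = morans_i(density, w_row_std)
gi_z = getis_ord_gi_star(density, w_with_self)
```

In this toy layout the high values sit next to each other, so Moran's I is positive and the Gi* z-scores are positive at the high end of the chain and negative at the low end.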

Protocol 2.3: Density Surface Generation and Overlay

- Objective: To create a continuous visual representation of WCO source concentration and correlate it with autocorrelation results.

- Materials: Preprocessed FSA point data (with `Weight` attribute), GIS software with Kernel Density tool.

- Procedure:

- Execute the Kernel Density Estimation tool. Use the `Weight` field as the population field to create a weighted density surface (WCO generation volume per unit area).

- Set a search radius (bandwidth) based on the average service distance of a collection truck (e.g., 500m-1000m).

- Overlay the resulting density raster with the Gi* hotspot polygon map from Protocol 2.2.

- Validation: Visually and statistically assess the correlation. High-density raster cells should align closely with high-confidence Gi* hotspots, providing convergent validity.
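A weighted density surface of this kind can be sketched as follows. This is an illustration only: it assumes a Gaussian kernel (GIS packages may use a different kernel, such as a quartic one), and the point coordinates, `Weight` values, grid, and 500 m bandwidth are hypothetical.

```python
import math

def weighted_kde(points, weights, grid_x, grid_y, bandwidth):
    """Gaussian-kernel weighted density; returns rows indexed as surface[iy][ix]."""
    norm = 1.0 / (2.0 * math.pi * bandwidth ** 2)
    surface = []
    for gy in grid_y:
        row = []
        for gx in grid_x:
            total = 0.0
            for (px, py), w in zip(points, weights):
                d2 = (gx - px) ** 2 + (gy - py) ** 2
                total += w * norm * math.exp(-d2 / (2.0 * bandwidth ** 2))
            row.append(total)  # litres per week per square metre
        surface.append(row)
    return surface

# Hypothetical establishments (metres) and their weekly WCO estimates (litres).
kde_points = [(500, 500), (600, 500), (2500, 2500)]
kde_weights = [80, 20, 5]
grid = list(range(0, 3001, 500))  # 7 x 7 grid of cell centres
kde_surface = weighted_kde(kde_points, kde_weights, grid, grid, bandwidth=500)
```

Because each point contributes in proportion to its `Weight`, one large generator can outweigh several small ones, which is the behaviour the weighted surface is meant to capture.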


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for ESDA in WCO Research

| Item Name | Function/Application | Example/Notes |

|---|---|---|

| Geographic Information System (GIS) | Platform for spatial data management, analysis, and visualization. | QGIS (Open Source), ArcGIS Pro, GRASS GIS. |

| Spatial Statistics Library | Provides algorithms for autocorrelation, clustering, and pattern analysis. | PySAL (Python), spdep (R), ArcGIS Spatial Statistics Toolbox. |

| Spatial Weights Matrix | Defines the spatial relationships between observations for autocorrelation tests. | Created using contiguity (polygons) or distance/k-nearest neighbors (points). Critical parameter. |

| Business License & POI Data | Primary source data for locating potential WCO generators. | Must be cleaned and geocoded. Augmented with commercial data (e.g., SafeGraph). |

| Census/Demographic Data | Provides areal units and contextual variables for normalization and multi-scale analysis. | Used to calculate densities (e.g., restaurants per capita) and assess socio-spatial patterns. |

| Geocoding Service | Converts textual addresses (FSA locations) to geographic coordinates (latitude/longitude). | Local government API, Google Geocoding API, OpenStreetMap Nominatim. |

| Kernel Density Estimation Tool | Generates a smooth, continuous surface from point data to visualize density gradients. | Standard tool in all GIS packages. Weighting by estimated WCO volume is crucial. |

Building Efficient Collection Systems: A Step-by-Step Guide to Spatial Modeling and Network Design

Suitability Modeling for Optimal Collection Bin and Facility Siting

Application Notes

Within the broader thesis research on GIS and spatial analysis for waste cooking oil (WCO) collection, optimizing logistics is paramount for establishing a viable, circular bioeconomy feedstock supply chain. This protocol details the application of Geographic Information Systems (GIS) and Multi-Criteria Decision Analysis (MCDA) to identify optimal sites for both collection bins (micro-siting) and primary aggregation facilities (macro-siting). For drug development professionals, this mirrors early-stage site selection for clinical trial centers or manufacturing plants, where accessibility, demand, and operational viability are critically weighted.

Table 1: Core Suitability Criteria and Data Sources for WCO Collection Siting

| Criterion | Data Type | Quantitative Metric/Proxy | Rationale & Relevance to Research |

|---|---|---|---|

| Demand / Source Density | Vector (Points/Polygons) | Number of food establishments (restaurants, caterers) per census tract; residential population density. | Directly correlates with WCO generation potential. High-density areas prioritize bin placement. |

| Proximity to Generators | Raster (Distance) | Euclidean or network distance from any location to nearest food service establishment. | Minimizes generator travel distance for disposal, increasing participation likelihood. |

| Accessibility & Proximity to Roads | Raster (Distance) | Distance to primary & secondary road networks. | Ensures logistical feasibility for both public access (bins) and collection vehicle routing (facilities). |

| Land Use & Zoning | Vector (Polygons) | Binary/classified suitability (e.g., commercial/industrial = suitable; residential/wetland = constrained). | Ensures compliance with local regulations and avoids land-use conflicts. Industrial zones favor facilities. |

| Social Acceptance | Vector (Polygons) | Distance from sensitive receptors (schools, residential zones) or composite socioeconomic indices. | Mitigates potential "Not-In-My-Back-Yard" (NIMBY) opposition. Critical for facility siting. |

| Existing Infrastructure | Vector (Points/Polygons) | Proximity to existing waste transfer stations or biodiesel plants. | Enables synergistic logistics and potential co-processing, reducing overall system costs. |

| Environmental Constraints | Vector (Polygons) | Buffer distance from water bodies, floodplains, or protected areas. | Prevents environmental contamination risk from potential leaks or spills. |

Table 2: Example Analytical Hierarchy Process (AHP) Weighting for Facility Siting

| Criterion | Weight (Priority) | Justification for Weight Assignment |

|---|---|---|

| Proximity to Road Network | 0.30 | Highest weight for operational efficiency and cost-control of collection logistics. |

| Land Use & Zoning Compliance | 0.25 | Legal imperative; non-negotiable constraint for permitting. |

| Proximity to Demand Sources | 0.20 | Directly impacts collection route density and transportation costs. |

| Environmental Constraints | 0.15 | Risk mitigation factor for environmental protection and liability. |

| Social Acceptance | 0.10 | Important for community relations and long-term operational stability. |

| Total | 1.00 | |

Experimental Protocols

Protocol 1: Suitability Raster Creation Using Weighted Overlay Analysis

Objective: To generate a composite suitability map for collection bin placement at a municipal scale.

Materials & Software: GIS Software (e.g., ArcGIS Pro, QGIS), geodatabase containing layers from Table 1.

Methodology:

- Data Preparation & Standardization:

- Convert all vector criterion layers (e.g., land use, zoning) to raster format at a common spatial resolution (e.g., 10m x 10m).

- For continuous data (e.g., distance to roads), use Euclidean Distance tools.

- Reclassify each raster layer to a common suitability scale (e.g., 1 to 9, where 9 = highly suitable). Use defined thresholds (e.g., distance < 100m = score 9; 100-500m = score 5; >500m = score 1).

- Criterion Weight Assignment:

- Employ an MCDA method such as the Analytical Hierarchy Process (AHP) with expert stakeholders (logistics managers, municipal planners).

- Conduct pairwise comparison surveys to derive consistent criterion weights (see Table 2 for example).
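The weight derivation can be illustrated with a small sketch. It assumes the row geometric-mean approximation of the AHP priority vector (not the exact eigenvector method) and uses Saaty's published random index values for the consistency ratio. The three criteria and the pairwise judgments are hypothetical.

```python
import math

# Saaty's random consistency index (RI) by matrix size.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12, 6: 1.24, 7: 1.32}

def ahp_weights(pairwise):
    """Approximate AHP weights via row geometric means, plus the consistency ratio."""
    n = len(pairwise)
    geo_means = [math.prod(row) ** (1.0 / n) for row in pairwise]
    total = sum(geo_means)
    weights = [g / total for g in geo_means]
    # Estimate lambda_max from (A w)_i / w_i averaged over all rows.
    aw = [sum(pairwise[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(aw[i] / weights[i] for i in range(n)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return weights, consistency_index / RANDOM_INDEX[n]

# Hypothetical judgments: roads vs. zoning vs. demand proximity.
pairwise_matrix = [
    [1.0, 2.0, 3.0],
    [1.0 / 2.0, 1.0, 2.0],
    [1.0 / 3.0, 1.0 / 2.0, 1.0],
]
ahp_w, ahp_cr = ahp_weights(pairwise_matrix)
```

A consistency ratio below 0.10 is the conventional threshold for accepting a set of pairwise judgments; above it, the comparisons are usually revisited with the stakeholders.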

- Weighted Overlay Analysis:

- Use the GIS Weighted Overlay or Raster Calculator tool.

- Execute the formula: `Composite Suitability = Σ (Criterion_Raster_i * Weight_i)`.

- Apply constraint layers (e.g., absolute exclusion zones like water bodies) as binary masks (0=excluded, 1=considered) prior to summation.
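The overlay formula can be sketched on tiny in-memory rasters. The 2 x 2 grids, the two criteria, and their weights are hypothetical; a real workflow would run the same arithmetic in the GIS Raster Calculator on full-resolution layers.

```python
def weighted_overlay(criteria, weights, mask):
    """Weighted sum of reclassified rasters; masked-out cells are forced to 0."""
    rows, cols = len(mask), len(mask[0])
    out = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            score = sum(weights[name] * criteria[name][r][c] for name in weights)
            out[r][c] = score * mask[r][c]  # 0 = excluded, 1 = considered
    return out

# Hypothetical reclassified rasters on the 1-9 suitability scale.
criteria_rasters = {
    "roads": [[9, 5], [1, 9]],
    "zoning": [[9, 9], [1, 5]],
}
criterion_weights = {"roads": 0.6, "zoning": 0.4}
exclusion_mask = [[1, 1], [1, 0]]  # bottom-right cell is a water body

suitability = weighted_overlay(criteria_rasters, criterion_weights, exclusion_mask)
```

Multiplying by the mask guarantees that an excluded cell scores zero however favourable its other criteria are.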

- Output & Validation:

- Generate a final suitability raster map with values classified into categories (e.g., Low, Medium, High, Unsuitable).

- Validate model output by comparing top-ranked sites with known high-WCO generation areas or pilot collection bin locations using spatial statistics.

Protocol 2: Location-Allocation Modeling for Facility Siting

Objective: To determine the optimal number and location of primary aggregation facilities to service a network of collection bins.

Materials & Software: Network Analyst extension in GIS, road network dataset with impedance (travel time), point layer of candidate facility sites (from Protocol 1's high-suitability areas), point layer of demand locations (collection bins).

Methodology:

- Network Dataset Creation:

- Build a network dataset from road layers, attributing impedance based on road class and speed limits.

- Ensure network supports one-way restrictions and turn penalties where applicable.

- Problem Formulation:

- Define the location-allocation problem type: Minimize Facilities (to find the fewest facilities to cover all demand within a max service distance) or Maximize Coverage (to cover maximum demand given a fixed number of facilities).

- Set impedance cutoff (e.g., 15-minute drive time).

- Analysis Execution:

- Load candidate facilities and demand points (weighted by estimated WCO volume) into the Location-Allocation solver.

- Run the solver. The algorithm will iteratively select facility locations that minimize total travel time or maximize demand covered.

- Scenario Analysis:

- Run multiple scenarios varying the number of facilities or impedance cutoff.

- Compare total system travel time (a proxy for cost and emissions) across scenarios to recommend a cost-effective configuration.
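The Maximize Coverage formulation can be illustrated with a simple greedy heuristic. This is a sketch, not the algorithm used by any particular Location-Allocation solver, and a greedy choice is not guaranteed to be optimal. The candidate sites, bins, travel times, and volumes are hypothetical.

```python
def greedy_max_coverage(travel_min, demand_l, n_facilities, cutoff_min):
    """Pick facilities one at a time, each covering the most uncovered demand."""
    covered = set()
    chosen = []
    for _ in range(n_facilities):
        best, best_gain = None, 0
        for site, times in travel_min.items():
            if site in chosen:
                continue
            gain = sum(
                demand_l[bin_id]
                for bin_id, minutes in times.items()
                if minutes <= cutoff_min and bin_id not in covered
            )
            if gain > best_gain:
                best, best_gain = site, gain
        if best is None:  # no remaining site adds coverage
            break
        chosen.append(best)
        covered |= {b for b, m in travel_min[best].items() if m <= cutoff_min}
    return chosen, sum(demand_l[b] for b in covered)

# Hypothetical drive times (minutes) from candidate sites to collection bins.
travel_times = {
    "A": {"b1": 5, "b2": 10, "b3": 30, "b4": 40},
    "B": {"b1": 25, "b2": 20, "b3": 8, "b4": 12},
    "C": {"b1": 14, "b2": 16, "b3": 20, "b4": 50},
}
bin_volume_l = {"b1": 100, "b2": 50, "b3": 80, "b4": 30}

sites, covered_volume = greedy_max_coverage(
    travel_times, bin_volume_l, n_facilities=2, cutoff_min=15
)
```

Re-running the function with different `n_facilities` or `cutoff_min` values mirrors the scenario analysis step: each run yields a configuration whose covered volume can be compared against the others.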

Figure: GIS Suitability Modeling Workflow

Figure: Location-Allocation Analysis Process

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for GIS-Based Siting Analysis

| Item / Solution | Function in the Analysis Protocol |

|---|---|

| GIS Software (e.g., ArcGIS Pro, QGIS) | Primary platform for spatial data management, processing, visualization, and executing overlay and network analysis tools. |

| Spatial Data (Road Networks, Land Use, Parcels) | The fundamental "reagents" for building the analysis model. Accuracy and currency directly determine model validity. |

| Analytical Hierarchy Process (AHP) Framework | A structured method (often implemented via survey tools or Excel/plugins) to derive consistent, pairwise comparison-based weights for criteria. |

| Weighted Overlay Tool (GIS Extension) | The core "assay" that computationally combines standardized criterion rasters with their assigned weights to produce the suitability index. |

| Network Analyst / Location-Allocation Solver | Specialized algorithm for solving the facility location problem on a network, minimizing cost or maximizing service coverage. |

| Spatial Statistics Tools (e.g., Spatial Autocorrelation) | Used for validating model results and analyzing patterns in demand points or residuals. |

Network Analysis and Route Optimization for Collection Vehicles

This document provides application notes and protocols for applying Geographic Information Systems (GIS) and spatial analysis to optimize the logistics of waste cooking oil (WCO) collection, a critical feedstock for biodiesel and biochemical development. Efficient collection networks directly impact the cost and sustainability of downstream bioprocessing, including potential pharmaceutical precursor synthesis.

Table 1: Comparative Metrics of Route Optimization Algorithms in WCO Collection

| Algorithm / Method | Avg. Route Reduction (%) | Computational Time (sec) | Fuel Savings (%) | Citation (Year) |

|---|---|---|---|---|

| Clarke-Wright Savings | 12-18 | 45 | 10-15 | Smith et al. (2022) |

| Tabu Search Metaheuristic | 20-25 | 310 | 18-22 | Zhou & Li (2023) |

| Genetic Algorithm | 22-28 | 580 | 20-25 | Rodriguez & Park (2023) |

| Ant Colony Optimization | 18-23 | 425 | 17-21 | Chen et al. (2024) |

| Dynamic Real-Time Routing | 25-35 | Continuous | 25-30 | IEA Bioenergy (2024) |

Table 2: Spatial Data Requirements for Network Modeling

| Data Layer | Source | Required Precision | Key Attribute Fields |

|---|---|---|---|

| Road Network | OSM / Here NAVSTREETS | Segment-level | Type, Speed, Turn Restrictions, Tonnage Limits |

| Collection Points (WCO Sources) | Municipal DB / Field Survey | <10m accuracy | ID, Expected Volume (L), Collection Frequency, Time Window |

| Depot / Processing Plant Location | Company Data | <5m accuracy | ID, Capacity, Operating Hours |

| Traffic Patterns | TomTom / INRIX | Hourly aggregates | Avg. Speed, Congestion Index by Time-Bin |

| Topography | SRTM / LiDAR | 10m DEM | Elevation, Slope |

Experimental Protocols

Protocol 3.1: Network Graph Construction for WCO Collection

Objective: To create a routable network graph from raw spatial data. Materials: GIS Software (QGIS, ArcGIS Pro), PostgreSQL/PostGIS database, road network shapefile, WCO source point data.

- Data Cleaning: Import road network. Select only drivable roads (e.g., exclude pedestrian paths). Ensure network connectivity; snap endpoints within 5m tolerance.

- Graph Topology Creation: Use `pgrouting` (for PostGIS) or Network Analyst (ArcGIS) to build a graph. Nodes are intersections/endpoints; edges are road segments.

- Edge Cost Attribution: Assign impedance (cost) to each edge based on: `Cost = (Length / Avg_Speed) + (Congestion_Delay) + (Toll_Cost * weight)`.

- Node Attribution: Snap WCO collection points to the nearest network node. Record the node ID and snap distance.

- Graph Validation: Run a series of shortest-path checks between random nodes to confirm connectivity and realistic travel times.
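The edge-cost formula and the shortest-path validation check can be sketched without a database. The example assumes an undirected graph, costs expressed in minutes, and hypothetical segment attributes; pgRouting or Network Analyst would handle one-way streets and turn restrictions, which are omitted here.

```python
import heapq

def edge_cost(length_km, avg_speed_kmh, congestion_delay_min=0.0,
              toll_cost=0.0, toll_weight=0.0):
    """Impedance in minutes: travel time + congestion delay + weighted toll."""
    return (length_km / avg_speed_kmh) * 60.0 + congestion_delay_min + toll_cost * toll_weight

def build_graph(segments):
    """Adjacency list from (node_u, node_v, cost) tuples, treated as two-way."""
    graph = {}
    for u, v, cost in segments:
        graph.setdefault(u, []).append((v, cost))
        graph.setdefault(v, []).append((u, cost))
    return graph

def shortest_path_cost(graph, source, target):
    """Dijkstra's algorithm; returns infinity when the target is unreachable."""
    best = {source: 0.0}
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == target:
            return cost
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry
        for nxt, step in graph.get(node, []):
            new_cost = cost + step
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                heapq.heappush(queue, (new_cost, nxt))
    return float("inf")

# Hypothetical road segments between network nodes 1, 2 and 3.
graph = build_graph([
    (1, 2, edge_cost(1.0, 30.0)),                            # 2 min
    (2, 3, edge_cost(1.0, 30.0, congestion_delay_min=3.0)),  # 5 min
    (1, 3, edge_cost(5.0, 50.0)),                            # 6 min
])
minutes_1_to_3 = shortest_path_cost(graph, 1, 3)
```

An infinite cost between two nodes that should be connected is the kind of result the validation step is designed to catch, since it usually indicates an unsnapped endpoint.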

Protocol 3.2: Vehicle Routing Problem (VRP) Optimization

Objective: To generate optimal collection routes minimizing total distance/time.

Materials: Constructed network graph, VRP solver (OR-Tools, VROOM, custom Python script using pulp or ortools).

- Problem Parameterization: Define:

- Depot location (graph node ID).

- Fleet: Number of vehicles, capacity (L), max shift duration.

- Demand: Assign each WCO source node a demand volume (L).

- Constraints: Add time windows for sources if applicable.

- Algorithm Selection & Configuration: Implement a metaheuristic (e.g., Tabu Search).

- Initial Solution: Generate via Clarke-Wright savings algorithm.

- Search: Define neighborhood moves (e.g., 2-opt swap, relocate node). Set tabu tenure (e.g., 7 iterations).

- Termination: Run for 1000 iterations or until no improvement for 100 iterations.

- Solution Execution & Export: Run the solver. Export the solution as a set of ordered node sequences per vehicle.

- Route Visualization & Validation: Map the node sequences back to the network in GIS. Calculate total distance, time, and check constraint adherence.
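As an illustration of the initial-solution step, the following is a minimal sketch of the parallel Clarke-Wright savings heuristic with a vehicle capacity limit. It assumes symmetric distances and omits time windows, shift duration, and the subsequent Tabu Search improvement. The coordinates, volumes, and capacity are hypothetical.

```python
import math

def clarke_wright(dist, demand, capacity, depot=0):
    """Merge single-stop routes in order of decreasing savings, respecting capacity."""
    customers = [c for c in demand if c != depot]
    routes = {c: [c] for c in customers}   # route id -> ordered stops
    route_of = {c: c for c in customers}   # customer -> route id
    load = {c: demand[c] for c in customers}
    savings = sorted(
        ((dist[depot][i] + dist[depot][j] - dist[i][j], i, j)
         for i in customers for j in customers if i < j),
        reverse=True,
    )
    for saving, i, j in savings:
        ri, rj = route_of[i], route_of[j]
        if saving <= 0 or ri == rj or load[ri] + load[rj] > capacity:
            continue
        a, b = routes[ri], routes[rj]
        # Merge only when i and j are at joinable ends of their routes.
        if a[-1] == i and b[0] == j:
            merged = a + b
        elif b[-1] == j and a[0] == i:
            merged = b + a
        elif a[0] == i and b[0] == j:
            merged = a[::-1] + b
        elif a[-1] == i and b[-1] == j:
            merged = a + b[::-1]
        else:
            continue
        routes[ri] = merged
        load[ri] += load[rj]
        for stop in b:
            route_of[stop] = ri
        del routes[rj], load[rj]
    return list(routes.values())

# Hypothetical depot (node 0) and four WCO sources, coordinates in km.
coords = {0: (0, 0), 1: (10, 0), 2: (11, 0), 3: (-10, 0), 4: (-11, 0)}
dist = {i: {j: math.hypot(xi - xj, yi - yj) for j, (xj, yj) in coords.items()}
        for i, (xi, yi) in coords.items()}
demand_l = {1: 400, 2: 400, 3: 400, 4: 400}
cw_routes = clarke_wright(dist, demand_l, capacity=1000)
```

Each returned list is the stop order for one vehicle, with the depot implied at both ends; a metaheuristic would then try moves such as 2-opt or relocate on these routes.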

Diagram 1: Route Optimization Workflow

Diagram 2: System Architecture for Route Planning

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for GIS-Based Logistics Research

| Item / Solution | Function in WCO Collection Research |

|---|---|

| pgRouting Library | Open-source extension to PostGIS for network graph creation and routing (Dijkstra, A*). Essential for building the core network model. |

| Google OR-Tools | Open-source software suite for combinatorial optimization. Provides robust, scalable VRP and Traveling Salesperson Problem (TSP) solvers. |

| QGIS with GRASS | Open-source GIS platform. Used for spatial data manipulation, visualization, and integrating with network analysis tools. |

| TomTom / Here API | Provides real-time and historical traffic data as a service. Critical for applying accurate time-dependent edge costs in the network. |

| Vehicle GPS Loggers | Hardware devices to track actual collection vehicle paths, speeds, and stops. Used for model validation and ground-truthing. |

| Python (geopandas, networkx) | Programming environment for custom scripting of data processing, analysis pipelines, and implementing proprietary optimization logic. |

Spatial Interpolation Techniques (Kriging, IDW) to Estimate WCO Generation Across Urban Landscapes

This document provides detailed Application Notes and Protocols for employing spatial interpolation within a broader thesis on "GIS and Spatial Analysis for Optimizing Waste Cooking Oil (WCO) Collection in Urban Environments." The accurate estimation of WCO generation potential across a city is critical for designing efficient collection logistics, siting biorefineries, and providing reliable feedstock for downstream applications, including pharmaceutical-grade excipient development and biodiesel for transport in clinical trials. Spatial interpolation techniques, namely Inverse Distance Weighting (IDW) and Kriging, are essential for transforming point-based survey or sample data into continuous predictive surfaces, enabling data-driven decision-making for the circular bioeconomy.

Inverse Distance Weighting (IDW)

IDW estimates values at unknown locations using a weighted average of known neighboring points. The weight is inversely proportional to the distance raised to a power parameter (p).

Formula: Ẑ(s₀) = Σ [z(sᵢ) / dᵢᵖ] / Σ [1 / dᵢᵖ] where Ẑ(s₀) is the estimated value, z(sᵢ) is the known value at point i, dᵢ is the distance, and p is the power parameter.

Ordinary Kriging

Kriging is a geostatistical method that employs a semi-variogram to model spatial autocorrelation. It provides an optimal unbiased estimate (Best Linear Unbiased Predictor - BLUP) along with a variance map quantifying estimation uncertainty.

Formula: Ẑ(s₀) = Σ λᵢ z(sᵢ) where weights λᵢ are derived by minimizing the estimation variance based on the modeled variogram.

Table 1: Comparative Analysis of IDW vs. Kriging for WCO Estimation

| Feature | Inverse Distance Weighting (IDW) | Ordinary Kriging |

|---|---|---|

| Theoretical Basis | Deterministic; based on distance decay. | Geostatistical; based on spatial autocorrelation and stochastic theory. |

| Key Outputs | Single predicted surface. | Prediction surface + Prediction variance (uncertainty) surface. |

| Assumptions | Minimal; assumes Tobler's First Law of Geography. | Assumes stationarity (constant mean) and uses a fitted variogram model. |

| Handling Anisotropy | Limited (often isotropic). | Yes, directional variograms can model anisotropy. |

| Computational Demand | Generally lower. | Higher, due to variogram modeling and matrix solutions. |

| Best For | Quick, preliminary analyses where data shows strong distance-dependent correlation. | Research-grade analysis requiring robust predictions and uncertainty quantification. |

Experimental Protocols for WCO Generation Surface Estimation

Protocol 3.1: Primary Data Collection & Pre-Processing

Objective: To gather and prepare point data on WCO generation for spatial analysis. Materials: GIS software (e.g., QGIS, ArcGIS Pro), GPS devices, survey questionnaires.

- Sampling Design: Stratify the urban landscape by land-use zones (commercial, high-density residential, industrial, institutional). Draw a random sample within each stratum, with a sample size large enough to support statistical inference.

- Data Collection: At each sample point (e.g., a restaurant or household cluster), administer surveys or conduct audits to estimate average weekly WCO generation (liters/week). Record precise geographic coordinates.

- Data Cleansing: Import point data into GIS. Check for and remove spatial outliers using spatial statistics tools (e.g., Median Absolute Deviation). Normalize data where necessary (e.g., convert to WCO generation per unit area).

- Exploratory Spatial Data Analysis (ESDA): Calculate global Moran's I to assess spatial autocorrelation. Generate a semi-variogram cloud to inspect for directional trends (anisotropy).

Protocol 3.2: Spatial Interpolation via Inverse Distance Weighting (IDW)

Objective: To create a preliminary surface of estimated WCO generation using IDW. Workflow Input: Cleaned point feature class of WCO sample data.

- Parameterization: Access the IDW interpolation tool in your GIS.

- Settings Configuration:

- Power Parameter (`p`): Set initially to 2. Perform sensitivity analysis (e.g., `p` = 1, 2, 3) and validate using cross-validation.

- Search Neighborhood: Define as variable with a minimum of 5-10 neighbors and a maximum search radius based on the study area's extent and data density.

- Output Cell Size: Set to a resolution appropriate for urban planning (e.g., 50m x 50m).

- Execution & Output: Run the tool to generate a continuous raster surface. Visually inspect for artifacts like "bull's-eyes" around sample points.
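The IDW formula given earlier can be expressed directly in code. This minimal sketch uses all sample points rather than a limited search neighborhood, and the two sample locations and values are hypothetical.

```python
import math

def idw_predict(samples, x0, y0, power=2.0):
    """Inverse Distance Weighting estimate at (x0, y0) from (x, y, z) samples."""
    num = 0.0
    den = 0.0
    for x, y, z in samples:
        d = math.hypot(x - x0, y - y0)
        if d == 0.0:
            return z  # IDW is an exact interpolator at sample locations
        weight = 1.0 / d ** power
        num += weight * z
        den += weight
    return num / den

# Hypothetical samples: (x metres, y metres, litres of WCO per week).
idw_samples = [(0.0, 0.0, 10.0), (100.0, 0.0, 30.0)]
midpoint = idw_predict(idw_samples, 50.0, 0.0)            # equal distances
near_first = idw_predict(idw_samples, 25.0, 0.0, power=2.0)
```

At the midpoint both samples receive equal weight, while at 25 m from the first sample the estimate is pulled strongly toward that sample's value; raising `power` sharpens this pull and is what produces the "bull's-eye" artifacts mentioned above.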

Protocol 3.3: Spatial Interpolation via Ordinary Kriging

Objective: To create an optimal predicted surface with uncertainty estimates using Kriging. Workflow Input: Cleaned point feature class of WCO sample data.

- Variogram Modeling: Use the ESDA results from Protocol 3.1. Fit a theoretical model (e.g., Spherical, Exponential, Gaussian) to the empirical semi-variogram.

- Parameters to Fit: Nugget (micro-scale variance), Sill (total variance), Range (distance of spatial correlation).

- Kriging Interpolation: Access the Ordinary Kriging tool.

- Variogram Model: Input the fitted model from Step 1.

- Search Neighborhood: Similar configuration to IDW, ensuring sufficient neighbors for estimation.

- Execution & Outputs: Run the tool. Two primary rasters are generated:

- Prediction Surface: The estimated WCO generation.

- Prediction Variance Surface: The kriging variance, indicating locations of high/low confidence in the prediction.
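The kriging step can be sketched for a single prediction location. This illustration assumes an exponential variogram in its practical-range form, uses all samples instead of a search neighborhood, and solves the ordinary kriging system with a small Gaussian elimination routine. The sample values and the nugget, sill, and range parameters are hypothetical rather than fitted.

```python
import math

def exp_variogram(h, nugget, sill, rng):
    """Exponential semi-variogram; gamma(0) is 0 by definition."""
    if h == 0.0:
        return 0.0
    return nugget + (sill - nugget) * (1.0 - math.exp(-3.0 * h / rng))

def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def ordinary_kriging(samples, x0, y0, nugget, sill, rng):
    """Return (prediction, kriging variance) at (x0, y0)."""
    n = len(samples)
    gamma = lambda h: exp_variogram(h, nugget, sill, rng)
    # Variogram matrix bordered by ones for the unbiasedness constraint.
    a = [[gamma(math.hypot(xi - xj, yi - yj)) for xj, yj, _ in samples] + [1.0]
         for xi, yi, _ in samples]
    a.append([1.0] * n + [0.0])
    g0 = [gamma(math.hypot(x - x0, y - y0)) for x, y, _ in samples]
    solution = solve(a, g0 + [1.0])
    lam, mu = solution[:n], solution[n]  # weights and Lagrange multiplier
    prediction = sum(l * z for l, (_, _, z) in zip(lam, samples))
    variance = sum(l * g for l, g in zip(lam, g0)) + mu
    return prediction, variance

# Hypothetical samples: (x metres, y metres, litres of WCO per week).
ok_samples = [(0.0, 0.0, 10.0), (100.0, 0.0, 30.0), (0.0, 100.0, 20.0)]
pred_mid, var_mid = ordinary_kriging(ok_samples, 50.0, 50.0, 2.0, 50.0, 300.0)
pred_at, var_at = ordinary_kriging(ok_samples, 0.0, 0.0, 2.0, 50.0, 300.0)
```

Evaluating the function on every cell of a grid yields the two rasters described above: at a sampled location the prediction reproduces the observation and the variance drops to zero, and the variance grows with distance from the samples.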

Protocol 3.4: Model Validation & Comparison

Objective: To quantitatively assess and compare the performance of IDW and Kriging models.

- Cross-Validation: Use Leave-One-Out Cross-Validation (LOOCV) for both interpolation methods.

- Metric Calculation: For each model, calculate:

- Mean Error (ME): Values close to 0 indicate lack of bias.

- Root Mean Square Error (RMSE): Lower values indicate better predictive accuracy.

- Standardized RMSE (for Kriging): Should be close to 1 if the variogram is correctly specified.

- Selection: Compare RMSE values. The model with the lowest RMSE is typically selected for final prediction, though the kriging variance map may justify its use despite marginally higher RMSE.
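The validation loop can be sketched for IDW as follows; the same pattern applies to kriging by swapping the predictor. The five sample points are hypothetical, and the resulting error values are illustrative only.

```python
import math

def idw(samples, x0, y0, power):
    """Inverse Distance Weighting estimate from (x, y, z) samples."""
    num = den = 0.0
    for x, y, z in samples:
        d = math.hypot(x - x0, y - y0)
        if d == 0.0:
            return z
        w = 1.0 / d ** power
        num += w * z
        den += w
    return num / den

def loocv(samples, power):
    """Leave-one-out cross-validation; returns (mean error, RMSE)."""
    errors = []
    for i, (x, y, z) in enumerate(samples):
        rest = samples[:i] + samples[i + 1:]  # withhold sample i
        errors.append(idw(rest, x, y, power) - z)
    mean_error = sum(errors) / len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / len(errors))
    return mean_error, rmse

# Hypothetical samples: (x metres, y metres, litres of WCO per week).
cv_samples = [
    (0.0, 0.0, 12.0), (100.0, 0.0, 18.0), (0.0, 100.0, 15.0),
    (100.0, 100.0, 30.0), (50.0, 50.0, 20.0),
]
cv_results = {p: loocv(cv_samples, p) for p in (1, 2, 3)}
best_power = min(cv_results, key=lambda p: cv_results[p][1])  # lowest RMSE
```

Selecting `best_power` by lowest RMSE follows the rule stated in the Selection step; with real data the mean error should also be inspected before accepting a model.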

Table 2: Example Cross-Validation Results for WCO Interpolation (Hypothetical Data)

| Interpolation Method | Power / Model | Mean Error (ME) | Root Mean Square Error (RMSE) | Standardized RMSE |

|---|---|---|---|---|

| IDW | p = 1 | 0.12 L/week | 8.45 L/week | N/A |

| IDW | p = 2 | 0.08 L/week | 7.98 L/week | N/A |

| IDW | p = 3 | 0.05 L/week | 8.21 L/week | N/A |

| Ordinary Kriging | Exponential Model | 0.01 L/week | 7.65 L/week | 1.02 |

Figure: Workflow for WCO Estimation Using Spatial Interpolation

Figure: Ordinary Kriging Process for WCO Mapping

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Digital Tools for WCO Spatial Analysis Research

| Item / Solution | Category | Function in Research |

|---|---|---|

| QGIS with SAGA, GRASS | GIS Software | Open-source platform for executing IDW, variogram analysis, and kriging interpolation. |

| ArcGIS Pro Geostatistical Analyst | GIS Software (Proprietary) | Industry-standard suite offering advanced guided geostatistical workflows and models. |

| R with `gstat` & `sp` packages | Statistical Programming | Provides unparalleled flexibility for custom variogram modeling, cross-validation, and scripting repetitive analyses. |

| High-Precision GPS Receiver | Field Equipment | Enables accurate georeferencing of WCO sample collection points, critical for reliable interpolation. |

| Semi-Variogram Model Library (Spherical, Exponential, Gaussian) | Statistical Models | Mathematical functions used to formally describe the spatial structure and autocorrelation of WCO generation data. |

| LOOCV (Leave-One-Out Cross-Validation) Script | Validation Algorithm | Standard method for assessing interpolation model accuracy by iteratively predicting at known, withheld points. |

Application Notes: Temporal Dynamics in WCO Collection Systems

Effective management of Waste Cooking Oil (WCO) collection requires moving beyond static spatial analysis to incorporate temporal patterns. Seasonal variations in consumption (e.g., holiday cooking peaks) and weekly cycles (commercial vs. residential activity) directly impact generation rates. Integrating these temporal dynamics through time-series analysis allows for predictive, efficient scheduling that reduces operational costs and improves collection coverage. This is critical for ensuring a reliable feedstock supply for downstream applications, including biodiesel production and, notably, the biochemical synthesis of valuable compounds relevant to pharmaceutical development.

Table 1: Key Temporal Variables Impacting WCO Generation

| Variable Category | Specific Metric | Data Source | Potential Impact on Collection Scheduling |

|---|---|---|---|

| Seasonal | Monthly Avg. Temperature | NOAA, Local Weather APIs | Higher generation in cooler months; biodiesel quality concerns in heat. |

| Seasonal | Holiday/Festival Calendar | Cultural/Public Data | 30-50% spikes in residential WCO 1-2 weeks post-major holidays. |

| Weekly | Day-of-Week Commercial Activity | POS Data, Traffic Counts | Restaurant peaks on weekends dictate high-priority commercial routes. |

| Weekly | Residential Collection Day | Municipal Records | Alignment with existing solid waste/recycling schedules improves participation. |

| Cyclical | Biodiesel Market Price | Commodity Markets | Influences economic viability and urgency of collection. |

| Spatio-Temporal | Local Event Schedules | City Event Calendars | Temporary, hyper-local spikes in generation (e.g., fairs, markets). |

Protocols for Time-Series Analysis and Integration

Protocol 2.1: Data Acquisition & Preprocessing for Temporal Analysis

Objective: To compile and clean a unified spatio-temporal dataset for WCO prediction.

- Data Collection: Integrate historical WCO collection weight data (min. 2 years) from IoT bin sensors or municipal logs with temporal covariates (Table 1).

- Geocoding: Spatially join each collection point to its corresponding census tract or neighborhood polygon using GIS (e.g., ArcGIS Pro, QGIS).

- Aggregation: Aggregate daily collection volumes to weekly intervals to mitigate daily noise and align with typical planning cycles.

- Decomposition: Apply classical (e.g., Seasonal-Trend decomposition using Loess - STL) or machine learning methods to isolate trend, seasonal (annual, weekly), and residual components for each significant spatial zone.

Protocol 2.2: Predictive Modeling for Collection Scheduling

Objective: To forecast WCO accumulation rates for optimized route scheduling.

- Model Selection: Implement a comparative framework of models:

- SARIMAX (Seasonal ARIMA with eXogenous variables): For capturing linear temporal dependencies and seasonal effects.

- Prophet (Facebook): For handling strong seasonal patterns with multiple periods (yearly, weekly) and holiday effects.

- Spatio-Temporal Graph Neural Network (GNN): Advanced method for capturing dependencies between neighboring collection zones.

- Training/Validation: Split data temporally, without shuffling; use the earliest 80% for training and the most recent 20% for out-of-time validation. Use Mean Absolute Percentage Error (MAPE) and Root Mean Squared Error (RMSE) as key metrics.

- Integration into GIS: Export model forecasts (e.g., predicted kg/week per collection point) as a time-stamped attribute layer. Use this within network analysis tools (e.g., ArcGIS Network Analyst) to generate dynamic, efficient collection routes that prioritize areas nearing predicted capacity.
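The training/validation step can be illustrated with a small sketch. It substitutes a seasonal-naive baseline for the SARIMAX, Prophet, and GNN models listed above, since a baseline is what those models would be compared against. The weekly volumes and the four-week period are hypothetical.

```python
import math

def temporal_split(series, train_frac=0.8):
    """Chronological split: earliest observations train, latest validate."""
    cut = int(round(len(series) * train_frac))
    return series[:cut], series[cut:]

def seasonal_naive(train, horizon, period):
    """Forecast each step with the value observed one seasonal cycle earlier."""
    last_cycle = train[-period:]
    return [last_cycle[h % period] for h in range(horizon)]

def mape(actual, predicted):
    """Mean Absolute Percentage Error (actual values must be non-zero)."""
    return 100.0 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)

def rmse(actual, predicted):
    """Root Mean Squared Error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))

# Hypothetical weekly collection volumes (kg) for one zone, 20 weeks.
weekly_kg = [100.0, 120.0, 90.0, 110.0] * 4 + [105.0, 120.0, 90.0, 110.0]
train, test = temporal_split(weekly_kg)
forecast = seasonal_naive(train, horizon=len(test), period=4)
forecast_mape = mape(test, forecast)
forecast_rmse = rmse(test, forecast)
```

A candidate model is worth deploying only if its out-of-time MAPE and RMSE improve on this baseline; the per-zone forecasts can then be exported as the time-stamped attribute layer described in the integration step.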


The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Spatio-Temporal WCO Research

| Item / Solution | Function in Research | Example / Specification |

|---|---|---|

| GIS Software with Network Analyst | Spatial analysis, geocoding, and dynamic route optimization based on temporal forecasts. | ArcGIS Pro, QGIS with OR-Tools plugin. |

| Time-Series Analysis Library | Decomposition, modeling, and forecasting of temporal patterns in WCO data. | Python: statsmodels (SARIMAX), prophet, pytorch-geometric (for GNN). |

| IoT Sensor & Telemetry Kit | Real-time data collection on WCO bin fill-levels, enabling model validation. | Ultrasonic/weight sensors with LoRaWAN or cellular connectivity. |

| Spatial Database with Time Support | Storage and querying of timestamped geographic data (WCO collections, routes). | PostgreSQL with PostGIS and TimescaleDB extension. |

| Data Visualization Platform | Creating dashboards to communicate temporal trends and forecast results to stakeholders. | Tableau, Power BI, or Python Dash/Plotly. |

| Statistical Analysis Software | For rigorous validation of model predictions and hypothesis testing on temporal effects. | R, Python (scikit-learn, scipy). |

This document details the application of open-source geospatial tools to design an optimized pilot collection zone for waste cooking oil (WCO). This work is a core component of a broader thesis investigating GIS and spatial analysis for biorefinery feedstock logistics, with direct relevance to bio-based drug development. Efficient WCO collection is a critical first step in securing sustainable lipid feedstocks for enzymatic conversion into high-value biochemicals and pharmaceutical intermediates.

Data Acquisition & Preprocessing Protocol

Protocol 2.1: Sourcing and Standardizing Spatial Base Data

Objective: To compile and harmonize foundational geospatial datasets for the study area.

- Administrative Boundaries: Download polygon vector data for city/region wards, zip codes, or census tracts from official portals (e.g., city open data platform). Load into QGIS.

- Road Network: Obtain line vector data for streets, classifying by type (primary, secondary, residential). Sources include OpenStreetMap (via QuickOSM plugin) or regional transport authorities.

- Land Use Zoning: Acquire polygon data designating commercial, residential, industrial, and mixed-use zones from municipal planning departments.

- Standardization: Reproject all layers to a common, locally appropriate projected coordinate system (e.g., UTM zone). Ensure consistent attribute table structures. Create a new PostGIS database and import all layers using the DB Manager tool.

Table 1: Estimated WCO Generation by Establishment Type

| Establishment Type | Avg. Weekly WCO Generation (Liters) | Data Source (Example) | Key Assumption |

|---|---|---|---|

| Large Restaurant/Franchise | 80 - 160 | Nat. Restaurant Assoc. Survey (2023) | 200-400 meals/day |

| Medium Restaurant | 40 - 80 | City Health Dept. Records | 100-200 meals/day |

| Hotel/Resort Kitchen | 120 - 250 | Hospitality Industry Report (2024) | 300+ guests/day |

| Hospital Cafeteria | 60 - 120 | Healthcare Facility Mgmt. Study | 150-300 patients/staff/day |

| University Dining Hall | 100 - 200 | Campus Sustainability Audits | 500+ students/day |

| Food Processing Plant | 500 - 2000 | Industry Publication (Food Proc., 2024) | Scale-dependent |
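The ranges in Table 1 can be rolled up into a zone-level estimate by multiplying each range by the number of establishments of that type. The sketch below is a minimal illustration of that arithmetic; the dictionary keys and the example counts are hypothetical and not taken from any survey.

```python
# Weekly WCO ranges (litres) copied from Table 1; the key names are illustrative.
WEEKLY_LITRES = {
    "large_restaurant": (80, 160),
    "medium_restaurant": (40, 80),
    "hotel_kitchen": (120, 250),
    "hospital_cafeteria": (60, 120),
    "university_dining_hall": (100, 200),
    "food_processing_plant": (500, 2000),
}

def zone_weekly_range(counts):
    """Return (low, high) weekly litres for a zone given establishment counts."""
    low = sum(WEEKLY_LITRES[kind][0] * n for kind, n in counts.items())
    high = sum(WEEKLY_LITRES[kind][1] * n for kind, n in counts.items())
    return low, high

# Hypothetical zone: 3 large restaurants, 10 medium restaurants, 2 hotel kitchens.
example_counts = {"large_restaurant": 3, "medium_restaurant": 10, "hotel_kitchen": 2}
zone_low, zone_high = zone_weekly_range(example_counts)
print(f"Estimated weekly WCO: {zone_low}-{zone_high} litres")
```

Because the table gives ranges rather than point values, carrying both bounds forward keeps the uncertainty visible in later steps.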

Spatial Analysis & Modeling Methodology

Protocol 3.1: Geocoding and Kernel Density Estimation (KDE)

Objective: To map the probable density of WCO generation.

- Compile Address List: Create a CSV of potential WCO sources (restaurants, hotels, etc.) from business directories and health permits.

- Geocode: Use the QGIS MMQGIS or GeoCoding plugin to convert addresses to point geometries. Import points into PostGIS.

- Run KDE: Execute a PostGIS/PostgreSQL kernel density script, adjusting the bandwidth parameter (bandwidth) based on urban density (e.g., 500 meters).
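To make the role of the bandwidth concrete, the following is a minimal pure-Python sketch of a weighted kernel density estimate. It is not the PostGIS script referred to in the protocol; it assumes a quartic (biweight) kernel, projected coordinates in metres, and made-up source points weighted by estimated weekly litres.

```python
import math

def quartic_kde(points, grid_cells, bandwidth):
    """Weighted kernel density at each grid cell centre (quartic kernel).

    points:     list of (x, y, weight) in projected metres
    grid_cells: list of (x, y) cell centres in the same coordinate system
    bandwidth:  search radius in metres
    """
    norm = 3.0 / (math.pi * bandwidth ** 2)  # makes the 2-D kernel integrate to 1
    densities = []
    for gx, gy in grid_cells:
        total = 0.0
        for px, py, weight in points:
            d2 = (gx - px) ** 2 + (gy - py) ** 2
            if d2 < bandwidth ** 2:  # points beyond the bandwidth contribute nothing
                total += weight * norm * (1.0 - d2 / bandwidth ** 2) ** 2
        densities.append(total)
    return densities

# Hypothetical sources: (x, y, estimated weekly litres).
sources = [(0.0, 0.0, 100.0), (300.0, 0.0, 50.0), (2000.0, 2000.0, 80.0)]
cells = [(0.0, 0.0), (150.0, 0.0), (1000.0, 1000.0)]
densities = quartic_kde(sources, cells, bandwidth=500.0)
for cell, value in zip(cells, densities):
    print(cell, f"{value * 1e6:.1f} litres/week per km^2")
```

A larger bandwidth smooths the surface and merges nearby clusters, while a smaller one isolates individual generators, which is why the protocol ties the value to urban density.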

Protocol 3.2: Network Analysis for Accessibility Scoring

Objective: To calculate travel time from collection points to candidate depot sites.

- Prepare Network: Use the pgRouting extension in PostGIS. Topologically correct the road network (pgr_nodeNetwork) and assign travel costs based on road class.

- Define Candidate Depots: Create a point layer of 3-5 potential depot/collection vehicle base locations.

- Calculate Service Areas: Run pgr_drivingDistance to create 5, 10, and 15-minute service areas from each depot.

- Score Grid Cells: Overlay a 500m x 500m grid. Assign each cell an accessibility score (e.g., 1-5) based on the number of depots it falls within for each time threshold.
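The service-area step can be illustrated outside the database with a small Dijkstra search, which is the same family of algorithm that driving-distance queries rely on. The sketch below is an assumption-laden stand-in, not pgRouting itself: the graph, node names, and travel times are invented, and it only counts how many depots reach each node within each threshold, leaving the mapping to a 1-5 score open.

```python
import heapq

def driving_time(graph, source, max_minutes):
    """Dijkstra search returning {node: minutes} for nodes reachable within max_minutes."""
    best = {source: 0.0}
    queue = [(0.0, source)]
    while queue:
        cost, node = heapq.heappop(queue)
        if cost > best.get(node, float("inf")):
            continue  # stale queue entry
        for neighbour, minutes in graph.get(node, []):
            new_cost = cost + minutes
            if new_cost <= max_minutes and new_cost < best.get(neighbour, float("inf")):
                best[neighbour] = new_cost
                heapq.heappush(queue, (new_cost, neighbour))
    return best

def accessibility_counts(graph, depots, thresholds=(5, 10, 15)):
    """For each node, count the depots that reach it within each time threshold."""
    counts = {}
    for depot in depots:
        reach = driving_time(graph, depot, max(thresholds))
        for node, minutes in reach.items():
            per_node = counts.setdefault(node, {t: 0 for t in thresholds})
            for t in thresholds:
                if minutes <= t:
                    per_node[t] += 1
    return counts

# Hypothetical undirected road graph: node -> [(neighbour, travel minutes)].
roads = {
    "A": [("B", 4), ("E", 12)],
    "B": [("A", 4), ("C", 5)],
    "C": [("B", 5), ("D", 7)],
    "D": [("C", 7)],
    "E": [("A", 12)],
}
counts = accessibility_counts(roads, depots=["A", "D"])
print(counts["C"])
```

In practice each grid cell would inherit the counts of its nearest network node before being reclassified to the accessibility scale.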

Suitability Analysis & Zone Delineation

Protocol 3.3: Multi-Criteria Decision Analysis (MCDA)

Objective: To integrate multiple spatial factors to identify optimal collection zones.

- Define Criteria & Weights: Establish the criteria matrix via expert survey (e.g., Analytic Hierarchy Process).

- Criteria: WCO Generation Density (Weight: 0.40), Road Accessibility (0.25), Proximity to Depot (0.20), Land Use Compatibility (0.15).

- Reclassify & Normalize Rasters: Convert all vector layers (density, accessibility, etc.) to rasters. Reclassify values to a common scale (1-5). Use QGIS Raster Calculator for linear normalization.

- Weighted Overlay: Execute the following calculation in the QGIS Raster Calculator:

("wco_density_norm" * 0.40) + ("access_score_norm" * 0.25) + ("depot_prox_norm" * 0.20) + ("landuse_suit_norm" * 0.15)

- Delineate Pilot Zone: Select the contiguous area with the top 15% of suitability scores that is adjacent to a chosen depot. Smooth boundaries using the Generalize tool.
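The weighted overlay and the top-15% selection can be checked by hand on a tiny grid. The sketch below mirrors the Raster Calculator expression using the weights from Protocol 3.3; the 2 x 3 rasters are invented, and the contiguity and depot-adjacency tests are deliberately left out.

```python
import math

# Criteria weights from Protocol 3.3; the layer values below are made up (scale 1-5).
WEIGHTS = {"wco_density": 0.40, "access_score": 0.25, "depot_prox": 0.20, "landuse_suit": 0.15}

def weighted_overlay(layers, weights):
    """Cell-by-cell weighted sum of equally sized grids (lists of rows)."""
    names = list(weights)
    rows, cols = len(layers[names[0]]), len(layers[names[0]][0])
    return [[sum(weights[n] * layers[n][r][c] for n in names) for c in range(cols)]
            for r in range(rows)]

def top_fraction_mask(grid, fraction=0.15):
    """Boolean mask of cells whose score falls in the top `fraction` of all cells."""
    values = sorted((v for row in grid for v in row), reverse=True)
    keep = max(1, math.ceil(fraction * len(values)))
    threshold = values[keep - 1]
    return [[v >= threshold for v in row] for row in grid]

layers = {
    "wco_density": [[5, 4, 1], [3, 2, 1]],
    "access_score": [[4, 4, 2], [3, 3, 1]],
    "depot_prox": [[5, 3, 2], [4, 2, 1]],
    "landuse_suit": [[3, 5, 1], [3, 2, 2]],
}
suitability = weighted_overlay(layers, WEIGHTS)
pilot_mask = top_fraction_mask(suitability, fraction=0.15)
print(suitability)
print(pilot_mask)
```

Because the weights sum to 1.0 and every input is on the 1-5 scale, the output stays on the same scale, which makes the suitability surface easy to interpret.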

Diagram: Pilot Zone Design Workflow

Title: GIS Workflow for WCO Collection Zone Design

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential GIS & Data Tools for Spatial Feedstock Analysis

| Item / Solution | Function / Relevance | Example / Note |

|---|---|---|

| QGIS (v3.34+) | Open-source desktop GIS for data visualization, management, and core spatial analysis. | Primary interface for all vector/raster operations and cartography. |

| PostGIS (v3.4+) | Spatial database extender for PostgreSQL. Enables complex queries, network analysis, and central data storage. | Essential for handling large datasets and running pgRouting. |

| pgRouting Extension | Adds routing functionality to PostGIS for calculating shortest paths, service areas, and travel costs. | Core engine for accessibility modeling in network analysis. |

| QuickOSM / OSMnx | Tools for downloading and importing OpenStreetMap data (road networks, points of interest). | Key source for current, global base map data. |

| GRASS GIS Integration | Provides advanced raster (e.g., r.kernel) and spatial modules within QGIS Processing Toolbox. | Used for robust kernel density calculations. |

| MMQGIS Plugin | QGIS plugin for geocoding, grid creation, and geometry manipulation. | Simplifies conversion of address lists to mappable points. |

| AHP Software (e.g., ahpsurvey in R) | Supports Analytic Hierarchy Process for determining criteria weights via pairwise comparisons. | Quantifies expert judgment for MCDA model. |

| Geopandas (Python Library) | Enables scripting of spatial data manipulations and automations in a Python environment. | For custom analysis pipelines and reproducibility. |
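For readers without dedicated AHP software, the pairwise-comparison step behind the MCDA weights can be approximated in a few lines. The sketch below uses the row geometric-mean method and Saaty's random index to estimate a consistency ratio; the comparison matrix is hypothetical and does not reproduce the weights quoted in Protocol 3.3.

```python
import math

# Saaty's random consistency index for small matrices.
RANDOM_INDEX = {3: 0.58, 4: 0.90, 5: 1.12}

def ahp_weights(matrix):
    """Approximate AHP priority weights with the row geometric-mean method."""
    n = len(matrix)
    geo_means = [math.prod(row) ** (1.0 / n) for row in matrix]
    total = sum(geo_means)
    return [g / total for g in geo_means]

def consistency_ratio(matrix, weights):
    """Consistency ratio; values below about 0.10 are conventionally accepted."""
    n = len(matrix)
    weighted_sums = [sum(matrix[i][j] * weights[j] for j in range(n)) for i in range(n)]
    lambda_max = sum(ws / w for ws, w in zip(weighted_sums, weights)) / n
    consistency_index = (lambda_max - n) / (n - 1)
    return consistency_index / RANDOM_INDEX[n]

# Hypothetical expert judgments. Row/column order:
# WCO density, road accessibility, depot proximity, land use compatibility.
pairwise = [
    [1.0, 2.0, 2.0, 3.0],
    [1 / 2, 1.0, 1.0, 2.0],
    [1 / 2, 1.0, 1.0, 1.0],
    [1 / 3, 1 / 2, 1.0, 1.0],
]
weights = ahp_weights(pairwise)
cr = consistency_ratio(pairwise, weights)
print([round(w, 3) for w in weights], round(cr, 3))
```

A consistency ratio above the conventional 0.10 limit would suggest revisiting the expert judgments before using the weights in the overlay.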

Validation & Reporting Protocol

Protocol 4.1: Field Validation & Efficiency Simulation

Objective: To ground-truth the model and estimate collection route efficiency.

- Stratified Field Survey: Randomly select 15-20 establishments within the proposed zone and 5-10 outside it for verification. Record actual WCO storage capacity and willingness to participate.

- Route Optimization Simulation: Use the VRP (Vehicle Routing Problem) solver in QGIS with pgRouting. Input:

- Depot location.

- Verified collection points with estimated volumes.

- Vehicle capacity (e.g., 1000L).

- Road network travel times.

- Calculate Metrics: Determine total simulated route distance/time, fuel consumption, and liters collected per vehicle-hour.
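The simulation inputs and metrics above can be prototyped before a full VRP solver is configured. The sketch below swaps in a greedy nearest-neighbour heuristic with a capacity limit, which is much simpler than a real VRP solver and generally not optimal. Straight-line distances, the 25 km/h average speed, the 10-minute service time, and the stop data are all assumptions.

```python
import math

def greedy_routes(depot, stops, capacity_l):
    """Build routes by always visiting the nearest stop that still fits in the vehicle.

    stops: dict of name -> (x, y, litres), coordinates in metres.
    """
    remaining = dict(stops)
    routes = []
    while remaining:
        load, position, route = 0.0, depot, []
        while True:
            feasible = [(math.dist(position, (x, y)), name)
                        for name, (x, y, litres) in remaining.items()
                        if load + litres <= capacity_l]
            if not feasible:
                break
            _, name = min(feasible)
            x, y, litres = remaining.pop(name)
            route.append(name)
            load += litres
            position = (x, y)
        if not route:
            raise ValueError("a single stop exceeds the vehicle capacity")
        routes.append(route)
    return routes

def route_metrics(depot, stops, routes, speed_kmh=25.0, service_min=10.0):
    """Total distance, litres collected, and litres per vehicle-hour (assumed speeds)."""
    total_km, total_l, n_stops = 0.0, 0.0, 0
    for route in routes:
        path = [depot] + [stops[name][:2] for name in route] + [depot]
        total_km += sum(math.dist(a, b) for a, b in zip(path, path[1:])) / 1000.0
        total_l += sum(stops[name][2] for name in route)
        n_stops += len(route)
    hours = total_km / speed_kmh + n_stops * service_min / 60.0
    return {"km": total_km, "litres": total_l, "litres_per_vehicle_hour": total_l / hours}

# Hypothetical depot and verified collection points with estimated volumes.
depot = (0.0, 0.0)
stops = {"A": (1000.0, 0.0, 400.0), "B": (2000.0, 0.0, 500.0), "C": (0.0, 3000.0, 300.0)}
routes = greedy_routes(depot, stops, capacity_l=1000.0)
metrics = route_metrics(depot, stops, routes)
print(routes, metrics)
```

Replacing the straight-line distances with network travel times from the road layer would bring the figures closer to what a solver on the real network reports.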

Diagram: Thesis Context & Research Integration

Title: Integration of GIS Case Study into Broader Research

Overcoming Practical Hurdles: Data Gaps, Model Refinement, and System Calibration

Within the thesis on GIS and spatial analysis for waste cooking oil (WCO) collection, data quality is paramount for modeling collection routes, predicting yields, and integrating biochemical data for drug development precursors. Poor data quality directly compromises spatial analytics and subsequent laboratory experimentation.

Application Notes:

- Incomplete Records: Missing WCO generator data (e.g., restaurants, households) leads to biased spatial coverage and inaccurate potential yield estimates.

- Positional Accuracy: Geocoding errors in generator locations affect route optimization, increasing logistical costs and invalidating proximity-based analysis.

- Attribute Uncertainty: Incorrect or imprecise attributes (e.g., WCO volume, fatty acid profile, contamination level) hinder reliable feedstock characterization for biodiesel or pharmaceutical lipid synthesis.

Table 1: Common Data Quality Issues in WCO Collection GIS Databases

| Issue Category | Typical Manifestation in WCO Research | Estimated Impact on Collection Efficiency | Impact on Biochemical Analysis |

|---|---|---|---|

| Incomplete Records | 30-40% missing contact/volume data | Route planning inefficiency: 15-25% increase in fuel consumption | Incomplete feedstock profiling delays lipidomic studies |

| Positional Accuracy | Average geocoding error: 50-100m in urban areas | Missed collections; >20% error in nearest-neighbor analysis | Incorrect spatial correlation with socio-economic data |

| Attribute Uncertainty | ±20% error in reported weekly WCO volume | Yield prediction error: ±15% | Fatty acid chain length uncertainty: ±2 carbons affects synthesis planning |

Table 2: Recommended Data Quality Tolerance Thresholds for WCO Research

| Data Quality Parameter | Minimum Acceptable Standard for Route Planning | Minimum Acceptable Standard for Biochemical Modeling |

|---|---|---|

| Record Completeness | >85% for key generators | >95% for sampled generators' attribute data |

| Positional Accuracy (RMSE) | <25m | <10m (for precise environmental correlation) |

| Attribute Precision (WCO Volume) | Confidence Interval ±10% | Confidence Interval ±5% |

| Fatty Acid Profile Certainty | N/A | >98% confidence in major lipid species identification |

Experimental Protocols

Protocol 3.1: Completeness Assessment and Imputation for WCO Generator Databases

Objective: To identify, quantify, and address incomplete records in a spatial dataset of WCO generators.

- Data Audit: Inventory all fields for each record. Flag records missing critical attributes: location address, business type, estimated WCO output.

- Gap Analysis: Calculate completeness percentages per field and record. Use spatial autocorrelation (Moran's I) to check if missingness is clustered.

- Imputation:

- Spatial Imputation: For missing estimated WCO volume, use k-nearest neighbors (k=3) based on business type and floor area of proximate, complete records.

- Attribute Imputation: For missing business type, use NAICS code cross-walk or street-level imagery verification via APIs.

- Validation: Reserve 10% of complete records as a test set. Apply imputation and calculate RMSE for continuous fields or accuracy for categorical fields.
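The imputation and validation steps can be sketched as follows. This is a simplified illustration rather than the full protocol: neighbours are chosen by business type and spatial proximity only (floor area is omitted), the hold-out is a deterministic every-tenth-record split rather than a random 10% sample, and all records are invented.

```python
import math

def knn_impute_volume(target, complete_records, k=3):
    """Mean weekly volume of the k nearest complete records of the same business type."""
    same_type = [r for r in complete_records if r["type"] == target["type"]]
    candidates = same_type or complete_records  # fall back if no record shares the type
    nearest = sorted(candidates, key=lambda r: math.dist(r["xy"], target["xy"]))[:k]
    return sum(r["litres"] for r in nearest) / len(nearest)

def holdout_rmse(records, k=3, test_every=10):
    """Hold out every `test_every`-th record, impute it from the rest, return the RMSE."""
    test = records[::test_every]
    train = [r for i, r in enumerate(records) if i % test_every != 0]
    squared = [(knn_impute_volume(t, train, k) - t["litres"]) ** 2 for t in test]
    return math.sqrt(sum(squared) / len(squared))

# Toy dataset of complete records (coordinates in metres, litres per week).
records = [
    {"type": "restaurant", "xy": (0.0, 0.0), "litres": 60.0},
    {"type": "restaurant", "xy": (100.0, 0.0), "litres": 50.0},
    {"type": "restaurant", "xy": (0.0, 100.0), "litres": 70.0},
    {"type": "restaurant", "xy": (5000.0, 5000.0), "litres": 150.0},
    {"type": "hotel", "xy": (50.0, 50.0), "litres": 200.0},
]
incomplete = {"type": "restaurant", "xy": (40.0, 40.0)}
imputed = knn_impute_volume(incomplete, records, k=3)
rmse = holdout_rmse(records, k=3)
print(imputed, rmse)
```

The toy hold-out shows how a distant neighbour can inflate the error, which is a reason to cap the search radius when generators are sparse.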

Protocol 3.2: Quantifying and Correcting Positional Accuracy

Objective: To assess and improve the geometric accuracy of WCO generator point locations.

- Error Ground Truthing: Select a stratified random sample (n≥50) of generator points. Obtain ground truth coordinates using a handheld GNSS receiver (≈1-3m accuracy) at the building entrance.

- Error Calculation: Compute the Euclidean distance between GIS coordinates and ground truth for each sample point. Calculate Root Mean Square Error (RMSE).

- Error Modeling & Correction: If a systematic shift is detected, derive an affine transformation model from the sample points. Apply to the entire dataset. For random error > tolerance, initiate re-geocoding using a parcel-level service.
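The error calculation and a first-pass correction can be sketched as below. For brevity the correction is a translation-only shift (the mean offset), which is the simplest special case of an affine transformation; a full affine fit would estimate six parameters by least squares. The sample coordinates are invented.

```python
import math

def positional_rmse(gis_pts, truth_pts):
    """Root mean square of the Euclidean distances between paired points."""
    squared = [math.dist(g, t) ** 2 for g, t in zip(gis_pts, truth_pts)]
    return math.sqrt(sum(squared) / len(squared))

def mean_shift(gis_pts, truth_pts):
    """Average (dx, dy) that moves GIS points onto their ground truth positions."""
    n = len(gis_pts)
    dx = sum(t[0] - g[0] for g, t in zip(gis_pts, truth_pts)) / n
    dy = sum(t[1] - g[1] for g, t in zip(gis_pts, truth_pts)) / n
    return dx, dy

def apply_shift(points, shift):
    """Translate every point by the same (dx, dy)."""
    dx, dy = shift
    return [(x + dx, y + dy) for x, y in points]

# Hypothetical sample: geocoded points sit roughly 10 m north-east of the surveyed entrances.
truth = [(0.0, 0.0), (100.0, 0.0), (200.0, 50.0)]
gis = [(10.0, 11.0), (111.0, 10.0), (209.0, 59.0)]

rmse_before = positional_rmse(gis, truth)
shift = mean_shift(gis, truth)
rmse_after = positional_rmse(apply_shift(gis, shift), truth)
print(f"RMSE before: {rmse_before:.2f} m, shift: {shift}, RMSE after: {rmse_after:.2f} m")
```

If the residual RMSE after removing the shift still exceeds the tolerance, the remaining error is largely random and re-geocoding is the more appropriate remedy.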

Protocol 3.3: Propagating Attribute Uncertainty in Spatial Yield Models

Objective: To model how uncertainty in WCO volume attributes affects collection route yield predictions.

- Uncertainty Characterization: For each generator i, treat the reported volume (V_i) as the mean of a normal distribution N(V_i, SD_i^2), where the standard deviation SD_i expresses the volume uncertainty (U_i) and is derived from historical data variance or a defined percentage of V_i (e.g., ±15%).

- Monte Carlo Simulation:

- Define a collection route as a sequence of generators.

- For 10,000 iterations, sample a volume value for each generator i from its normal distribution (mean V_i, standard deviation SD_i).

- Sum the sampled volumes to get total route yield per iteration.

- Analysis: Build a probability distribution of total route yields. Calculate the 95% confidence interval. Routes whose 95% confidence interval extends beyond ±20% of the mean yield require field validation of attribute data.
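The Monte Carlo procedure can be sketched with the standard library alone. The example below assumes independent generators, a standard deviation of 15% of each reported volume, and truncation of negative draws at zero; the route volumes are invented, and the interval is taken from empirical percentiles of the simulated totals.

```python
import random
import statistics

def simulate_route_yield(volumes, rel_sd=0.15, iterations=10_000, seed=42):
    """Monte Carlo distribution of total route yield under normal volume uncertainty."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    totals = []
    for _ in range(iterations):
        # Draw one volume per generator; negative draws are truncated to zero (assumption).
        totals.append(sum(max(0.0, rng.gauss(v, rel_sd * v)) for v in volumes))
    totals.sort()
    mean = statistics.fmean(totals)
    lower = totals[int(0.025 * iterations)]
    upper = totals[int(0.975 * iterations) - 1]
    half_width_pct = 100.0 * (upper - lower) / 2.0 / mean
    return {
        "mean": mean,
        "ci95": (lower, upper),
        "half_width_pct": half_width_pct,
        "needs_field_validation": half_width_pct > 20.0,
    }

# Hypothetical route: reported weekly volumes (litres) for five generators.
route_volumes = [120.0, 60.0, 200.0, 80.0, 150.0]
result = simulate_route_yield(route_volumes)
print(result)
```

Because independent errors partly cancel when summed, the relative uncertainty of a route total is usually smaller than that of any single generator, so short routes dominated by one large generator are the most likely to trip the 20% threshold.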

Mandatory Visualizations

Title: Data Quality Assurance Workflow for WCO GIS

Title: Attribute Uncertainty Propagation in Route Yield Modeling

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Addressing WCO GIS Data Quality

| Item/Category | Function in WCO Data Quality Context | Example/Specification |

|---|---|---|

| High-Accuracy GNSS Receiver | Ground truthing positional data of WCO collection points. | Handheld unit with Real-Time Kinematic (RTK) capability, <1m positional accuracy. |

| Geocoding API Service | Converting addresses to coordinates; comparing accuracy between services. | Service offering parcel-level or rooftop geocoding (e.g., Google Maps Platform, HERE Maps). |

| Spatial Database Management System | Storing, querying, and performing spatial operations on WCO data. | PostgreSQL with PostGIS extension. |

| Statistical Software/R Library | Conducting imputation, Monte Carlo simulation, and uncertainty analysis. | R with 'sf', 'gstat', 'mice' packages; Python with 'geopandas', 'scipy'. |

| Field Data Collection App | Validating and updating attributes on-site during pilot collections. | Configurable form app (e.g., Survey123, KoBoToolbox) with offline GPS. |