Navigating Uncertainty in Biofuel LCA: From Stochastic Modeling to Sustainable Development



Abstract

This article provides a comprehensive analysis of Life Cycle Assessment (LCA) under uncertainty for biofuels, tailored for researchers and sustainability professionals. It explores foundational concepts, methodological frameworks, and advanced techniques for uncertainty quantification. The content covers challenges in data variability, model choices, and scenario definition, while reviewing optimization strategies and comparative validation approaches. This guide serves as a critical resource for improving the reliability and decision-support value of biofuel sustainability assessments in research and policy contexts.

Why Uncertainty is Central to Biofuel LCA: Sources, Impacts, and the Imperative for Rigorous Analysis

Within the broader thesis on Life Cycle Assessment (LCA) under uncertainty for biofuels research, a critical step is the rigorous classification of uncertainty sources. This distinction is not merely academic; it dictates the appropriate analytical response. Aleatory uncertainty (or variability) arises from inherent, natural randomness in the system and is irreducible with more data. Epistemic uncertainty stems from a lack of knowledge, imperfect models, or measurement errors and is, in principle, reducible through further research. Mischaracterizing one for the other can lead to flawed policy and R&D decisions.

The following tables synthesize recent data (2023-2024) from literature on uncertainty magnitudes and types across key biofuel life cycle stages.

Table 1: Aleatory Uncertainties (Variability) in Biofuel Feedstock Production

| Source of Variability | Typical Range/Description | Key Influencing Factors |
|---|---|---|
| Crop Yield (e.g., Corn, Switchgrass) | Year-to-year CV*: 15-30% | Climate (precip., temp.), soil heterogeneity, pest outbreaks |
| Soil N₂O Emissions | Emission factor: 0.3-3% of applied N | Soil type, moisture, temperature, microbial activity |
| Soil Carbon Stock Change | -500 to +200 kg C/ha/yr | Prior land use, climate, management practice history |

*CV: coefficient of variation.

Table 2: Epistemic Uncertainties in Conversion and Well-to-Tank Processes

| Process Stage | Uncertainty Type | Estimated Effect on GHG Result (relative) | Primary Cause |
|---|---|---|---|
| Biochemical Conversion (e.g., Enzymatic Hydrolysis) | Model Parameter | ±10-25% | Enzyme kinetics, inhibitor effects, empirical model fit |
| Thermochemical Conversion (e.g., Gasification) | Model Structure | ±15-30% | Assumed reaction pathways, equilibrium vs. kinetic model |
| Co-product Allocation | Methodological Choice | Can shift result >50% | Choice of allocation method (mass, energy, economic, system expansion) |
| Electricity Grid Mix | Temporal/Technological | ±20-40% | Projection of future grid carbon intensity |

Experimental Protocols for Characterizing Uncertainty

Protocol for Characterizing Aleatory Uncertainty in Feedstock Yields

Objective: Quantify spatial and temporal yield variability for a dedicated energy crop. Methodology:

- Site Selection & Design: Establish a stratified random sampling plot network across a target region, covering major soil types and climate zones.

- Longitudinal Monitoring: Harvest and weigh biomass from standardized sub-plots annually for a minimum of 10 years.

- Data Analysis: Calculate summary statistics (mean, variance, CV) for each site and year. Perform spatial and temporal analysis of variance (ANOVA) to partition variability components.

- Distribution Fitting: Fit statistical distributions (e.g., Normal, Log-Normal, Beta) to the aggregated yield data for use in stochastic LCA modeling.
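A minimal sketch of the distribution-fitting step, assuming pooled plot-year yields are available as a NumPy array. It uses SciPy's maximum-likelihood `fit` methods and ranks the candidates by AIC; the AIC criterion, the beta bounds, and the synthetic lognormal data are illustrative assumptions rather than part of the protocol.

```python
import numpy as np
from scipy import stats


def fit_yield_distributions(yields, beta_bounds=None):
    """Fit candidate distributions by maximum likelihood and rank them by AIC (lowest first)."""
    y = np.asarray(yields, dtype=float)
    fits = {
        "normal": (stats.norm, stats.norm.fit(y)),
        # Two-parameter lognormal: location fixed at zero because yields are positive
        "lognormal": (stats.lognorm, stats.lognorm.fit(y, floc=0)),
    }
    if beta_bounds is not None:
        lo, hi = beta_bounds
        # Beta needs finite bounds; the data must lie strictly inside (lo, hi)
        fits["beta"] = (stats.beta, stats.beta.fit(y, floc=lo, fscale=hi - lo))
    ranked = {}
    for name, (dist, params) in fits.items():
        log_lik = np.sum(dist.logpdf(y, *params))
        n_free = 2  # each candidate has two free parameters in this setup
        ranked[name] = {"params": params, "aic": 2 * n_free - 2 * log_lik}
    return dict(sorted(ranked.items(), key=lambda item: item[1]["aic"]))


# Hypothetical pooled plot yields (Mg/ha) standing in for multi-year field data
rng = np.random.default_rng(42)
yields = rng.lognormal(mean=np.log(12.0), sigma=0.25, size=200)
ranked = fit_yield_distributions(yields, beta_bounds=(0.0, 40.0))
for name, info in ranked.items():
    print(f"{name:10s} AIC = {info['aic']:.1f}")
```

Because a beta distribution needs finite bounds, the bounds passed in (here 0 to 40 Mg/ha) are a modeling choice that should be justified from agronomic limits.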

Protocol for Quantifying Epistemic Uncertainty in Conversion Yields

Objective: Reduce parameter uncertainty in a lignocellulosic ethanol fermentation model. Methodology:

- Sensitivity Analysis: Conduct a global sensitivity analysis (e.g., Sobol indices) on the fermentation kinetic model to identify the most influential parameters (e.g., maximum specific growth rate μmax, substrate inhibition constant Ki).

- Targeted Experimentation: Design a series of controlled batch fermentation experiments where suspected key parameters are varied systematically (e.g., substrate concentration, inhibitor levels).

- Bayesian Calibration: Use the experimental data in a Bayesian framework to update prior distributions of the model parameters, yielding posterior distributions that reflect reduced uncertainty.

- Model Validation: Predict outcomes for a new, independent experimental condition using the calibrated model and compare to observed data to validate the reduced uncertainty.
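The Bayesian calibration step can be illustrated with a grid approximation for a single parameter. This sketch assumes a hypothetical exponential-growth model, ln X(t) = ln X0 + μmax·t, with X0 known and Gaussian measurement error on log biomass; the prior, data, and grid are invented for illustration, and multi-parameter models (e.g., including Ki) would normally use MCMC rather than a grid.

```python
import numpy as np
from scipy import stats

# Hypothetical batch data during exponential growth: ln X(t) = ln X0 + mu_max * t
rng = np.random.default_rng(1)
t_h = np.array([0.0, 2.0, 4.0, 6.0, 8.0, 10.0])        # sampling times, h
ln_x0, mu_true, sigma_obs = np.log(0.5), 0.30, 0.05    # X0 assumed known; sigma_obs = SD of ln X error
ln_x_obs = ln_x0 + mu_true * t_h + rng.normal(0.0, sigma_obs, t_h.size)

# Grid of candidate mu_max values (1/h)
mu_grid = np.linspace(0.0, 1.0, 2001)
d_mu = mu_grid[1] - mu_grid[0]

# Prior belief before the experiments (hypothetical Normal(0.4, 0.1))
PRIOR_MEAN, PRIOR_SD = 0.4, 0.1
log_prior = stats.norm.logpdf(mu_grid, loc=PRIOR_MEAN, scale=PRIOR_SD)

# Gaussian log-likelihood of all observations for each candidate mu_max
pred = ln_x0 + np.outer(mu_grid, t_h)                  # shape (grid points, sampling times)
log_lik = stats.norm.logpdf(ln_x_obs, loc=pred, scale=sigma_obs).sum(axis=1)

# Posterior on the grid: prior x likelihood, normalized to integrate to 1
log_post = log_prior + log_lik
post = np.exp(log_post - log_post.max())               # subtract the max to avoid underflow
post /= post.sum() * d_mu

post_mean = np.sum(mu_grid * post) * d_mu
post_sd = np.sqrt(np.sum((mu_grid - post_mean) ** 2 * post) * d_mu)
print(f"posterior mu_max = {post_mean:.3f} +/- {post_sd:.3f} 1/h (prior SD {PRIOR_SD})")
```

The narrowing from the prior SD to the posterior SD is the quantitative "reduced uncertainty" that the validation step then tests against independent data.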

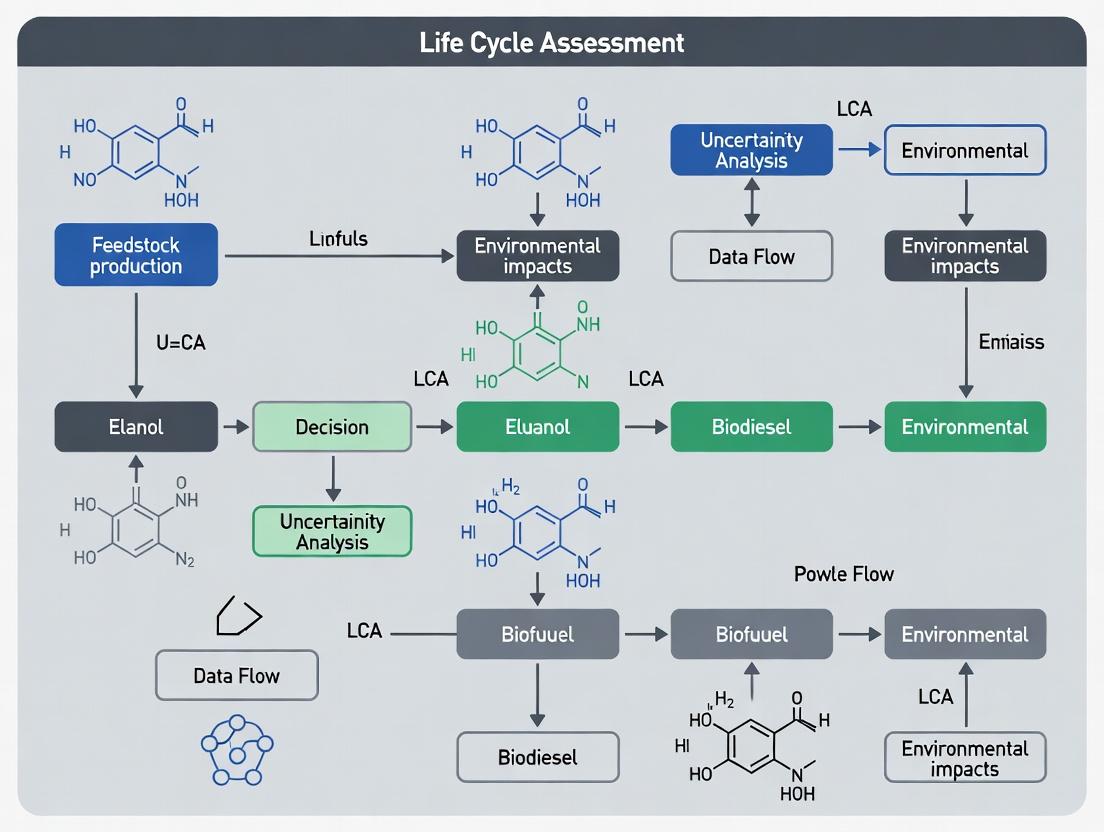

Visualizing Uncertainty Distinctions and Workflows

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for Uncertainty-Directed Biofuel Research

| Item | Function in Uncertainty Analysis | Example Product/Catalog |
|---|---|---|
| Stable Isotope Tracers (e.g., ¹³C-Glucose, ¹⁵N-Urea) | Precisely track carbon/nitrogen flows in metabolic or environmental pathways to reduce epistemic uncertainty in emission factors. | Cambridge Isotope Laboratories CLM-1396 (¹³C₆-Glucose) |
| Custom Enzyme Cocktails | Systematically vary enzyme loadings and ratios in hydrolysis assays to quantify parameter uncertainty in conversion models. | Novozymes Cellic CTec3 (for lignocellulose) |
| Soil Microbial DNA/RNA Kits | Characterize microbial community variability (aleatory) and link it to GHG emission fluxes. | Qiagen DNeasy PowerSoil Pro Kit |
| High-Throughput Fermentation Systems (e.g., Microbioreactors) | Generate large, reproducible kinetic data sets for robust parameter estimation and model discrimination. | Sartorius Ambr 250 High-Throughput System |
| Certified Reference Materials (CRMs) for Fuels/Gases | Calibrate analytical instruments (GC, HPLC) to minimize measurement error (epistemic uncertainty). | NIST SRM 2770 (Biodiesel Fatty Acid Methyl Esters) |
| Statistical & LCA Software | Perform Monte Carlo simulation, global sensitivity analysis, and stochastic LCA. | SimaPro (with Monte Carlo module), @RISK for Excel, openLCA |

Within the rigorous framework of Life Cycle Assessment (LCA) for biofuels research, quantifying and managing uncertainty is paramount for generating credible, decision-relevant results. This whitepaper delineates the key technical sources of uncertainty across the biofuel value chain, focusing on the inherent variability of biological feedstocks and the dynamic nature of production technologies. For researchers, scientists, and process developers, a systematic understanding of these uncertainties is critical for robust experimental design, model parameterization, and interpretation of LCA outcomes.

Feedstock Variability: Biological and Logistical Inconsistency

Feedstock characteristics are the foundational source of uncertainty, influencing every subsequent conversion step and LCA inventory.

Key Variability Factors:

- Compositional Variability: Lignin, cellulose, and hemicellulose content; moisture; ash; and extractives vary by species, cultivar, geography, harvest time, and climate.

- Agricultural Input Uncertainty: Fertilizer application rates, irrigation, pesticide use, and associated N₂O emissions from soil exhibit high spatial and temporal variance.

- Yield Uncertainty: Modeled and actual biomass yields per hectare are sensitive to weather, soil health, and farming practices.

- Logistical Parameters: Transportation distance, mode, and storage losses are often generalized in LCA models.

Quantitative Data Summary:

Table 1: Representative Variability in Key Feedstock Parameters

| Feedstock | Parameter | Typical Range | Coefficient of Variation (%) | Primary Influence |
|---|---|---|---|---|
| Switchgrass | Cellulose Content | 32 - 40 % dry weight | ~10% | Conversion Yield, Enzyme Demand |
| Corn Stover | Harvestable Yield | 2.5 - 5.5 Mg/ha/yr | ~30% | Land Use, Transportation Burden |
| Soybean | Nitrogen Fertilizer Application | 0 - 80 kg N/ha | >100% | Eutrophication Potential, GHG Emissions |
| Microalgae | Lipid Content | 15 - 50 % dry weight | ~40% | Biodiesel Yield, Energy for Dewatering |

Conversion Process Performance and Technological Learning

The conversion pathway (biochemical, thermochemical, catalytic) introduces uncertainties related to modeled efficiency, scale, and technological maturation.

Key Uncertainty Factors:

- Model vs. Pilot/Commercial Data: Laboratory-scale yields and energy balances often do not scale linearly.

- Catalyst & Enzyme Efficiency: Lifespan, activity, and required loading rates are major sources of technical and cost uncertainty.

- Co-product Allocation: Methodological choices (system expansion, mass/energy allocation) drastically alter LCA results.

- Technological Learning Curves: Future process efficiency, energy integration, and material recycling are projected, not known.

Quantitative Data Summary:

Table 2: Uncertainty Ranges in Biochemical Conversion Parameters

| Process Stage | Parameter | Bench Scale Value | Pilot/Commercial Scale (Expected Range) | Key Uncertainty Driver |
|---|---|---|---|---|
| Pretreatment | Sugar Solubilization Yield | 85-95% | 75-90% | Feedstock inconsistency, reactor mixing |
| Enzymatic Hydrolysis | Glucose Yield | >90% theoretical | 80-88% theoretical | Enzyme inhibition, solids loading |
| Fermentation | Ethanol Titer | 50-100 g/L | 40-80 g/L | Inhibitor tolerance, microbial stability |
| Overall | Mass Balance Closure | 97-99% | 92-97% | Unaccounted losses, measurement error |

Methodologies for Characterizing Uncertainty

Experimental Protocol: Feedstock Compositional Analysis (NREL/TP-510-42620)

Objective: To determine biomass feedstock composition reliably, with replicate-based estimates of its variability, for use in conversion process modeling.

- Sample Preparation: Mill biomass to pass a 2 mm screen. Dry at 45°C until constant weight.

- Extractives: Soxhlet extraction with water followed by ethanol for 24 hours each.

- Structural Carbohydrates & Lignin: Perform a two-stage acid hydrolysis (72% H₂SO₄ at 30°C, then 4% H₂SO₄ at 121°C) on the extractive-free material.

- Analysis: Quantify sugars in hydrolysate via HPLC (Aminex HPX-87P column). Acid-soluble lignin via UV-Vis spectrometry. Ash by combustion at 575°C.

- Uncertainty Quantification: Perform analysis in quintuplicate. Report mean, standard deviation, and 95% confidence interval for each component.
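With only five replicates, the 95% confidence interval should use the t distribution rather than the normal. A minimal sketch, assuming the replicate values for one component (here hypothetical glucan contents) are held in a list:

```python
import numpy as np
from scipy import stats


def replicate_summary(values, confidence=0.95):
    """Mean, sample SD, and t-based confidence interval for replicate measurements."""
    x = np.asarray(values, dtype=float)
    n = x.size
    mean = x.mean()
    sd = x.std(ddof=1)  # sample standard deviation (n - 1 denominator)
    t_crit = stats.t.ppf(0.5 + confidence / 2, df=n - 1)
    half_width = t_crit * sd / np.sqrt(n)
    return mean, sd, (mean - half_width, mean + half_width)


# Hypothetical quintuplicate glucan contents (% dry weight)
glucan = [34.1, 35.0, 34.6, 33.8, 34.9]
mean, sd, ci = replicate_summary(glucan)
print(f"mean = {mean:.2f}, SD = {sd:.3f}, 95% CI = ({ci[0]:.2f}, {ci[1]:.2f})")
```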

Experimental Protocol: Enzymatic Hydrolysis Saccharification Assay

Objective: To measure the digestibility of pretreated biomass under standardized conditions.

- Reaction Setup: Load 1% (w/v) solids (on a dry basis) in 50 mM sodium citrate buffer (pH 4.8) in a stirred tube.

- Enzyme Loading: Dose with commercial cellulase cocktail (e.g., CTec3) at 15 mg protein/g glucan.

- Incubation: Maintain at 50°C with agitation for 72 hours.

- Sampling & Analysis: Take samples at 0, 3, 6, 12, 24, 48, 72h. Centrifuge, filter, and analyze supernatant for glucose and xylose via HPLC.

- Data Modeling: Fit sugar release data to a sigmoidal kinetic model. Report maximum yield (Y_max) and rate constant (k) with their standard errors from the model fit.
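The protocol does not name a specific sigmoidal model, so this sketch assumes a three-parameter logistic curve (with an added midpoint parameter `t_mid`) and fits it with SciPy's `curve_fit`. Standard errors are taken as the square roots of the diagonal of the returned covariance matrix; the yield data are synthetic.

```python
import numpy as np
from scipy.optimize import curve_fit


def logistic_release(t, y_max, k, t_mid):
    """Three-parameter logistic: cumulative sugar yield (% of theoretical) at time t (h)."""
    return y_max / (1.0 + np.exp(-k * (t - t_mid)))


# Sampling times from the protocol; yields are hypothetical (generated, then perturbed)
t_h = np.array([0.0, 3.0, 6.0, 12.0, 24.0, 48.0, 72.0])
rng = np.random.default_rng(7)
glucose_yield = logistic_release(t_h, 85.0, 0.15, 10.0) + rng.normal(0.0, 1.0, t_h.size)

popt, pcov = curve_fit(logistic_release, t_h, glucose_yield, p0=[80.0, 0.1, 12.0], maxfev=10_000)
perr = np.sqrt(np.diag(pcov))  # asymptotic standard errors of the fitted parameters
y_max, k = popt[0], popt[1]
print(f"Y_max = {y_max:.1f} +/- {perr[0]:.1f} %;  k = {k:.3f} +/- {perr[1]:.3f} 1/h")
```

If observed curves show no lag phase, another functional form may fit better; comparing residuals across candidate forms is one way to expose model-structure uncertainty.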

Visualizing Uncertainty Relationships and Workflows

Feedstock to LCA Uncertainty Propagation

Technology Learning Curve & Data Fidelity

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Biofuel Uncertainty Research

| Item | Function in Research | Key Consideration for Uncertainty |
|---|---|---|
| Commercial Cellulase Cocktails (e.g., CTec3, HTec3) | Hydrolyze cellulose/hemicellulose to fermentable sugars in saccharification assays. | Batch-to-batch activity variation; requires standardization with a control substrate (e.g., Avicel). |
| NIST Standard Reference Materials (e.g., Poplar, Corn Stover) | Certified biomass materials with reference compositional data. | Critical for calibrating analytical methods (HPLC, NIR) and benchmarking process yields. |
| Stable Isotope-Labeled Compounds (¹³C-Glucose, ¹⁵N-Urea) | Trace carbon/nitrogen flows in metabolic studies and environmental fate experiments. | Enables precise tracking, reducing uncertainty in pathway allocation and emission modeling. |
| Anaerobic Workstation/Glove Box | Maintain strict anoxic conditions for studying fermentative or methanogenic microbes. | Eliminates uncertainty from oxygen contamination in yield and metabolic rate studies. |
| Process Mass Spectrometer (Gas Analysis) | Real-time monitoring of off-gas (CO₂, CH₄, H₂, O₂) from bioreactors. | Provides high-frequency data for accurate carbon balancing, reducing mass closure uncertainty. |
| Monte Carlo Simulation Software (e.g., @RISK, Crystal Ball) | Propagates input parameter variability through LCA models. | Core tool for quantitatively assessing the combined effect of multiple uncertainty sources. |

1. Introduction

Within the context of Life Cycle Assessment (LCA) for biofuels research, uncertainty is not a peripheral concern but a central determinant of credible science, robust investment, and defensible policy. This technical guide examines how epistemic and aleatory uncertainties propagate through biofuel LCA models, critically impacting conclusions on greenhouse gas (GHG) savings, land-use change (LUC) effects, and techno-economic viability. For researchers and professionals in adjacent fields like drug development—where uncertainty quantification in complex biological systems is paramount—the methodologies herein offer parallel insights.

2. Taxonomy of Uncertainty in Biofuel LCA

Uncertainty in LCA is multifaceted. The table below categorizes primary sources relevant to biofuels.

Table 1: Taxonomy of Uncertainty in Biofuel LCA

| Category | Source | Typical Impact on Results | Quantification Method |
|---|---|---|---|
| Parameter Uncertainty | Emission factors, fertilizer inputs, feedstock yield, conversion process efficiency. | ±40-200% variation in GHG footprint. | Monte Carlo simulation, Bayesian inference. |
| Model Uncertainty | Choice of LCA allocation method (energy, economic, mass), system boundary selection, LUC modeling approach (e.g., economic vs. deterministic). | Can reverse the ranking of biofuel pathways. | Scenario analysis, pedigree matrix. |
| Scenario Uncertainty | Future energy mix, technological learning rates, policy compliance mechanisms. | Affects long-term sustainability claims and investment risk. | Integrated Assessment Models (IAMs). |
| Spatio-temporal Uncertainty | Regional variation in soil carbon, weather patterns, time horizon for GHG accounting (GWP20 vs GWP100). | High geographic specificity challenges generic claims. | Geospatial analysis, temporal discounting. |

3. Quantitative Data Synthesis: The Range of Claims

Recent literature and databases (e.g., GREET 2023, EU JEC WTW v5) reveal vast ranges in reported carbon intensities (CI) for common biofuels, primarily driven by uncertainty in key parameters.

Table 2: Reported Carbon Intensity of Select Biofuels (g CO₂e/MJ)

| Biofuel Pathway | Low Estimate | High Estimate | Key Uncertainty Drivers |
|---|---|---|---|
| Corn Ethanol (w/ LUC) | 45 | 150 | N₂O emissions modeling, indirect LUC (iLUC) coefficient, co-product allocation. |
| Soybean Biodiesel (w/ LUC) | 40 | 220 | Soil organic carbon change, deforestation emission factor, processing energy source. |
| Cellulosic Ethanol (Switchgrass) | -15 (carbon negative) | 35 | Soil C sequestration rate, biomass yield under marginal land, enzyme conversion efficiency. |
| Renewable Diesel (Hydrotreated Vegetable Oil) | 25 | 90 | Feedstock transport distance, hydrogen source (grey vs. green), catalyst longevity. |

4. Experimental & Analytical Protocols for Uncertainty Quantification

Protocol 4.1: Monte Carlo Simulation for Parameter Uncertainty

- Objective: Propagate input parameter uncertainties through an LCA model to obtain a probability distribution of results (e.g., GHG emissions).

- Methodology:

  - Define Probability Distributions: Assign statistical distributions (e.g., normal, log-normal, uniform) to all sensitive input parameters (see Table 1). Use data from literature reviews, expert elicitation, or experimental replicates.

  - Random Sampling: Use a pseudo-random number generator to draw a value for each parameter from its defined distribution, creating one coherent input set.

  - Model Execution: Run the deterministic LCA model with this input set to compute one output value.

  - Iteration: Repeat the sampling and execution steps, typically for 10,000 or more iterations, until the output statistics are stable.

  - Analysis: Analyze the output distribution to determine the mean, median, standard deviation, and percentile-based intervals (e.g., the 95% interval).
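A vectorized NumPy sketch of Protocol 4.1, assuming the LCA model can be written as a function of parameter arrays. Drawing all iterations at once is equivalent to running the sample-then-execute loop N times; the three inputs and the `toy_footprint` function are hypothetical placeholders for a real inventory model.

```python
import numpy as np


def monte_carlo(model, samplers, n_iter=10_000, seed=0):
    """Simple random-sampling Monte Carlo propagation.

    samplers: dict of parameter name -> function(rng, size) returning `size` draws.
    model: vectorized function taking a dict of equally sized arrays and returning outputs.
    """
    rng = np.random.default_rng(seed)
    # Row k across all arrays forms one coherent input set
    inputs = {name: draw(rng, n_iter) for name, draw in samplers.items()}
    y = model(inputs)  # equivalent to n_iter separate runs of the deterministic model
    lo, hi = np.percentile(y, [2.5, 97.5])
    return {"mean": y.mean(), "median": np.median(y), "sd": y.std(ddof=1),
            "ci95": (lo, hi), "samples": y}


# Hypothetical inputs and toy footprint model (g CO2e/MJ), for illustration only
samplers = {
    "field_g_per_kg": lambda rng, n: rng.lognormal(np.log(200.0), 0.3, n),  # g CO2e/kg feedstock
    "fuel_mj_per_kg": lambda rng, n: rng.normal(8.0, 0.5, n),               # MJ fuel/kg feedstock
    "process_g_per_mj": lambda rng, n: rng.uniform(10.0, 20.0, n),          # g CO2e/MJ
}


def toy_footprint(p):
    return p["field_g_per_kg"] / p["fuel_mj_per_kg"] + p["process_g_per_mj"]


result = monte_carlo(toy_footprint, samplers)
print({key: value for key, value in result.items() if key != "samples"})
```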

Protocol 4.2: Scenario Analysis for Model/Scenario Uncertainty

- Objective: Evaluate the robustness of LCA conclusions to fundamental methodological choices.

- Methodology:

  - Identify Key Model Choices: Select critical assumptions (e.g., allocation method: system expansion vs. energy allocation; iLUC model: GTAP vs. AEZ-EF).

  - Design Scenario Matrix: Create a full-factorial or fractional-factorial set of scenarios combining different choices.

  - Calculate Results per Scenario: Execute the LCA model for each defined scenario, holding parameter values constant where possible.

  - Meta-Analysis: Compare results across scenarios. Use the statistical range or analysis of variance (ANOVA) to determine which choices have the most significant impact.
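A minimal sketch of the full-factorial scenario matrix in Protocol 4.2. The `toy_lca` function and its additive adjustments are hypothetical stand-ins for complete LCA runs, and the range of level-averaged results is used as a simple screen in place of a formal ANOVA.

```python
import itertools

# Hypothetical adjustments (g CO2e/MJ) standing in for full LCA runs, for illustration only
BASE = 60.0
ALLOCATION = {"energy": 0.0, "system_expansion": -12.0}
ILUC_MODEL = {"GTAP": 8.0, "AEZ-EF": 25.0}


def toy_lca(allocation, iluc_model):
    return BASE + ALLOCATION[allocation] + ILUC_MODEL[iluc_model]


def run_scenarios(model, choices):
    """Full-factorial scenario matrix: run the model once per combination of choices."""
    names = list(choices)
    results = []
    for combo in itertools.product(*(choices[name] for name in names)):
        scenario = dict(zip(names, combo))
        results.append((scenario, model(**scenario)))
    return results


def main_effect_range(results, factor):
    """Range of level-averaged results for one factor (a simple screen; ANOVA is more formal)."""
    by_level = {}
    for scenario, value in results:
        by_level.setdefault(scenario[factor], []).append(value)
    level_means = [sum(values) / len(values) for values in by_level.values()]
    return max(level_means) - min(level_means)


choices = {"allocation": list(ALLOCATION), "iluc_model": list(ILUC_MODEL)}
results = run_scenarios(toy_lca, choices)
for scenario, value in results:
    print(scenario, value)
for factor in choices:
    print(factor, "range:", main_effect_range(results, factor))
```

For many choices, a fractional-factorial design would replace `itertools.product` to keep the number of LCA runs manageable.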

5. Visualizing Uncertainty Propagation and Decision Pathways

Uncertainty Propagation in LCA Modeling

Decision Logic Under Uncertainty

6. The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Tools for Uncertainty-Aware Biofuels LCA Research

| Tool/Reagent Category | Specific Example | Function in Uncertainty Research |
|---|---|---|
| LCA Software with Uncertainty Features | openLCA, Brightway2, SimaPro. | Platforms enabling Monte Carlo simulation, parameterized modeling, and sensitivity analysis. |
| Uncertainty Data Repositories | ecoinvent (with uncertainty data), USDA LCA Commons. | Provide pre-quantified probability distributions for thousands of background LCI parameters. |
| Statistical Analysis Packages | R (stats, sensitivity), Python (NumPy, SciPy, SALib). | Perform advanced statistical analysis, global sensitivity analysis (Sobol indices), and data visualization. |
| Geospatial Analysis Tools | QGIS, ArcGIS with soil carbon/land use data layers. | Quantify spatial variability in feedstock production impacts, reducing scenario uncertainty. |
| Field and Laboratory Measurement Tools | Soil organic carbon analyzers, N₂O flux measurement chambers, enzymatic activity assays. | Generate primary, high-quality data to reduce parameter uncertainty for critical emission factors and yields. |

Within the critical field of biofuels research, Life Cycle Assessment (LCA) is the foundational methodology for evaluating environmental impacts from feedstock cultivation to fuel combustion. Traditional LCA has relied heavily on deterministic point estimates, which obscure the inherent variability and uncertainty in data. This whitepaper details the technical evolution towards probabilistic frameworks that explicitly quantify and propagate uncertainty, enabling more robust and reliable decision-making for researchers and scientists in biofuel development.

The Paradigm Shift: From Deterministic to Probabilistic

The evolution can be characterized by three distinct phases, each addressing the limitations of its predecessor.

Phase 1: Point-Estimate LCA. Single-value inputs (e.g., a fixed fertilizer application rate of 150 kg N/ha) are used to generate single-value outputs (e.g., 45 g CO2-eq/MJ). This approach conveys a false sense of precision and cannot inform on risk or confidence.

Phase 2: Sensitivity & Scenario Analysis. Point estimates are varied one at a time (OAT) or through discrete scenarios (e.g., a "high" and a "low" fertilizer rate). This identifies influential parameters but does not characterize the full probability distribution of outcomes.

Phase 3: Probabilistic LCA. Inputs are defined as probability distributions (e.g., Normal(150, 20) kg N/ha). Uncertainty is propagated through the computational model using methods such as Monte Carlo simulation, yielding a probability distribution for the impact score from which confidence intervals and the key contributors to uncertainty can be derived.

Evolution of LCA Uncertainty Methods

Core Methodologies for Probabilistic Frameworks

Uncertainty Characterization & Data Collection

Each input parameter is assigned a probability distribution based on data quality.

Table 1: Common Probability Distributions for LCA Parameters

| Parameter Type (Biofuels Example) | Recommended Distribution | Justification | Typical Parameters (Example) |
|---|---|---|---|
| Agricultural Yield (kg/ha) | Normal or Log-normal | Normal for roughly symmetric variation (central limit theorem); log-normal when values must remain strictly positive. | Mean=5000, SD=600 |
| Emission Factor (g CH₄/kg feedstock) | Log-normal | Strictly positive, often right-skewed data. | Geo-mean=10, GSD=1.5 |
| Technology Efficiency (%) | Beta | Bounded between 0 and 100%. | α=30, β=4 (mean ≈ 88%) |
| Process Energy Use (MJ/kg) | Uniform | Used when only the min/max range is known. | Min=15, Max=25 |
| Land Use Change Carbon Stock (t C/ha) | Triangular | When the minimum, mode, and maximum can be estimated. | Min=50, Mode=80, Max=110 |
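The distributions in Table 1 map directly onto NumPy's random generator, with one common pitfall: `Generator.lognormal` takes the mean and standard deviation of ln(X), so a geometric mean and GSD must be log-transformed first. The parameters below are the table's examples; the dictionary keys are illustrative names.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000

samples = {
    # Normal: mean and SD in natural units
    "yield_kg_ha": rng.normal(5000.0, 600.0, n),
    # Log-normal: NumPy expects the mean and SD of ln(X), i.e. ln(geo-mean) and ln(GSD)
    "ef_g_ch4_per_kg": rng.lognormal(mean=np.log(10.0), sigma=np.log(1.5), size=n),
    # Beta on [0, 1]; mean = alpha / (alpha + beta) = 30 / 34
    "efficiency_frac": rng.beta(30.0, 4.0, n),
    # Uniform between the known minimum and maximum
    "energy_mj_kg": rng.uniform(15.0, 25.0, n),
    # Triangular: (min, mode, max)
    "luc_t_c_ha": rng.triangular(50.0, 80.0, 110.0, n),
}

for name, x in samples.items():
    p2_5, p50, p97_5 = np.percentile(x, [2.5, 50, 97.5])
    print(f"{name:16s} median={p50:9.3f}  2.5%={p2_5:9.3f}  97.5%={p97_5:9.3f}")
```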

Uncertainty Propagation: Monte Carlo Simulation

The standard computational engine for probabilistic LCA.

Experimental Protocol for Monte Carlo Simulation in Biofuel LCA:

- Model Definition: Construct a deterministic LCA model, $Y = f(X_1, X_2, \dots, X_n)$, where $Y$ is the impact score (e.g., GWP) and $X_i$ are the input parameters.

- Distribution Assignment: Assign a probability distribution to each uncertain $X_i$ (see Table 1).

- Sampling: For iteration $k = 1$ to $N$ (where $N$ is large, e.g., 10,000): (a) randomly sample one value $x_{i,k}$ from the distribution of each $X_i$; (b) compute the corresponding output value $y_k = f(x_{1,k}, x_{2,k}, \dots, x_{n,k})$.

- Aggregation: Collect all $y_k$ to form the probability distribution of the output $Y$.

- Analysis: Calculate statistics of $Y$: mean, median, standard deviation, and percentiles (e.g., the 2.5th and 97.5th for a 95% confidence interval).

Monte Carlo Simulation Workflow

Global Sensitivity Analysis (GSA)

GSA techniques, such as Sobol' indices, decompose the output variance to quantify each input's contribution to total uncertainty. This identifies which parameters most need better data to reduce overall output uncertainty.

Protocol for Variance-Based Sensitivity Analysis (Sobol' Indices):

- Sample Generation: Generate two independent sampling matrices $A$ and $B$ of size $N \times n$ using a quasi-random sequence (e.g., a Sobol' sequence).

- Model Evaluation: Run the LCA model for all rows in $A$ and $B$, yielding output vectors $Y_A$ and $Y_B$.

- Index Calculation: Compute first-order ($S_i$) and total-order ($S_{Ti}$) Sobol' indices. This involves creating, for each input $i$, a hybrid matrix $A_B^{(i)}$ in which column $i$ of $A$ is replaced with column $i$ of $B$, and re-running the model on it.
  - $S_i = \mathrm{Var}[E(Y \mid X_i)] / \mathrm{Var}(Y)$ measures the main effect of $X_i$.
  - $S_{Ti} = 1 - \mathrm{Var}[E(Y \mid X_{\sim i})] / \mathrm{Var}(Y)$ measures the total effect of $X_i$, including all interactions, where $X_{\sim i}$ denotes all inputs except $X_i$.

- Interpretation: A high $S_{Ti}$ indicates that $X_i$ is a major source of output uncertainty.
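The protocol above can be sketched in NumPy using the Saltelli (2010) first-order and Jansen total-order estimators. This is a simplified illustration under stated assumptions: it uses pseudo-random rather than Sobol'-sequence samples for brevity (`scipy.stats.qmc.Sobol` could supply the latter), assumes independent inputs, and checks itself on a hypothetical additive model whose exact indices are known. Libraries such as SALib implement the same scheme with more options.

```python
import numpy as np


def sobol_indices(model, sample_inputs, n, seed=0):
    """First-order (Saltelli 2010) and total-order (Jansen) Sobol' index estimates.

    sample_inputs(rng, n) must return an (n, d) array of independent input draws;
    model maps an (n, d) input array to n outputs.
    """
    rng = np.random.default_rng(seed)
    A = sample_inputs(rng, n)
    B = sample_inputs(rng, n)
    y_a, y_b = model(A), model(B)
    var_y = np.var(np.concatenate([y_a, y_b]), ddof=1)
    d = A.shape[1]
    s1, st = np.empty(d), np.empty(d)
    for i in range(d):
        a_b = A.copy()
        a_b[:, i] = B[:, i]  # hybrid matrix A_B^(i): column i of A taken from B
        y_ab = model(a_b)
        s1[i] = np.mean(y_b * (y_ab - y_a)) / var_y
        st[i] = 0.5 * np.mean((y_a - y_ab) ** 2) / var_y
    return s1, st


# Hypothetical additive test model with a known answer: S1 = ST = (0.2, 0.8, 0.0)
def toy_model(x):
    return x[:, 0] + 2.0 * x[:, 1] + 0.0 * x[:, 2]


s1, st = sobol_indices(toy_model, lambda rng, n: rng.uniform(size=(n, 3)), n=100_000)
print("S1:", s1.round(3), "ST:", st.round(3))
```

For an additive model the first-order and total-order indices coincide; a gap between $S_{Ti}$ and $S_i$ in a real LCA model signals interactions.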

Application in Biofuels Research: A Comparative Case

Scenario: Comparing the Global Warming Potential (GWP) of corn-ethanol and soybean-biodiesel.

Table 2: Probabilistic LCA Results for Biofuel GWP (g CO2-eq/MJ)

| Biofuel Pathway | Mean | Standard Deviation | 95% Confidence Interval | Probability Corn-Ethanol has Lower GWP |
|---|---|---|---|---|
| Corn-Ethanol | 68.5 | 12.3 | [46.2, 94.1] | -- |
| Soybean-Biodiesel | 42.1 | 18.7 | [15.3, 82.5] | 12% |

Interpretation: Soybean-biodiesel has the lower mean GWP, but its wider 95% CI indicates greater result uncertainty. Because the two distributions overlap, there is still a 12% probability that corn-ethanol has the lower GWP, which shows why comparisons should account for uncertainty rather than rely on means alone.
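The 12% figure can be approximately reproduced from the table's means and standard deviations if both results are assumed normal and independent. Both assumptions are simplifications: the asymmetric confidence intervals suggest skewed distributions, and pathways that share background processes should ideally be sampled jointly. Under these assumptions the analytical value is about 0.119, and a Monte Carlo check agrees.

```python
import numpy as np
from scipy import stats

# Summary statistics from Table 2; normality and independence are simplifying assumptions
corn_mean, corn_sd = 68.5, 12.3
soy_mean, soy_sd = 42.1, 18.7

# Analytical: D = corn - soy is normal when both are normal and independent
d_mean = corn_mean - soy_mean
d_sd = np.hypot(corn_sd, soy_sd)
p_analytical = stats.norm.cdf(0.0, loc=d_mean, scale=d_sd)  # P(corn GWP < soy GWP)

# Monte Carlo check with independent draws
rng = np.random.default_rng(3)
corn = rng.normal(corn_mean, corn_sd, 100_000)
soy = rng.normal(soy_mean, soy_sd, 100_000)
p_mc = np.mean(corn < soy)
print(f"analytical {p_analytical:.3f}, Monte Carlo {p_mc:.3f}")
```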

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Solutions for Uncertainty-Aware LCA Research

| Item Name/Software | Type | Primary Function in Probabilistic LCA |
|---|---|---|
| Brightway2 | Open-source LCA framework (Python) | Core platform for building, managing, and calculating LCA models. Enables Monte Carlo simulation; global sensitivity analysis can be coupled through Python libraries such as SALib. |
| openLCA | Desktop LCA software | User-friendly GUI for building LCA models. Supports uncertainty distributions in databases and basic Monte Carlo simulations. |
| SALib | Python library | Specifically designed for GSA. Implements Sobol', Morris, and other methods to connect with LCA model outputs. |
| Pedigree Matrix | Data quality schema | Systematic method (e.g., from ecoinvent) to convert qualitative data quality scores (reliability, completeness) into quantitative uncertainty factors (geometric variance). |
| PRé SimaPro | Commercial LCA software | Industry-standard software with integrated Monte Carlo simulation and contribution-to-variance analysis tools. |
| Sobol' Sequence | Quasi-random number generator | A low-discrepancy sequence for efficient sampling of input distributions, reducing the number of iterations needed for stable results. |

Frameworks and Tools for Quantifying Uncertainty in Biofuel Sustainability Metrics

In Life Cycle Assessment (LCA) for biofuels research, parameter uncertainty is a central challenge. Input parameters—such as crop yield, fertilizer emission factors, conversion efficiency, and fuel combustion data—are inherently variable or imprecise. Monte Carlo Simulation (MCS) has emerged as the fundamental computational technique for quantifying how this input uncertainty propagates through complex LCA models to affect output uncertainty (e.g., in greenhouse gas emissions). This whitepaper provides an in-depth technical guide to implementing MCS within the context of biofuels LCA, enabling researchers to robustly communicate the reliability of their sustainability conclusions.

Theoretical Foundation

Monte Carlo Simulation is a class of computational algorithms that rely on repeated random sampling to obtain numerical results. Its core principle is to use randomness to solve problems that might be deterministic in principle. In uncertainty propagation, the process is:

- Define a Quantitative Model: $Y = f(X_1, X_2, \dots, X_n)$, where $Y$ is the model output (e.g., Global Warming Potential) and $X_i$ are the uncertain input parameters.

- Characterize Input Distributions: Assign a probability distribution (e.g., normal, lognormal, uniform, triangular) to each uncertain $X_i$ based on empirical data or expert judgment.

- Sample Repeatedly: Draw a random value from the distribution of each $X_i$, creating one possible input scenario.

- Compute and Store: Calculate the corresponding output $Y$ for that scenario.

- Iterate: Repeat the sampling and computation steps thousands of times (e.g., 10,000 iterations).

- Analyze Output: The resulting distribution of $Y$ values represents the propagated uncertainty, from which statistics (mean, median, standard deviation, confidence intervals) can be derived.

Application to Biofuels LCA: A Step-by-Step Protocol

Objective: To quantify the uncertainty in the well-to-wheel GHG emissions (g CO₂-eq/MJ) of a corn ethanol pathway.

Protocol:

Model Formulation: Define the deterministic LCA model. A simplified example:

$$\mathrm{GHG} = \frac{E_{\text{production}} + E_{\text{transport}} + E_{\text{conversion}}}{E_{\text{ethanol}}}$$

where $E_{\text{ethanol}}$ is the energy content of the ethanol produced and each emission term (e.g., $E_{\text{production}}$) is itself a function of sub-parameters (e.g., N₂O emission factor, diesel usage).

Parameter Identification & Distribution Assignment: Identify all stochastic parameters. Use primary experimental data or databases like ecoinvent (which often provide uncertainty data). For example:

- Corn Yield (kg/ha): Fit a normal distribution using regional agricultural trial data.

- N₂O Emission Factor (kg N₂O-N/kg N applied): Use a lognormal distribution per IPCC Tier 1 guidelines.

- Biorefinery Natural Gas Use (MJ/L ethanol): Use a triangular distribution (min, most likely, max) from pilot plant data.

Correlation Specification: Identify correlated parameters (e.g., higher crop yield may correlate with higher fertilizer input). Define correlation coefficients and implement sampling using a Cholesky decomposition or copula approach to maintain these relationships.
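A minimal sketch of the Cholesky approach for correlated normal inputs. The 0.5 correlation between yield and N rate, and the normal marginals, are hypothetical; multiplying independent standard normals by the Cholesky factor L of the correlation matrix yields correlated draws because L Lᵀ reproduces the target matrix.

```python
import numpy as np


def correlated_normals(means, sds, corr, n, seed=0):
    """Draw n rows of correlated normal variables using a Cholesky factor of `corr`."""
    rng = np.random.default_rng(seed)
    chol = np.linalg.cholesky(np.asarray(corr, dtype=float))  # corr = chol @ chol.T
    z = rng.standard_normal((n, len(means))) @ chol.T         # rows of z have correlation `corr`
    return np.asarray(means, dtype=float) + z * np.asarray(sds, dtype=float)


# Hypothetical: corn yield (kg/ha) and N rate (kg N/ha) with an assumed correlation of 0.5
corr = [[1.0, 0.5],
        [0.5, 1.0]]
draws = correlated_normals(means=[10_000.0, 155.0], sds=[1_200.0, 8.0], corr=corr, n=50_000)
print(np.corrcoef(draws[:, 0], draws[:, 1])[0, 1])
```

For non-normal marginals, such as a triangular N rate, the correlated standard normals can be passed through `scipy.stats.norm.cdf` and then the target distribution's `ppf` (a Gaussian copula); the realized correlation will then differ slightly from the specified value.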

Simulation Execution:

- Choose the iteration count (N = 10,000 is a common choice for stable statistics).

- For i = 1 to N:
  - For each parameter, generate a random value from its defined distribution, respecting correlations.
  - Compute the GHG value for iteration i.
  - Store the result.

Convergence Diagnostic: Monitor the running mean and standard deviation of the output distribution. Ensure they stabilize before the final iteration.
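One way to automate this diagnostic, assuming the simulated outputs are stored in iteration order: compute running statistics and check their relative change over a trailing window. The window size and 0.5% tolerance are arbitrary illustrative choices, the cumulative-sum formula is adequate for illustration (Welford's algorithm is more robust numerically), and the synthetic normal output stands in for real simulation results.

```python
import numpy as np


def running_stats(samples):
    """Running mean and sample standard deviation after each iteration."""
    x = np.asarray(samples, dtype=float)
    k = np.arange(1, x.size + 1)
    mean = np.cumsum(x) / k
    mean_sq = np.cumsum(x ** 2) / k
    # Convert the running population variance to the sample (ddof=1) variance
    var = np.maximum(mean_sq - mean ** 2, 0.0) * k / np.maximum(k - 1, 1)
    return mean, np.sqrt(var)


def is_converged(samples, window=1_000, rel_tol=0.005):
    """True if running mean and SD changed by less than rel_tol over the last `window` iterations."""
    mean, sd = running_stats(samples)

    def rel_change(a):
        return abs(a[-1] - a[-window]) / abs(a[-1])

    return bool(rel_change(mean) < rel_tol and rel_change(sd) < rel_tol)


# Hypothetical stored Monte Carlo outputs (g CO2e/MJ)
rng = np.random.default_rng(5)
ghg = rng.normal(54.0, 9.0, 20_000)
mean, sd = running_stats(ghg)
print(f"final mean {mean[-1]:.2f}, final SD {sd[-1]:.2f}, converged: {is_converged(ghg)}")
```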

Results Analysis:

- Plot a histogram and kernel density estimate of the output GHG distribution.

- Calculate the 95% confidence interval (2.5th to 97.5th percentiles).

- Perform global sensitivity analysis (e.g., Sobol indices) to rank parameters by their contribution to output variance.

Diagram 1: Monte Carlo Simulation Workflow for LCA

Table 1: Exemplary Uncertain Parameters for Corn Ethanol LCA (Hypothetical Data)

| Parameter | Unit | Distribution Type | Distribution Parameters | Data Source Justification |
|---|---|---|---|---|
| Corn Grain Yield | kg/ha | Normal | μ=10,000, σ=1,200 | Regional USDA-NASS survey data (CV ~12%) |
| N Fertilizer Application Rate | kg N/ha | Triangular | min=140, mode=155, max=170 | Expert survey from extension agents |
| Direct N₂O Emission Factor | kg N₂O-N/kg N | Lognormal | μ=-3.29, σ=0.74 (of ln EF) | IPCC (2019) Tier 1, Ch. 11 |
| Biorefinery Ethanol Yield | L/kg corn | Uniform | min=0.395, max=0.415 | Technology review of dry mill plants |
| Natural Gas Use (Conversion) | MJ/L ethanol | Normal | μ=8.5, σ=0.85 | Industry benchmark analysis |
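The table's distributions can be sampled directly and pushed through a sub-model. This sketch propagates them into two intermediate quantities only, direct field N₂O and natural gas use per MJ of ethanol, not the full pathway total. The conversion constants (44/28 for N₂O-N to N₂O, the IPCC AR5 100-year GWP of 265 for N₂O, and an ethanol lower heating value of about 21.2 MJ/L) are assumptions added here, and the inputs are sampled independently, ignoring any correlation.

```python
import numpy as np

# Assumed conversion constants (not from the table); check against your study's choices
N2O_PER_N2O_N = 44.0 / 28.0      # kg N2O per kg N2O-N (molar mass ratio)
GWP100_N2O = 265.0               # IPCC AR5 100-year GWP (AR6 gives 273)
ETHANOL_LHV_MJ_PER_L = 21.2      # approximate lower heating value of ethanol

rng = np.random.default_rng(2024)
n = 10_000

# Input distributions transcribed from the table (hypothetical data)
corn_yield = rng.normal(10_000.0, 1_200.0, n)            # kg corn/ha
n_rate = rng.triangular(140.0, 155.0, 170.0, n)          # kg N/ha
ef_n2o = rng.lognormal(mean=-3.29, sigma=0.74, size=n)   # kg N2O-N/kg N (mu, sigma of ln EF)
etoh_yield = rng.uniform(0.395, 0.415, n)                # L ethanol/kg corn
ng_use = rng.normal(8.5, 0.85, n)                        # MJ natural gas/L ethanol

# Sub-model: direct field N2O per MJ of ethanol (one contributor to the full GHG total)
ethanol_mj_ha = corn_yield * etoh_yield * ETHANOL_LHV_MJ_PER_L
n2o_g_co2e_per_mj = n_rate * ef_n2o * N2O_PER_N2O_N * GWP100_N2O * 1_000.0 / ethanol_mj_ha

# Natural gas input per MJ of ethanol (dimensionless energy ratio)
ng_mj_per_mj = ng_use / ETHANOL_LHV_MJ_PER_L

for label, x in [("direct N2O, g CO2e/MJ", n2o_g_co2e_per_mj), ("NG use, MJ/MJ", ng_mj_per_mj)]:
    p2_5, p50, p97_5 = np.percentile(x, [2.5, 50, 97.5])
    print(f"{label:24s} median={p50:.3f}  95% interval=[{p2_5:.3f}, {p97_5:.3f}]")
```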

Table 2: Summary Output of Monte Carlo Simulation (N=10,000)

| Output Statistic | Value (g CO₂-eq/MJ) | Interpretation |
|---|---|---|
| Mean | 54.2 | Central estimate of GHG intensity |
| Standard Deviation | 8.7 | Absolute measure of uncertainty |
| 2.5th Percentile | 38.1 | Lower bound of 95% confidence interval |
| 97.5th Percentile | 72.9 | Upper bound of 95% confidence interval |
| Probability < Petroleum Baseline (80 g/MJ) | 99.4% | Likelihood biofuel meets policy threshold |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Software & Computational Tools for MCS in LCA

| Tool / Solution | Function in MCS | Application Note for Biofuels LCA |
|---|---|---|
| Python SciPy/NumPy | Core numerical computing; provides statistical distributions, random number generators, and matrix operations for custom sampling. | Ideal for building fully customized, transparent MCS models integrated with process-based LCA algorithms. |
| R with mc2d package | Specialized package for two-dimensional Monte Carlo (separating variability and uncertainty). | Useful for advanced uncertainty analysis distinguishing inter-annual farm yield variability from true epistemic uncertainty. |
| openLCA | Open-source LCA software with built-in MCS capabilities via parameter uncertainty distributions. | Allows application of MCS to large, existing LCA databases and complex product systems without extensive programming. |
| SimaPro | Commercial LCA software featuring robust MCS and sensitivity analysis modules. | Provides a user-friendly GUI for applying MCS to predefined and user-defined LCA inventories common in biofuels research. |
| @RISK (Palisade) | Excel add-in for performing risk analysis and MCS directly within spreadsheet models. | Enables rapid prototyping of MCS for LCA practitioners who develop models in Excel. |
| GNU Octave / MATLAB | High-level language and interactive environment for numerical computation. | Suitable for complex models requiring advanced statistical functions or integration with process simulation tools. |

Diagram 2: Uncertainty Types & MCS Role in LCA

Advanced Considerations

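- Convergence & Iteration Count: Track the running mean and the coefficient of variation of the mean (its standard error divided by the mean) as iterations accumulate, and stop once both stabilize. For biofuel LCAs with heavy-tailed distributions, more than 100,000 iterations may be needed.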

- Global vs. Local Sensitivity: While MCS propagates uncertainty, coupling it with global sensitivity analysis (GSA) methods (e.g., Sobol, Morris) is critical. GSA apportions output variance to input factors, identifying key research priorities (e.g., reducing uncertainty in land use change carbon accounting has higher leverage than refining transport data).

- Computational Efficiency: For large, dynamic LCA models, use Latin Hypercube Sampling (LHS). LHS stratifies each input's range so that every part of its distribution is sampled, and it typically gives stable estimates with fewer iterations than simple random sampling.

- Reporting: Always report the complete uncertainty distribution, not just the mean. Visualize using cumulative distribution functions (CDFs) to communicate probabilities (e.g., "There is a 90% probability that GHG emissions are below X").
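The convergence, LHS, and reporting advice above can be combined in one minimal standard-library sketch. The code does four things:

- It builds a Latin Hypercube design.
- It maps the design onto the two input distributions from the table above (uniform ethanol yield, normal natural-gas use) through their inverse CDFs.
- It checks how the mean stabilizes across independent designs of growing size.
- It reads a probability statement off the empirical CDF.

The toy GHG function and its emission factors are hypothetical placeholders. The standard error uses the simple-random-sampling formula, which is usually conservative for LHS rather than exact.

```python
import math
import random
import statistics


def latin_hypercube(n, k, rng):
    """n points in [0, 1)^k with exactly one point per 1/n stratum in every dimension."""
    columns = []
    for _ in range(k):
        strata = [(i + rng.random()) / n for i in range(n)]
        rng.shuffle(strata)  # decouple the stratum order across dimensions
        columns.append(strata)
    return [list(row) for row in zip(*columns)]


def toy_ghg(u_yield, u_gas):
    """Map unit-cube coordinates to inputs via inverse CDFs, then apply a toy model."""
    ethanol_yield = 0.395 + u_yield * (0.415 - 0.395)                     # Uniform, L/kg
    gas_use = statistics.NormalDist(8.5, 0.85).inv_cdf(max(u_gas, 1e-12))  # Normal, MJ/L
    return (350.0 / ethanol_yield + 65.0 * gas_use) / 21.1  # hypothetical factors


def lhs_run(n, seed):
    rng = random.Random(seed)
    return [toy_ghg(u1, u2) for u1, u2 in latin_hypercube(n, 2, rng)]


def mean_and_cv(samples):
    """Mean and coefficient of variation of the mean (standard error / mean)."""
    mean = statistics.fmean(samples)
    se = statistics.stdev(samples) / math.sqrt(len(samples))
    return mean, se / abs(mean)


if __name__ == "__main__":
    for n in (1_000, 4_000, 16_000):
        mean, cv = mean_and_cv(lhs_run(n, seed=n))
        print(f"n={n:>6}: mean={mean:.3f} g/MJ, CV of mean={cv:.4%}")
    results = lhs_run(16_000, seed=1)
    p90 = statistics.quantiles(results, n=10)[-1]  # 90th percentile
    print(f"There is a 90% probability that GHG intensity is below {p90:.1f} g CO2-eq/MJ")
```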

Monte Carlo Simulation is an indispensable, sampling-based method for rigorously quantifying uncertainty in biofuels LCA. By transparently propagating parameter uncertainty through complex supply chain models, MCS moves sustainability assessments from deterministic point estimates to probabilistic statements, thereby providing a more robust foundation for scientific inference and policy decision-making. Its integration with global sensitivity analysis transforms LCA from a mere accounting exercise into a tool for identifying critical knowledge gaps in the biofuel life cycle.

Within the framework of a broader thesis on life cycle assessment (LCA) under uncertainty for biofuels research, managing technological and temporal uncertainty is paramount. Technological uncertainty arises from variable conversion efficiencies, evolving feedstock pre-treatment methods, and future process innovations. Temporal uncertainty encompasses long-term factors such as climate change impacts on crop yields, policy shifts, and changes in background energy grids. Scenario and sensitivity analysis (SC&SA) provides a robust methodological toolkit to quantify these uncertainties, offering researchers, scientists, and development professionals a structured approach to decision-making under incomplete information. This guide details the technical protocols for implementing SC&SA in biofuel LCA.

Foundational Concepts and Data

SC&SA in biofuel LCA distinguishes between scenario analysis, which explores discrete, plausible future states (e.g., different policy regimes or technology adoption pathways), and sensitivity analysis, which quantifies how output variability (e.g., GHG emissions) depends on input variability (e.g., fertilizer input, enzyme loading). Key data types and their uncertainties are summarized below.

Table 1: Primary Sources of Uncertainty in Biofuel LCA

| Uncertainty Category | Typical Parameters | Data Range & Source |

|---|---|---|

| Technological | Biochemical conversion yield, Co-product allocation factor, Catalyst lifetime | Yield: 250-400 L ethanol/tonne biomass (NREL 2023 data); Allocation: 0-1 based on mass, energy, or economic value. |

| Temporal | Grid electricity carbon intensity, Agricultural N2O emission factor, Feedstock yield trend | Grid CI: 0.10-0.35 kg CO2-eq/kWh (2050 projection range); N2O EF: 0.3-3% of applied N (IPCC Tier 1 range). |

| Methodological | Time horizon for GWP (Global Warming Potential), System boundary definition, Choice of impact assessment method | GWP: 20-yr vs 100-yr horizon can alter results by >30% for CH4-heavy processes. |

| Parameter | Fertilizer application rate, Transport distance, Process energy demand | Fertilizer: ±20% of regional average; Transport: 50-500 km radius. |

Table 2: Common Scenario Archetypes for Biofuel Systems

| Scenario Name | Technological Context | Temporal/Policy Context | Key Assumption Shifts |

|---|---|---|---|

| Baseline (Frozen Tech) | Current average conversion processes. | Static background systems (e.g., today's grid). | No learning rates; fixed yields. |

| Optimistic Tech Advance | High yield, integrated biorefinery with high-value co-products. | Mid-term (2035) decarbonizing grid. | 25% yield increase; 40% energy demand reduction. |

| Stringent Carbon Policy | Current tech, but with CCS (Carbon Capture and Storage). | Carbon tax or low-carbon fuel standard. | CCS adds 15% capex, captures 90% of process CO2. |

| High Climate Impact | Current tech. | Changed agronomic conditions (drought). | Feedstock yield decreases by 20%; irrigation demand increases. |

Experimental Protocols for Analysis

Protocol for Global Sensitivity Analysis (Morris Method Screening)

Objective: To identify the most influential input parameters on the LCA output (e.g., net GHG emissions) prior to more computationally intensive analysis.

Materials:

- LCA model of the biofuel pathway (e.g., in openLCA, GREET, or custom script).

- Defined probability distribution for each uncertain input parameter (see Table 1).

- Sensitivity analysis software (e.g., SALib for Python, SimLab, or the R `sensitivity` package).

Procedure:

- Parameter Definition: Select k uncertain parameters. Define their range and distribution (e.g., uniform ±20% around baseline).

- Trajectory Generation: Generate r trajectories in the k-dimensional parameter space using the Morris sampling strategy. A common setting is r = 50-100.

- Model Execution: Run the LCA model for each sample point (r * (k+1) runs total).

- Elementary Effect Calculation: For each parameter i, compute the elementary effect (EE) for each trajectory: EE_i = [ f(x1,..., xi+Δ,..., xk) - f(x) ] / Δ, where Δ is a predetermined step size.

- Metric Aggregation: For each parameter i, compute two statistics across all trajectories. The first is μ*, the mean of the absolute elementary effects |EE_i|. The second is σ, the standard deviation of the elementary effects EE_i.

- Interpretation: High μ* indicates strong overall influence on the output. High σ indicates interactions with other parameters or non-linear effects. Rank parameters by μ* for prioritization.

Protocol for Scenario-Based LCA Modeling

Objective: To compare the environmental performance of a biofuel system across distinct, coherent future states.

Materials:

- Base LCA inventory model.

- Scenario definitions with quantified assumptions (see Table 2).

- Background database for future scenarios (e.g., prospective versions of ecoinvent v3 derived from integrated assessment model scenarios, or IPCC-based energy system projections).

Procedure:

- Scenario Framing: Define 3-5 plausible, divergent, and relevant scenarios. Ensure each one is internally consistent (e.g., a high-tech scenario should not be paired with a grid that stays carbon-intensive).

- Inventory Adjustment: For each scenario, modify the foreground and background data in the LCA model. Technological: Adjust process efficiencies, yields, and material flows. Temporal: Substitute background datasets (e.g., electricity mix 2030) and update temporally dynamic characterization factors (e.g., GWP if radiative forcing changes).

- Impact Assessment: Calculate a full set of life cycle impact indicators (e.g., IPCC GWP, ReCiPe) for each scenario.

- Multi-Criteria Presentation: Present results in a comparative table or radar chart. Highlight trade-offs (e.g., reduced GHG but increased water use).

- Robustness Check: Identify which conclusions hold across all or most scenarios, indicating a robust finding versus a scenario-dependent one.
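The inventory-adjustment and robustness steps above can be sketched as parameter overrides applied to a single model. The example below encodes the quantified shifts from Table 2 as multipliers or overrides on a hypothetical baseline:

- a 25% conversion-yield increase;
- a 40% energy-demand reduction;
- 90% capture of process CO2;
- a 20% lower feedstock yield.

The following are assumptions:

- the baseline values;
- reading Table 2's "yield increase" as a conversion-yield change;
- applying capture to process-energy emissions;
- the 80 g CO2-eq/MJ comparator.

Unquantified shifts (grid decarbonization, CCS capex, irrigation demand) are left out.

```python
BASELINE = {                        # hypothetical baseline values
    "crop_yield_t_ha": 10.0,        # feedstock yield, t/ha
    "farm_kg_co2e_ha": 1500.0,      # cultivation emissions, kg CO2-eq/ha
    "fuel_mj_per_t": 6500.0,        # conversion yield, MJ fuel per t biomass
    "process_mj_per_mj": 0.35,      # process energy demand, MJ per MJ fuel
    "energy_kg_co2e_per_mj": 0.10,  # emission factor of process energy
    "capture_rate": 0.0,            # share of process-energy CO2 captured
}

SCENARIOS = {                       # shifts taken from Table 2
    "Baseline (Frozen Tech)": {},
    "Optimistic Tech Advance": {"fuel_mj_per_t": 1.25, "process_mj_per_mj": 0.60},
    "Stringent Carbon Policy": {"capture_rate": ("set", 0.90)},
    "High Climate Impact": {"crop_yield_t_ha": 0.80},
}


def apply_scenario(base, changes):
    """Return a copy of the baseline with scenario multipliers or overrides applied."""
    params = dict(base)
    for key, change in changes.items():
        if isinstance(change, tuple):      # ("set", value) replaces the baseline
            params[key] = change[1]
        else:                              # a plain number scales the baseline
            params[key] = base[key] * change
    return params


def ghg_g_per_mj(p):
    """Toy GHG intensity: cultivation share plus process-energy share."""
    farm = p["farm_kg_co2e_ha"] / (p["crop_yield_t_ha"] * p["fuel_mj_per_t"])
    process = p["process_mj_per_mj"] * p["energy_kg_co2e_per_mj"] * (1.0 - p["capture_rate"])
    return 1000.0 * (farm + process)


def run_scenarios(comparator=80.0):
    results = {name: ghg_g_per_mj(apply_scenario(BASELINE, ch))
               for name, ch in SCENARIOS.items()}
    robust = all(v < comparator for v in results.values())  # holds in every scenario?
    return results, robust


if __name__ == "__main__":
    results, robust = run_scenarios()
    for name, value in results.items():
        print(f"{name:25s} {value:6.1f} g CO2-eq/MJ")
    print("Below comparator in all scenarios:", robust)
```

Keeping every scenario as a small dictionary of shifts against one shared model helps internal consistency. Each assumption lives in one place and can be audited.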

Visualizations

Title: Workflow for Uncertainty Management in LCA

Title: Uncertainty Inputs Propagating Through an LCA Model

The Scientist's Toolkit

Table 3: Research Reagent Solutions for LCA Uncertainty Analysis

| Tool / Resource | Primary Function | Application in Biofuel LCA Uncertainty |

|---|---|---|

| openLCA with Parameter & Global SA modules | Open-source LCA software with built-in uncertainty parameterization and Monte Carlo simulation. | Defining input distributions and running probabilistic simulations for foreground inventory data. |

| SALib (Sensitivity Analysis Library in Python) | A comprehensive library for implementing global sensitivity analysis methods (Sobol, Morris, FAST). | Connecting to LCA models via API to perform variance-based sensitivity analysis on key parameters. |

| ecoinvent database (with scenario variants) | Life cycle inventory database offering future scenario datasets (e.g., "Renewable Energy Future 2050"). | Providing consistent, scenario-specific background data for electricity, fuels, and materials. |

| GREET Model (Argonne National Lab) | A dedicated suite for transportation fuel LCA with extensive built-in fuel pathways and parameters. | Rapid scenario testing for U.S.-specific biofuel pathways with pre-defined technological learning curves. |

| Crystal Ball / @RISK | Monte Carlo simulation add-ons for Microsoft Excel. | Performing sensitivity and scenario analysis on simplified, spreadsheet-based LCA models. |

| IPCC Emission Factor Database | Authoritative source for time-dependent emission factors for GHGs and other pollutants. | Updating temporal characterization factors and agricultural emission models within impact assessment. |

| R tidyverse & ggplot2 | Data manipulation and visualization packages in R. | Processing large volumes of Monte Carlo simulation output and creating publication-quality plots (e.g., tornado charts, CDFs). |

Fuzzy Logic and Interval Analysis for Handling Data Gaps and Epistemic Uncertainty

Life cycle assessment (LCA) of biofuels is critical for evaluating environmental impacts from feedstock cultivation to fuel combustion. However, these assessments are plagued by epistemic uncertainty—uncertainty stemming from incomplete knowledge—and frequent data gaps, particularly for novel biofuel pathways. Traditional probabilistic methods often fail when data is scarce or imprecise. This technical guide details the integration of fuzzy logic and interval analysis as robust mathematical frameworks to explicitly quantify and propagate these uncertainties, thereby improving the reliability of LCA decision-support for researchers and scientists in biofuels and related fields like pharmaceutical development, where similar data challenges exist.

Theoretical Foundations

Fuzzy Logic extends classical binary logic to handle the concept of partial truth. It uses membership functions (μ), ranging from 0 (non-member) to 1 (full member), to describe the degree to which a value belongs to a fuzzy set (e.g., "high greenhouse gas emission").

Interval Analysis operates on ranges of numbers ([a, b]), where the true value is unknown but bounded. It is ideal for representing uncertainty when only extreme values are known, without assuming a distribution.

Combined, they allow for fuzzy intervals, where the bounds themselves are "soft," providing a powerful tool for epistemic uncertainty.
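Interval arithmetic needs only a few rules. Bounds add for sums, and the extreme cross-products bound a product. The minimal sketch below implements these rules in plain Python and applies them to a field N₂O calculation. The 100-150 kg N/ha fertilizer range is a hypothetical example. The 0.003-0.03 emission-factor range is the IPCC Tier 1 range for direct N₂O-N. The last lines show the dependency problem: x - x evaluates to [-1, 1] rather than 0, because the two occurrences of x are treated as independent.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Interval:
    """Closed interval [lo, hi] with the basic interval-arithmetic rules."""
    lo: float
    hi: float

    def __post_init__(self):
        if self.lo > self.hi:
            raise ValueError("lower bound exceeds upper bound")

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __sub__(self, other):
        return Interval(self.lo - other.hi, self.hi - other.lo)

    def __mul__(self, other):
        products = (self.lo * other.lo, self.lo * other.hi,
                    self.hi * other.lo, self.hi * other.hi)
        return Interval(min(products), max(products))


def point(value):
    """Degenerate interval for an exactly known constant."""
    return Interval(value, value)


if __name__ == "__main__":
    fertilizer = Interval(100.0, 150.0)   # kg N/ha (hypothetical range)
    ef1 = Interval(0.003, 0.03)           # kg N2O-N per kg N (IPCC Tier 1 range)
    # N2O-N -> N2O (44/28), then GWP100 of N2O (273, IPCC AR6)
    gwp = fertilizer * ef1 * point(44 / 28) * point(273.0)
    print(f"Field N2O: [{gwp.lo:.0f}, {gwp.hi:.0f}] kg CO2-eq/ha")
    x = Interval(1.0, 2.0)
    print("x - x =", x - x)               # Interval(lo=-1.0, hi=1.0), not [0, 0]
```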

Methodological Protocols

Protocol for Fuzzy Interval Inventory Construction

Objective: To compile life cycle inventory data with quantified epistemic uncertainty.

- Data Identification: For each unit process (e.g., enzyme hydrolysis sugar yield), identify input and output flows.

- Uncertainty Categorization: Classify uncertainty as aleatory (inherent variability) or epistemic (data gap, model simplification). Proceed with epistemic.

- Interval Assignment: Where data is missing or expert-based, assign a conservative numerical interval ([low, high]).

- Fuzzification: For each interval, define a membership function. A triangular fuzzy number (TFN) is often used, defined by a lower bound, a most likely value, and an upper bound (a ≤ b ≤ c).

- Documentation: Record the rationale for each fuzzy interval in a structured database.

Protocol for Uncertainty Propagation in LCA Models

Objective: To propagate fuzzy-interval inventory data through an LCA model to obtain uncertain impact scores.

- Model Formulation: Define the computational model f (e.g., Impact = ∑ (Flow_i × CharacterizationFactor_i)).

- Alpha-Cut Strategy: Discretize the membership axis [0, 1] into levels (α-cuts, e.g., α = 0, 0.5, 1). For each α-cut, the fuzzy number becomes a regular (crisp) interval.

- Interval Computation: For each α-cut, compute the output interval using interval arithmetic. For monotonic functions, evaluate f at the appropriate interval bounds. For complex models, use a global optimizer (e.g., a genetic algorithm) to find the minimum and maximum of f over the input intervals.

- Result Reconstruction: Reassemble the output intervals at the different α-cuts into the resulting fuzzy-interval impact score.
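For a model that is monotonic in every input, the α-cut procedure above reduces to a simple rule: at each α level, evaluate f at the right combination of interval bounds. The sketch below assumes triangular fuzzy numbers (a, b, c) and a hypothetical impact function that rises with the N₂O emission factor and falls with hydrolysis yield. The emission-factor TFN spans the IPCC Tier 1 range around the 1% default. The yield TFN is illustrative. Non-monotonic models would need the global-optimization step instead of this vertex shortcut.

```python
def alpha_cut(tfn, alpha):
    """Crisp interval of a triangular fuzzy number (a, b, c) at membership level alpha."""
    a, b, c = tfn
    return a + alpha * (b - a), c - alpha * (c - b)


def propagate_monotonic(f, tfns, increasing, alphas=(0.0, 0.5, 1.0)):
    """Alpha-cut propagation for a model f that is monotonic in each input.

    increasing[i] is True if f rises with input i and False if it falls.
    Returns {alpha: (low, high)}; the nested intervals form the fuzzy output.
    """
    output = {}
    for alpha in alphas:
        cuts = [alpha_cut(t, alpha) for t in tfns]
        low_args = [lo if inc else hi for (lo, hi), inc in zip(cuts, increasing)]
        high_args = [hi if inc else lo for (lo, hi), inc in zip(cuts, increasing)]
        output[alpha] = (f(*low_args), f(*high_args))
    return output


def toy_impact(n2o_ef, hydrolysis_yield):
    """Hypothetical GHG score (g CO2-eq/MJ); not the model behind Table 2."""
    return 30.0 + 1000.0 * n2o_ef / hydrolysis_yield


if __name__ == "__main__":
    ef = (0.003, 0.01, 0.03)          # kg N2O-N/kg N (illustrative TFN)
    hydrolysis = (0.70, 0.81, 0.90)   # fraction of theoretical (illustrative TFN)
    result = propagate_monotonic(toy_impact, [ef, hydrolysis], [True, False])
    for alpha, (lo, hi) in result.items():
        print(f"alpha={alpha:.1f}: [{lo:.2f}, {hi:.2f}]")
```

The α = 1 cut collapses to a single most-plausible value. The α = 0 cut gives the full range of possibility, matching the roles of the two fuzzy rows in Table 2.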

Quantitative Data & Applications

Table 1: Fuzzy-Interval Data for a Hypothetical Cellulosic Ethanol LCA

| Inventory Flow | Unit | Lower (a) | Most Likely (b) | Upper (c) | Uncertainty Source |

|---|---|---|---|---|---|

| N2O Emission from Fertilizer | kg N2O-N/kg N | [0.003, 0.005] | [0.007, 0.010] | [0.012, 0.015] | IPCC Tier 1 range |

| Enzyme Hydrolysis Yield | % theoretical | [70, 75] | [80, 82] | [88, 90] | Pilot-scale data gap |

| Lignin Combustion Efficiency | % | [85, 87] | [90, 92] | [94, 95] | Expert estimate |

Table 2: Global Warming Impact (g CO2-eq/MJ) via Different Uncertainty Methods

| Uncertainty Method | Low Estimate | Central Estimate | High Estimate | Comments |

|---|---|---|---|---|

| Deterministic | -- | 45.2 | -- | No uncertainty considered |

| Monte Carlo (assumed normal) | 39.1 | 45.5 | 51.9 | Requires full distribution data |

| Pure Interval Analysis | 36.8 | -- | 53.5 | Only bounds, no likelihood |

| Fuzzy-Interval (α=0) | [35.0, 38.5] | -- | [54.2, 58.0] | Full range of possibility |

| Fuzzy-Interval (α=1) | -- | [44.8, 46.3] | -- | Core, most plausible values |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Analytical Tools for Uncertainty-Aware Biofuels LCA

| Item/Reagent | Function in Uncertainty Analysis |

|---|---|

| Fuzzy Logic Toolbox (MATLAB) or scikit-fuzzy (Python) | Provides libraries for defining fuzzy sets, rules, and performing operations. |

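| Interval Arithmetic Library (e.g., pyinterval) | Enables rigorous computation with intervals. Note that naive interval arithmetic can still overestimate output ranges when the same variable appears more than once (the dependency problem). |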

| Global Optimizer (e.g., NLopt, Genetic Algorithm) | Solves for min/max of complex LCA models over parameter intervals. |

| Uncertainty Visualization Package (e.g., ggplot2, matplotlib) | Creates clear plots of fuzzy results, alpha-cuts, and sensitivity indices. |

| Structured Expert Elicitation Protocol | Systematic method to convert expert knowledge into consistent fuzzy intervals. |

Visualized Workflows and Relationships

Fuzzy-Interval LCA Workflow

Alpha-Cut Decomposition of a Fuzzy Number

This technical guide, framed within a thesis on Life Cycle Assessment (LCA) under uncertainty for biofuels research, provides a comprehensive overview of integrating probabilistic uncertainty and variability into commercial and open-source LCA platforms. For researchers and development professionals, robust uncertainty analysis is critical to distinguish meaningful differences in biofuel pathways from statistical noise.

Foundational Concepts of Uncertainty in LCA

Uncertainty in LCA for biofuels stems from:

- Parameter Uncertainty: Inexact values for emission factors, yields, or energy content.

- Scenario & Model Uncertainty: Choices in allocation methods (e.g., mass, energy, economic) or system boundaries.

- Variability: Temporal, geographical, or technological differences in feedstock production and conversion processes.

The table below summarizes typical uncertainty ranges (as coefficient of variation, CV) for key parameters in biofuel LCAs, compiled from recent literature and databases.

Table 1: Typical Uncertainty Ranges for Key Biofuel LCA Parameters

| Parameter Category | Specific Example (Biofuel Context) | Typical Uncertainty (CV Range) | Primary Source of Uncertainty |

|---|---|---|---|

| Agricultural Inputs | N₂O emission factor from fertilizer application | 30% - 200% (Log-normal) | IPCC Tier 1 empirical models |

| Agricultural Inputs | Pesticide manufacturing inventory data | 10% - 30% (Log-normal) | Industry average data aggregation |

| Feedstock Yield | Lignocellulosic biomass (e.g., switchgrass) yield per hectare | 15% - 40% (Normal) | Spatial/temporal variability, climate models |

| Conversion Process | Biochemical conversion yield (e.g., sugar to ethanol) | 5% - 20% (Normal/Triangular) | Pilot vs. commercial scale-up uncertainty |

| Conversion Process | Thermochemical conversion efficiency (e.g., FT-diesel) | 10% - 25% (Triangular) | Technology readiness level (TRL) |

| Co-product Allocation | Market price of dried distillers grains (DDGS) | 20% - 50% (Normal) | Economic variability |

| Climate Metrics | Global Warming Potential (GWP) of CH₄ (AR6) | ±10% (Normal) | Scientific assessment uncertainty |

Core Methodology: Monte Carlo Simulation in LCA

The primary experimental protocol for integrating uncertainty is Monte Carlo Simulation (MCS).

Experimental Protocol for Probabilistic LCA

Aim: To propagate input uncertainties through the LCA model to produce a probability distribution for impact results.

Materials & Software:

- LCA platform (openLCA 2.x / SimaPro 9.4+).

- Life cycle inventory (LCI) database with uncertainty data (e.g., ecoinvent v3.x with `gepis` data).

- Defined product system (e.g., 1 MJ of bioethanol from corn stover).

- Probability distributions assigned to key parameters.

Procedure:

- Model Construction: Build a conventional LCA model for the biofuel pathway.

- Uncertainty Parameterization: a. For each critical flow/process, assign a probability distribution (Normal, Log-normal, Triangular, Uniform). b. Define parameters: mean (µ) and standard deviation (σ) for Normal; geometric mean and geometric standard deviation for Log-normal; minimum, mode, and maximum for Triangular. c. Example: Assign a Log-normal distribution to N₂O field emissions with a geometric mean of 5 g N₂O-N/kg N and a geometric standard deviation of 1.3.

- Correlation Definition: Identify and specify correlations between parameters (e.g., higher yield correlates with higher fertilizer input) using correlation coefficients (r) in the platform's uncertainty setup.

- Simulation Execution: a. Set the number of iterations (N ≥ 10,000 for stable results). b. Run MCS. In each iteration, the software randomly samples a value from each input distribution and solves the LCA matrix. c. Aggregate results per iteration for each impact category (e.g., GWP, FDP).

- Output Analysis: Analyze the output distributions (histograms, summary statistics) to determine median impact, confidence intervals (e.g., 95%), and contribution to variance.
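The parameterization, correlation, and execution steps above can be sketched outside any LCA platform using only Python's standard library. The example:

- draws a correlated pair (crop yield and fertilizer rate, correlation 0.6) using a two-variable Cholesky construction;
- draws the N₂O emission factor from a Log-normal distribution with a geometric mean of 5 g N₂O-N/kg N (0.5% of applied N) and a geometric standard deviation of 1.3;
- reports the median and a 95% interval of a per-tonne N₂O footprint.

All distribution parameters and the footprint formula are hypothetical. The factor 44/28 converts N₂O-N to N₂O, and 273 is the IPCC AR6 GWP100 of N₂O.

```python
import math
import random
import statistics


def pearson(xs, ys):
    """Sample Pearson correlation coefficient."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def draw_inputs(rng, rho=0.6):
    """One Monte Carlo iteration of (hypothetical) correlated and log-normal inputs."""
    z1 = rng.gauss(0.0, 1.0)
    z2 = rho * z1 + math.sqrt(1.0 - rho ** 2) * rng.gauss(0.0, 1.0)  # corr(z1, z2) = rho
    crop_yield = 10.0 + 1.5 * z1          # t/ha, Normal(10, 1.5)
    fertilizer = 150.0 + 20.0 * z2        # kg N/ha, Normal(150, 20)
    # Log-normal from geometric mean (GM) and geometric SD (GSD): ln X ~ N(ln GM, ln GSD)
    ef_g_per_kg_n = rng.lognormvariate(math.log(5.0), math.log(1.3))  # g N2O-N/kg N
    return crop_yield, fertilizer, ef_g_per_kg_n


def n2o_kg_co2e_per_t(crop_yield, fertilizer, ef_g_per_kg_n):
    """Field N2O per tonne of biomass, in kg CO2-eq/t."""
    n2o_n_kg_ha = fertilizer * ef_g_per_kg_n / 1000.0
    return n2o_n_kg_ha * 44.0 / 28.0 * 273.0 / crop_yield


def simulate(n=10_000, seed=0):
    rng = random.Random(seed)
    draws = [draw_inputs(rng) for _ in range(n)]
    results = [n2o_kg_co2e_per_t(*d) for d in draws]
    return draws, results


if __name__ == "__main__":
    draws, results = simulate()
    cuts = statistics.quantiles(results, n=40)
    print(f"median {statistics.median(results):.1f} kg CO2-eq/t, "
          f"95% interval [{cuts[0]:.1f}, {cuts[-1]:.1f}]")
    print("sampled yield-fertilizer correlation:",
          round(pearson([d[0] for d in draws], [d[1] for d in draws]), 3))
```

Checking the sampled correlation against the specified one is a cheap sanity test. It is worth running before trusting the correlation setup of a larger model.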

Workflow Diagram: Probabilistic LCA for Biofuels

Diagram 1: Workflow for probabilistic LCA of biofuels.

Platform-Specific Implementation

openLCA

- Native Uncertainty Support: Full integration via the `olca-ipc` or JSON-LD API. Uncertainty distributions are attached to exchanges and parameters (the `Uncertainty` type of the openLCA data schema).

- Workflow: Use the "Calculate with uncertainty" option. Input distributions are defined directly in process editors.

- Advanced Protocol: For complex spatial variability (e.g., regional soil carbon), use Python scripts (`olca-python-api`) to manipulate GeoJSON data and run batch MCS.

SimaPro

- Native Uncertainty Support: Parametric uncertainty analysis via the "Uncertainty" tab in process windows, using the predefined Pedigree matrix with basic uncertainty factors.

- Workflow: Run "Uncertainty/Monte Carlo analysis" from calculation settings. Results show contributions to variance.

- Advanced Protocol: Link with R or @RISK for custom distributions and advanced sensitivity analysis (global SA, Sobol indices).

Pathway Diagram: Uncertainty Propagation in Biofuel System

Diagram 2: Uncertainty propagation in a biofuel LCA model.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Uncertainty Integration in Biofuel LCA

| Tool / Reagent | Function in Uncertainty Analysis | Example in Biofuel Research |

|---|---|---|

| Probabilistic LCA Software | Core platform for building models and running MCS. | openLCA with native uncertainty; SimaPro Analyst. |

| LCI Databases with Uncertainty | Provides pre-quantified uncertainty data for background processes. | ecoinvent (Pedigree/Geometric SD); Agri-footprint. |

| Statistical Analysis Package | For post-processing results, fitting distributions, advanced SA. | R (tidyverse, sensitivity), Python (SciPy, statsmodels). |

| Pedigree Matrix & Basic Uncertainty Factors | A semi-quantitative system to estimate data quality uncertainties. | Applied to primary data from biofuel pilot plants. |

| Global Sensitivity Analysis (GSA) Tool | Quantifies contribution of each input to output variance. | Sobol indices calculation in R or SimaPro/R link. |

| Data Distribution Fitting Software | Converts raw experimental data (e.g., yield trials) into input distributions. | @RISK, BestFit, or fitdistrplus in R. |

| Uncertainty Documentation Template | Ensures consistent reporting (ISO 14044; ISO/TS 14071 for critical review). | Template for documenting data pedigree, distributions, and correlations. |

Advanced Experimental Protocol: Global Sensitivity Analysis (GSA)

Aim: To identify which uncertain input parameters contribute most to the variance in the final impact score.

Procedure (using Sobol Indices via R/openLCA):

- Generate a sample matrix of input parameters using a quasi-random sequence (Sobol sequence).

- Execute the LCA model for each sample row (requires automated coupling, e.g., `olca-ipc` for openLCA).

- Compute first-order (S₁) and total-order (Sₜ) Sobol indices for each input parameter using the `sensitivity` package in R.

- Interpretation: A high Sₜ indicates an influential parameter. Reducing its uncertainty offers the greatest leverage for improving output certainty.
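The Sobol workflow above can be prototyped on a toy function before coupling it to an LCA platform. The sketch below makes several assumptions:

- it uses plain pseudo-random sampling rather than a Sobol quasi-random sequence;
- it uses the Saltelli (2010) first-order estimator and the Jansen total-order estimator;
- it assumes all inputs are already scaled to [0, 1].

In practice, SALib's `sobol` analyzer or the R `sensitivity` package would be used instead. The additive test model is hypothetical. It was chosen because its exact indices are known: S = 0.8, 0.2 and 0.

```python
import random
import statistics


def sobol_indices(model, k, n=10_000, seed=0):
    """Monte Carlo estimates of first-order (S1) and total-order (ST) Sobol indices.

    model : callable on a list of k inputs, each assumed U(0, 1)
    Cost  : n * (k + 2) model evaluations.
    """
    rng = random.Random(seed)
    a_rows = [[rng.random() for _ in range(k)] for _ in range(n)]
    b_rows = [[rng.random() for _ in range(k)] for _ in range(n)]
    f_a = [model(a) for a in a_rows]
    f_b = [model(b) for b in b_rows]
    variance = statistics.pvariance(f_a + f_b)
    first, total = [], []
    for i in range(k):
        # Matrix A with column i taken from B
        f_ab = [model(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(a_rows, b_rows)]
        first.append(sum(yb * (yab - ya) for ya, yb, yab in zip(f_a, f_b, f_ab)) / n / variance)
        total.append(sum((ya - yab) ** 2 for ya, yab in zip(f_a, f_ab)) / (2 * n) / variance)
    return first, total


def toy_model(u):
    """Hypothetical additive score: inputs 0..2 stand in for LUC, N2O and transport."""
    return 4.0 * u[0] + 2.0 * u[1] + 0.0 * u[2]


if __name__ == "__main__":
    s1, st = sobol_indices(toy_model, 3)
    for name, a, b in zip(["LUC", "N2O", "transport"], s1, st):
        print(f"{name:9s} S1={a:5.2f} ST={b:5.2f}")
```

For an additive model, S₁ and Sₜ coincide. A gap between them in a real LCA model signals interactions.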

Overcoming Common Pitfalls and Refining Uncertainty Analysis in Biofuel LCAs

Life Cycle Assessment (LCA) for biofuels is inherently plagued by data scarcity, particularly for novel feedstocks, emerging conversion technologies, and cradle-to-grave environmental impact inventories. This uncertainty compromises the reliability of sustainability certifications and policy decisions. This whitepaper provides a technical guide for researchers to employ scientifically robust strategies—proxy data and informed assumptions—to navigate data gaps while maintaining methodological rigor within biofuel LCA under uncertainty.

Quantitative Data on Common Data Gaps in Biofuel LCA

The following table summarizes key areas of data scarcity identified in recent literature and the typical proxies used.

Table 1: Common Data Gaps and Proxy Strategies in Biofuel LCA

| Data Gap Category | Specific Example | Common Proxy/Assumption | Key Uncertainty Introduced |

|---|---|---|---|

| Agricultural Inputs | Fertilizer application rates for novel cover-crop biomass. | Rates from a geographically similar region for a functionally similar crop (e.g., switchgrass proxies for miscanthus). | ±40% variance in eutrophication potential (N, P runoff). |

| Conversion Process | Energy/material balances for pilot-scale hydrothermal liquefaction (HTL). | Data from lab-scale experiments, scaled using engineering principles (e.g., Sherwood scaling laws). | ±25% variance in energy input/output estimates. |

| Co-product Allocation | Market value of novel biochar co-product from pyrolysis. | Economic allocation based on proxy product (e.g., activated carbon) or system expansion using displaced product. | Can shift >30% of total GHG burden between products. |

| Land Use Change (LUC) | Indirect LUC (iLUC) effects for a new biofuel feedstock. | Computable General Equilibrium (CGE) model outputs using aggregated agricultural sector data. | High model dependency; uncertainties can exceed 100% of biofuel's carbon footprint. |

| End-of-Life | N₂O emissions from soil application of digestate in new regions. | IPCC Tier 1 emission factors, defaulted by climate zone and nitrogen content. | ±60% variance due to soil type, climate, and management practice differences. |

Methodological Framework for Proxy Selection and Validation

Protocol for Systematic Proxy Data Identification

- Define the Data Need: Precisely specify the required parameter (e.g., yield of enzyme "X" per gram of pretreated feedstock "Y" at pilot scale).

- Conduct a Similarity Analysis: Identify candidate proxies by scoring similarity across multiple dimensions:

- Technological/ Biological Similarity: (e.g., same enzyme class, similar metabolic pathway).

- Geographic/ Temporal Similarity: (e.g., same soil-climate zone, data from within last 5 years).

- Scale Similarity: (e.g., pilot-scale data for a different but analogous process).

- Apply Adjustment Factors: Use stoichiometric, thermodynamic, or allometric scaling to adjust proxy values. Example: Scaling fermentation yields based on theoretical maximum carbon conversion efficiency difference between substrates.

- Quantify & Document Uncertainty: Apply pedigree matrices (e.g., the ecoinvent pedigree approach) to assign uncertainty ranges to the proxied data point, then propagate them with Monte Carlo analysis.
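Pedigree-based uncertainty assignment can be sketched with the combination rule of the ecoinvent v2 approach. The basic uncertainty factor and the pedigree uncertainty factors are each expressed as a 95% factor (the squared geometric standard deviation). They are combined in log space. The factor values below are hypothetical placeholders, not the published pedigree tables. Real values should come from the ecoinvent documentation for the database version in use.

```python
import math


def combined_sdg95(basic_factor, pedigree_factors):
    """Combine uncertainty factors: SDg95 = exp(sqrt(sum(ln(U_i) ** 2)))."""
    factors = [basic_factor, *pedigree_factors]
    if any(u < 1.0 for u in factors):
        raise ValueError("uncertainty factors must be >= 1")
    return math.exp(math.sqrt(sum(math.log(u) ** 2 for u in factors)))


def interval_95(median, sdg95):
    """Approximate 95% range of a log-normal value with the given SDg95."""
    return median / sdg95, median * sdg95


if __name__ == "__main__":
    # Hypothetical proxied enzyme loading (mg enzyme/g cellulose). Factors: basic,
    # then one per pedigree indicator (e.g., reliability, completeness, temporal,
    # geographical, and further technological correlation).
    sdg95 = combined_sdg95(1.05, [1.10, 1.00, 1.10, 1.00, 1.20])
    low, high = interval_95(10.0, sdg95)
    print(f"SDg95 = {sdg95:.3f}; 95% range {low:.2f}-{high:.2f} mg/g")
```

A proxy that is technologically dissimilar to its source gets a larger technological-correlation factor. That factor directly widens the resulting range.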

Protocol for Formulating and Testing Informed Assumptions

- Assumption Statement: Formulate a clear, testable statement (e.g., "The nutrient recycling efficiency for algal biofilm cultivation is assumed to be 75%, analogous to closed hydroponic systems").

- Establish Boundary Conditions: Define the plausible range (minimum, maximum) based on fundamental principles (conservation of mass/energy) or extreme case literature.

- Design a Sensitivity Analysis: Model the impact of the assumption across its plausible range on the final LCA results (e.g., Global Warming Potential).

- Iterate: If the assumption is a key driver of results (>10% variance in primary outcome), prioritize it for targeted primary data collection or seek a higher-fidelity proxy.
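The boundary-condition and iteration steps above amount to a one-at-a-time sweep of the assumption across its plausible range. The sketch below assumes a hypothetical linear GWP model for algal fuel and a hypothetical 50-90% plausible range around the 75% recycling assumption. It flags the assumption as a key driver when the output swing across that range exceeds 10% of the baseline result. This is one simple reading of the >10% criterion.

```python
def toy_gwp(nutrient_recycling):
    """Hypothetical GWP (g CO2-eq/MJ): fresh nutrients carry the emission burden."""
    return 35.0 + 40.0 * (1.0 - nutrient_recycling)


def sweep_assumption(model, baseline, low, high, steps=9, threshold=0.10):
    """Evaluate model across [low, high]; return (baseline result, outputs, swing, flag)."""
    base = model(baseline)
    grid = [low + i * (high - low) / (steps - 1) for i in range(steps)]
    outputs = [model(v) for v in grid]
    swing = (max(outputs) - min(outputs)) / abs(base)  # relative to the baseline result
    return base, outputs, swing, swing > threshold


if __name__ == "__main__":
    base, outputs, swing, key_driver = sweep_assumption(toy_gwp, 0.75, 0.50, 0.90)
    print(f"baseline {base:.1f} g/MJ, swing {swing:.1%}, key driver: {key_driver}")
```

Sweeping a grid rather than only the two bounds keeps the check valid for models that are not monotonic in the assumption.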

Visualizing Strategies and Workflows

Diagram 1: Decision Workflow for Proxy vs. Assumption

Diagram 2: Uncertainty Integration in Biofuel LCA

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Proxy Data Generation & Validation in Biofuel Research

| Tool/Reagent | Function in Tackling Data Scarcity | Example Application in Biofuels |

|---|---|---|

| 15N/13C Isotopic Tracers | Enables precise tracking of nutrient fate and carbon flow in novel biological systems, providing primary data to validate proxy assumptions. | Quantifying nitrogen uptake efficiency in algae or energy grasses to validate proxy data from conventional crops. |

| Lab-Scale Fermentation/Bioreactor Arrays | High-throughput screening of process parameters (pH, temp, yield) for novel feedstocks, generating primary data to inform scaling assumptions. | Establishing yield curves for engineered yeasts on biomass hydrolysates to proxy for pilot-scale performance. |

| Process Simulation Software (Aspen Plus, SuperPro) | Allows for rigorous thermodynamic and mass/energy balance modeling based on limited experimental data, creating in-silico proxy data. | Simulating entire biorefinery flowsheets to estimate energy demands when only unit operation data is available. |

| LCA Database Subscriptions (Ecoinvent, GaBi) | Provides extensively reviewed background data for common materials and energy, serving as a benchmark and proxy source for foreground systems. | Using proxy data for chemical inputs (e.g., solvents, catalysts) from database processes with documented uncertainty. |

| Pedigree Matrix & Uncertainty Factor Libraries | Standardized system for qualitatively scoring data quality (reliability, completeness) and deriving quantitative uncertainty distributions. | Assigning a wider uncertainty range to a proxied enzyme loading value based on its technological dissimilarity from the source. |

| Global Sensitivity Analysis (GSA) Software (SALib, SimaPro) | Quantifies the contribution of each uncertain input (including proxies) to variance in final LCA results, identifying critical data gaps. | Determining if a proxied land use change emission factor drives >80% of variance in the Climate Change impact category. |

Life cycle assessment (LCA) for biofuels is fundamentally a high-dimensional, multi-parameter modeling challenge fraught with epistemic and aleatoric uncertainty. Complex machine learning models are increasingly employed to integrate heterogeneous data—from crop yield and land-use change to conversion process efficiency and tailpipe emissions—to predict sustainability metrics like Greenhouse Gas (GHG) intensity. However, these models often become "black boxes," obscuring the relative contribution of input variables (e.g., fertilizer input, co-product allocation, choice of functional unit) to the final output. This opacity undermines scientific credibility, hinders model validation, and prevents the identification of key leverage points for improving biofuel sustainability. This guide provides a technical framework for integrating transparency and interpretability directly into the modeling workflow of LCA under uncertainty, ensuring that predictions are both accurate and accountable.

Core Interpretability Techniques: A Technical Guide

Post-hoc Interpretability for Pre-trained Models

When deploying complex ensembles (e.g., Gradient Boosting Machines, Deep Neural Networks) on existing LCA inventories, post-hoc techniques explain specific predictions.

Methodology: SHAP (SHapley Additive exPlanations)

SHAP values, based on cooperative game theory, attribute the difference between a model's prediction for a specific data point and the average model prediction to each input feature.

- Input: Trained model f, background dataset X_background (e.g., 100 random samples from the LCA inventory database), and the instance to explain, x_instance.

- Compute SHAP Values: Use `KernelExplainer` or, for tree-based models, `TreeExplainer` from the `shap` Python library. The explainer estimates the Shapley value of each feature:

$$\phi_i(f, x) = \sum_{S \subseteq N \setminus \{i\}} \frac{|S|!\,(|N| - |S| - 1)!}{|N|!}\left[f(S \cup \{i\}) - f(S)\right]$$

where φ_i is the SHAP value for feature i, N is the set of all features, S is a subset of features that excludes i, and f(S) is the model's expected prediction when only the features in S are known.

- Interpretation: A positive SHAP value indicates that the feature pushes this instance's prediction (e.g., toward higher GHG intensity) above the average prediction over the background dataset.
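When there are only a few features, the Shapley formula above can be evaluated exactly. The sketch below is a brute-force illustration, not the `shap` library's algorithm. It defines f(S) as the average model output when the features in S are fixed to the instance values and the remaining features are taken from each background row (an interventional convention). It then enumerates every subset. The cost grows as 2^|N|, which is why explainers such as `KernelExplainer` approximate this quantity instead. The linear model and data are hypothetical.

```python
from itertools import combinations
from math import factorial


def shapley_values(f, x, background):
    """Exact Shapley values of f at instance x relative to a background dataset."""
    p = len(x)

    def value(subset):
        # Average prediction with features in `subset` fixed to x, others from background.
        total = 0.0
        for b in background:
            total += f([x[j] if j in subset else b[j] for j in range(p)])
        return total / len(background)

    phi = [0.0] * p
    for i in range(p):
        others = [j for j in range(p) if j != i]
        for size in range(p):
            weight = factorial(size) * factorial(p - size - 1) / factorial(p)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_ghg_model(z):
    """Hypothetical surrogate: fertilizer N, transport distance, co-product credit."""
    return 2.0 * z[0] + 3.0 * z[1] - 1.0 * z[2]


if __name__ == "__main__":
    background = [[0.0, 0.0, 0.0], [2.0, 2.0, 2.0]]
    instance = [3.0, 1.0, 0.0]
    phi = shapley_values(toy_ghg_model, instance, background)
    base = sum(toy_ghg_model(b) for b in background) / len(background)
    print("SHAP values:", phi)
    print("sum + base =", sum(phi) + base, "prediction =", toy_ghg_model(instance))
```

The values sum to the prediction minus the background average (the efficiency property). For a linear model, each value equals the weight times the feature's deviation from the background mean.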

Methodology: LIME (Local Interpretable Model-agnostic Explanations)

LIME approximates the complex model locally with an interpretable surrogate model (e.g., linear regression).

- Perturbation: Generate a perturbed dataset around the instance x_instance.

- Weighting: Weight the new samples by their proximity to x_instance, e.g., with an exponential kernel on their distance to x_instance. For text data, the distance is typically cosine distance.

- Surrogate Model: Train a sparse linear model g on the weighted, perturbed dataset, using the predictions of the black-box model f as the target.

- Interpretation: The coefficients of g explain the local behavior of f around x_instance.
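A LIME-style explanation can be sketched as a weighted linear fit on perturbed samples. The version below is a simplification based on these assumptions:

- Gaussian perturbations in the original feature space;
- an exponential proximity kernel;
- an ordinary (not sparse) weighted least-squares surrogate, solved with a small Gaussian-elimination helper.

The `lime` package also discretizes or standardizes features and selects a sparse subset of them. The black-box function is hypothetical. It was chosen so that the true local slopes at (2, 1) are known: 4 and 3.

```python
import math
import random


def solve(matrix, rhs):
    """Solve a small dense linear system by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    m = [row[:] + [r] for row, r in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def local_surrogate(f, x0, scale=0.3, n=2000, width=0.75, seed=0):
    """Weighted linear surrogate of black-box f around x0; returns per-feature slopes."""
    rng = random.Random(seed)
    design, targets, weights = [], [], []
    for _ in range(n):
        z = [xi + rng.gauss(0.0, scale) for xi in x0]      # perturbation
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x0))
        design.append([1.0] + z)                             # intercept column
        targets.append(f(z))                                 # black-box predictions
        weights.append(math.exp(-dist2 / width ** 2))       # proximity kernel
    k = len(x0) + 1
    # Normal equations of weighted least squares: (X^T W X) beta = X^T W y
    xtwx = [[sum(w * row[i] * row[j] for row, w in zip(design, weights)) for j in range(k)]
            for i in range(k)]
    xtwy = [sum(w * row[i] * y for row, w, y in zip(design, weights, targets))
            for i in range(k)]
    return solve(xtwx, xtwy)[1:]


def black_box(z):
    """Hypothetical non-linear GHG surrogate with known local slopes."""
    return z[0] ** 2 + 3.0 * z[1]


if __name__ == "__main__":
    print("local slopes at (2, 1):", local_surrogate(black_box, [2.0, 1.0]))
```

Shrinking `scale` or `width` makes the explanation more local. Widening them averages over more of the model's curvature.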

Intrinsically Interpretable Model Architectures

For new modeling efforts, prefer architectures that offer built-in transparency.

Methodology: Generalized Additive Models (GAMs)

GAMs model the target as a sum of univariate smooth functions of each feature: g(E[y]) = β_0 + f_1(x_1) + f_2(x_2) + ... + f_p(x_p). This maintains nonlinear flexibility while preserving additivity and feature-wise interpretability.

- Implementation: Use the `pyGAM` library. Specify the link function g (e.g., logit for classification, identity for regression) and smoothness constraints for each f_i (e.g., splines).

- Fitting: Fit using backfitting or penalized likelihood.

- Visualization: Plot each f_i to see the marginal effect of a feature (e.g., soil N2O emission factor) on the predicted GHG outcome.
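Backfitting, one of the fitting options named above, can be illustrated without pyGAM. The sketch below assumes:

- an identity link;
- a simple running-mean smoother in place of penalized splines;
- a noiseless hypothetical dataset with known components (x0² and 2·x1).

Each fitted component f_i is centered so that the intercept stays identifiable. Plotting f_i against x_i gives the marginal-effect view described above.

```python
import random
import statistics


def running_mean_smoother(x, r, half_width):
    """Average r over the 2*half_width + 1 nearest neighbours in rank order of x."""
    n = len(x)
    order = sorted(range(n), key=lambda i: x[i])
    fitted = [0.0] * n
    for pos, i in enumerate(order):
        lo, hi = max(0, pos - half_width), min(n, pos + half_width + 1)
        window = [r[order[q]] for q in range(lo, hi)]
        fitted[i] = sum(window) / len(window)
    return fitted


def backfit_gam(X, y, half_width=15, n_iter=20):
    """Fit y ~ alpha + sum_j f_j(x_j) (identity link) by backfitting."""
    n, p = len(y), len(X[0])
    cols = [[row[j] for row in X] for j in range(p)]
    alpha = statistics.fmean(y)
    f = [[0.0] * n for _ in range(p)]
    for _ in range(n_iter):
        for j in range(p):
            # Partial residual: remove the intercept and all other components.
            partial = [y[i] - alpha - sum(f[k][i] for k in range(p) if k != j)
                       for i in range(n)]
            fj = running_mean_smoother(cols[j], partial, half_width)
            centre = statistics.fmean(fj)
            f[j] = [v - centre for v in fj]  # center for identifiability
    return alpha, f


def make_data(n=400, seed=0):
    rng = random.Random(seed)
    X = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(n)]
    y = [row[0] ** 2 + 2.0 * row[1] for row in X]  # hypothetical additive truth
    return X, y


if __name__ == "__main__":
    X, y = make_data()
    alpha, f = backfit_gam(X, y)
    i = min(range(len(X)), key=lambda k: abs(X[k][0] - 0.5))
    print(f"x0={X[i][0]:.3f}: fitted f0={f[0][i]:.3f}")
```

The running mean is biased near the edges of each input's range. Penalized splines, as used in pyGAM, behave better there.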

Methodology: Attention Mechanisms in Neural Networks In sequence or graph-based LCA models (e.g., for process-chain analysis), attention layers can be designed to reveal which parts of the input sequence (e.g., which life cycle stage) the model "pays attention to" when making a prediction.

- Architecture: Incorporate a standard scaled dot-product attention layer.

- Interpretation: Extract the attention weight matrix A, where A_ij indicates the relevance of input element j to the output for element i.
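In scaled dot-product attention the weight matrix is A = softmax(QKᵀ / √d_k), with the softmax taken row by row over the keys. The sketch below computes it for four life cycle stages; the two-dimensional query and key vectors are hypothetical placeholders for what a trained model would obtain from learned projections of its stage embeddings.

```python
import math


def softmax(row):
    """Row-wise softmax; subtracting the max keeps exp() numerically stable."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_weights(queries, keys):
    """A[i][j] = softmax_j(q_i . k_j / sqrt(d_k))."""
    scale = math.sqrt(len(keys[0]))
    return [softmax([sum(qa * ka for qa, ka in zip(q, k)) / scale for k in keys]) for q in queries]


stages = ["cultivation", "transport", "conversion", "distribution"]
# Hypothetical 2-dimensional query/key vectors, one per stage
Q = [[1.0, 0.2], [0.1, 0.3], [0.9, 0.8], [0.0, 0.1]]
K = [[1.2, 0.1], [0.0, 0.2], [0.8, 1.0], [0.1, 0.0]]
A = attention_weights(Q, K)
for stage, row in zip(stages, A):
    print(f"{stage:>12}:", [round(a, 2) for a in row])
```

Each row of A sums to 1, so row i reads as how the output for stage i distributes its attention across all stages.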

Uncertainty Quantification (UQ) as an Interpretability Tool

UQ directly addresses the "uncertainty" context of biofuels LCA, telling us not just the prediction but also how confident the model is.

Methodology: Conformal Prediction

This framework provides prediction intervals with guaranteed marginal coverage under minimal assumptions, chiefly that the calibration data and future data are exchangeable.

- Split Data: Divide data into a proper training set I_1 and a calibration set I_2.

- Fit Model: Train model f on I_1.

- Compute Nonconformity Scores: For each i in I_2, compute the score s_i = |y_i - f(x_i)| (for regression).

- Determine Quantile: With n = |I_2|, take q as the ⌈(n+1)(1-α)⌉-th smallest of the scores {s_i : i ∈ I_2}, i.e., their (1-α) quantile with a finite-sample correction.

- Form Prediction Interval: For a new sample x_new, output the interval C(x_new) = [f(x_new) - q, f(x_new) + q]. This interval guarantees P(y_new ∈ C(x_new)) ≥ 1-α marginally.
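The split-conformal steps can be sketched in plain Python as follows. The model is a hypothetical least-squares line fitted on I_1, the data are synthetic, and q uses the finite-sample rank ⌈(n+1)(1-α)⌉ on the n calibration scores, which is what the marginal coverage guarantee requires under exchangeability.

```python
import math
import random


def fit_line(xs, ys):
    """Ordinary least-squares line y = a + b*x, standing in for any regression model f."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    a = my - b * mx
    return lambda x: a + b * x


def conformal_quantile(scores, alpha):
    """Return the ceil((n+1)(1-alpha))-th smallest score (finite-sample corrected quantile)."""
    n = len(scores)
    rank = math.ceil((n + 1) * (1 - alpha))
    if rank > n:
        return math.inf  # too few calibration points for this alpha: the interval is unbounded
    return sorted(scores)[rank - 1]


def simulate(rng, n):
    """Hypothetical data: GHG intensity (g CO2e/MJ) versus conversion yield (%)."""
    xs = [rng.uniform(30, 55) for _ in range(n)]
    return xs, [110 - 1.2 * x + rng.gauss(0, 4) for x in xs]


rng = random.Random(42)
x_train, y_train = simulate(rng, 600)   # proper training set I_1
x_cal, y_cal = simulate(rng, 200)       # calibration set I_2
x_test, y_test = simulate(rng, 2000)    # new samples, used only to check coverage

f = fit_line(x_train, y_train)
scores = [abs(y - f(x)) for x, y in zip(x_cal, y_cal)]   # nonconformity scores s_i
alpha = 0.1
q = conformal_quantile(scores, alpha)

covered = sum(f(x) - q <= y <= f(x) + q for x, y in zip(x_test, y_test))
print(f"q = {q:.2f}, empirical coverage = {covered / len(x_test):.3f} (target >= {1 - alpha:.2f})")
```

Swapping fit_line for any other regressor (for example the GAM used later in the protocol) leaves the calibration and interval logic unchanged, which is what makes the method model-agnostic.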

Methodology: Bayesian Neural Networks (BNNs)

BNNs treat model weights as probability distributions, naturally capturing epistemic uncertainty.

- Prior: Place a prior distribution p(w) over the weights (e.g., Gaussian).

- Inference: Compute the posterior distribution p(w | D) given data D. Because this posterior is intractable for realistic networks, it is approximated via variational inference or Markov chain Monte Carlo (MCMC).

- Prediction: The predictive distribution is p(y_new | x_new, D) = ∫ p(y_new | x_new, w) p(w | D) dw, yielding predictive means and credible intervals.
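In practice the predictive integral is approximated by averaging over S posterior samples, p(y_new | x_new, D) ≈ (1/S) Σ_s p(y_new | x_new, w_s). The sketch below shows only that Monte Carlo step. As a simplifying assumption it replaces the neural network with a one-weight Bayesian linear model whose Gaussian posterior is known in closed form, so posterior draws can be taken directly instead of via variational inference or MCMC; the data, noise level and prior are hypothetical.

```python
import random
import statistics

# Hypothetical data: y = w * x + Gaussian noise with known standard deviation sigma
sigma, prior_sd = 2.0, 10.0
rng = random.Random(7)
xs = [rng.uniform(0.0, 5.0) for _ in range(30)]
ys = [3.0 * x + rng.gauss(0.0, sigma) for x in xs]

# Exact Gaussian posterior p(w | D) under the prior w ~ N(0, prior_sd^2) (conjugate case)
post_prec = 1.0 / prior_sd ** 2 + sum(x * x for x in xs) / sigma ** 2
post_var = 1.0 / post_prec
post_mean = post_var * sum(x * y for x, y in zip(xs, ys)) / sigma ** 2

# Monte Carlo estimate of p(y_new | x_new, D) = ∫ p(y_new | x_new, w) p(w | D) dw
x_new = 4.0
draws = []
for _ in range(20_000):
    w = rng.gauss(post_mean, post_var ** 0.5)   # w_s ~ p(w | D)
    draws.append(rng.gauss(w * x_new, sigma))   # y_s ~ p(y_new | x_new, w_s)

draws.sort()
mean = statistics.fmean(draws)
lo, hi = draws[int(0.05 * len(draws))], draws[int(0.95 * len(draws)) - 1]
print(f"predictive mean {mean:.2f}, 90% predictive interval [{lo:.2f}, {hi:.2f}]")
# A closed form exists here only because this toy posterior is Gaussian
print(f"analytic mean {post_mean * x_new:.2f}, sd {(x_new ** 2 * post_var + sigma ** 2) ** 0.5:.2f}")
```

The predictive spread combines the posterior (epistemic) term x_new² · Var(w | D) with the observation noise σ²; only the first term shrinks as more data are added.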

Table 1: Comparison of Interpretability Techniques for Biofuels LCA Modeling

| Technique | Model Agnostic? | Provides Global/Local Explanation | Handles Uncertainty? | Computational Cost | Key Output for LCA |
|---|---|---|---|---|---|
| SHAP | Yes | Both (via global aggregations) | No (but has variance estimates) | Medium-High | Feature attribution values for any LCI datum |
| LIME | Yes | Local Only | No | Low-Medium | Local linear approximation for a specific fuel pathway |
| GAMs | No (model-specific) | Global (by design) | Can be extended | Low | Marginal effect plots of each input parameter |
| Attention Weights | No (model-specific) | Both | No | Low (to extract) | Relevance scores for life cycle stages or inputs |
| Conformal Prediction | Yes | N/A (provides intervals) | Yes (for total uncertainty) | Low | Valid prediction intervals for GHG outcomes |
| Bayesian Neural Nets | No (model-specific) | Global (via posterior) | Yes (epistemic) | Very High | Full predictive posterior distribution |

Table 2: Illustrative Impact of Key Variables on Biofuel GHG Predictions (SHAP Analysis from a Simulated Model)

| Input Feature (Unit) | Range in Dataset | Mean Absolute SHAP Value (g CO2e/MJ) | Typical Direction of Effect |
|---|---|---|---|
| Soil Carbon Stock Change (kg C/ha/yr) | -500 to 1000 | 8.2 | Negative change (loss) increases GHG |
| N2O Emission Factor (kg N2O-N/kg N) | 0.005 to 0.025 | 6.5 | Positive correlation with GHG |
| Biomass-to-Fuel Conversion Yield (%) | 30 to 55 | 10.1 | Negative correlation with GHG |
| Co-product Allocation Mass Fraction | 0.1 to 0.4 | 5.3 | Higher allocation to co-product reduces main product GHG |
| Energy Input for Cultivation (MJ/ha) | 5000 to 15000 | 3.8 | Positive correlation with GHG |

Experimental Protocol for an Interpretable LCA Modeling Workflow

Protocol: Developing an Interpretable, Uncertainty-Aware Model for Biofuel GHG Intensity Prediction

Objective: To create a predictive model for GHG intensity that clearly identifies key drivers and provides reliable uncertainty intervals.

Materials & Data:

- Life Cycle Inventory (LCI) Database: e.g., case data from Argonne National Laboratory's GREET model, or proprietary experimental data.

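- Feature Set: Should include technical parameters (conversion rate, energy consumption), spatial variables (soil type, rainfall), management variables (fertilization rate), and system choices (allocation method, time horizon).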

- Target Variable: GHG intensity (g CO2e/MJ).

Procedure:

Phase 1: Data Preprocessing & Partitioning

- Handle missing data using multiple imputation by chained equations (MICE).

- Partition data into Training (60%), Calibration (20%), and Test (20%) sets. Ensure stratified partitioning if dealing with different biofuel types.
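A stratified 60/20/20 split can be sketched with the standard library: shuffle within each biofuel type, then cut each group at the same fractions so every partition keeps the original mix of types. The record layout, type labels and GHG values below are hypothetical.

```python
import random
from collections import defaultdict


def stratified_split(records, key, fractions=(0.6, 0.2, 0.2), seed=0):
    """Split records into train/calibration/test while preserving each stratum's share."""
    by_stratum = defaultdict(list)
    for rec in records:
        by_stratum[key(rec)].append(rec)
    rng = random.Random(seed)
    train, calib, test = [], [], []
    for group in by_stratum.values():
        rng.shuffle(group)
        n_train = round(fractions[0] * len(group))
        n_calib = round(fractions[1] * len(group))
        train += group[:n_train]
        calib += group[n_train:n_train + n_calib]
        test += group[n_train + n_calib:]  # the test set absorbs any rounding remainder
    return train, calib, test


# Hypothetical records: (biofuel type, GHG intensity in g CO2e/MJ)
data = [("ethanol", 55.0 + i % 7) for i in range(50)] + [("biodiesel", 40.0 + i % 5) for i in range(30)]
train, calib, test = stratified_split(data, key=lambda rec: rec[0])
for name, part in [("train", train), ("calibration", calib), ("test", test)]:
    counts = {t: sum(1 for rec in part if rec[0] == t) for t in ("ethanol", "biodiesel")}
    print(name, len(part), counts)
```

One design choice worth considering: if the split is made before imputation, the MICE model can be fitted on the training partition only, which keeps calibration and test information from leaking into the imputed values.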

Phase 2: Model Training with Intrinsic Interpretability

- Train a Generalized Additive Model (GAM) with cubic spline terms, a Gaussian error distribution, and an identity link function.

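- Penalize the roughness of each spline term (e.g., a second-derivative or difference penalty on the spline coefficients) and tune the penalty strength, for example by generalized cross-validation (GCV), to keep the fitted functions smooth and prevent overfitting.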

- Plot the partial dependence functions for each feature to visualize marginal effects.

Phase 3: Post-hoc Explanation of a Complementary Complex Model

- Train a high-performing XGBoost Regressor on the same training set.

- Using the SHAP library (TreeExplainer):

a. Compute SHAP values for all instances in the test set.

b. Generate a summary plot (beeswarm plot) to rank global feature importance.

c. Generate dependence plots for top features to reveal interactions (e.g., between N-fertilizer rate and soil N2O emission factor).

Phase 4: Uncertainty Quantification

- Apply Conformal Prediction to the GAM model:

a. Use the held-out calibration set to compute nonconformity scores (absolute residuals).

b. For a desired coverage level of 90% (α = 0.1), calculate q as the ⌈(n+1)(1-α)⌉-th smallest calibration residual, where n is the number of calibration samples.

c. For predictions on the test set, output the point prediction ± q as the prediction interval.

- Evaluate the empirical coverage of these intervals on the test set.

Phase 5: Validation & Reporting

- Report standard metrics (RMSE, R²) for both models on the test set.

- Report the empirical coverage of the conformal intervals.

- Synthesize findings: Present a unified narrative combining GAM marginal plots, SHAP global rankings, and uncertainty intervals to provide a transparent, multi-faceted interpretation of the model's drivers and reliability.

Visualizations

Title: Interpretable & Uncertain LCA Modeling Workflow

Title: Attention Weights for Biofuel LCA Stages

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Interpretable & Uncertain LCA Modeling

| Tool / Reagent | Function in the Research Context | Key Features for Interpretability |
|---|---|---|
| SHAP (shap) Python Library | Computes Shapley values for any model. | Model-agnostic, provides local/global explanations, handles feature interactions. |
| InterpretML Python Library | Unified framework for interpretable models. | Implements GAMs, EBMs (Explainable Boosting Machines), and provides visualization tools. |
| Conformal Prediction Library (e.g., MAPIE / mapie in Python) | Implements conformal prediction for uncertainty intervals. | Model-agnostic, distribution-free guarantees, easy to integrate with scikit-learn. |
| PyMC (formerly PyMC3) / Pyro Python Libraries | Frameworks for probabilistic programming. | Enables building Bayesian Neural Networks (BNNs) for inherent uncertainty quantification. |
| LCA Software API (e.g., brightway2) | Provides programmatic access to LCI databases and calculation engines. | Allows integration of LCA data directly into machine learning pipelines for consistent system modeling. |
| Sensitivity Analysis Libraries (e.g., SALib) | Performs global variance-based sensitivity analysis (Sobol indices). | Quantifies how uncertainty in model output is apportioned to uncertainty in inputs, complementing interpretability. |

Optimizing Computational Efficiency for High-Resolution Stochastic Modeling

Within the thesis framework of life cycle assessment under uncertainty for biofuels research, high-resolution stochastic modeling is indispensable for propagating uncertainties from feedstock variability, conversion processes, and market dynamics through complex life cycle inventory (LCI) databases. This technical guide addresses the computational challenges of running Monte Carlo simulations across high-dimensional parameter spaces, which are needed to generate robust probability distributions of environmental impact metrics.

Core Computational Challenges & Current Benchmarks

The primary bottleneck in stochastic life cycle assessment (LCA) is the iterative evaluation of computationally intensive process models. The table below summarizes current performance benchmarks for key modeling tasks, based on a 2024 survey of LCA software and high-performance computing (HPC) literature.

Table 1: Computational Performance Benchmarks for Stochastic LCA Tasks

| Modeling Task | Typical Runtime (Baseline) | High-Res Target Runtime | Key Limiting Factor | Parallelization Potential |
|---|---|---|---|---|
| Monte Carlo (10^6 iterations) on simplified LCI | ~2.5 hours | < 15 minutes | Sequential model evaluation | High (Embarrassingly parallel) |
| Stochastic solution of bio-chemical kinetic ODEs | ~45 minutes per simulation | < 5 minutes per simulation | ODE solver stiffness | Moderate (Parameter-level parallelism) |
| Global Sensitivity Analysis (Sobol indices) | ~72 hours (full) | < 8 hours | Required sample size N(k+2) (N base samples, k inputs) | High |
| Spatially-explicit inventory modeling | ~1 week | < 1 day | Geospatial data I/O & processing | High (Spatial domain decomposition) |
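To make the N(k+2) cost in the sensitivity-analysis row concrete: with base sample size N and k inputs, a Saltelli-type design evaluates the model on two independent sample matrices A and B (2N runs) plus k hybrid matrices A_B^(i), each equal to A with column i taken from B (kN runs). The sketch below implements the Saltelli (2010) first-order and Jansen total-order estimators on a hypothetical additive test function with uniform inputs, using plain pseudo-random numbers rather than the Sobol' sequences that libraries such as SALib normally use.

```python
import random


def model(x):
    """Hypothetical additive test model: only the first two of three inputs matter."""
    return x[0] + 2.0 * x[1] + 0.0 * x[2]


def sobol_indices(f, k, n, seed=0):
    """Return first-order indices, total-order indices, and the number of model runs."""
    rng = random.Random(seed)
    A = [[rng.random() for _ in range(k)] for _ in range(n)]
    B = [[rng.random() for _ in range(k)] for _ in range(n)]
    fA = [f(a) for a in A]
    fB = [f(b) for b in B]
    evaluations = 2 * n
    all_y = fA + fB
    mean = sum(all_y) / len(all_y)
    var = sum((y - mean) ** 2 for y in all_y) / len(all_y)
    first, total = [], []
    for i in range(k):
        # A_B^(i): matrix A with column i taken from B
        fABi = [f(a[:i] + [b[i]] + a[i + 1:]) for a, b in zip(A, B)]
        evaluations += n
        # Saltelli (2010) first-order estimator
        first.append(sum(yb * (yab - ya) for ya, yb, yab in zip(fA, fB, fABi)) / n / var)
        # Jansen total-order estimator
        total.append(sum((ya - yab) ** 2 for ya, yab in zip(fA, fABi)) / (2 * n) / var)
    return first, total, evaluations


S1, ST, n_evals = sobol_indices(model, k=3, n=8192)
print("S1:", [round(s, 2) for s in S1])
print("ST:", [round(s, 2) for s in ST])
print("model evaluations:", n_evals)  # N * (k + 2) = 8192 * 5 = 40960
```

For this function the exact values are S_1 = ST_1 = 0.2, S_2 = ST_2 = 0.8, and zero for the inert third input. For a process model that takes minutes per run, it is the N(k+2) evaluation count, not the estimator arithmetic, that drives the runtimes in the table.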

Optimization Methodologies & Experimental Protocols

Protocol: Surrogate Model Construction via Gaussian Process Regression

Objective: Replace an expensive, high-fidelity model (e.g., a biorefinery Aspen Plus simulation) with a fast statistical surrogate for Monte Carlo sampling.

- Design of Experiments (DoE): Using a space-filling Latin Hypercube Sampling (LHS) design, sample 500-1000 input parameter vectors (e.g., feedstock composition, temperature, pressure) from the defined probability distributions.

- High-Fidelity Model Execution: Run the full physical/process model for each sampled input vector to generate the corresponding output dataset (e.g., yield, energy use).

- Surrogate Training: Fit a Gaussian Process (GP) regression model with a Matérn 5/2 kernel to the input-output data. Optimize kernel hyperparameters by maximizing the log marginal likelihood (type-II maximum likelihood).

- Validation: Reserve 20% of the data as a hold-out test set that is not used for training. Validate the surrogate on this set by calculating the Normalized Root Mean Square Error (NRMSE) and the coefficient of determination (R²). An R² > 0.95 is a common threshold for high-confidence substitution.

- Deployment: Integrate the trained GP surrogate into the Monte Carlo loop, enabling evaluation times on the order of milliseconds instead of minutes/hours.
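A compact, dependency-free sketch of the protocol follows: Latin Hypercube design, GP posterior mean with a Matérn 5/2 kernel, and hold-out validation by R². To stay short it makes several assumptions: the "high-fidelity model" is a cheap hypothetical stand-in function on inputs scaled to [0, 1], the length scale and signal variance are fixed by hand rather than optimized by maximum likelihood, a small jitter term stands in for a noise model, and the linear algebra is a plain Cholesky solve. In practice a library such as scikit-learn (GaussianProcessRegressor with a Matern(nu=2.5) kernel) handles hyperparameter optimization.

```python
import math
import random


def expensive_model(x):
    """Hypothetical stand-in for a process simulation; inputs scaled to [0, 1]."""
    temp, solids = x
    return 0.4 + 0.3 * math.sin(3.0 * temp) + 0.2 * solids ** 2


def latin_hypercube(n, d, rng):
    """One point per stratum in each dimension, with strata randomly paired across dimensions."""
    cols = []
    for _ in range(d):
        strata = [(i + rng.random()) / n for i in range(n)]
        rng.shuffle(strata)
        cols.append(strata)
    return [list(p) for p in zip(*cols)]


def matern52(a, b, length=0.3, variance=1.0):
    r = math.dist(a, b) / length
    return variance * (1.0 + math.sqrt(5) * r + 5.0 * r * r / 3.0) * math.exp(-math.sqrt(5) * r)


def cholesky(m):
    n = len(m)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = m[i][j] - sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(s) if i == j else s / L[j][j]
    return L


def cho_solve(L, b):
    n = len(b)
    z = [0.0] * n
    for i in range(n):  # forward substitution: L z = b
        z[i] = (b[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution: L^T x = z
        x[i] = (z[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x


rng = random.Random(3)
X = latin_hypercube(100, 2, rng)                        # design of experiments
y = [expensive_model(x) for x in X]                     # "high-fidelity" runs
X_tr, y_tr, X_te, y_te = X[:80], y[:80], X[80:], y[80:]  # 20% hold-out

y_mean = sum(y_tr) / len(y_tr)
K = [[matern52(a, b) + (1e-6 if i == j else 0.0) for j, b in enumerate(X_tr)]
     for i, a in enumerate(X_tr)]
alpha = cho_solve(cholesky(K), [v - y_mean for v in y_tr])


def surrogate(x):
    """GP posterior mean: y_mean + k(x, X_tr) . K^-1 (y_tr - y_mean)."""
    return y_mean + sum(matern52(x, xt) * a for xt, a in zip(X_tr, alpha))


pred = [surrogate(x) for x in X_te]
ss_res = sum((p - t) ** 2 for p, t in zip(pred, y_te))
ss_tot = sum((t - sum(y_te) / len(y_te)) ** 2 for t in y_te)
r2 = 1 - ss_res / ss_tot
print(f"hold-out R^2 = {r2:.4f}")
```

Once alpha is computed, each surrogate call costs one kernel evaluation per training point, which is what makes it cheap enough to sit inside a Monte Carlo loop.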

Protocol: Hybrid Parallel Computing for Monte Carlo Simulations

Objective: Leverage both distributed (MPI) and shared-memory (OpenMP) parallelism to accelerate large-scale uncertainty propagation.

- Workflow Decomposition: The master process (MPI rank 0) reads the global parameter distributions and the number of required iterations (e.g., 10^7).

- Distributed Sampling: The total iterations are divided across N MPI processes. Each process independently generates its assigned subset of random parameter vectors using a parallel random number generator (e.g., Philox) with unique seeds or keys, so that the random streams do not overlap.

- Embarrassingly Parallel Evaluation: Each process evaluates the model (or surrogate) for its local batch of samples. Within each node, OpenMP threads can further parallelize the evaluation of complex sub-models or vectorized operations.

- Result Aggregation: All processes send their local result vectors (e.g., GHG emissions per run) to the master process for concatenation and final statistical analysis (kernel density estimation, quantile calculation).
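The split/evaluate/gather structure can be illustrated on a single machine with Python's standard library. This sketch is a stand-in, not an MPI/OpenMP implementation: worker threads play the role of MPI ranks, each rank gets its own random.Random generator seeded from a distinct per-rank seed (a simplification of counter-based generators such as Philox, which guarantee independent streams), and the GHG model and parameter distributions are hypothetical. In standard (GIL) CPython builds, threads do not run pure-Python code in parallel, so a real deployment would swap in ProcessPoolExecutor or mpi4py for actual speed-up while keeping the same decomposition.

```python
import random
from concurrent.futures import ThreadPoolExecutor


def ghg_model(yield_pct, n2o_ef):
    """Hypothetical GHG intensity model (g CO2e/MJ)."""
    return 95.0 - 0.9 * yield_pct + 1200.0 * n2o_ef


def run_batch(rank, n_samples, base_seed):
    """Work of one 'rank': draw its own parameter samples and evaluate the model on them."""
    rng = random.Random(base_seed * 1_000_003 + rank)  # distinct seed per rank (simplification)
    results = []
    for _ in range(n_samples):
        yield_pct = rng.triangular(30.0, 55.0, 42.0)   # hypothetical conversion-yield distribution
        n2o_ef = rng.lognormvariate(-4.6, 0.4)         # hypothetical N2O emission-factor distribution
        results.append(ghg_model(yield_pct, n2o_ef))
    return results


def quantile(sorted_values, p):
    """Simple empirical quantile of an already sorted list."""
    return sorted_values[min(len(sorted_values) - 1, int(p * len(sorted_values)))]


def parallel_monte_carlo(total_iters, n_ranks, base_seed=2024):
    # Divide the iterations across ranks; the first `remainder` ranks take one extra sample
    per_rank, remainder = divmod(total_iters, n_ranks)
    sizes = [per_rank + (1 if r < remainder else 0) for r in range(n_ranks)]
    with ThreadPoolExecutor(max_workers=n_ranks) as pool:
        batches = pool.map(run_batch, range(n_ranks), sizes, [base_seed] * n_ranks)
        samples = [v for batch in batches for v in batch]  # "gather" on the master
    samples.sort()
    summary = {p: quantile(samples, p) for p in (0.05, 0.5, 0.95)}
    return samples, summary


samples, summary = parallel_monte_carlo(100_000, n_ranks=4)
print(len(samples), {p: round(v, 2) for p, v in summary.items()})
```

Because every rank owns its generator and executor.map returns batches in rank order, the gathered result is reproducible for a given base seed and rank count, which is useful when auditing stochastic LCA runs.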

Diagram: Hybrid Parallel Monte Carlo Workflow

Title: Hybrid MPI-OpenMP Architecture for Stochastic LCA

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools for Stochastic Biofuels LCA

| Tool/Reagent | Category | Primary Function in Stochastic Modeling | Key Consideration |
|---|---|---|---|
| Brightway2 LCA | LCA Framework | Provides core database structure and calculation engines for stochastic LCI. | Open-source; allows deep integration with Python's scientific stack (NumPy, Pandas). |
| Chaospy | Uncertainty Quantification Library | Polynomial chaos expansion for surrogate modeling and sensitivity analysis. | More efficient than Monte Carlo for smooth response surfaces in moderate dimensions. |
| Intel oneAPI Math Kernel Library (MKL) | Optimized Math Library | Accelerates linear algebra (e.g., Cholesky factorizations in GP regression) and random number generation. | Most beneficial on Intel CPUs; NumPy/SciPy builds linked against MKL use it automatically. |
| JAX | Differentiable Programming | Enables automatic differentiation and GPU-accelerated NumPy-style operations for gradient-based analysis. | Well suited to training neural network surrogates and to gradient-based samplers such as Hamiltonian Monte Carlo. |
| UC Irvine OpenLCI Databases | Data Source | Provides spatially and temporally explicit life cycle inventory data for uncertainty characterization. | Data quality and pedigree matrices are required to define input parameter distributions. |