Assessing Reliability in Hybrid Renewable Energy Systems: A Comprehensive Guide to Monte Carlo Simulation Methods



Abstract

This article provides a targeted guide for researchers and engineers on applying Monte Carlo simulation to evaluate the reliability of hybrid renewable energy systems (HRES). We begin by establishing the fundamental need for probabilistic reliability assessment in systems with inherent variability from sources like solar and wind. The core of the article details the methodological framework for building a Monte Carlo model, from defining system architecture and stochastic inputs to calculating key reliability indices. We then address common implementation challenges and optimization techniques for computational efficiency and model accuracy. Finally, we explore validation strategies against analytical methods and comparative analysis of different system configurations or control algorithms. The synthesis offers clear pathways for employing this powerful simulation tool to de-risk and optimize the design of robust, sustainable energy systems.

Why Stochastic Simulation is Essential for Modern Renewable Energy System Design

Application Notes on Reliability Assessment via Monte Carlo Simulation

Monte Carlo simulation (MCS) provides a probabilistic framework for quantifying reliability in hybrid renewable energy systems (HRES). This method accounts for the stochastic nature of renewable resources, load demand, and component failures, moving beyond deterministic analyses.

Core Stochastic Inputs for MCS:

- Intermittency: Modeled using time-series probability distributions for solar irradiance and wind speed, derived from historical site data.

- Load Uncertainty: Represented by statistical load profiles, often following a normal or log-normal distribution around a forecasted mean.

- Component Failure: Modeled using failure and repair rates, typically following exponential distributions for time-to-failure and time-to-repair.

Key Output Metrics: The simulation yields reliability indices, most critically the Loss of Load Probability (LOLP) and Expected Energy Not Supplied (EENS).

Table 1: Representative Input Parameters for MCS Reliability Analysis

| Parameter Category | Specific Parameter | Typical Value / Distribution | Data Source / Notes |
|---|---|---|---|
| Photovoltaic (PV) System | Panel Degradation Rate | 0.5 - 1.0% per year | Manufacturer's warranty data |
| | Inverter MTBF* | 50,000 - 100,000 hours | Field reliability studies |
| | Solar Irradiance Model | Beta Distribution (α, β) | NASA POWER/NSRDB databases |
| Wind Turbine System | Turbine Availability | 95 - 98% | Industry benchmarks |
| | Wind Speed Model | Weibull Distribution (k, λ) | Site meteorological masts |
| Energy Storage System | Battery Cycle Life | 3,000 - 6,000 cycles (to 80% DoD**) | Cell testing data |
| | Round-Trip Efficiency | 85 - 95% | Manufacturer specification |
| Load & System | Daily Load Profile | Normal Distribution (μ, σ) | Smart meter aggregations |
| | Grid Availability (if applicable) | 99.9% (8.76 hrs/year outage) | Utility reliability reports |
| Diesel Generator (backup) | Failure Rate | 0.005 - 0.02 failures/hour | Maintenance logs |

*MTBF: Mean Time Between Failures; **DoD: Depth of Discharge

Table 2: Sample MCS Output Reliability Indices (Simulated 20-year period)

| Reliability Index | Acronym | Formula / Description | Sample Result from Case Study |
|---|---|---|---|
| Loss of Load Probability | LOLP | Σ(Time load not met) / (Total simulated time) | 2.15% |
| Expected Energy Not Supplied | EENS | Σ(Energy deficit, kWh) / (Number of simulated years) | 1,250 kWh/year |
| System Average Interruption Frequency Index | SAIFI | Σ(Number of customer interruptions) / (Total customers served), per year | 1.8 interruptions/year |
| Equivalent Availability Factor | EAF | (Period Time − Equivalent Outage Time) / Period Time | 96.7% |

Experimental Protocols for HRES Reliability Research

Protocol 1: Time-Series Data Generation for Stochastic Inputs

Objective: To synthesize annual, high-resolution (hourly) time-series data for solar irradiance, wind speed, and load to serve as MCS inputs.

Materials: Historical meteorological data (NASA POWER, ERA5), aggregated load data, statistical software (Python/R, @RISK, MATLAB).

Procedure:

- Data Collection: Obtain at least 10 years of historical hourly solar global horizontal irradiance (GHI) and wind speed at hub height for the site.

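- Distribution Fitting: For each month and hour of day, fit a probability distribution to the historical data: a Beta distribution for GHI (after normalizing GHI to [0, 1], e.g., by the clear-sky irradiance) and a Weibull distribution for wind speed. Estimate the Beta shape parameters (α, β) and the Weibull shape and scale parameters (k, λ).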

- Markov Chain Synthesis: Use a first-order Markov chain model to incorporate temporal autocorrelation.

  - Calculate transition probability matrices for irradiance/wind states (e.g., Low, Medium, High) from historical sequences.

  - Generate a synthetic year of hourly data sequentially: draw the next state from the current state's row of the transition matrix, then draw the hourly value from the fitted distribution for that state.

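- Load Profile Synthesis: Fit a normal distribution to historical load data for each hour of the day. Generate synthetic load by sampling from $\mathcal{N}(\mu_h, \sigma_h^2)$, where $h$ is the hour of day.

- Validation: Compare the statistical properties of the synthetic data with the historical data: means and variances directly, marginal distributions with two-sample Kolmogorov-Smirnov tests, and autocorrelation functions at the relevant lags (the Kolmogorov-Smirnov test does not assess autocorrelation).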
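The Markov Chain Synthesis step can be sketched for wind speed as follows. This is a minimal illustration under stated assumptions, not a complete generator: the three state boundaries in `STATE_EDGES` are hypothetical, the transition matrix is pooled over the whole record rather than estimated per month and hour, and the value within each state is drawn by resampling historical observations from that state instead of from a fitted Weibull distribution. All function names are illustrative.

```python
import numpy as np

# Hypothetical state boundaries in m/s: Low < 4, Medium 4-10, High >= 10.
STATE_EDGES = np.array([4.0, 10.0])


def to_states(speeds: np.ndarray) -> np.ndarray:
    """Map each hourly wind speed to a discrete state index (0, 1 or 2)."""
    return np.digitize(speeds, STATE_EDGES)


def transition_matrix(states: np.ndarray, n_states: int = 3) -> np.ndarray:
    """First-order estimate of P[i, j] = P(state_{t+1} = j | state_t = i)."""
    counts = np.zeros((n_states, n_states))
    np.add.at(counts, (states[:-1], states[1:]), 1.0)
    row_sums = counts.sum(axis=1, keepdims=True)
    # Rows for states never left in the record fall back to a uniform distribution.
    return np.where(row_sums > 0, counts / np.maximum(row_sums, 1.0), 1.0 / n_states)


def synthesize(history: np.ndarray, hours: int, rng: np.random.Generator) -> np.ndarray:
    """Synthetic hourly series: Markov-chain states, then a value drawn within the state."""
    states = to_states(history)
    P = transition_matrix(states)
    # Empirical stand-in for the fitted per-state distribution: resample observations.
    pools = [history[states == s] for s in range(P.shape[0])]
    out = np.empty(hours)
    s = states[-1]  # start from the last observed state
    for t in range(hours):
        s = rng.choice(P.shape[0], p=P[s])
        pool = pools[s]
        out[t] = rng.choice(pool if pool.size else history)
    return out
```

For example, `synthesize(observed_speeds, 8760, np.random.default_rng(0))` would return one synthetic year, where `observed_speeds` stands for a site's historical hourly record.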

Protocol 2: Non-Sequential and Sequential Monte Carlo Simulation for Reliability Assessment

Objective: To perform non-sequential and sequential MCS to evaluate the HRES's Loss of Load Probability (LOLP) and Expected Energy Not Supplied (EENS).

Materials: Synthesized input time-series (Protocol 1), component reliability data (Table 1), system power flow model, computational software.

Procedure - Non-Sequential MCS:

- Define System State Vector: For each component (PV array, inverter, wind turbine, battery, etc.), define its state as 1 (operational) or 0 (failed).

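- Random Sampling: For each simulation trial (e.g., N=100,000):

  - Randomly determine the state of each component from its unavailability (forced outage rate), U = MTTR / (MTTF + MTTR), with MTTF the mean time to failure and MTTR the mean time to repair: the component is failed if a uniform random number falls below U.

  - Randomly sample hourly solar irradiance and wind speed values from their respective distributions.

  - Randomly sample the load for that hour.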

- Power Balance Check: For the sampled system state and resources, run a power balance calculation (Generation + Storage ≥ Load?). Record a deficit event if load is not met.

- Calculate Indices: After N trials, calculate LOLP = (Number of deficit trials) / N.
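A minimal sketch of the non-sequential procedure, assuming two-state components whose failure probability is the steady-state unavailability MTTR / (MTTF + MTTR), and a simplified system of one PV array behind one inverter plus one wind turbine. Capacities, MTTF/MTTR values, distribution parameters, and the cubic power curve are hypothetical placeholders. Storage is omitted because a state sample carries no state of charge, which is why the sequential procedure below is preferred when storage matters.

```python
import numpy as np


def unavailability(mttf_h: float, mttr_h: float) -> float:
    """Steady-state probability that a two-state repairable component is down."""
    return mttr_h / (mttf_h + mttr_h)


def state_sampling_lolp(n_trials: int, seed: int = 1):
    """Non-sequential estimate of LOLP; returns (lolp, standard_error).

    All capacities, distribution parameters and MTTF/MTTR values are hypothetical.
    """
    rng = np.random.default_rng(seed)
    # Component states: True = operational (failed when the uniform draw < unavailability).
    inverter_up = rng.random(n_trials) >= unavailability(60_000.0, 48.0)
    turbine_up = rng.random(n_trials) >= unavailability(4_000.0, 72.0)
    # PV: Beta-distributed fraction of a 100 kW array (daylight hours assumed).
    pv_kw = 100.0 * rng.beta(2.0, 3.0, n_trials) * inverter_up
    # Wind: Weibull(k=2, c=7 m/s) speed through a simple cubic power curve, 80 kW rated.
    v = 7.0 * rng.weibull(2.0, n_trials)
    cf = np.clip((v**3 - 3.0**3) / (12.0**3 - 3.0**3), 0.0, 1.0)
    wind_kw = 80.0 * np.where(v > 25.0, 0.0, cf) * turbine_up
    load_kw = rng.normal(60.0, 10.0, n_trials)
    deficit = (pv_kw + wind_kw) < load_kw
    lolp = deficit.mean()
    return lolp, np.sqrt(lolp * (1.0 - lolp) / n_trials)
```

The returned standard error, sqrt(LOLP(1 − LOLP)/N), indicates whether N trials are enough for the LOLP level being estimated.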

Procedure - Sequential MCS (More Accurate for Storage):

- Initialize: Generate a synthetic year of hourly resource and load data (Protocol 1). Initialize all components as operational. Set simulation period (e.g., 20 years).

- Component State Duration Sampling: For each component, sample its time-to-failure (TTF) and time-to-repair (TTR) from exponential distributions by inverse-transform sampling: $TTF = -\mathrm{MTBF}\,\ln U_1$ and $TTR = -\mathrm{MTTR}\,\ln U_2$, where $U_1, U_2$ are independent uniform random numbers on $(0, 1]$.

- Chronological Simulation: Step through the simulation time (e.g., hour-by-hour):

  - Update component states based on TTF/TTR schedules.

  - Execute the HRES energy dispatch and storage management logic.

  - Check for power balance, recording the magnitude and duration of any deficit.

- Iterate and Aggregate: Repeat the entire sequential simulation for multiple synthetic years (e.g., 1000 years). Calculate LOLP and EENS from the aggregated results.
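The Component State Duration Sampling step can be sketched as a function that turns exponential TTF/TTR draws into an hourly up/down mask for one component, which the chronological loop can then consult. This assumes constant failure and repair rates (as the exponential model implies) and rounds each outage outward to whole hours, a conservative simplification; the function name is hypothetical.

```python
import numpy as np


def availability_timeline(mtbf_h: float, mttr_h: float, horizon_h: int,
                          rng: np.random.Generator) -> np.ndarray:
    """Return an hourly boolean array (True = up) for one repairable component.

    Durations use inverse-transform sampling: TTF = -MTBF * ln(U), TTR = -MTTR * ln(U),
    with U drawn from (0, 1] so the logarithm is always finite.
    """
    up = np.ones(horizon_h, dtype=bool)
    t = 0.0
    while t < horizon_h:
        t += -mtbf_h * np.log(1.0 - rng.random())      # time to next failure
        repair = -mttr_h * np.log(1.0 - rng.random())  # repair duration
        start, end = int(t), int(np.ceil(t + repair))
        up[start:end] = False  # slices past the horizon are truncated automatically
        t += repair
    return up
```

Calling it once per component and combining the masks (e.g., `pv_up & inverter_up`) gives the component availability inputs for the hour-by-hour dispatch.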

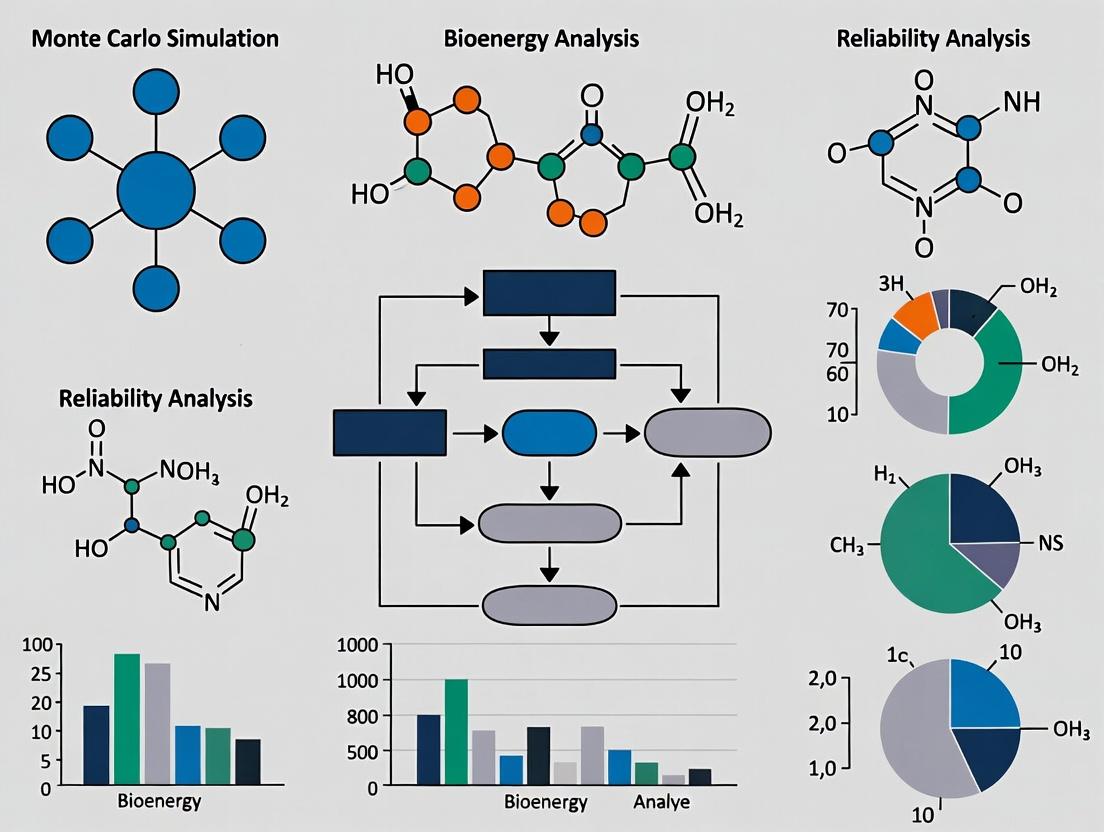

Visualizations: Monte Carlo Simulation Workflow and System Logic

Title: Monte Carlo Simulation Workflow for HRES Reliability

Title: HRES Component Logic and Stochastic Inputs

The Scientist's Toolkit: Computational Tools and Data Sources

Table 3: Essential Computational Tools and Data Sources for HRES Reliability Research

| Item Name | Category | Function / Application |
|---|---|---|
| NASA POWER / ERA5 Database | Data Source | Provides validated, long-term historical time series for solar irradiance, temperature, and wind speed at global locations. Essential for stochastic input modeling. |
| HOMER Pro / HYBRID2 | Simulation Software | Industry-standard tools for designing and optimizing microgrids and hybrid systems. Useful for initial sizing and deterministic analysis before detailed MCS. |
| Python Ecosystem (pandas, NumPy, SciPy, PyMC) | Computational Tool | Core platform for data processing, statistical analysis, distribution fitting, and custom-built Monte Carlo simulation scripts. Offers maximum flexibility. |
| R (stats, fitdistrplus) | Computational Tool | Alternative statistical computing environment with robust packages for probability distribution fitting and time-series analysis. |
| @RISK / Oracle Crystal Ball | Simulation Add-on | Monte Carlo simulation add-ins for Microsoft Excel, enabling probabilistic modeling with a user-friendly interface. Useful for rapid prototyping. |
| MATLAB/Simulink | Modeling Software | Provides a block-diagram environment for modeling dynamic system behavior and can be integrated with MCS routines for reliability analysis. |
| Reliability Databases (OREDA, IEEE Std 493) | Data Source | Provide generic failure rate and repair time data (MTBF, MTTR) for electrical components such as inverters, transformers, and switches when site-specific data is unavailable. |
| Li-ion Battery Degradation Models (e.g., semi-empirical) | Analytical Model | Mathematical models that predict battery capacity fade and resistance increase as a function of cycling (DoD, C-rate, temperature). Crucial for simulating storage lifetime. |

Application Notes: The Case for Probabilistic Methods

Deterministic analysis, which employs fixed input values (e.g., average solar irradiance, average wind speed, nameplate capacities) to model hybrid renewable energy systems (HRES), is fundamentally ill-suited for assessing true system reliability and performance. Its limitations are exposed by the intrinsic stochasticity of renewable resources and component failure modes. These notes, framed within a thesis on Monte Carlo simulation for HRES reliability, detail why deterministic models fall short and outline the protocols for a probabilistic alternative.

Core Limitation 1: Inability to Capture Resource Volatility. Deterministic models use long-term averages (e.g., an annual average daily solar profile) as inputs. This fails to represent diurnal, seasonal, and inter-annual variability, as well as prolonged low-generation periods (Dunkelflaute, extended spells of low wind and low solar output). The correlation between solar and wind resources at sub-hourly timescales is also lost.
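To make this limitation concrete, the sketch below compares a deterministic check at the average wind speed with Monte Carlo sampling for a hypothetical wind-only supply. The turbine parameters, the cubic power curve, the 25 kW load, and the Weibull shape k = 2 are assumptions chosen for illustration; the Weibull scale is set so that the sampled wind speeds have the same mean as the deterministic input.

```python
import math

import numpy as np

# Hypothetical turbine: rated power (kW), cut-in, rated and cut-out speeds (m/s).
RATED_KW, V_IN, V_RATED, V_OUT = 150.0, 3.0, 12.0, 25.0


def wind_power_kw(v):
    """Simplified cubic power curve between cut-in and rated speed."""
    v = np.asarray(v, dtype=float)
    cf = np.clip((v**3 - V_IN**3) / (V_RATED**3 - V_IN**3), 0.0, 1.0)
    return np.where(v > V_OUT, 0.0, RATED_KW * cf)


def compare(load_kw=25.0, mean_speed=7.0, k=2.0, n=200_000, seed=3):
    """Return (deterministic shortfall flag, Monte Carlo LOLP)."""
    # Deterministic: one calculation at the average wind speed.
    det_short = bool(wind_power_kw(mean_speed) < load_kw)
    # Probabilistic: Weibull scale c chosen so the distribution mean equals mean_speed.
    c = mean_speed / math.gamma(1.0 + 1.0 / k)
    v = c * np.random.default_rng(seed).weibull(k, n)
    lolp = float(np.mean(wind_power_kw(v) < load_kw))
    return det_short, lolp
```

With these placeholder values the deterministic check reports no shortfall, while roughly half of the sampled hours fall short: power depends nonlinearly on wind speed, and the average input hides the low-wind hours.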

Core Limitation 2: Oversimplification of Component Reliability. Using manufacturer-rated lifetimes or mean time between failures (MTBF) as fixed inputs ignores the probabilistic nature of component degradation and random failures in PV panels, wind turbines, and battery cells. This leads to inaccurate estimates of system downtime and maintenance costs.

Core Limitation 3: Misleading Reliability Metrics. Deterministic analysis produces single-point outputs such as a nominal Levelized Cost of Energy (LCOE) or a binary "meets demand/does not meet demand" result. It cannot generate the probability distributions of key metrics, such as Loss of Load Probability (LOLP), Expected Energy Not Supplied (EENS), or capacity credit, that are essential for risk-informed planning and financing.

Table 1: Comparative Outputs of Deterministic vs. Probabilistic Analysis

| Performance/Reliability Metric | Deterministic Analysis Output | Probabilistic (Monte Carlo) Analysis Output |
|---|---|---|
| System Reliability | Binary pass/fail against a specific scenario. | Probability distribution (e.g., LOLP = 2.5% ± 0.5%). |
| Annual Energy Yield | Single value (MWh/year). | Probability density function with confidence intervals. |
| Battery Cycle Degradation | Estimated based on average daily cycles. | Distribution of State of Health (SoH) over time, accounting for variable depth-of-discharge. |
| Financial Risk (LCOE) | Single nominal value ($/kWh). | Range of possible LCOE values with associated probabilities. |
| Capacity Adequacy | Fixed capacity factor. | Effective Load Carrying Capability (ELCC) or capacity credit. |

Experimental Protocols: Monte Carlo Simulation for HRES Reliability

The following protocol details a Monte Carlo simulation framework designed to overcome the limitations of deterministic analysis.

Protocol MC-HRES-001: Probabilistic Reliability Assessment of a Solar/Wind/Battery System

1. Objective: To quantify the probability of supply adequacy (LOLP, EENS) and the statistical distribution of key performance indicators for a grid-connected or off-grid HRES over a 20-year project lifetime.

2. Experimental Workflow:

Diagram Title: Monte Carlo Workflow for HRES Reliability

3. Detailed Methodology:

- Step 1: System Definition. Specify the technical parameters: PV array capacity (kWp), wind turbine capacity (kW), battery storage capacity (kWh) and power (kW), inverter efficiency, control strategy (e.g., dispatch logic), and load profile.

- Step 2: Stochastic Input Characterization. Define probability distributions for all key uncertain inputs:

  - Solar Resource: Generate synthetic hourly global horizontal irradiance (GHI) time series using autoregressive models fitted to multi-year historical data (a single TMY file represents one typical year and does not carry inter-annual variability). Distribution: Multivariate (temporal correlation).

  - Wind Resource: Generate synthetic hourly wind speed time series using Weibull distributions with site-specific shape (k) and scale (c) parameters, incorporating seasonal and diurnal trends.

  - Component Failures: Model time-to-failure for major components using exponential (random failures) or Weibull (wear-out failures) distributions. Define repair time distributions (e.g., log-normal).

  - Battery Degradation: Model capacity fade as a function of probabilistic cycling regimes (cycle depth, rate) and calendar aging.

- Step 3: Performance Model. Build a deterministic time-series energy balance model in a computational environment (e.g., Python, MATLAB). This model, for a given set of inputs, calculates hourly energy generation, storage state, and load satisfaction.

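- Step 4: Monte Carlo Simulation.

  - Set the number of iterations N. N ≥ 10,000 is a common starting point; confirm convergence by checking that the coefficient of variation of the key estimates falls below a chosen tolerance, since rare events (small LOLP) need more iterations.

  - For each iteration i:

    - Randomly sample a full set of input parameters from the distributions defined in Step 2 (including full yearly weather time series and any component failures for that simulated year).

    - Execute the deterministic performance model from Step 3 with these sampled inputs.

    - Record output metrics for iteration i: $LOL_i$ (binary: 1 if any load loss occurs in the simulated year, else 0), $EENS_i$ (kWh), $\text{Annual Energy Yield}_i$, etc.

- Step 5: Statistical Analysis.

  - $\mathrm{LOLP} = \frac{1}{N}\sum_{i=1}^{N} LOL_i$. With this binary record, LOLP is the probability that a simulated year contains at least one loss-of-load event, which differs from the time-based LOLP used elsewhere in this article.

  - $\mathrm{EENS} = \frac{1}{N}\sum_{i=1}^{N} EENS_i$.

  - Construct histograms and kernel density estimates for all continuous output metrics (e.g., annual yield).

  - Report percentile ranges (e.g., 5th, 50th, 95th percentiles) for LCOE and other financial metrics.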

- Step 6: Result Synthesis. Present results as probability distributions and risk curves (e.g., a duration curve for power shortfalls).
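Step 5 can be sketched as a function over the per-iteration records from Step 4. It assumes the results were stored as NumPy arrays, uses a normal approximation for the 95% confidence interval on the mean EENS, and reports the coefficient of variation of that estimate as a convergence check; the function and key names are hypothetical.

```python
import numpy as np


def summarize(lol: np.ndarray, eens_kwh: np.ndarray) -> dict:
    """Aggregate Step 4 records into Step 5 statistics.

    lol:      0/1 array, LOL_i = 1 if iteration i had any loss of load
    eens_kwh: unserved energy per iteration, EENS_i
    """
    n = lol.size
    lolp = float(lol.mean())
    eens = float(eens_kwh.mean())
    eens_se = float(eens_kwh.std(ddof=1) / np.sqrt(n))
    return {
        "LOLP": lolp,
        "LOLP_se": float(np.sqrt(lolp * (1.0 - lolp) / n)),
        "EENS": eens,
        # Normal-approximation 95% confidence interval on the mean EENS.
        "EENS_ci95": (eens - 1.96 * eens_se, eens + 1.96 * eens_se),
        # Coefficient of variation of the estimate; stop once below a chosen tolerance.
        "EENS_cov": eens_se / eens if eens > 0 else float("inf"),
        "EENS_p5_p50_p95": np.percentile(eens_kwh, [5, 50, 95]),
    }
```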

Protocol MC-HRES-002: Sensitivity Analysis via Monte Carlo

Objective: To rank input variables (e.g., wind speed inter-annual variance, battery cycle life, component MTBF) by their influence on key output variance (e.g., EENS).

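Methodology: Use the framework from MC-HRES-001. Quantify the contribution of each input variable's uncertainty to the output variance, either by regressing outputs on inputs (e.g., standardized regression coefficients, adequate when the response is close to linear) or by calculating Sobol indices, which are normally estimated from a structured sampling design (e.g., Saltelli's scheme) rather than from plain random Monte Carlo samples.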
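The regression option can be sketched with standardized regression coefficients (SRC), computed by least squares on standardized Monte Carlo inputs and outputs. This is only one simple technique, valid when the coefficient of determination R² is high (a nearly linear response); Sobol indices would typically be computed with a dedicated library such as SALib, which is not shown here. Function and variable names are illustrative.

```python
import numpy as np


def standardized_regression_coefficients(X: np.ndarray, y: np.ndarray):
    """Rank inputs by |SRC| from Monte Carlo samples.

    X: (N, p) matrix of sampled inputs; y: (N,) output such as EENS.
    Returns (src, r2). SRCs are only trustworthy when r2 is close to 1.
    """
    Xs = (X - X.mean(axis=0)) / X.std(axis=0)
    ys = (y - y.mean()) / y.std()
    # Standardized variables are centered, so no intercept column is needed.
    src, *_ = np.linalg.lstsq(Xs, ys, rcond=None)
    r2 = 1.0 - np.sum((ys - Xs @ src) ** 2) / np.sum(ys**2)
    return src, r2
```

Sorting inputs by `np.argsort(-np.abs(src))` gives the ranking from most to least influential.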

Diagram Title: Sensitivity Analysis Flow for HRES

The Scientist's Toolkit: Tools and Resources for HRES Modeling

| Tool/Resource | Function in HRES Reliability Research |
|---|---|
| Synthetic Weather Generator (e.g., using pvlib or RESTATS) | Creates statistically robust, multi-year hourly time series of solar irradiance and wind speed that preserve historical spatiotemporal patterns and variability, serving as primary stochastic inputs. |
| Probabilistic Degradation Models | Mathematical functions (e.g., capacity fade = f(cycles, temperature, SOC)) parameterized with empirical data to model the uncertain aging of batteries and PV modules over time. |
| Failure Mode Database (e.g., OREDA, field data) | A curated collection of failure rates (λ) and repair times for system components (inverters, trackers, turbine gearboxes) used to define reliability distributions for Monte Carlo sampling. |
| High-Performance Computing (HPC) Cluster or Cloud Compute Credits | Enables the execution of tens of thousands of simulation iterations (each simulating 20+ years at hourly resolution) in a tractable timeframe. |
| Open-Source Simulation Core (e.g., HybridSimulator in Python) | A validated, deterministic performance model of the HRES's physics and dispatch logic that can be programmatically called within a Monte Carlo loop. |
| Global Sensitivity Analysis Library (e.g., SALib for Python) | A software tool to post-process Monte Carlo results, computing variance-based sensitivity indices (Sobol indices) to identify the most influential uncertain parameters. |

Core Principles

Monte Carlo Simulation (MCS) is a computational technique that uses repeated random sampling to obtain numerical results for probabilistic problems. Its core principle is to model phenomena with inherent uncertainty by building models of possible results, substituting a range of values—a probability distribution—for any factor that has inherent uncertainty. It then calculates results repeatedly, each time using a different set of random values from the probability functions. A key strength is its ability to handle problems with high dimensionality and complex, non-linear relationships, common in hybrid renewable energy system (HRES) reliability analysis.
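The repeated-sampling principle also fixes how fast estimates converge. For an event probability such as LOLP estimated from N independent samples with loss-of-load indicators I_i, the standard error and the coefficient of variation β of the estimate follow directly, and solving for N gives the number of samples needed for a target β. The worked numbers below are illustrative, and the independence assumption applies to state samples or whole simulated years rather than to consecutive hours within one chronological run.

```latex
\hat{p} = \frac{1}{N}\sum_{i=1}^{N} I_i, \qquad
\sigma_{\hat{p}} = \sqrt{\frac{p(1-p)}{N}}, \qquad
\beta = \frac{\sigma_{\hat{p}}}{p} = \sqrt{\frac{1-p}{N\,p}}
\;\Longrightarrow\;
N = \frac{1-p}{\beta^{2}\,p}

% Example: p = 0.01 (LOLP of 1%), target \beta = 0.05
N = \frac{0.99}{(0.05)^{2} \times 0.01} = 39\,600
```

By the same formula, N = 10,000 gives β ≈ 0.1 for an LOLP of 1%; rarer events need proportionally more samples.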

Application Notes for HRES Reliability Research

In the context of a thesis on HRES reliability, MCS is employed to assess system performance metrics like Loss of Power Supply Probability (LPSP), Expected Energy Not Supplied (EENS), and System Availability under stochastic variables. These variables include solar irradiance, wind speed, load demand, and component failure rates.

Key Stochastic Inputs & Their Distributions

| Stochastic Input Variable | Typical Probability Distribution | Justification in HRES Context |
|---|---|---|
| Solar Irradiance (kW/m²) | Beta Distribution | Bounded between 0 and maximum clear-sky irradiance (after normalization to [0, 1]); fits empirical data well. |
| Wind Speed (m/s) | Weibull Distribution | Commonly used to model wind resource; the shape parameter k is often near 2.0, the special case known as the Rayleigh distribution. |
| Load Demand (kW) | Normal or Time-series | Can be modeled as normal around a forecast mean, or as a deterministic profile with noise. |
| Component Time-to-Failure (e.g., inverter) | Exponential Distribution | Often used for electronic components with constant failure rate (λ). |
| Battery State of Charge (Initial) | Uniform Distribution | Assumes no prior knowledge of starting condition within operating bounds. |
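The distributions in the table map directly onto NumPy's `Generator` methods, with two parameterization details worth noting: `weibull()` has unit scale, so samples must be multiplied by c, and `exponential()` takes the scale 1/λ (the MTBF) rather than the rate. The sketch below uses hypothetical parameter values and also includes the empirical Justus moment approximation for Weibull parameters, k = (σ/μ)^(-1.086), one common shortcut when only the mean and standard deviation of wind speed are known. Function names are illustrative.

```python
import math

import numpy as np


def weibull_from_moments(mean: float, std: float) -> tuple[float, float]:
    """Empirical (Justus) approximation: k = (std/mean)^-1.086, c = mean / Gamma(1 + 1/k)."""
    k = (std / mean) ** -1.086
    return k, mean / math.gamma(1.0 + 1.0 / k)


def sample_inputs(n: int, rng: np.random.Generator, *, ghi_clear_kw_m2=1.0,
                  alpha=2.0, beta=2.5, k=2.0, c=7.0, load_mu=50.0, load_sigma=8.0,
                  inverter_mtbf_h=60_000.0, soc_bounds=(0.2, 0.9)) -> dict:
    """Draw n samples from each distribution in the table (all parameters hypothetical)."""
    return {
        # Beta lives on [0, 1]; rescale by the clear-sky bound.
        "ghi_kw_m2": ghi_clear_kw_m2 * rng.beta(alpha, beta, n),
        # NumPy's weibull() has unit scale, so multiply by c.
        "wind_m_s": c * rng.weibull(k, n),
        # A normal load model can go negative; clip those draws at zero.
        "load_kw": np.maximum(rng.normal(load_mu, load_sigma, n), 0.0),
        # exponential() takes the scale 1/lambda, i.e. the MTBF.
        "ttf_h": rng.exponential(inverter_mtbf_h, n),
        "soc0": rng.uniform(*soc_bounds, n),
    }
```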

MCS Output Metrics for HRES Reliability

| Reliability Metric | Formula/Description | MCS Calculation Method |
|---|---|---|
| Loss of Power Supply Probability (LPSP) | LPSP = (Σ_t LPS_t) / (Σ_t Load_t), with LPS_t and Load_t in kWh | For each simulated year, sum the unserved energy LPS_t and divide by the total load energy; average over all trials. |
| Expected Energy Not Supplied (EENS) | EENS = Σ_t LPS_t [kWh/period] | Direct output from each trial; reported as a distribution. |
| System Availability (A) | A = 1 - (Total Downtime / Total Time) | Downtime is counted in time steps where load demand exceeds total generation plus storage discharge. |
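A minimal sketch of the three metrics for one trial, assuming hourly arrays of demanded and served energy in kWh (served energy includes storage discharge). The function name is hypothetical. It makes the distinction in the table explicit: LPSP is an energy ratio, while availability is a time fraction.

```python
import numpy as np


def trial_metrics(load_kwh: np.ndarray, served_kwh: np.ndarray) -> dict:
    """Reliability metrics for one simulated period at a fixed time step.

    load_kwh:   energy demanded in each time step
    served_kwh: energy actually delivered (generation plus storage discharge)
    """
    lps = np.clip(load_kwh - served_kwh, 0.0, None)  # LPS_t, unserved energy per step
    short = lps > 0
    return {
        "LPSP": lps.sum() / load_kwh.sum(),  # energy-weighted
        "EENS_kWh": lps.sum(),
        "Availability": 1.0 - short.mean(),  # time-weighted
    }
```

For example, a four-hour trial with 10 kWh demanded each hour and 10, 5, 10, and 0 kWh served gives LPSP = 15/40 = 0.375 but availability = 0.5, so the two indices should not be used interchangeably.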

Experimental Protocols

Protocol 1: MCS for Annual HRES Reliability Assessment

Objective: To estimate the annual LPSP and EENS for a given HRES configuration.

- Model Definition: Define the HRES system architecture (e.g., PV rated power, wind turbine capacity, battery bank size, inverter rating).

- Input Distributions: Characterize all stochastic inputs (see table above) with their parameters (e.g., Beta(α, β) for irradiance, Weibull(k, c) for wind speed).

- Time-step Resolution: Set simulation time-step (Δt), typically 1 hour.

- Pseudo-random Number Generation: Initialize a Mersenne Twister or similar high-quality random number generator with a seed.

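- Simulation Loop (Single Trial): a. For each hour (t = 1 to 8760), sample random values from all input distributions. b. Convert irradiance and wind samples to power output using manufacturer power curves. c. Execute the defined HRES energy balance and dispatch logic (e.g., charge the battery if generation is in excess, discharge it if there is a deficit). d. Calculate LPS(t) if load cannot be met. e. Record hourly data. Independent hourly draws in step (a) ignore temporal autocorrelation, which matters whenever storage is modeled; drawing each trial's inputs from synthetic time series that preserve autocorrelation (e.g., the Markov chain synthesis described earlier) avoids this.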

- Aggregation: After 8760 steps, calculate trial LPSP and EENS.

- Repetition: Repeat the Simulation Loop and Aggregation steps for N trials (e.g., N = 10,000).

- Output Analysis: Analyze the distribution of output metrics (LPSP, EENS). Calculate mean, standard deviation, and percentiles (e.g., 95th) to present risk-informed reliability indices.
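The Simulation Loop and Repetition steps can be sketched as below. The system sizes, the daylight envelope, the Beta/Weibull/normal parameters, and the greedy charge-on-surplus, discharge-on-deficit dispatch are hypothetical placeholders. Inputs are drawn independently each hour, as the Simulation Loop describes, so this sketch does not preserve temporal autocorrelation.

```python
import numpy as np

HOURS = 8760


def dispatch(gen_kw, load_kw, cap_kwh=200.0, p_max_kw=50.0, rte=0.9,
             soc_min=0.2, soc0=0.5):
    """Greedy battery dispatch at 1-hour steps; returns unserved energy LPS_t (kWh).

    Surplus charges the battery and deficits discharge it, within power and
    state-of-charge limits; losses are split as sqrt(rte) on charge and discharge.
    """
    eta = np.sqrt(rte)
    e, e_min = soc0 * cap_kwh, soc_min * cap_kwh
    lps = np.zeros(len(load_kw))
    for t, (g, d) in enumerate(zip(gen_kw, load_kw)):
        net = g - d
        if net >= 0.0:  # store surplus, limited by power rating and headroom
            e += min(net, p_max_kw, (cap_kwh - e) / eta) * eta
        else:  # discharge to cover the deficit, limited by power and usable energy
            out = max(min(-net, p_max_kw, (e - e_min) * eta), 0.0)
            e -= out / eta
            lps[t] = -net - out
    return lps


def run_trials(n_trials, seed=0, pv_kwp=100.0, wind_kw=60.0):
    """Repeat the single-trial loop; returns an (n_trials, 2) array of (LPSP, EENS kWh)."""
    rng = np.random.default_rng(seed)
    hour = np.arange(HOURS) % 24
    # Hypothetical daylight envelope (zero at night) scaled by Beta-distributed clearness.
    daylight = np.clip(np.sin(np.pi * (hour - 6) / 12), 0.0, None)
    results = np.empty((n_trials, 2))
    for i in range(n_trials):
        pv = pv_kwp * daylight * rng.beta(2.0, 2.0, HOURS)
        v = 7.0 * rng.weibull(2.0, HOURS)  # Weibull(k=2, c=7 m/s), independent hours
        wind = wind_kw * np.clip((v**3 - 27.0) / (1728.0 - 27.0), 0.0, 1.0) * (v <= 25.0)
        load = np.maximum(rng.normal(40.0, 6.0, HOURS), 0.0)
        lps = dispatch(pv + wind, load)
        results[i] = lps.sum() / load.sum(), lps.sum()
    return results
```

Column 0 holds LPSP and column 1 holds EENS per trial, so `np.percentile(results[:, 1], 95)` gives the 95th-percentile EENS used in Output Analysis.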

Protocol 2: MCS for Component Failure Impact on HRES

Objective: To assess the impact of stochastic component failures on system availability.

- Failure Modeling: For each critical component (PV array, wind turbine, inverter, battery), define Mean Time Between Failures (MTBF) and Mean Time To Repair (MTTR).

- Distribution Assignment: Model time-to-failure as Exponential(λ=1/MTBF). Model repair time as Lognormal or Exponential.

- Simulation Initialization: Set all components to "operational" at t=0.

- Event-Driven Simulation Loop: a. For each component, sample its first time-to-failure. b. Advance the simulation clock to the next event (either a failure or a repair completion). c. Update the system state: if a component fails, check whether the system can meet load with the remaining components, then sample its repair time and schedule a repair completion event; if a component is repaired, sample its next time-to-failure and schedule the next failure event. d. Log periods of system downtime. e. Repeat until the end of the desired simulation period (e.g., 20 years).

- Replication: Repeat the entire simulation for multiple independent runs (e.g., 5,000 runs).

- Metric Calculation: Calculate long-term average availability and frequency of failure events from the aggregated results.
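The event-driven loop can be sketched with a priority queue (`heapq`) of pending failure and repair events. This assumes exponential time-to-failure and, for brevity, exponential repair times (the protocol also allows log-normal repair), independent components, and a user-supplied rule `system_up` that stands in for the load check; the function name and the example rule are hypothetical.

```python
import heapq

import numpy as np


def event_driven_availability(mtbf_h, mttr_h, system_up, horizon_h, rng):
    """Discrete-event simulation of independent repairable components.

    mtbf_h, mttr_h: per-component mean time to failure / repair (hours)
    system_up:      hypothetical rule, list[bool] -> bool, True if load can be met
    Returns (availability, number_of_system_outages).
    """
    up = [True] * len(mtbf_h)
    # Priority queue of (event_time, component); each component starts with a failure pending.
    events = [(rng.exponential(m), i) for i, m in enumerate(mtbf_h)]
    heapq.heapify(events)
    t_prev, downtime, outages, sys_up = 0.0, 0.0, 0, True
    while events and events[0][0] < horizon_h:
        t, i = heapq.heappop(events)
        if not sys_up:
            downtime += t - t_prev  # system was down since the previous event
        t_prev = t
        up[i] = not up[i]  # a failure if it was up, a repair completion otherwise
        # Schedule this component's next event: a failure if now up, else a repair.
        heapq.heappush(events, (t + rng.exponential(mtbf_h[i] if up[i] else mttr_h[i]), i))
        now_up = system_up(up)
        outages += int(sys_up and not now_up)
        sys_up = now_up
    if not sys_up:
        downtime += horizon_h - t_prev
    return 1.0 - downtime / horizon_h, outages
```

For instance, `system_up = lambda s: s[0] and (s[1] or s[2])` models an inverter that must be working plus two wind turbines of which at least one must run.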

Visualizations

Title: MCS Workflow for HRES Hourly Reliability Analysis

Title: Stochastic Inputs to Reliability Outputs in HRES MCS

The Scientist's Toolkit: Key Computational Tools and Data Sources

| Item / Solution | Function in HRES-MCS Research |
|---|---|
| Numerical Computing Environment (e.g., MATLAB, Python/NumPy) | Provides core mathematical libraries, random number generators, and vectorized operations for efficient simulation coding. |
| High-Quality Pseudo-Random Number Generator (PRNG) | Engine for generating uncorrelated, long-period random number sequences (e.g., Mersenne Twister). Critical for result validity. |
| Statistical Distribution Fitting Toolbox | Used to fit theoretical distributions (Weibull, Beta) to historical meteorological and load data for accurate input modeling. |
| Time-Series Weather Data (TMY, NSRDB) | Typical Meteorological Year or long-term historical data forms the empirical basis for defining input probability distributions. |
| Component Reliability Databases (e.g., IEEE Std 493, MIL-HDBK-217F) | Sources for failure rate (λ) and repair time data for generators, inverters, and storage to parameterize failure models. |
| Parallel Computing Library (e.g., MATLAB Parallel Computing Toolbox, CUDA) | Enables parallel execution of thousands of independent Monte Carlo trials, drastically reducing computation time. |
| Result Visualization & Statistical Analysis Package | For creating histograms, cumulative distribution functions, and sensitivity analysis plots of output metrics. |

Within the broader thesis on Monte Carlo Simulation (MCS) for Hybrid Renewable Energy System (HRES) reliability research, the quantification of system performance through key reliability indices is paramount. These indices translate stochastic operational data generated via MCS into standardized metrics for system design, comparison, and optimization. This application note details the definition, calculation, and experimental protocols for three core indices: Loss of Load Probability (LOLP), Loss of Energy Expectation (LOEE, also called Expected Energy Not Supplied, EENS), and Availability. Researchers and scientists working on energy systems can use these protocols to benchmark HRES configurations within their MCS frameworks.

Core Reliability Indices: Definitions and Quantitative Benchmarks

The following indices are calculated over a defined simulation period (T), typically one year, using time-series data from MCS that models resource variability, component failures, and load demand.

Table 1: Core Reliability Indices for HRES Assessment

| Index | Acronym | Formal Definition | Typical Unit | Benchmark Range (HRES) |
|---|---|---|---|---|
| Loss of Load Probability | LOLP | Probability that the system load exceeds the available generation capacity. | dimensionless (probability) | 0.01 - 0.05 (1-5% of time) |
| Loss of Energy Expectation / Expected Energy Not Supplied | LOEE / EENS | Expected energy deficit when load exceeds available generation. | kWh/year | Varies by system size; often <5% of annual load. |
| System Availability | A | Proportion of time the system meets the load demand. | % | > 95% for critical loads |

Key Relationships:

- LOEE and EENS are synonymous and interchangeable terms.

- Availability = 1 - LOLP (when LOLP is expressed as a probability of time).

- EENS = Σ (Load Power - Available Power) * Δt, summed over all time steps where a deficit exists.

Experimental Protocols for Index Calculation via Monte Carlo Simulation

Protocol 3.1: Sequential Monte Carlo Simulation for Time-Series Indices

Objective: To calculate LOLP, EENS, and Availability indices for an HRES over a long-term period by simulating its chronological operation, accounting for weather variability, component state transitions, and load profile.

Methodology:

- System Modeling: Define the HRES architecture (e.g., PV, Wind Turbine, Battery, Diesel Generator). Model power outputs using resource data (solar irradiance, wind speed) and component efficiency curves.

- State Modeling: For each non-deterministic component (e.g., generator, inverter, battery), define a two-state transition model (Up ↔ Down) using Mean Time To Failure (MTTF) and Mean Time To Repair (MTTR) values.

- Load Definition: Input a chronological load profile (hourly resolution recommended).

- Simulation Engine: Run a sequential MCS for N years (e.g., N=1000 simulated years). a. For each time step (e.g., 1 hour): i. Sample component states based on reliability parameters, conditioning each transition on the component's current state so that outages persist for realistic durations. ii. Calculate total available power from renewable sources and available units. iii. Execute the HRES energy management strategy (e.g., charge/discharge battery, dispatch generator). iv. Calculate net power balance: Balance = Available Power - Load. b. Record a deficit event if Balance < 0.

- Index Calculation:

- LOLP: (Total number of time steps with deficit) / (Total number of time steps in simulation).

- EENS: Sum of all energy deficits (kW * Δt) across the entire simulation, divided by the number of simulated years to get an annual expectation.

- Availability: (Total time steps - deficit time steps) / (Total time steps) * 100%.
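Step i of the Simulation Engine can be sketched as a two-state Markov chain advanced one time step at a time. The per-step transition probabilities 1 − exp(−Δt/MTTF) and 1 − exp(−Δt/MTTR) follow from the exponential model; drawing each hour's state independently from the long-run availability would instead produce unrealistically short, roughly one-hour outages. The function name is hypothetical.

```python
import numpy as np


def hourly_component_states(mttf_h, mttr_h, hours, rng, dt_h=1.0):
    """Chronological up/down sequence sampled step by step (True = up).

    Each step draws a transition conditioned on the current state, so outages
    persist for realistic durations instead of being redrawn independently.
    """
    p_fail = 1.0 - np.exp(-dt_h / mttf_h)    # P(up -> down within one step)
    p_repair = 1.0 - np.exp(-dt_h / mttr_h)  # P(down -> up within one step)
    u = rng.random(hours).tolist()  # plain floats keep the Python loop fast
    up = np.empty(hours, dtype=bool)
    state = True
    for t in range(hours):
        state = (u[t] >= p_fail) if state else (u[t] < p_repair)
        up[t] = state
    return up
```

Over a long run, the up fraction approaches MTTF / (MTTF + MTTR) and down periods last about MTTR hours on average.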

Protocol 3.2: Non-Sequential (State-Sampling) Monte Carlo for LOLP

Objective: To efficiently estimate the probability-based index LOLP for a system without simulating chronological sequences, focusing on system capacity adequacy.

Methodology:

- Capacity Outage Probability Table (COPT): For systems with multiple conventional units, build a COPT. For HRES, create a multi-state model that combines the probabilistic power output states of renewable sources (e.g., via clustering of historical data).

- Random Sampling: In each simulation trial: a. Randomly sample the power output state for each renewable source based on its probability distribution. b. Randomly sample the state (Up/Down) for each conventional component. c. Sum the total available capacity for this trial state.

- Load Comparison: Compare the sampled available capacity against the peak load (or a daily load block) for the period.

- LOLP Calculation: LOLP = (Number of trials where available capacity < load) / (Total number of trials).
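The COPT idea can be sketched as a convolution of independent unit capacity distributions. Here each unit is a list of (available kW, probability) states, so a two-state conventional unit and a multi-state renewable source (e.g., clustered output levels) share one format; this available-capacity form is equivalent to a capacity-outage table. Independence between units and the example state probabilities are assumptions, and the function names are hypothetical. The exact result is useful for checking a state-sampling estimate of LOLP.

```python
from collections import defaultdict


def capacity_distribution(units):
    """Distribution of total available capacity for independent multi-state units.

    units: list of per-unit state lists [(available_kw, probability), ...]; a two-state
    unit of capacity C and forced outage rate q is [(C, 1 - q), (0.0, q)].
    Returns {available_kw: probability}, built by convolving one unit at a time.
    """
    dist = {0.0: 1.0}
    for states in units:
        nxt = defaultdict(float)
        for cap_so_far, p in dist.items():
            for cap, q in states:
                nxt[cap_so_far + cap] += p * q
        dist = dict(nxt)
    return dist


def analytic_lolp(units, load_kw):
    """Exact LOLP for one load level: P(available capacity < load)."""
    return sum(p for cap, p in capacity_distribution(units).items() if cap < load_kw)
```

For two 50 kW units with a forced outage rate of 0.1 each and a 60 kW load, the available capacity is below the load with probability 0.18 + 0.01 = 0.19.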

Visualization of Monte Carlo-Based Reliability Assessment Workflow

Diagram 1: Workflow for HRES Reliability Assessment via MCS.

The Scientist's Toolkit: Essential Computational Tools & Data

Table 2: Essential Computational & Data Tools for HRES Reliability Research

| Item / Solution | Function in HRES Reliability Research |
|---|---|
| Time-Series Weather Data (TMY, NSRDB, MERRA-2) | Provides solar irradiance, wind speed, temperature inputs for renewable generation models in sequential MCS. |
| Component Reliability Databases (IEEE Std 493, OREDA) | Sources for Mean Time Between Failures (MTBF) and Mean Time To Repair (MTTR) data for generators, converters, and balance-of-system components. |
| Load Profile Data (Residential, Commercial, Industrial) | Chronological demand data against which system adequacy is measured. Critical for EENS calculation. |
| Monte Carlo Simulation Software (MATLAB, Python with NumPy/Pandas, R, HOMER Pro) | Core computational environment for implementing the experimental protocols. Enables custom modeling and automation. |
| Statistical Analysis Package (Python SciPy, R Stats) | For analyzing MCS output, calculating confidence intervals, and fitting probability distributions to input data. |
| Energy Management System (EMS) Algorithm Code | A digital model of the dispatch rules (e.g., battery charge/discharge logic) that governs the HRES operation within each MCS time step. |

1. Introduction & Application Notes

The paradigm of complex system analysis, whether in pharmacometrics or renewable energy systems, is shifting toward integration. Current research trends emphasize the move from isolated, component-level models to high-fidelity, multi-physics, multi-scale integrated system models. In pharmacology, this mirrors the shift from single-target drug models to whole-cell models and organ-on-a-chip platforms that integrate pharmacokinetics, pharmacodynamics, and disease progression. For hybrid renewable energy systems (HRES), this involves co-simulating stochastic weather inputs, power electronics, electrochemical battery degradation, grid dynamics, and demand profiles within a unified reliability framework.

The critical research gap is the lack of standardized, transparent, and reproducible protocols for constructing, validating, and interrogating these integrated models. The computational burden of high-fidelity simulation, especially when coupled with probabilistic methods like Monte Carlo for uncertainty quantification, remains a significant barrier. These application notes provide a structured approach to bridge this gap.

2. Data Synthesis: Key Quantitative Trends in Integrated Modeling

Table 1: Comparison of Modeling Fidelity Levels and Computational Cost

| Fidelity Level | Typical Application | Simulation Speed (Relative) | Key Input Variables | Monte Carlo Run Feasibility |
|---|---|---|---|---|
| Low-Fidelity (Empirical) | Preliminary screening, long-term capacity planning | 1x (Baseline) | Aggregated weather data, yearly load averages, component MTBF* | High (10,000+ runs) |
| Medium-Fidelity (Quasi-Static) | Annual reliability assessment, techno-economic analysis | 0.01x | Time-series data (hourly), temperature-dependent efficiency, stateful component models | Medium (1,000 - 10,000 runs) |
| High-Fidelity (Dynamic Integrated) | Transient stability analysis, fault response, control system validation | 0.0001x | Sub-second meteorological gusts, electromagnetic transients, detailed thermal & aging models | Low (10 - 100 runs, requiring HPC**) |

*MTBF: Mean Time Between Failures. **HPC: High-Performance Computing.

Table 2: Research Gaps in Integrated HRES Reliability Modeling

| Gap Category | Specific Deficiency | Impact on Reliability Assessment |
|---|---|---|
| Data Integration | Lack of synchronized, high-resolution temporal datasets (solar irradiance, wind speed, load, grid price) at the same location. | Introduces spurious correlation errors and undermines model validation. |
| Cross-Domain Model Coupling | Absence of standardized interfaces between weather, power, and degradation models (e.g., FMU* standards). | Limits model portability, increases development time, hinders reproducibility. |
| Uncertainty Propagation | Inadequate treatment of epistemic (model form, limited knowledge) vs. aleatory (inherent variability) uncertainty across the integrated model chain. | Leads to under- or over-confident reliability predictions and poor decision support. |
| Validation Metrics | No consensus on system-level, time-dependent reliability metrics beyond Loss of Load Probability (LOLP). | Fails to capture frequency, duration, and severity of energy deficits. |

*FMU: Functional Mock-up Unit for co-simulation.

3. Experimental Protocols

Protocol 1: Monte Carlo Simulation for Time-to-Failure Analysis of an Integrated HRES

Objective: To estimate the probability distribution of system failure time for a solar-wind-battery HRES, accounting for correlated component degradation and stochastic resource availability.

Materials & Software: See "The Scientist's Toolkit" below.

Procedure:

- Model Integration: Implement the integrated system model (Fig. 1) in a co-simulation environment (e.g., Python with FMPy). Link the high-fidelity photovoltaic model (electrical + thermal), wind turbine model, lithium-ion battery degradation model, and load profile.

- Define Failure Threshold: Set a system failure criterion (e.g., load power demand unmet for >4 consecutive hours).

- Initialize Parameters: Define probability distributions for all stochastic input variables (Table 3).

- Monte Carlo Loop: For i = 1 to N (e.g., N = 10,000):

  a. Sampling: Draw a complete parameter set P_i and a 20-year hourly weather/time series W_i from their respective distributions.

  b. Simulation: Execute the integrated model with P_i and W_i for the simulated period or until the failure threshold is met. Record the time-to-failure TTF_i.

  c. Logging: Store TTF_i, key degradation states (e.g., battery capacity fade), and the failure mode.

- Post-Processing: Construct the empirical cumulative distribution function (CDF) of TTF, treating runs that survive the full 20-year horizon as right-censored. Perform sensitivity analysis (e.g., Sobol indices) to rank input parameters by their contribution to output variance.
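The Monte Carlo loop and the CDF post-processing step can be sketched in Python as below. This is a minimal illustration, not the integrated co-simulation: `simulate_ttf` is a hypothetical toy surrogate in which usable battery capacity fades linearly at a sampled rate and "failure" means capacity below 70 % of nameplate. The nominal fade values are illustrative assumptions; only the 5 % relative SD of the calendar-aging coefficient is taken from Table 3.

```python
import numpy as np

HORIZON_YEARS = 20.0


def simulate_ttf(rng, horizon_years=HORIZON_YEARS):
    """Hypothetical stand-in for one integrated-model run.

    Toy model: capacity fades linearly at a sampled rate; the system 'fails'
    once capacity drops below 70 % of nameplate. Replace with the real
    co-simulation call. Returns (time_to_failure_years, failed_flag).
    """
    alpha_nominal = 0.012                                     # assumed calendar fade, 1.2 %/year
    alpha = rng.normal(alpha_nominal, 0.05 * alpha_nominal)   # Table 3: SD = 0.05 * alpha
    cycling_fade = rng.exponential(0.005)                     # assumed extra cycling fade per year
    ttf = 0.30 / (alpha + cycling_fade)                       # years until capacity < 70 %
    if ttf >= horizon_years:
        return horizon_years, False                           # survived: right-censored
    return ttf, True


def run_monte_carlo(n_runs=10_000, seed=42):
    rng = np.random.default_rng(seed)
    results = np.array([simulate_ttf(rng) for _ in range(n_runs)])
    return results[:, 0], results[:, 1].astype(bool)


def empirical_cdf(ttf, failed, t_grid):
    """Estimate P(TTF <= t) for t up to the common horizon.

    All runs are censored at the same horizon, so the fraction of runs that
    failed by time t is a valid estimate for any t <= horizon.
    """
    return np.array([np.mean(failed & (ttf <= t)) for t in t_grid])


ttf, failed = run_monte_carlo(n_runs=2_000, seed=1)
cdf = empirical_cdf(ttf, failed, t_grid=np.array([0.0, 5.0, 10.0, 15.0, 20.0]))
print(cdf)
```

If runs were censored at different times (for example, when some are stopped early), a Kaplan-Meier estimator would be needed instead of the simple fraction.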

Table 3: Stochastic Input Variables for Protocol 1

| Variable | Distribution Type | Parameters | Correlation Consideration |
|---|---|---|---|
| Solar Panel Initial Degradation Rate | Lognormal | Mean = 0.5%/year, SD = 0.15%/year | Correlated with first-year irradiance. |
| Battery Calendar Aging Coefficient | Normal | Mean = α, SD = 0.05*α | Independent. |
| Annual Wind Speed Mean | Weibull | Shape=k, Scale=λ (site-specific) | Correlated with seasonal solar resource. |
| Hourly Load Forecast Error | Normal | Mean = 0 kW, SD = 5% of forecast | Auto-correlated time series. |

Protocol 2: Validation of an Integrated Model Against Operational Data

Objective: To calibrate and validate a high-fidelity HRES model using a historical dataset of performance.

Procedure:

- Data Segmentation: Partition one year of synchronized operational data (power output, weather, load) into a 9-month calibration set and a 3-month validation set.

- Model Calibration: a. Define a loss function (e.g., Root Mean Square Error of total system output power). b. Using the calibration dataset, estimate key model parameters (e.g., inverter efficiency curve coefficients, battery internal resistance), either as posterior distributions via Bayesian calibration (e.g., Markov Chain Monte Carlo) or as best-fit point estimates via a global optimization algorithm (e.g., Particle Swarm Optimization).

- Model Validation: a. Run the calibrated model with the validation-set input data. b. Compare model output to measured output using quantitative metrics: Normalized Mean Absolute Error (NMAE), R-squared, and a time-dependent reliability metric (e.g., Energy Supply Deficit).

- Gap Analysis: Quantify the remaining discrepancy. If significant, iterate model structure (e.g., add a soiling loss model for PV) and repeat Protocols 1 & 2.
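A minimal sketch of the data segmentation and the validation metrics, assuming one year of hourly records stored in time order. The function names are illustrative, and normalizing NMAE by the mean measured output is an assumption (normalizing by rated capacity is another common convention).

```python
import numpy as np


def chronological_split(series, calibration_fraction=0.75):
    """Time-ordered split; 0.75 of a year = 9-month calibration, 3-month validation."""
    k = int(round(len(series) * calibration_fraction))
    return series[:k], series[k:]


def validation_metrics(observed, predicted):
    """RMSE, NMAE and R^2 of model output against measured output."""
    observed = np.asarray(observed, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    err = predicted - observed
    rmse = float(np.sqrt(np.mean(err ** 2)))
    # Assumption: normalize by the mean absolute measured output.
    nmae = float(np.mean(np.abs(err)) / np.mean(np.abs(observed)))
    ss_res = np.sum(err ** 2)
    ss_tot = np.sum((observed - observed.mean()) ** 2)
    r2 = float(1.0 - ss_res / ss_tot)
    return {"RMSE": rmse, "NMAE": nmae, "R2": r2}


cal, val = chronological_split(np.arange(8760))
print(len(cal), len(val))   # 6570 2190
print(validation_metrics([1, 2, 3, 4], [2, 3, 4, 5]))
```

A chronological split (rather than a random one) keeps the validation period genuinely unseen, which matters because hourly power and weather data are strongly autocorrelated.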

4. Visualizations

Integrated HRES Monte Carlo Simulation Workflow

Monte Carlo Time-to-Failure Analysis Protocol

5. The Scientist's Toolkit: Research Reagent Solutions

| Tool / Material | Function / Description | Example in HRES Research |
|---|---|---|
| Co-Simulation Framework (e.g., FMPy, OMNISIM) | Enables the coupling of models from different physical domains (e.g., electrical, thermal) and tools via standardized interfaces (FMI). | Linking a MATLAB/Simulink battery model with a Modelica wind turbine model and a Python-based solar forecast. |
| Uncertainty Quantification Library (e.g., UQLab, Chaospy, SALib) | Provides algorithms for propagating input uncertainties through complex models and performing global sensitivity analysis. | Computing Sobol indices to determine if wind speed uncertainty impacts reliability more than battery degradation uncertainty. |
| High-Performance Computing (HPC) Scheduler (e.g., SLURM) | Manages the distribution of thousands of independent Monte Carlo simulation runs across a computing cluster. | Parallel execution of Protocol 1, reducing wall-clock time from months to hours. |
| Synchronized, High-Resolution Dataset | The fundamental "reagent" for model validation. Must include coincident time-series for all relevant environmental and operational variables. | A 1-second resolution dataset of irradiance, module temperature, wind speed, power output, and grid frequency for a test microgrid. |
| Bayesian Calibration Toolbox (e.g., PyMC, Stan) | Provides statistical methods to infer model parameters and their uncertainties from observed data, yielding a posterior distribution. | Calibrating the parameters of a complex battery degradation model against laboratory cycling data with measurement noise. |

Building Your Monte Carlo Model: A Step-by-Step Framework for HRES Analysis

Application Notes: System Architecture & Component Definitions for MC Simulation

Within the framework of Monte Carlo (MC) simulation for hybrid renewable energy system (HRES) reliability research, the first critical step is the deterministic definition of system architecture and the stochastic modeling of its components. This establishes the foundational digital twin upon which probabilistic failure and performance analyses are conducted. The architecture is typically an AC-coupled system integrating diverse generation and storage sources to supply a specified load profile.

Core Architectural Principle: The system is designed for autonomous operation (off-grid/islanded mode), where the primary renewable sources (PV and Wind) are supplemented by battery energy storage and a diesel genset as a reliability backup. Power conversion devices (inverters/rectifiers) facilitate energy flow between AC and DC buses.

Component Modeling Philosophy: For MC reliability simulation, each component is modeled using a two-state (operational/failed) or multi-state Markov process. Key parameters include Mean Time To Failure (MTTF), Mean Time To Repair (MTTR), and performance coefficients that degrade stochastically over time. The models must capture both catastrophic failures and performance degradation.

Quantitative Component Modeling Parameters (Stochastic Inputs)

The following parameters, derived from recent literature and manufacturer datasheets, serve as primary inputs for the probability distributions used in MC sampling.

Table 1: Photovoltaic (PV) Array Stochastic Model Parameters

| Parameter | Symbol | Typical Value/Unit | Distribution Type (for MC) | Notes |
|---|---|---|---|---|
| Degradation Rate | d_PV | 0.5 - 1.0 %/year | Normal (μ=0.75, σ=0.15) | Annual power output loss. |
| Mean Time To Failure | MTTF_PV | 25 - 30 years | Weibull (scale λ=28, shape k=3) | Panel failure, excluding inverters. |
| Mean Time To Repair | MTTR_PV | 24 - 72 hours | Lognormal (μ=3.8, σ=0.5; parameters of ln(TTR)) | Replacement of faulty modules. |
| Temperature Coefficient | β | -0.3 to -0.5 %/°C | Uniform (-0.5, -0.3) | Efficiency loss per °C of cell temperature above 25 °C (STC). |

Table 2: Wind Turbine Stochastic Model Parameters

| Parameter | Symbol | Typical Value/Unit | Distribution Type (for MC) | Notes |
|---|---|---|---|---|
| Mean Time Between Failures | MTBF_WT | 3,000 - 7,000 hours | Exponential (λ=1/5000 per hour) | Mechanical/electrical failures. |
| Mean Time To Repair | MTTR_WT | 48 - 120 hours | Lognormal (μ=4.2, σ=0.6; parameters of ln(TTR)) | Complex mechanical repairs. |
| Availability | A_WT | 95 - 98% | Derived: A = MTBF / (MTBF + MTTR) | Operational readiness. |
| Cut-in, Rated, Cut-out Wind Speed | Vc, Vr, V_f | 3, 12, 25 m/s | Deterministic | Power curve boundaries. |

Table 3: Battery Energy Storage (Lithium-ion) Stochastic Model Parameters

| Parameter | Symbol | Typical Value/Unit | Distribution Type (for MC) | Notes |
|---|---|---|---|---|
| Cycle Life (@80% DoD) | N_cyc | 3,000 - 6,000 cycles | Weibull (scale λ=4500, shape k=1.2) | Cycles to 80% of initial capacity. |
| Calendar Life | T_cal | 10 - 15 years | Normal (μ=12.5, σ=1.5) | Aging independent of cycling. |
| Round-Trip Efficiency | η_batt | 92 - 97% | Normal (μ=0.95, σ=0.01) | Energy in/out efficiency. |
| Depth of Discharge Limit | DoD | 70 - 80% | Uniform (0.70, 0.80) | Operational constraint to prolong life. |

Table 4: Power Converter & Diesel Genset Parameters

| Parameter | Symbol | Typical Value/Unit | Distribution Type (for MC) | Component |
|---|---|---|---|---|
| Mean Time To Failure | MTTF_Inv | 8 - 12 years | Weibull (λ=10, k=2) | Inverter/Charger |
| Efficiency | η_Inv | 94 - 98% | Normal (μ=0.96, σ=0.01) | Inverter/Charger |
| Failure Rate | λ_gen | 0.02 - 0.05 failures per operating hour | Exponential (λ=0.035) | Diesel Genset |
| Minimum Load Ratio | L_min | 25 - 40% | Deterministic | Diesel Genset |
| Fuel Consumption at 100% Load | - | 0.25 - 0.30 L/kWh | Uniform (0.25, 0.30) | Diesel Genset |
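The distributions in Tables 1-4 map directly onto NumPy `Generator` methods. The sketch below draws one Monte Carlo scenario; it assumes Weibull(λ, k) denotes scale λ and shape k, Lognormal(μ, σ) gives the parameters of ln(X), and Exponential(λ) is a rate (mean 1/λ). The dictionary keys are hypothetical names.

```python
import numpy as np


def sample_component_parameters(rng):
    """Draw one Monte Carlo scenario from the distributions in Tables 1-4.

    Assumed conventions: Weibull(λ, k) -> scale λ, shape k; Lognormal(μ, σ)
    -> mean and SD of ln(X); Exponential(λ) -> rate λ, mean 1/λ.
    """
    return {
        # Table 1: PV array
        "pv_degradation_pct_per_year": rng.normal(0.75, 0.15),
        "pv_ttf_years": 28.0 * rng.weibull(3.0),
        "pv_ttr_hours": rng.lognormal(3.8, 0.5),
        "pv_temp_coeff_pct_per_degC": rng.uniform(-0.5, -0.3),
        # Table 2: wind turbine
        "wt_ttf_hours": rng.exponential(5000.0),           # rate 1/5000 per hour
        "wt_ttr_hours": rng.lognormal(4.2, 0.6),
        # Table 3: Li-ion battery
        "batt_cycle_life": 4500.0 * rng.weibull(1.2),
        "batt_calendar_life_years": rng.normal(12.5, 1.5),
        "batt_round_trip_eff": rng.normal(0.95, 0.01),
        "batt_dod_limit": rng.uniform(0.70, 0.80),
        # Table 4: inverter/charger and diesel genset
        "inv_ttf_years": 10.0 * rng.weibull(2.0),
        "inv_efficiency": rng.normal(0.96, 0.01),
        "gen_ttf_operating_hours": rng.exponential(1.0 / 0.035),
        "gen_fuel_l_per_kwh": rng.uniform(0.25, 0.30),
    }


def availability(mtbf_hours, mttr_hours):
    """Steady-state availability A = MTBF / (MTBF + MTTR)."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


rng = np.random.default_rng(7)
scenario = sample_component_parameters(rng)
print(round(availability(5000.0, 80.0), 4))   # 0.9843
```

Note that NumPy's `weibull(a)` returns a unit-scale variate, so the scale is applied by multiplication.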

Experimental Protocol: Component Failure Data Acquisition & Model Calibration

Objective: To empirically calibrate the stochastic failure and degradation models for each HRES component using field data and accelerated life testing (ALT) protocols.

Protocol 3.1: Field Reliability Data Collection for Wind Turbines

- Site Selection: Identify 50-100 geographically dispersed turbines of the same make/model with SCADA data logging.

- Data Logging: Extract time-series data for: operational status (on/off/fault), power output, wind speed, and error codes over a minimum 5-year period.

- Event Definition: Define a "failure event" as any unscheduled downtime >1 hour requiring manual intervention.

- Data Processing: Calculate time-to-failure and time-to-repair intervals. Treat operating periods still ongoing at the end of the record as right-censored observations rather than discarding them.

- Statistical Fitting: Use Maximum Likelihood Estimation (MLE) to fit Exponential, Weibull, and Lognormal distributions to the TTF and TTR data. Select best fit via Akaike Information Criterion (AIC).
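The fitting and model-selection step can be sketched with SciPy's general-purpose optimizer. Failed units contribute the log-density and right-censored units the log-survival function to the likelihood, and AIC = 2p + 2·NLL. This is an illustrative implementation under those assumptions (log-parameterization, Nelder-Mead search, synthetic data), not a replacement for a dedicated package such as lifelines or reliability.

```python
import numpy as np
from scipy import stats
from scipy.optimize import minimize


def nll_exponential(params, t, failed):
    (log_rate,) = params
    rate = np.exp(log_rate)
    # failures: log f = log(rate) - rate*t ; censored: log S = -rate*t
    return -(np.count_nonzero(failed) * log_rate - rate * np.sum(t))


def nll_weibull(params, t, failed):
    log_k, log_eta = params
    k, eta = np.exp(log_k), np.exp(log_eta)
    z = t / eta
    log_f = np.log(k / eta) + (k - 1.0) * np.log(z) - z ** k
    log_s = -(z ** k)
    return -(np.sum(log_f[failed]) + np.sum(log_s[~failed]))


def nll_lognormal(params, t, failed):
    mu, log_sigma = params
    sigma = np.exp(log_sigma)
    log_f = stats.norm.logpdf(np.log(t), mu, sigma) - np.log(t)
    log_s = stats.norm.logsf(np.log(t), mu, sigma)
    return -(np.sum(log_f[failed]) + np.sum(log_s[~failed]))


def fit_and_rank(t, failed):
    """Fit three candidate models by censored MLE; return them sorted by AIC."""
    t = np.asarray(t, dtype=float)
    failed = np.asarray(failed, dtype=bool)
    m = np.log(t.mean())
    candidates = {
        "Exponential": (nll_exponential, [-m], lambda x: {"rate": np.exp(x[0])}),
        "Weibull": (nll_weibull, [0.0, m],
                    lambda x: {"shape": np.exp(x[0]), "scale": np.exp(x[1])}),
        "Lognormal": (nll_lognormal, [np.log(t).mean(), 0.0],
                      lambda x: {"mu": x[0], "sigma": np.exp(x[1])}),
    }
    fits = []
    for name, (nll, x0, to_natural) in candidates.items():
        res = minimize(nll, x0, args=(t, failed), method="Nelder-Mead",
                       options={"maxiter": 2000, "xatol": 1e-6, "fatol": 1e-6})
        aic = 2 * len(x0) + 2 * res.fun
        fits.append((name, aic, to_natural(res.x)))
    return sorted(fits, key=lambda f: f[1])


rng = np.random.default_rng(0)
t_true = 1000.0 * rng.weibull(2.5, size=2000)      # synthetic TTF data, hours
failed = t_true < 1500.0                           # observation window ends at 1500 h
ranking = fit_and_rank(np.minimum(t_true, 1500.0), failed)
for name, aic, params in ranking:
    print(f"{name:12s} AIC={aic:10.1f} {params}")
```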

Protocol 3.2: Accelerated Life Testing for PV Module Degradation

- Sample Preparation: Select 20+ identical PV modules. Divide into 4 test groups.

- Stress Factors: Apply combined environmental stresses in climate chambers:

- Group A: Thermal Cycling (IEC 61215: -40°C to +85°C, 200 cycles).

- Group B: Damp Heat (85°C, 85% RH, 1000 hours).

- Group C: UV Exposure (15 kWh/m²).

- Group D: Combined sequence of B, A, C.

- Performance Measurement: At intervals of 100 thermal cycles or 250 damp-heat hours, measure I-V curves under Standard Test Conditions (STC) to determine peak power degradation.

- Model Calibration: Fit an Arrhenius-based degradation model to the power loss data. Translate accelerated stress hours to equivalent field years.
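A minimal sketch of the Arrhenius translation from chamber hours to field years. The activation energy (0.7 eV), the effective field module temperature (45 °C), and the power readings are illustrative assumptions. A pure Arrhenius factor also ignores humidity; damp-heat data are often modeled with an additional Peck-type relative-humidity term.

```python
import numpy as np

BOLTZMANN_EV_PER_K = 8.617333262e-5


def arrhenius_af(ea_ev, t_use_c, t_stress_c):
    """Acceleration factor AF = exp[(Ea/k_B) * (1/T_use - 1/T_stress)], T in kelvin."""
    t_use = t_use_c + 273.15
    t_stress = t_stress_c + 273.15
    return float(np.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_use - 1.0 / t_stress)))


def field_degradation_per_year(stress_hours, relative_power, ea_ev, t_use_c, t_stress_c,
                               field_hours_per_year=8760.0):
    """Linear power-loss rate under stress, mapped to an equivalent field rate.

    Assumes the module sits at the constant effective temperature t_use_c
    for every hour of the field year.
    """
    loss_per_stress_hour = -np.polyfit(stress_hours, relative_power, 1)[0]
    af = arrhenius_af(ea_ev, t_use_c, t_stress_c)
    return loss_per_stress_hour / af * field_hours_per_year


hours = np.array([0.0, 250.0, 500.0, 750.0, 1000.0])      # damp-heat checkpoints
p_rel = np.array([1.000, 0.995, 0.991, 0.985, 0.980])      # hypothetical P/P0 readings
print(arrhenius_af(0.7, 45.0, 85.0))                       # ≈ 17.3
print(field_degradation_per_year(hours, p_rel, 0.7, 45.0, 85.0))
```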

System Architecture and Simulation Workflow Diagrams

Diagram Title: Hybrid Renewable Energy System AC-Coupled Architecture

Diagram Title: Monte Carlo Reliability Simulation Workflow for HRES

The Researcher's Toolkit: Essential Materials & Reagents

Table 5: Key Research Reagent Solutions for HRES Reliability Experimentation

| Item / Solution | Function in Research Context | Example/Specification |
|---|---|---|
| SCADA & Field Data Logs | Raw empirical data source for failure/performance history. Essential for model validation. | 1-second to 1-hour resolution logs of power, status, environmental variables. |
| Accelerated Life Testing (ALT) Chamber | Applies elevated stress (thermal, humidity, UV) to components to induce rapid degradation for model calibration. | Climate chamber with thermal cycling (-40°C to +120°C) and humidity control (10-95% RH). |
| IV Curve Tracer | Measures the current-voltage characteristics of PV modules to quantify performance degradation during ALT or field aging. | Pulsed solar simulator meeting IEC 60904 standards. |
| Reliability Analysis Software | Fits statistical distributions (Weibull, Exponential, Lognormal) to time-to-failure data and calculates reliability indices. | Weibull++, R (survival package), Python (lifelines, reliability). |
| Monte Carlo Simulation Platform | Core engine for stochastic modeling and probabilistic reliability assessment of the integrated system. | MATLAB/Simulink, Python (numpy, pandas), HOMER Pro, or custom C++/Java code. |
| Energy Dispatch Algorithm Code | Deterministic logic that simulates system operation (source prioritization, charge/discharge) for each MC scenario. | Rule-based or optimization-based (e.g., LP) script integrated into the MC loop. |

1. Introduction

Within the framework of a Monte Carlo simulation (MCS) for hybrid renewable energy system (HRES) reliability research, the accurate characterization of stochastic input variables is the computational bedrock. This step transforms abstract uncertainty into quantifiable probabilistic models that drive the simulation. For researchers and scientists, this involves treating environmental and mechanical inputs as random variables defined by appropriate probability density functions (PDFs) and temporal correlation structures. This application note details the protocols for characterizing four critical stochastic variable classes.

2. Variable Characterization Protocols & Data

2.1 Solar Irradiance

Solar irradiance is characterized by its diurnal and seasonal cycles, superimposed with stochastic fluctuations due to cloud cover.

- Probability Distribution: The clearness index (Kt), defined as the ratio of global horizontal irradiance (GHI) to extraterrestrial irradiance on a horizontal surface (G0), is commonly modeled using a Beta distribution.

- Spatio-temporal Correlation: Requires autoregressive models (e.g., AR(1)) to maintain realistic time-series sequences.

Table 1: Solar Irradiance Characterization Parameters

| Parameter | Symbol | Typical Distribution/Model | Key Parameters (Example Values) | Data Source |
|---|---|---|---|---|
| Clearness Index | Kt | Beta Distribution | α = 0.6 - 1.2, β = 0.8 - 1.5 (location-dependent) | NASA POWER, NSRDB, local pyranometers |
| Daily Profile | GHI(t) | Deterministic + Stochastic | Site-specific latitude/tilt, AR(1) φ ≈ 0.7 - 0.9 | Meteorological year data (TMY) |
| Spatial Correlation | ρ | Multivariate Normal | Correlation distance ~50-100 km | Satellite-derived irradiance maps |

Experimental Protocol for Site-Specific Beta Fitting:

- Data Acquisition: Obtain at least one year of high-resolution (hourly or sub-hourly) GHI data for the target site.

- Calculate Extraterrestrial Irradiance (G0): Compute G0 for each timestep using the solar geometry equations (Duffie & Beckman).

- Compute Clearness Index: Kt = GHI / G0 for all daylight hours. Filter out nighttime (G0 = 0) values.

- Distribution Fitting: Use Maximum Likelihood Estimation (MLE) to fit the Beta distribution to the Kt data series. The PDF is: f(kt) = (Γ(α+β)/(Γ(α)Γ(β))) * kt^(α-1) * (1-kt)^(β-1), for 0 ≤ kt ≤ 1.

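- Goodness-of-Fit Test: Validate the fit using the Kolmogorov-Smirnov (K-S) test at the 5% significance level. Because α and β are estimated from the same data, the standard K-S p-value is optimistic; a parametric bootstrap of the K-S statistic gives a stricter test.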
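The G0 computation and the Beta fit can be sketched as follows. The sketch uses the Cooper declination and eccentricity-correction formulas from Duffie & Beckman with an assumed solar constant of 1361 W/m², and evaluates G0 at each timestep's midpoint, which only approximates the hourly integral. The `min_g0` cutoff for low-sun hours is an illustrative assumption, and the demonstration uses synthetic Kt values rather than measured data.

```python
import numpy as np
from scipy import stats

SOLAR_CONSTANT = 1361.0  # W/m^2 (assumed; some tools use 1367)


def extraterrestrial_horizontal(day_of_year, solar_hour, latitude_deg):
    """Extraterrestrial irradiance on a horizontal plane, G0 (W/m^2).

    solar_hour is apparent solar time in hours (12 = solar noon).
    """
    n = np.asarray(day_of_year, dtype=float)
    phi = np.radians(latitude_deg)
    delta = np.radians(23.45 * np.sin(np.radians(360.0 * (284.0 + n) / 365.0)))  # declination
    omega = np.radians(15.0 * (np.asarray(solar_hour, dtype=float) - 12.0))        # hour angle
    g_on = SOLAR_CONSTANT * (1.0 + 0.033 * np.cos(np.radians(360.0 * n / 365.0)))
    cos_zenith = np.cos(phi) * np.cos(delta) * np.cos(omega) + np.sin(phi) * np.sin(delta)
    return np.clip(g_on * cos_zenith, 0.0, None)    # 0 when the sun is below the horizon


def fit_clearness_beta(ghi, g0, min_g0=50.0):
    """MLE Beta fit of Kt = GHI/G0 over daylight steps, plus a K-S check."""
    ghi = np.asarray(ghi, dtype=float)
    g0 = np.asarray(g0, dtype=float)
    day = g0 > min_g0                                     # drop night and very low sun
    kt = np.clip(ghi[day] / g0[day], 1e-4, 1.0 - 1e-4)    # keep inside the open interval (0, 1)
    a, b, _, _ = stats.beta.fit(kt, floc=0.0, fscale=1.0)
    ks = stats.kstest(kt, "beta", args=(a, b))
    return a, b, ks.statistic, ks.pvalue


rng = np.random.default_rng(3)
g0 = np.full(5000, 1000.0)
ghi = stats.beta.rvs(2.0, 1.2, size=5000, random_state=rng) * g0
print(fit_clearness_beta(ghi, g0))
```

Fixing `floc=0` and `fscale=1` restricts the fit to the standard Beta on [0, 1], which is the support assumed for Kt.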

2.2 Wind Speed

Wind speed at hub height is characterized by its intermittency and autocorrelation.

- Probability Distribution: The two-parameter Weibull distribution is the standard model.

- Temporal Model: A first-order Markov chain or autoregressive moving average (ARMA) model is used to generate sequential, correlated data.

Table 2: Wind Speed Characterization Parameters

| Parameter | Symbol | Typical Distribution/Model | Key Parameters (Example Values) | Data Source |
|---|---|---|---|---|
| Hourly Speed | v | Weibull Distribution | Shape, k = 1.8 - 2.3; Scale, c = 6 - 10 m/s | MERRA-2, NOAA, SCADA data |
| Temporal Autocorrelation | - | ARMA(1,1) | AR coefficient φ₁ ≈ 0.85 - 0.98 | Time-series analysis of source data |
| Vertical Profile | v(z) | Power Law | Hellmann exponent α = 0.1 - 0.3 | Mast measurements at multiple heights |

Experimental Protocol for Weibull Parameter Estimation & Time-Series Generation:

- Data Collection: Aggregate time-series wind speed data at a reference height (e.g., 10m anemometer).

- Scale to Hub Height: Apply the power law: vhub = vref * (zhub / zref)^α.

- Weibull Fitting: Estimate the shape (k) and scale (c) parameters using MLE or the empirical (Justus) moment approximation based on the mean speed v̄ and standard deviation σ: k = (σ/v̄)^-1.086 and c = v̄ / Γ(1 + 1/k).

- ARMA Model Calibration: Fit an ARMA(1,1) model to the normalized, detrended time series. The model form is: v_t = μ + φ₁(v_{t-1} - μ) + θ₁ε_{t-1} + ε_t, where ε_t is white noise.

- Synthetic Generation: Use the fitted Weibull for marginal distribution and the ARMA model to impose realistic autocorrelation on the generated sequence.
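The estimation and generation steps can be sketched with a stationary Gaussian AR(1) process (an ARMA(1,1) with θ₁ = 0, a simplification) mapped to Weibull marginals through Φ and the Weibull quantile function. The Hellmann exponent of 1/7, the heights, and the demonstration input are assumptions.

```python
import numpy as np
from scipy import stats
from scipy.special import gamma


def hub_height_speed(v_ref, z_ref, z_hub, alpha=1.0 / 7.0):
    """Power-law extrapolation v_hub = v_ref * (z_hub / z_ref) ** alpha."""
    return np.asarray(v_ref, dtype=float) * (z_hub / z_ref) ** alpha


def weibull_justus(speeds):
    """Empirical moment-based estimates: k = (σ/v̄)^-1.086, c = v̄ / Γ(1 + 1/k)."""
    v = np.asarray(speeds, dtype=float)
    k = (v.std(ddof=1) / v.mean()) ** -1.086
    c = v.mean() / gamma(1.0 + 1.0 / k)
    return k, c


def synthetic_wind(k, c, phi, n_hours, seed=None):
    """Hourly speeds with Weibull(k, c) marginals and AR(1)-type persistence.

    The lag-1 autocorrelation of the output is close to, but slightly
    below, phi because the Gaussian-to-Weibull mapping is nonlinear.
    """
    rng = np.random.default_rng(seed)
    z = np.empty(n_hours)
    z[0] = rng.standard_normal()
    innovation_sd = np.sqrt(1.0 - phi ** 2)          # keeps Var(z_t) = 1
    for t in range(1, n_hours):
        z[t] = phi * z[t - 1] + innovation_sd * rng.standard_normal()
    return stats.weibull_min.ppf(stats.norm.cdf(z), k, scale=c)


v_10m = stats.weibull_min.rvs(2.0, scale=6.0, size=8760, random_state=1)  # synthetic 10 m data
v_hub = hub_height_speed(v_10m, 10.0, 80.0)
k_hat, c_hat = weibull_justus(v_hub)
series = synthetic_wind(k_hat, c_hat, phi=0.9, n_hours=8760, seed=2)
print(k_hat, c_hat, series[:5])
```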

2.3 Load Profiles

Electrical demand is stochastic, driven by human activity and weather.

- Probability Distribution: Often modeled using a Normal or Log-Normal distribution for a given hour of the day and type of day (weekday/weekend).

- Time-Series Approach: A bottom-up approach using Markov chains for appliance usage or a top-down use of validated synthetic profiles (e.g., from CREST model).
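A minimal top-down sketch of sampling hourly load around weekday and weekend mean profiles, using either a Normal (truncated at zero) or a Log-Normal distribution per hour. The profiles, the 10 % coefficient of variation, and the treatment of hours as mutually independent are illustrative assumptions; measured demand is autocorrelated, which the ARIMA or Markov-chain models in Table 3 would capture.

```python
import numpy as np


def lognormal_from_mean_sd(mean, sd):
    """(μ, σ) of ln(X) for a lognormal X with the given mean and SD."""
    sigma2 = np.log1p((sd / mean) ** 2)
    return np.log(mean) - sigma2 / 2.0, np.sqrt(sigma2)


def sample_day(mean_profile_kw, cv, rng, lognormal=True):
    """One day of hourly demand; each hour drawn independently around its mean (> 0)."""
    mean = np.asarray(mean_profile_kw, dtype=float)
    sd = cv * mean
    if lognormal:
        mu, sigma = lognormal_from_mean_sd(mean, sd)
        return rng.lognormal(mu, sigma)
    return np.clip(rng.normal(mean, sd), 0.0, None)  # normal, truncated at zero


def sample_year(weekday_kw, weekend_kw, cv=0.1, first_weekday=0, seed=None, lognormal=True):
    """8760 hourly values; day d is a weekend day when (first_weekday + d) % 7 >= 5."""
    rng = np.random.default_rng(seed)
    days = [
        sample_day(weekend_kw if (first_weekday + d) % 7 >= 5 else weekday_kw, cv, rng, lognormal)
        for d in range(365)
    ]
    return np.concatenate(days)


hours = np.arange(24)
weekday = 1.5 + 1.0 * np.exp(-((hours - 19) / 2.5) ** 2)   # hypothetical evening-peak profile, kW
weekend = 0.9 * weekday + 0.2
hourly_load = sample_year(weekday, weekend, seed=0)
print(hourly_load.shape, hourly_load.mean())
```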

Table 3: Load Profile Characterization Parameters

| Parameter | Typical Distribution/Model | Key Parameters | Data Source |
|---|---|---|---|
| Residential Hourly Load | Gaussian Mixture / Markov Chain | Mean & SD per hour, day-type; Appliance duty cycles | Smart meter data (e.g., UK-DALE, Pecan Street) |
| Commercial Load | Conditional Normal Distribution | Baseline dependent on occupancy & HVAC schedules | Building management system (BMS) logs |
| Aggregated Community Load | ARIMA models | Strong diurnal & weekly seasonality | Utility grid supply point data |

2.4 Failure & Repair Rates

These rates define the stochastic availability of system components (PV inverters, wind turbines, batteries).

- Probability Distribution: Time-to-failure is typically modeled with an Exponential distribution (constant failure rate, λ) or a Weibull distribution (for aging). Time-to-repair is often log-normal.

- Process: Modeled as a two-state continuous-time Markov process (Up/Down states).

Table 4: Reliability Data for Common HRES Components

| Component | Failure Rate (λ) [failures/year] | Mean Time to Repair (MTTR) [hours] | Repair Time Distribution | Source (Example) |
|---|---|---|---|---|
| PV Module | 0.02 - 0.05 | 4 - 24 | Log-Normal | NREL Component Reliability Database |
| PV Inverter | 0.1 - 0.3 | 8 - 48 | Log-Normal | IEEE Gold Book, Manufacturer Data |
| Wind Turbine | 3 - 8 (per turbine) | 12 - 72 | Log-Normal | WMEP, LWK Databases |
| Li-ion Battery Bank | 0.05 - 0.1 | 24 - 96 | Log-Normal | Industry REX reports |

Experimental Protocol for Reliability Simulation:

- Define Reliability Block Diagram (RBD): Map the system series/parallel structure.

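- Assign Parameters: For each component i, assign a failure rate (λ_i) and a mean repair time (r_i). The repair rate is μ_i = 1/r_i.

- Generate Time-to-Failure (TTF): For a simulation run, sample TTF_i ~ Exp(λ_i) or Weibull(β, η). If λ_i is in failures/year, convert TTF_i to hours (×8760) to match the repair times.

- Generate Time-to-Repair (TTR): Sample TTR_i from a lognormal distribution with mean MTTR_i and standard deviation SD_i. Because the lognormal is parameterized by the mean and standard deviation of ln(TTR), convert first: σ_ln² = ln(1 + SD_i²/MTTR_i²) and μ_ln = ln(MTTR_i) - σ_ln²/2.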

- State Determination: For each timestep in the MCS, determine component and system state based on the cumulative TTF and TTR sequences.
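The TTF/TTR sampling and state-determination steps amount to an alternating renewal process per component. The sketch below assumes exponential TTF with λ in failures/year, lognormal TTR specified by its mean and SD, and hourly resolution in which any hour touched by an outage counts as Down (a conservative choice). The series and parallel helpers apply a simple RBD; the example parameters are illustrative.

```python
import numpy as np


def lognormal_params(mean, sd):
    """Convert the mean/SD of TTR into (μ_ln, σ_ln) of ln(TTR)."""
    sigma2 = np.log1p((sd / mean) ** 2)
    return np.log(mean) - sigma2 / 2.0, np.sqrt(sigma2)


def component_up_hours(lam_per_year, mttr_h, ttr_sd_h, n_hours, rng):
    """Hourly Up/Down history (True = Up) of one repairable component."""
    mu_ln, sigma_ln = lognormal_params(mttr_h, ttr_sd_h)
    up = np.ones(n_hours, dtype=bool)
    t = 0.0
    while True:
        t += rng.exponential(8760.0 / lam_per_year)      # TTF in hours
        if t >= n_hours:
            break
        ttr = rng.lognormal(mu_ln, sigma_ln)
        up[int(t):int(np.ceil(t + ttr))] = False         # any hour touched by the outage is Down
        t += ttr
    return up


def series_up(*states):
    return np.logical_and.reduce(states)                 # system Up only if all blocks are Up


def parallel_up(*states):
    return np.logical_or.reduce(states)                  # system Up if any branch is Up


rng = np.random.default_rng(11)
inverter = component_up_hours(3.0, 48.0, 24.0, 8760 * 200, rng)   # 200 synthetic years
print(inverter.mean())
```

For these inputs the long-run availability should approach MTTF/(MTTF + MTTR) = 2920/(2920 + 48) ≈ 0.984, slightly lower because of the hourly rounding.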

3. The Scientist's Toolkit: Research Reagent Solutions

Table 5: Essential Computational & Data Resources

| Item | Function/Description | Example Tools/Libraries |
|---|---|---|
| Meteorological Database | Provides long-term, validated time-series data for irradiance, temperature, and wind. | NASA POWER, ERA5, NSRDB, TMY files |
| Statistical Computing Environment | Platform for distribution fitting, time-series analysis, and MCS execution. | Python (SciPy, statsmodels, pandas), R, MATLAB |
| Probability Distribution Fitter | Software module to fit empirical data to theoretical PDFs and test goodness-of-fit. | SciPy.stats, R fitdistrplus, MATLAB Distribution Fitter app |
| Time-Series Generator | Generates synthetic, correlated sequences of stochastic variables from fitted models. | Custom ARMA/Markov code, statsmodels.tsa |
| Reliability Database | Curated source of failure rate (λ) and repair time data for engineering components. | OREDA, IEEE Standard 493, NREL Database |
| High-Performance Computing (HPC) Resource | Enables the execution of thousands of MCS trials for robust statistical output. | Cloud computing (AWS, GCP), local computing clusters |

4. Visualization of the Characterization Workflow

Title: Stochastic Variable Characterization Workflow

Title: Input Variables Feed Monte Carlo Simulation

Within the broader thesis on Monte Carlo (MC) simulation for Hybrid Renewable Energy System (HRES) reliability research, the selection of simulation logic is critical. Two principal methodologies exist: Time-Series Simulation and Sequential Monte Carlo (SMC) Simulation. This application note details their comparative application for modeling HRES comprising solar photovoltaic (PV), wind turbines, and battery storage, providing protocols for implementation.

Core Methodologies: Comparative Framework

Time-Series (Quasi-Static) Simulation

This approach uses chronological, typically hourly, historical or synthetic data for renewable generation and load. It performs a deterministic power balance at each time step, counting failures (e.g., loss of load) when supply cannot meet demand. Reliability indices are calculated directly from the simulation history.

Sequential Monte Carlo (SMC) Simulation

SMC is a state-duration sampling approach. It uses probability distributions (e.g., for component failure and repair times, wind speed, solar irradiance) to randomly generate sequences of system states over time. It accounts for the stochastic dependencies between weather states and component failures, simulating the actual chronological transition of the system.

Table 1: Comparative Analysis of Simulation Logics for HRES Reliability

| Feature | Time-Series Simulation | Sequential Monte Carlo (SMC) Simulation |
|---|---|---|
| Core Logic | Deterministic analysis of chronological profiles. | Stochastic sampling of state duration sequences. |
| Input Data | Time-series data for load, wind speed, solar irradiance. | Probability distributions (e.g., Weibull for wind, Markov for component states). |
| Output | Single reliability index set from the series. | Statistical distribution of indices via repeated yearly simulations. |
| Key Advantage | Computationally efficient, simple to implement. | Models random contingencies and weather dependencies more realistically. |
| Key Limitation | Cannot inherently model random equipment failures; assumes perfect component reliability unless explicitly integrated. | Computationally intensive; requires more complex input data modeling. |
| Typical Use Case | Sizing studies, initial feasibility, systems where weather variability dominates reliability concerns. | Detailed reliability assessment incorporating both weather and component stochasticity. |

Experimental Protocols

Protocol 3.1: Time-Series Simulation for HRES

Objective: To calculate the Loss of Load Hours (LOLH) and Energy Not Supplied (ENS) using one year of hourly data.

Materials: See "Scientist's Toolkit" (Section 5).

Procedure:

- Data Preparation: Align one year of concurrent hourly time-series for: PV generation (from irradiance), wind generation (from wind speed), and electrical load. Normalize to system capacity.

- Power Balance Calculation: For each hour t (from 1 to 8760), compute: Net Power = (PV_t + Wind_t) - Load_t.

- Battery State Modeling:

  a. Initialize the battery State of Charge: SOC(0) = 0.5 * SOC_max.

  b. If Net Power > 0, charge the battery: SOC(t) = min(SOC(t-1) + (Net Power * η_charge * Δt) / Capacity, SOC_max). Surplus beyond SOC_max is curtailed.

  c. If Net Power < 0, discharge the battery to meet the load: Energy_Needed = |Net Power| * Δt and SOC(t) = max(SOC(t-1) - Energy_Needed / (η_discharge * Capacity), SOC_min).

  d. If SOC(t) reaches SOC_min and load is still unmet, record a Loss of Load event for that hour; the unmet energy is Energy_Needed minus the energy the battery actually delivered.

- Index Calculation: After iterating through all 8760 hours:

  a. LOLH = Σ (Hours with Loss of Load).

  b. ENS = Σ (Unmet energy during Loss of Load hours).
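Protocol 3.1 can be sketched as a single chronological loop. Battery capacity is in kWh and SOC is a fraction of capacity; the efficiencies, SOC limits, and example inputs are illustrative assumptions. Unmet energy is counted only for the part of each deficit that the battery could not cover.

```python
import numpy as np


def simulate_time_series(pv_kw, wind_kw, load_kw, capacity_kwh, soc_min=0.2, soc_max=1.0,
                         eta_charge=0.95, eta_discharge=0.95, dt_h=1.0):
    """Chronological power balance with a battery; returns (LOLH, ENS_kWh, SOC trace)."""
    pv, wind, load = (np.asarray(x, dtype=float) for x in (pv_kw, wind_kw, load_kw))
    soc = 0.5 * soc_max                                  # SOC(0) = 0.5 * SOC_max
    lolh, ens_kwh = 0, 0.0
    soc_trace = np.empty(len(load))
    for t in range(len(load)):
        net_kw = pv[t] + wind[t] - load[t]
        if net_kw >= 0.0:
            # Charge; anything beyond SOC_max is curtailed.
            soc = min(soc + net_kw * eta_charge * dt_h / capacity_kwh, soc_max)
        else:
            needed_kwh = -net_kw * dt_h
            # Energy the battery can still deliver to the load before reaching SOC_min.
            deliverable_kwh = max(0.0, (soc - soc_min) * capacity_kwh * eta_discharge)
            delivered_kwh = min(needed_kwh, deliverable_kwh)
            soc -= delivered_kwh / (eta_discharge * capacity_kwh)
            unmet_kwh = needed_kwh - delivered_kwh
            if unmet_kwh > 1e-9:                         # Loss of Load in this hour
                lolh += 1
                ens_kwh += unmet_kwh
        soc_trace[t] = soc
    return lolh, ens_kwh, soc_trace


# Two hours of 2 kW demand with no generation, 10 kWh battery starting at 50 % SOC.
lolh, ens, trace = simulate_time_series([0, 0], [0, 0], [2, 2], capacity_kwh=10.0)
print(lolh, round(ens, 3))   # 1 1.15
```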

Protocol 3.2: Sequential Monte Carlo Simulation for HRES

Objective: To estimate the probability distribution of the Loss of Load Expectation (LOLE) by simulating multiple synthetic years incorporating component failures.

Materials: See "Scientist's Toolkit" (Section 5).

Procedure:

- Define System States: Model key components (PV array, wind turbine, battery converter, grid connection) with two states: Up (operational) and Down (failed). Define failure rate (λ, failures/year) and mean repair time (r, hours) for each.

- Initialize: Set the simulation clock T = 0. Set all components to 'Up'. Initialize the SOC.

- Generate Transition Times:

  a. For each component i, sample a Time-To-Failure (TTF) from an exponential distribution: TTF_i = -ln(U) / λ_i, where U is a uniform random number on (0,1]. With λ_i in failures/year, multiply by 8760 to express TTF_i in hours.

  b. When a component fails, sample its Time-To-Repair (TTR) from a lognormal distribution with mean r_i.

  c. For weather, use a Markov chain or an autoregressive model to sample the duration of weather states (e.g., low-wind/high-sun periods).

- Determine Next Event: Advance the simulation clock to the next imminent event (the smallest of all pending TTF, TTR, and weather-state transition times).

- Update System State & Power Balance: At the event time, update the state of the changed component. Perform the deterministic power balance and battery calculation (as in Protocol 3.1, Steps 2-3) for the duration since the last event, given the current system state (which components are up/down).

- Record & Loop: Record any Loss of Load events. Update the remaining time for all ongoing processes. Return to Step 3 to sample the next event for the component whose state just changed.

- Annualize & Repeat: Continue until T ≥ 8760 hours (one synthetic year). Calculate the annual LOLE. Repeat from Step 2 for N = 5000 synthetic years to build a distribution of LOLE.

- Calculate Statistics: The expected LOLE is the mean of the N annual LOLE values. A 95% confidence interval for this mean is mean ± 1.96·s/√N, where s is the sample standard deviation of the annual values; s itself describes the year-to-year spread of LOLE.
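A minimal next-event sketch of Protocol 3.2 for a deliberately simplified system: `n_units` identical, independent components (exponential TTF, lognormal TTR) serving a constant load that needs `units_needed` of them. Weather states and battery dispatch are omitted so that the event logic stays visible; all parameter values are assumptions. Comments map the code to the protocol steps.

```python
import numpy as np

HOURS_PER_YEAR = 8760.0


def simulate_year(rng, lam_per_year, mttr_h, ttr_sigma_ln, n_units, units_needed):
    """One synthetic year by next-event simulation; returns loss-of-load hours."""
    mean_ttf_h = HOURS_PER_YEAR / lam_per_year              # λ given in failures/year
    mu_ln = np.log(mttr_h) - ttr_sigma_ln ** 2 / 2.0        # lognormal TTR with mean mttr_h
    up = np.ones(n_units, dtype=bool)                       # Step 2: all components Up
    next_event = rng.exponential(mean_ttf_h, size=n_units)  # Step 3: first TTF per unit
    clock, lol_hours = 0.0, 0.0
    while clock < HOURS_PER_YEAR:
        i = int(np.argmin(next_event))                      # Step 4: next imminent event
        t_next = min(next_event[i], HOURS_PER_YEAR)
        if np.count_nonzero(up) < units_needed:             # state constant since last event
            lol_hours += t_next - clock
        clock = t_next
        if clock >= HOURS_PER_YEAR:
            break
        up[i] = not up[i]                                   # Step 5: failure or repair completes
        # Step 6: resample only the component whose state just changed.
        if up[i]:
            next_event[i] = clock + rng.exponential(mean_ttf_h)
        else:
            next_event[i] = clock + rng.lognormal(mu_ln, ttr_sigma_ln)
    return lol_hours


def sequential_mc(n_years, seed=None, **system):
    """Steps 7-8: repeat synthetic years; return mean LOLE, its 95 % CI, annual values."""
    rng = np.random.default_rng(seed)
    annual = np.array([simulate_year(rng, **system) for _ in range(n_years)])
    mean = annual.mean()
    half_width = 1.96 * annual.std(ddof=1) / np.sqrt(n_years)
    return mean, (mean - half_width, mean + half_width), annual


mean, ci, annual = sequential_mc(2000, seed=0, lam_per_year=2.0, mttr_h=50.0,
                                 ttr_sigma_ln=0.5, n_units=3, units_needed=2)
print(f"LOLE = {mean:.2f} h/year, 95% CI = ({ci[0]:.2f}, {ci[1]:.2f})")
```

For these inputs each unit's unavailability is q = 50/(4380 + 50) ≈ 0.0113, so the steady-state LOLE should be near 8760·[3q²(1 - q) + q³] ≈ 3.3 hours/year, which gives a quick analytical check on the simulator.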

Visualization of Simulation Logic

Title: HRES Simulation Method Workflow Comparison

Title: HRES Simulation Model I/O Structure

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Data for HRES Reliability Simulation

| Item / Software | Function / Purpose | Typical Source / Example |
|---|---|---|
| Meteorological Data | Provides time-series for solar irradiance (GHI, DNI), wind speed, and temperature for energy modeling. | NASA POWER, ERA5 Reanalysis, NSRDB. |
| Load Profile Data | Chronological electrical demand curve for the studied site (residential, commercial, industrial). | OpenEI, site-specific metering, synthetic generation algorithms. |
| Component Reliability Parameters | Failure rate (λ) and Mean Time To Repair (MTTR) for PV modules, inverters, wind turbines, batteries. | IEEE Gold Book, PRISM database, manufacturer datasheets. |
| Numerical Computing Environment | Platform for implementing simulation algorithms, data processing, and statistical analysis. | Python (NumPy, Pandas), MATLAB, R. |
| Statistical Distribution Libraries | Functions to sample from exponential, Weibull, lognormal, and multinomial distributions for SMC. | numpy.random, MATLAB Statistics and ML Toolbox. |
| Markov Chain/AR Model Toolbox | For generating synthetic, stochastic weather sequences that preserve historical patterns. | Python pomegranate, statsmodels, custom code. |
| Parallel Computing Resources | To accelerate SMC by running multiple synthetic years simultaneously. | Python multiprocessing, joblib, high-performance computing clusters. |

Application Notes

In Monte Carlo (MC) simulation for hybrid renewable energy system reliability research, the sampling engine is the core computational driver. Its purpose is to generate stochastic input sequences that model the inherent variability of renewable resources (solar irradiance, wind speed) and load demand. The fidelity and computational efficiency of the simulation are directly governed by the quality of pseudo-random number generation (PRNG) and the effectiveness of applied variance reduction techniques (VRTs).

Key Considerations for Renewable Energy Systems:

- Temporal Correlation: Renewable resources exhibit strong autocorrelation and cross-correlation (e.g., between wind speeds at different turbines). PRNG streams must be transformable to preserve these statistical relationships.

- Rare Events: System failure events (e.g., prolonged low-generation periods) are rare but critical. Standard Monte Carlo requires prohibitively many samples to estimate their probability accurately, necessitating VRTs.

- High-Dimensionality: Systems with multiple, spatially distributed resources and components involve sampling from high-dimensional joint probability distributions.

Core Protocols & Methodologies

This protocol details the generation of time-series inputs for solar and wind power, respecting their marginal distributions and correlation structure.

Materials & Data Requirements:

- Historical time-series data for solar irradiance (kW/m²) and wind speed (m/s) at the site.

- Fitted probability distributions for each resource (e.g., Weibull for wind speed, Beta for solar irradiance clearness index).

- Calculated correlation matrix (lag-zero and potentially lag-one).

Procedure:

- Seed Initialization: Initialize a proven PRNG (e.g., Mersenne Twister, PCG64) with a defined seed for reproducibility.

- Generate Independent Normals: Sample a sequence of independent, identically distributed (i.i.d.) standard normal random variables Z ~ N(0,1) for each time step and resource, using the PRNG with a normal-variate transformation (e.g., Box-Muller or the ziggurat method) or inverse-transform sampling.

- Induce Correlation: Apply a Cholesky decomposition to the target correlation matrix C, so that C = L * L^T. Multiply the vector of independent normals by L to obtain a vector of correlated standard normal variables X_corr = L * Z.

- Transform to Target Marginals: Map each correlated normal variable to the uniform distribution using the Gaussian CDF: U = Φ(X_corr). Then apply the inverse CDF (quantile function) of the target marginal distribution (e.g., Weibull, Beta) to obtain the final correlated wind speed or solar irradiance sample: Y = F_target^{-1}(U). This is a Gaussian copula: because the transformation is monotone, rank (Spearman) correlations of Y equal those of X_corr, but the Pearson correlation of Y is generally somewhat weaker in magnitude than the target, so C may need iterative adjustment (the NORTA approach) if exact Pearson targets are required.

- Validation: Verify that the output sequences maintain the target marginal distributions and inter-resource correlations using statistical tests (Kolmogorov-Smirnov for the marginals; Pearson or Spearman correlation for the dependence).
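The correlated-sampling procedure can be sketched as a Gaussian copula for lag-zero correlation only (lag-one structure would need an additional time-series model). The correlation value (-0.3 between wind speed and clearness index) and the marginal parameters are illustrative assumptions. `np.random.default_rng` uses PCG64, and `SeedSequence.spawn` derives statistically independent child streams for parallel replications.

```python
import numpy as np
from scipy import stats


def independent_streams(seed, n_streams):
    """Reproducible, independent PCG64 generators for parallel replications."""
    return [np.random.default_rng(s) for s in np.random.SeedSequence(seed).spawn(n_streams)]


def correlated_resources(n, corr, wind_k, wind_c, kt_a, kt_b, rng):
    """Gaussian-copula samples: Weibull wind speed and Beta clearness index."""
    L = np.linalg.cholesky(np.asarray(corr, dtype=float))    # C = L @ L.T
    z = rng.standard_normal((2, n))                            # independent N(0, 1)
    x = L @ z                                                  # correlated N(0, 1)
    u = stats.norm.cdf(x)                                      # U = Φ(X_corr)
    wind = stats.weibull_min.ppf(u[0], wind_k, scale=wind_c)   # Y = F^-1(U)
    kt = stats.beta.ppf(u[1], kt_a, kt_b)
    return wind, kt


rng = independent_streams(seed=2024, n_streams=4)[0]
wind, kt = correlated_resources(20_000, [[1.0, -0.3], [-0.3, 1.0]], 2.0, 8.0, 2.0, 1.2, rng)
print(stats.spearmanr(wind, kt)[0])
```

Because Φ and the quantile functions are monotone, the Spearman correlation of the outputs equals that of the Gaussian pair, (6/π)·arcsin(ρ/2) ≈ -0.29 for ρ = -0.3, which makes a convenient validation check.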

Protocol 2.2: Implementing Stratified Sampling for Rare Failure Event Analysis

This protocol applies stratified sampling to reduce variance in estimating the Loss of Load Probability (LOLP) or Expected Energy Not Supplied (EENS).

Materials & Data Requirements:

- System model (power flow, dispatch logic).

- Defined failure state (e.g., load shedding > 0).

- Input probability distributions for all stochastic variables.

Procedure:

- Define Stratification Variables: Identify one or two key input variables that most influence system failure (e.g., total wind power output at a critical hour, or net load). These become the stratification variables.

- Partition the Distribution: Divide the range of each stratification variable into K mutually exclusive and exhaustive strata. Define strata more finely in the tail regions (very low wind, very high load), where failures concentrate.

- Assign Probabilities: Calculate the probability p_k that a random input falls into stratum k, based on its theoretical distribution.

- Allocate Samples: Determine the number of samples n_k to draw from each stratum. For proportional allocation, n_k = N · p_k, where N is the total sample budget. Ensure n_k ≥ 1 so that every stratum is sampled.

- Sample Within Strata: Within each stratum k, draw n_k input vectors. First, sample the stratification variable from its distribution conditioned on the stratum. For example, draw a uniform variate on [F(a_k), F(b_k)], where a_k and b_k are the stratum bounds, and apply the inverse CDF F^{-1}. Then, conditional on this value, sample all other input variables (e.g., solar power at other times, component failures) using standard conditional sampling techniques or Latin Hypercube Sampling within the stratum.

- Run Simulation & Aggregate: Execute the system reliability simulation for each sampled input vector. The unbiased estimate of the probability of failure P_f is

$$P_f = \sum_{k=1}^{K} p_k \frac{m_k}{n_k}$$

where m_k is the number of failure events observed in stratum k.
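The sketch below applies the estimator to a hypothetical toy model, chosen because its failure probability has a closed form for checking. Net load S ~ Normal(20, 8) kW is the stratification variable. A backup unit with an assumed 5% unavailability removes 15 kW of an assumed 45 kW capacity, and failure occurs when S exceeds the available capacity. Strata are defined directly in probability space, and proportional allocation is used with a floor of one sample per stratum.

```python
import numpy as np
from scipy import stats

# Hypothetical toy model parameters (kW and outage probability).
MU, SD, CAP, OUTAGE_KW, Q_OUT = 20.0, 8.0, 45.0, 15.0, 0.05

def fails(s, outage):
    """Failure when net load exceeds the capacity available in this sample."""
    return s > CAP - OUTAGE_KW * outage

def stratified_pf(N, u_edges, seed):
    rng = np.random.default_rng(seed)
    p = np.diff(u_edges)                              # p_k: probability of each stratum
    n = np.maximum(np.rint(N * p).astype(int), 1)     # proportional allocation, >= 1 each
    pf = 0.0
    for k in range(len(p)):
        # Conditional sampling of S within stratum k: uniform on [F(a_k), F(b_k)]
        # (edges are already in probability space), then the inverse CDF.
        u = rng.uniform(u_edges[k], u_edges[k + 1], size=n[k])
        s = stats.norm.ppf(u, loc=MU, scale=SD)
        outage = rng.random(n[k]) < Q_OUT             # remaining input sampled as usual
        m_k = np.count_nonzero(fails(s, outage))      # failures observed in stratum k
        pf += p[k] * m_k / n[k]                       # P_f = sum_k p_k * m_k / n_k
    return pf

# Strata in probability space, finer in the high-net-load tail.
edges = np.array([0.0, 0.5, 0.9, 0.99, 0.999, 1.0])
pf_hat = stratified_pf(200_000, edges, seed=7)
```

For this toy case the exact value is 0.95·P(S > 45) + 0.05·P(S > 30), roughly 6.1 × 10⁻³. The estimate can be compared against it directly.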

Data Presentation

Table 1: Comparison of Common PRNGs for Long-Duration Energy System Simulation

| PRNG Algorithm | Period | Speed | Key Strength | Key Weakness | Recommended Use Case |
|---|---|---|---|---|---|
| Mersenne Twister (MT19937) | 2^19937 − 1 | Medium | Extremely long period, proven. | Large state; not suitable for multiple parallel streams. | Baseline serial simulations. |
| PCG Family (e.g., PCG64) | 2^128 | Very High | Excellent statistical quality, small state, stream support. | Relatively new family. | General-purpose, especially for multiple independent replications. |
| Xoroshiro128+ | 2^128 − 1 | Very High | Very fast; passes most statistical tests. | Low-order bits fail some linearity tests. | Preliminary, high-speed exploratory simulations. |
| Sobol Sequence (quasi-random, not a PRNG) | N/A (deterministic low-discrepancy sequence) | Low | Low discrepancy gives faster convergence (Quasi-Monte Carlo). | Deterministic; effectiveness is sensitive to dimensionality. | Final production runs for problems with moderate effective dimension. |
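In Python, most of these generators are available through NumPy and SciPy, as in the sketch below. The seed, dimension, and sample count are arbitrary. NumPy does not ship Xoroshiro128+, so it is omitted. Philox is included as a counter-based option suited to parallel streams.

```python
import numpy as np
from scipy.stats import qmc

seed = 12345                                              # arbitrary, logged for reproducibility
gen_pcg = np.random.Generator(np.random.PCG64(seed))      # general-purpose default
gen_mt = np.random.Generator(np.random.MT19937(seed))     # Mersenne Twister baseline
gen_philox = np.random.Generator(np.random.Philox(seed))  # counter-based, parallel-friendly

# Scrambled Sobol points for a quasi-Monte Carlo run in d = 4 dimensions.
# Scrambling (randomised QMC) allows error estimates from independent replicates.
sobol = qmc.Sobol(d=4, scramble=True, seed=seed)
points = sobol.random_base2(m=10)   # 2**10 points in [0, 1)^4; powers of 2 keep balance
```

Sobol points are uniform on the unit hypercube. They are mapped to the target distributions with the same inverse-CDF step used for pseudo-random uniforms.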

Table 2: Efficacy of Variance Reduction Techniques in Reliability Assessment

| Technique | Variance Reduction Principle | Computational Overhead | Implementation Complexity | Estimated Variance Reduction* (for LOLP) |
|---|---|---|---|---|
| Crude Monte Carlo | N/A (Baseline) | None | Low | 0% (Baseline) |
| Stratified Sampling | Ensures sampling from all important regions of input space. | Low | Medium | 40-70% |
| Latin Hypercube Sampling (LHS) | Ensures full stratification of each marginal distribution. | Low | Low-Medium | 20-50% |
| Importance Sampling | Biases sampling towards important failure regions. | Medium-High (requires good biasing distribution) | High | 60-90% |
| Control Variates | Uses correlated, analytically tractable variables to reduce error. | Low (if good CV is found) | Medium | 30-60% |

*Illustrative estimates based on typical hybrid system studies; actual reduction is highly problem-dependent.
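To make the importance-sampling row concrete, the sketch below compares it with crude Monte Carlo on a hypothetical one-variable failure model. Net load S ~ Normal(20, 8) kW, and failure occurs when S > 45 kW, so the exact probability is known. The biasing density is a normal shifted to the failure boundary, which is a common heuristic and an assumption here. Each sample is reweighted by f(s)/g(s) so that the estimator stays unbiased. The resulting gain is specific to this toy case and does not reproduce the table's percentages.

```python
import numpy as np
from scipy import stats

# Hypothetical rare-failure toy model (kW).
MU, SD, CAP = 20.0, 8.0, 45.0
rng = np.random.default_rng(11)
n = 20_000

# Crude Monte Carlo: sample from the nominal density f.
s = rng.normal(MU, SD, size=n)
ind = (s > CAP).astype(float)
p_crude, se_crude = ind.mean(), ind.std(ddof=1) / np.sqrt(n)

# Importance sampling: sample from a biasing density g centred on the failure
# boundary, then weight each sample by the likelihood ratio f(s) / g(s).
g_mu = CAP
s_is = rng.normal(g_mu, SD, size=n)
w = stats.norm.pdf(s_is, MU, SD) / stats.norm.pdf(s_is, g_mu, SD)
y = (s_is > CAP) * w
p_is, se_is = y.mean(), y.std(ddof=1) / np.sqrt(n)

p_exact = stats.norm.sf(CAP, MU, SD)   # reference value for this toy case
```

With the same sample budget, the importance-sampling standard error here is more than an order of magnitude smaller than the crude one. A poorly chosen biasing density can instead inflate the variance, which is why the table flags it as high-complexity.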

Visualizations

Title: Workflow for Generating Correlated Renewable Resource Inputs

Title: Stratified Sampling for Rare Failure Event Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Libraries

| Item (Software/Package) | Function in Sampling Engine | Typical Specification / Notes |
|---|---|---|
| NumPy & SciPy (Python) | Core numerical engine. Provides PRNGs (NumPy), statistical functions, and probability distributions (SciPy). | Use numpy.random.Generator with PCG64; use scipy.stats for distributions and statistical tests. |
| SALib (Python) | Library for sensitivity analysis. Helps identify key input variables for stratification or importance sampling. | Provides Sobol, Morris, and FAST methods to quantify input influence on output variance. |
| Chaospy / OpenTURNS | Advanced uncertainty quantification libraries. Facilitate generation of correlated random variables and polynomial chaos expansions. | Use for complex dependency structures and as a complement to Quasi-Monte Carlo methods. |
| Custom CUDA/C++ Code | For high-performance, parallel sampling of very large systems (e.g., thousands of scenarios, year-long simulations). | Requires careful implementation of parallel PRNGs (e.g., Philox counter-based generators, available through the cuRAND library on GPU). |
| Reproducibility Seed Manager | A custom code module to manage and log PRNG seeds across different simulation batches and parallel processes. | Critical for replicating results. Must ensure independent, non-overlapping random streams. |
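A seed manager of this kind could be built on NumPy's `SeedSequence`, which spawns child seeds designed to give statistically independent streams. The sketch below assumes this design; the helper names `make_streams` and `rebuild_stream` and the root seed are hypothetical. The logged record (root entropy plus spawn keys) is enough to recreate any single stream later.

```python
import json
import numpy as np

def make_streams(root_seed, n_streams):
    """Spawn independent PCG64 streams from one root seed and return a loggable record."""
    root = np.random.SeedSequence(root_seed)
    children = root.spawn(n_streams)                     # independent child seed sequences
    gens = [np.random.Generator(np.random.PCG64(c)) for c in children]
    record = {"root_entropy": root.entropy,
              "spawn_keys": [list(c.spawn_key) for c in children]}
    return gens, record

def rebuild_stream(record, i):
    """Recreate stream i from a logged record, e.g. to rerun one batch in isolation."""
    ss = np.random.SeedSequence(record["root_entropy"],
                                spawn_key=tuple(record["spawn_keys"][i]))
    return np.random.Generator(np.random.PCG64(ss))

gens, record = make_streams(20240601, n_streams=4)       # e.g. one stream per worker
log_line = json.dumps(record)                            # persist alongside batch outputs
```

Assigning one spawned stream per parallel process avoids the common mistake of seeding workers with consecutive integers.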

In Monte Carlo simulation studies for hybrid renewable energy system (HRES) reliability, the final computational step involves transforming raw simulation outputs into statistically robust reliability metrics with quantified uncertainty. This protocol details the systematic process for calculating key performance indicators like Loss of Load Probability (LOLP), Expected Energy Not Supplied (EENS), and Frequency & Duration indices, and for constructing confidence intervals to communicate the precision of these estimates. This process is fundamental for making high-consequence decisions in energy system design and for validating models against regulatory or performance standards.

Core Statistical Metrics for HRES Reliability

The primary reliability metrics calculated from N Monte Carlo simulation runs are summarized below.

Table 1: Key Reliability Metrics for HRES Monte Carlo Simulation

| Metric | Formula | Interpretation | Typical Unit |
|---|---|---|---|
| Loss of Load Probability (LOLP) | $$LOLP = \frac{1}{NT} \sum_{j=1}^{N} \sum_{t=1}^{T} I_{j,t}$$ where $I_{j,t} = 1$ if load > supply in period t of run j, else 0 (N runs of T periods each). | Probability that the system cannot meet demand in a given period. | dimensionless (or %) |
| Expected Energy Not Supplied (EENS) | $$EENS = \frac{1}{N} \sum_{j=1}^{N} \sum_{t=1}^{T} (Load_{j,t} - Supply_{j,t})^{+} \Delta t$$ where $\Delta t$ is the period length (1 h for hourly steps). | Expected energy deficit per simulated year. | kWh/year |
| Loss of Load Expectation (LOLE) | $$LOLE = \sum_{t=1}^{T} LOLP_t$$ where $LOLP_t = \frac{1}{N} \sum_{j=1}^{N} I_{j,t}$ | Expected number of hours/periods of failure. | hours/year |
| Frequency of Failure (f) | $$f = \frac{1}{N} \sum_{j=1}^{N} n_j$$ where $n_j$ is the number of failure events in run j. | Expected rate of failure initiation. | events/year |
| Average Failure Duration (d) | $$d = \frac{LOLE}{f}$$ | Mean duration of a failure event. | hours/event |
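The sketch below computes these indices from simulated load and supply arrays of shape (N runs, T periods). It assumes each run represents one simulated year. A failure event is counted wherever a failing period follows a non-failing one, or at the first period if it fails, and events spanning run boundaries are not merged. The mean duration d is taken as LOLE/f, i.e. total failure hours divided by the number of events. The function name is illustrative.

```python
import numpy as np

def reliability_indices(load, supply, dt_h=1.0):
    """LOLP, EENS, LOLE, f and d from (N runs, T periods) arrays in kW."""
    deficit = np.clip(load - supply, 0.0, None)          # (Load - Supply)^+
    fail = deficit > 0                                   # I_{j,t}
    lolp_j = fail.mean(axis=1)                           # per-run LOLP
    eens_j = deficit.sum(axis=1) * dt_h                  # per-run EENS (kWh per run)
    lole_j = fail.sum(axis=1) * dt_h                     # per-run failure hours
    starts = fail[:, 1:] & ~fail[:, :-1]                 # failure begins after a normal period
    f_j = starts.sum(axis=1) + fail[:, 0]                # events per run
    lole, f = lole_j.mean(), f_j.mean()
    return {"LOLP": lolp_j.mean(), "EENS": eens_j.mean(), "LOLE": lole, "f": f,
            "d": lole / f if f > 0 else float("nan"),
            "LOLP_j": lolp_j, "EENS_j": eens_j}          # run vectors for CI construction

# Two tiny illustrative runs of four hourly periods each.
load = np.full((2, 4), 10.0)
supply = np.array([[12.0, 8.0, 7.0, 12.0],
                   [12.0, 12.0, 12.0, 9.0]])
out = reliability_indices(load, supply)
# LOLP 0.375, EENS 3.0 kWh, LOLE 1.5 h, f 1.0 event, d 1.5 h/event
```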

Protocol: Calculating Metrics and Confidence Intervals

Experimental Protocol: Post-Simulation Statistical Analysis

Objective: To compute point estimates and 100(1−α)% confidence intervals for HRES reliability metrics from Monte Carlo simulation output data.

Materials & Input Data:

- Processed Simulation Output: A time-series matrix (or equivalent dataset) from N independent simulation runs, containing for each time step t: total electrical load (demand), total power supply from all HRES components, and system state (normal/failure).

- Statistical Software: Computational environment (e.g., Python with NumPy/SciPy, R, MATLAB).

Procedure:

Data Aggregation:

- For each simulation run j (j = 1…N), calculate the run-specific metrics: LOLP_j = (number of time periods with deficit) / (total periods in run), and EENS_j = sum of energy deficit across all time periods in the run.

- Store the results in vectors LOLP_vector and EENS_vector.

Point Estimation:

- Calculate the sample mean of each metric: LOLP_est = mean(LOLP_vector) and EENS_est = mean(EENS_vector).

- These are the primary reliability indices for the system.

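Confidence Interval (CI) Construction using the Central Limit Theorem:

- Calculate the sample standard deviation (s) of each metric vector.

- Determine the standard error: SE = s / sqrt(N).

- Select the confidence level (1−α), e.g., 95% (α = 0.05), and find the critical value z_{1−α/2} (≈ 1.96 for a 95% CI).

- Compute the CI: [Point_Estimate − z·SE, Point_Estimate + z·SE].

- Check the normal approximation, which relies on N being large. For rare events, where few runs record any failure, s is poorly estimated and the interval can be misleadingly narrow. In that case, increase N or apply a variance reduction technique before reporting.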

Reporting:

- Report all metrics as: Metric (Units): Estimate [Lower CI, Upper CI], Confidence Level%.

- Example: LOLE (hr/yr): 12.4 [9.8, 15.1], 95% CI.
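The CI steps can be sketched as follows, assuming independent run-level results. The gamma-distributed `eens_vector` is a hypothetical stand-in for real simulation output, and `mean_ci` is an illustrative helper. The interval it returns matches what `scipy.stats.norm.interval` gives for the same estimate and standard error.

```python
import numpy as np
from scipy import stats

def mean_ci(samples, confidence=0.95):
    """CLT-based confidence interval for the mean of independent run-level results."""
    x = np.asarray(samples, dtype=float)
    est = x.mean()                                       # point estimate
    se = x.std(ddof=1) / np.sqrt(x.size)                 # SE = s / sqrt(N)
    z = stats.norm.ppf(1 - (1 - confidence) / 2)         # z_{1-alpha/2}
    return est, est - z * se, est + z * se

# Hypothetical per-run EENS values (kWh/year) standing in for simulation output.
rng = np.random.default_rng(3)
eens_vector = rng.gamma(shape=2.0, scale=150.0, size=10_000)
est, lo, hi = mean_ci(eens_vector)
print(f"EENS (kWh/yr): {est:.1f} [{lo:.1f}, {hi:.1f}], 95% CI")
```

For small N, replacing the z critical value with the Student-t value `stats.t.ppf(1 - alpha/2, N - 1)` gives a slightly wider, more conservative interval.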

Visualization: Statistical Output Workflow

Diagram Title: Monte Carlo Reliability Metric & Confidence Interval Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Computational & Analytical Tools for Reliability Output Analysis

| Item / Solution | Function / Purpose |
|---|---|
| NumPy / SciPy (Python) | Core libraries for efficient numerical computation, array operations, and statistical functions (e.g., mean(), std(), scipy.stats.norm.interval() for CI calculation). |
| Pandas (Python) | Provides DataFrame structures for organizing and manipulating time-series output data from multiple simulation runs. |
| Matplotlib / Seaborn (Python) | Enables visualization of result distributions, convergence plots, and confidence intervals for publication-quality figures. |
| R Statistical Language | Alternative environment offering extensive built-in statistical packages for robust interval estimation and distribution fitting. |
| Convergence Diagnostic Scripts | Custom code to plot metric estimates vs. number of simulations (N) to visually confirm result stability and determine necessary N. |
| High-Performance Computing (HPC) Scheduler | Manages batch processing of thousands of independent simulation runs required for statistically significant results. |
| Reliability Standards Database | Reference repository (e.g., IEEE 1366, regulatory documents) for target reliability metrics used in validation and benchmarking. |
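A convergence diagnostic script might track the running mean and the relative CI half-width as runs accumulate, as in the sketch below. It assumes exponentially distributed per-run EENS values as a hypothetical stand-in for simulation output. The 5% tolerance and 100-run minimum are illustrative settings, and the first-crossing stopping rule is a heuristic. The arrays `mean` and `rel` are what would be plotted against N with Matplotlib.

```python
import numpy as np

def running_convergence(samples, z=1.96):
    """Running mean and relative CI half-width (z * SE / mean) after each run."""
    x = np.asarray(samples, dtype=float)
    n = np.arange(1, x.size + 1)
    mean = np.cumsum(x) / n
    # Running sample variance (ddof=1) from cumulative sums; adequate for a
    # diagnostic, though Welford's update is more robust numerically.
    var = (np.cumsum(x ** 2) - n * mean ** 2) / np.maximum(n - 1, 1)
    half = z * np.sqrt(np.clip(var, 0.0, None) / n)
    rel = np.full(x.size, np.inf)                        # undefined until the mean is positive
    ok = (n > 1) & (mean > 0)
    rel[ok] = half[ok] / mean[ok]
    return mean, rel

def runs_needed(rel, tol=0.05, min_runs=100):
    """Smallest N >= min_runs at which the relative half-width is below tol, else None."""
    idx = np.flatnonzero(rel[min_runs - 1:] < tol)
    return int(idx[0]) + min_runs if idx.size else None

# Hypothetical per-run EENS results standing in for simulation output.
rng = np.random.default_rng(5)
eens_runs = rng.exponential(scale=100.0, size=20_000)
mean, rel = running_convergence(eens_runs)
n_req = runs_needed(rel, tol=0.05)
```

The `min_runs` floor guards against declaring convergence early on a lucky stretch of similar values. For rare-event metrics that are zero in most runs, the relative half-width stays infinite until failures appear, which correctly signals that more runs are needed.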

This application note details the computational model structure for a remote hybrid renewable energy microgrid within a broader thesis employing Monte Carlo Simulation (MCS) to quantify system reliability. The primary research aim is to assess the Loss of Load Probability (LOLP) and Expected Energy Not Supplied (EENS) under stochastic resource and load variability, providing a risk-informed framework for system design—a methodological parallel to dose-response and toxicity risk assessment in pharmaceutical development.

System Component Modeling & Data

The microgrid comprises photovoltaic (PV) arrays, wind turbines, a battery energy storage system (BESS), and a diesel generator as backup. Performance models and stochastic input parameters are defined below.

Table 1: Stochastic Input Parameters for MCS

| Component | Parameter | Symbol | Value / Distribution | Data Source / Justification |
|---|---|---|---|---|
| PV System | Rated Power | P_PV,rated | 50 kW | Design specification |
| | Actual Output | P_PV(t) | P_PV,rated × G(t)/G_std × η_PV | Model equation |
| | Solar Irradiance | G(t) | Beta distribution (α, β) of normalized irradiance, derived from site data | NASA POWER or local meteorological dataset |
| Wind System | Rated Power | P_W,rated | 30 kW | Design specification |
| | Actual Output | P_W(t) | 0 for v < v_cut-in; cubic law for v_cut-in ≤ v < v_rated; P_W,rated for v_rated ≤ v ≤ v_cut-out; 0 for v > v_cut-out | Manufacturer power curve |
| | Wind Speed | v(t) | Weibull distribution (k = 2, c = mean wind speed / Γ(1 + 1/k) ≈ 1.128 × mean wind speed) | Site measurement fitting |
| BESS | Rated Capacity | E_BESS,rated | 200 kWh | Design specification |
| | Initial State of Charge | SOC_initial | 50% | Simulation assumption |
| | Charge/Discharge Efficiency | η_ch, η_dis | 95% each | Manufacturer data |
| Diesel Generator | Rated Power | P_DG,rated | 40 kW | Design specification |
| | Fuel Curve | F(t) | 0.3 L/kWh (linear approximation) | Manufacturer specification |
| Load | Hourly Demand | P_Load(t) | Normal distribution (μ = load profile, σ = 10% of μ) | Synthetic profile from community survey |
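The PV and wind output models in this table can be sketched as vectorized functions. The rated powers (50 kW, 30 kW) come from the table. The PV derating η_PV = 0.9, G_std = 1 kW/m², and the cut-in, rated, and cut-out speeds (3, 12, and 25 m/s) are hypothetical placeholders for manufacturer data. The cubic segment uses the normalization (v³ − v_cut-in³)/(v_rated³ − v_cut-in³), which is one common form of the cubic law. The last helper converts a site mean wind speed into the Weibull scale c, using mean = c·Γ(1 + 1/k).

```python
import numpy as np
from math import gamma

def pv_power(G, p_rated=50.0, G_std=1.0, eta_pv=0.9):
    """P_PV(t) = P_PV,rated * G(t)/G_std * eta_PV, with G in kW/m^2 (assumed eta_PV)."""
    return p_rated * np.asarray(G, dtype=float) / G_std * eta_pv

def wind_power(v, p_rated=30.0, v_in=3.0, v_r=12.0, v_out=25.0):
    """Piecewise power curve; cut-in/rated/cut-out speeds are hypothetical."""
    v = np.asarray(v, dtype=float)
    p = np.zeros_like(v)                                 # 0 below cut-in and above cut-out
    rise = (v >= v_in) & (v < v_r)
    p[rise] = p_rated * (v[rise] ** 3 - v_in ** 3) / (v_r ** 3 - v_in ** 3)
    p[(v >= v_r) & (v <= v_out)] = p_rated               # flat at rated power
    return p

def weibull_scale_from_mean(v_mean, k=2.0):
    """Weibull scale c giving the specified mean: mean = c * Gamma(1 + 1/k)."""
    return v_mean / gamma(1.0 + 1.0 / k)
```

For k = 2, Γ(1.5) = √π/2, so a 6 m/s site mean corresponds to c = 12/√π ≈ 6.77 m/s rather than 6 m/s.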

Core Experimental Protocol: Monte Carlo Reliability Simulation

This protocol details the steps to execute the MCS for calculating LOLP and EENS.

Protocol Title: Monte Carlo Simulation for Hourly Annual Microgrid Reliability Assessment.

Objective: To statistically evaluate the reliability metrics LOLP and EENS through sequential, hourly simulation of system performance over one synthetic year (8760 hours) with N iterations.

Materials (Computational): See "The Scientist's Toolkit" below.

Procedure:

- Initialization: Define the simulation horizon (T = 8760 hours) and the number of trials (N = 10,000), and set the counters LOLP_count = 0 and EENS_sum = 0.

- Stochastic Variable Generation: For each hour t in T and for each trial i in N: